As edge computing gains traction, developers are discovering unexpected kernel limitations when running security-focused container runtimes like gVisor on Raspberry Pi 5. The issue stems from a kernel configuration option that most developers have never heard of: virtual address space size. This article explores why this matters, how it affects container security, and what it means for the future of edge computing.

The edge computing revolution has brought powerful computing capabilities to small, affordable devices like the Raspberry Pi 5. Developers are increasingly deploying containerized workloads on these platforms, but a subtle kernel configuration issue is creating unexpected roadblocks for security-focused container runtimes like gVisor. This isn't just a technical curiosity—it's revealing fundamental tensions between embedded systems design and cloud-native computing expectations.

What Is gVisor, and Why Does It Matter?

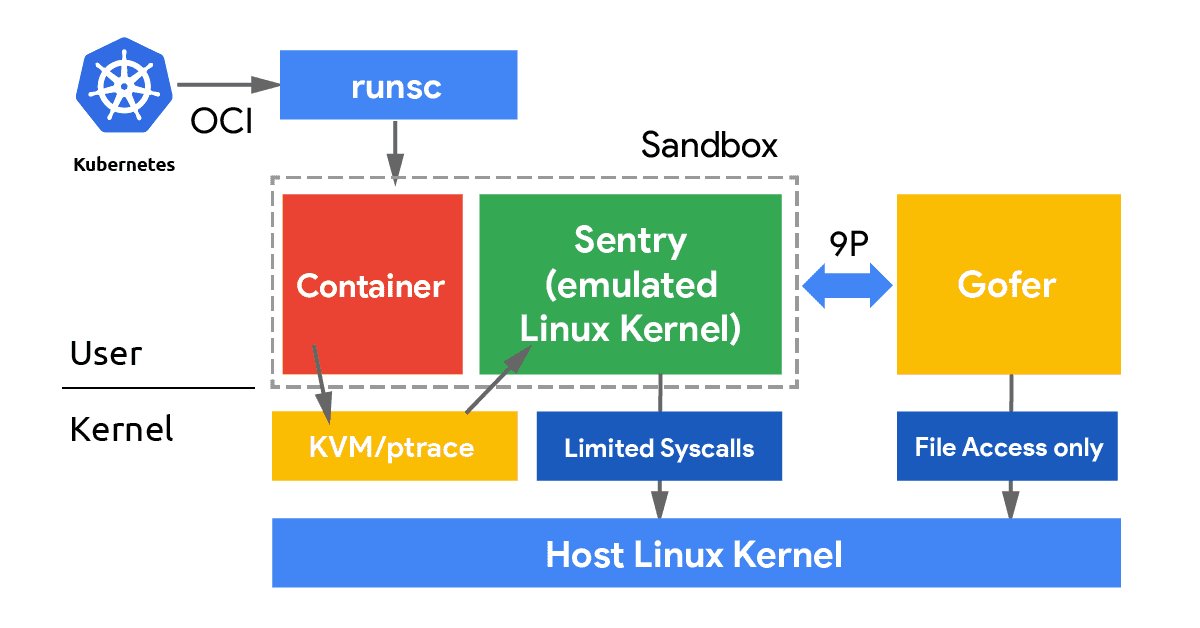

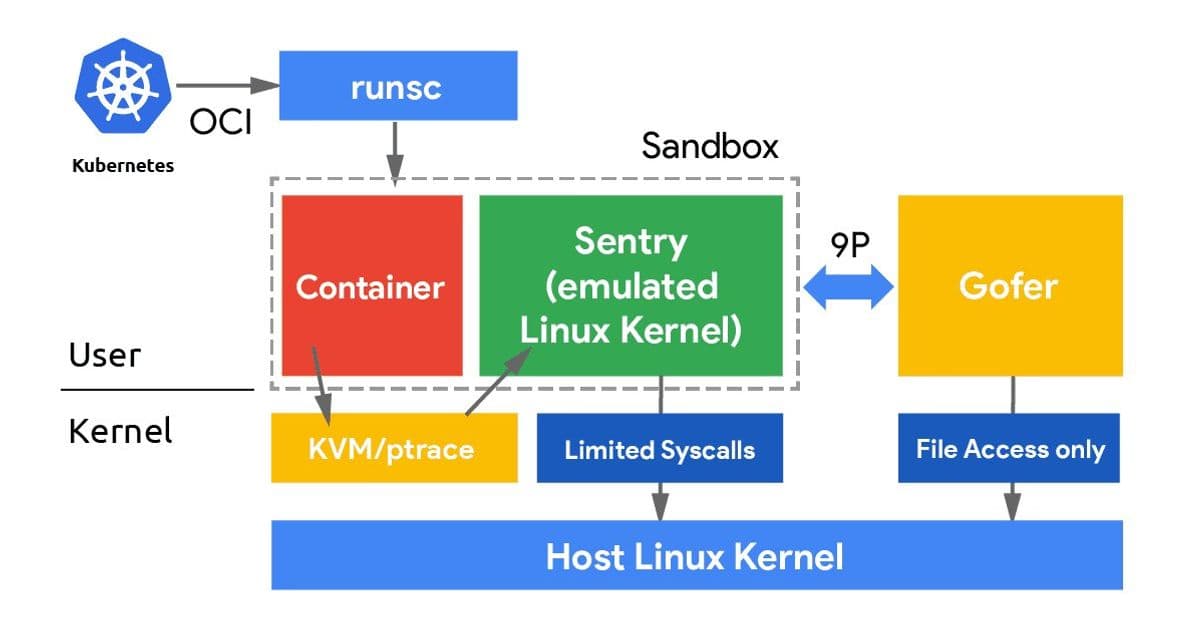

Regular containers (Docker, containerd, etc.) are fast and lightweight, but they share the host kernel. That means a compromised container could potentially attack the host OS, a real concern in multi-tenant or security-sensitive environments. Virtual machines solve this with strong isolation, but at the cost of booting a full separate kernel, pre-allocating memory, and added overhead.

gVisor sits in between these two worlds. It implements a Linux kernel entirely in userspace (called the Sentry) and intercepts all syscalls from your container, handling them in its own sandboxed kernel rather than passing them to the host. Your container thinks it's talking to a normal Linux kernel; in reality, it's talking to gVisor. Only a very small, carefully filtered set of host syscalls ever reaches the real kernel.

The result is VM-like isolation with container-like efficiency. To put it differently: with KVM or Xen, your workload runs inside a hardware-enforced virtual machine managed by a hypervisor. With gVisor, your workload runs inside a userspace-enforced sandbox managed by a software kernel. No VM overhead, no pre-allocated guest memory, no separate boot sequence, but a very strong security boundary.

This makes gVisor a great fit for running untrusted workloads, adding defense-in-depth around sensitive services, or just experimenting with sandboxed containers on edge hardware like a Raspberry Pi 5. The project, open-sourced by Google, has gained significant adoption in environments where security isolation is paramount, but its requirements are more stringent than those of traditional container runtimes.

The Virtual Address Space Mystery

The issue developers are encountering with Raspberry Pi 5 isn't immediately obvious. It manifests as cryptic errors like "cannot allocate memory in static TLS block" or silent failures when trying to run gVisor containers. The root cause turns out to be a single kernel configuration option: CONFIG_ARM64_VA_BITS.
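To check which value a given kernel was built with, you can look for the derived CONFIG_ARM64_VA_BITS line in its build configuration. The following is a minimal sketch, not an official tool: `parse_va_bits` is a hypothetical helper name, and the sample text is made up for illustration.

```python
import re


def parse_va_bits(config_text):
    """Return the integer value of CONFIG_ARM64_VA_BITS, or None if it is absent."""
    # Match only the derived integer symbol, not the CONFIG_ARM64_VA_BITS_39=y choice line.
    match = re.search(r"^CONFIG_ARM64_VA_BITS=(\d+)$", config_text, re.MULTILINE)
    return int(match.group(1)) if match else None


# Illustrative sample, not copied from a real kernel config.
sample = """\
CONFIG_ARM64_4K_PAGES=y
CONFIG_ARM64_VA_BITS_39=y
CONFIG_ARM64_VA_BITS=39
"""

print(parse_va_bits(sample))  # 39
```

Where the configuration lives varies by distribution. It is often exposed as /proc/config.gz (readable with `gzip.open(path, "rt")`) or as a config file under /boot, but neither is guaranteed to exist on your system.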

To understand why this matters, we need to examine how virtual memory works on 64-bit ARM. When a process runs on your system, it doesn't work directly with physical RAM addresses. Instead, the CPU and OS cooperate to give each process its own private virtual address space, a range of addresses that the kernel maps to actual physical memory behind the scenes.

On 64-bit hardware, you technically have 64 bits to play with, which would give you an astronomical 16 EiB (roughly 18 exabytes) of address space per process. Nobody actually needs that much, so ARM64 Linux uses only a subset of those bits for addressing:

| Configuration | VA Bits | Virtual Address Space |
|---|---|---|
| VA_BITS_39 | 39 bits | 512 GB |
| VA_BITS_48 | 48 bits | 256 TB |

The number of VA bits also determines how many levels of page tables the kernel needs to maintain. With 4 KB pages, a 39-bit VA needs 3 levels of page tables and a 48-bit VA needs 4. More levels mean more bookkeeping overhead, but massively more addressable space.
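The arithmetic behind those numbers is easy to reproduce. This is a minimal sketch assuming 8-byte page table entries and 4 KB pages by default; the function names are illustrative and are not kernel APIs.

```python
def va_space_bytes(va_bits):
    """Size of a virtual address space with the given number of address bits."""
    return 1 << va_bits


def page_table_levels(va_bits, page_shift=12):
    """Number of translation levels needed to cover va_bits.

    Each table fills one page and holds 8-byte entries, so every level
    resolves (page_shift - 3) bits of the address.
    """
    bits_per_level = page_shift - 3
    bits_to_resolve = va_bits - page_shift
    # Integer ceiling division.
    return -(-bits_to_resolve // bits_per_level)


for bits in (39, 48):
    gib = va_space_bytes(bits) // 2**30
    print(f"{bits}-bit VA: {gib} GiB, {page_table_levels(bits)} levels")
```

With 4 KB pages each level resolves 9 bits, so 39 bits split into 12 + 3 × 9 and 48 bits into 12 + 4 × 9.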

gVisor needs far more virtual address space than a typical application. It runs as a userspace process but behaves like a kernel, managing its own internal memory layout, tracking guest virtual address mappings, maintaining page table structures for each sandboxed process, and mapping its own code alongside all of that.

Consider what lives inside the gVisor Sentry's address space:

- Its own code and runtime memory: the Go runtime, gVisor's kernel emulation code, internal data structures.

- Guest memory mappings: the sandboxed container's virtual memory, mapped into the Sentry's address space so it can inspect and manage it.

- Shadow page tables: gVisor maintains its own representation of the guest's page table hierarchy to intercept and validate memory accesses.

- Per-goroutine stacks: the Go runtime uses many concurrent goroutines to handle syscalls, timers, and I/O, each needing its own stack region.

With only 39 bits (512 GB) of virtual address space available, the Sentry's own code, the Go runtime, and their reservations already eat into a substantial chunk of that budget. What's left for guest memory mappings and page table structures is simply not enough. The sandbox can't set itself up and fails, often with a cryptic error or a silent exit.
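One rough way to see how much address space a kernel hands to a process is to look at the highest mapped address in /proc/self/maps. The sketch below is a heuristic, not a definitive test: the function name and sample lines are hypothetical, and address randomization or unusual layouts can skew the result.

```python
def highest_user_address_bits(maps_text):
    """Estimate the user VA width from the highest mapped address in a maps listing."""
    highest = 0
    for line in maps_text.splitlines():
        fields = line.split()
        # Skip blank lines and the x86-64 [vsyscall] page, which sits in kernel space.
        if not fields or fields[-1] == "[vsyscall]":
            continue
        end = int(fields[0].split("-")[1], 16)
        # end is exclusive, so the last mapped byte is end - 1.
        highest = max(highest, end - 1)
    return highest.bit_length()


# Made-up sample resembling a process on a 39-bit VA kernel.
sample_39 = """\
5555550000-5555551000 r-xp 00000000 b3:02 1234 /usr/bin/cat
7ffffde000-7ffffff000 rw-p 00000000 00:00 0 [stack]
"""

print(highest_user_address_bits(sample_39))  # 39
```

On a Linux machine you could feed it the live listing with `highest_user_address_bits(open("/proc/self/maps").read())`. The stack normally sits near the top of the user range, which is why its address tends to reveal the VA width.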

Community Reactions and Workarounds

The discovery of this issue has sparked interesting discussions in the developer community. Some view it as a fundamental limitation of edge hardware, while others see it as a configuration oversight that should be addressed.

"This is exactly why I've been pushing for 48-bit VA as the default for ARM64 Linux," commented one kernel developer on a popular forum. "The embedded world's obsession with backward compatibility is creating security problems for modern applications."

Others take a more nuanced view:

"The Raspberry Pi Foundation has to balance many factors," explains a systems architect who works with embedded systems. "They need to support legacy 32-bit applications while providing 64-bit performance. For most use cases, 39-bit VA is perfectly adequate. It's only when you start running userspace kernels that you hit these limits."

The community has developed several workarounds:

- Switching to Ubuntu for Raspberry Pi, which uses 48-bit VA by default.

- Rebuilding the Raspberry Pi OS (formerly Raspbian) kernel with 48-bit VA support.

- Using alternative security approaches that don't require as much virtual address space.
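For the kernel rebuild route, the key change is flipping the VA size choice in the kernel's .config before building. The following is a sketch of that edit as a plain text transformation, under the assumption that the config uses the upstream symbol names CONFIG_ARM64_VA_BITS_39 and CONFIG_ARM64_VA_BITS_48. `select_48bit_va` is a hypothetical helper, and the sample config is illustrative.

```python
def select_48bit_va(config_text):
    """Return config text with the 48-bit VA choice selected instead of 39-bit."""
    out = []
    saw_48 = False
    for line in config_text.splitlines():
        if line.startswith("CONFIG_ARM64_VA_BITS_39="):
            # Deselect the 39-bit choice using the kernel's "not set" convention.
            out.append("# CONFIG_ARM64_VA_BITS_39 is not set")
        elif (line.startswith("CONFIG_ARM64_VA_BITS_48=")
              or line == "# CONFIG_ARM64_VA_BITS_48 is not set"):
            out.append("CONFIG_ARM64_VA_BITS_48=y")
            saw_48 = True
        elif line.startswith("CONFIG_ARM64_VA_BITS="):
            # Derived value: drop it and let the kernel's config tooling regenerate it.
            continue
        else:
            out.append(line)
    if not saw_48:
        out.append("CONFIG_ARM64_VA_BITS_48=y")
    return "\n".join(out) + "\n"


# Illustrative fragment, not a complete kernel config.
sample_config = """\
CONFIG_ARM64_4K_PAGES=y
CONFIG_ARM64_VA_BITS_39=y
# CONFIG_ARM64_VA_BITS_48 is not set
CONFIG_ARM64_VA_BITS=39
"""

print(select_48bit_va(sample_config), end="")
```

After an edit like this, running the kernel tree's `make olddefconfig` recomputes derived symbols such as the page table level count. In practice many people make the same change interactively through `make menuconfig` instead of editing the file.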

The kernel rebuild process, while effective, has created a new class of "power users" who are comfortable compiling custom kernels for their Pi hardware. This represents an interesting shift in the Raspberry Pi ecosystem from purely hobbyist use to more professional, security-conscious deployments.

Comparing Approaches: gVisor vs. Traditional Hypervisors

A natural question arises: do traditional hypervisors like KVM or Xen have the same requirement? The answer is complex.

With KVM, the userspace virtual machine monitor (QEMU, for example) maps the guest's physical memory into its own virtual address space. Guest RAM lives as mmap-ed regions in that host process, so the more RAM your VM has, the more virtual address space the host process needs.

On ARM64, KVM itself runs in a hardware-enforced privileged mode (EL2), so it has its own separate address space and is less tightly squeezed, but individual KVM-based VMs still need to fit their memory maps within the host VA range.

Xen operates similarly: it runs at the highest privilege level (EL2 on ARM64) as a bare-metal hypervisor and manages guest physical memory directly. Guest kernels run in EL1, and Xen maps guest memory regions independently. Because Xen controls the hardware from the start, it isn't subject to the same userspace VA constraints that gVisor faces.

gVisor, by contrast, is just a userspace process. It must fit everything—its own code, guest memory mappings, shadow page tables—into a single process's virtual address space as seen by the host kernel. This is precisely why the VA size matters so much more for gVisor than for KVM or Xen.

The Embedded vs. Cloud Divide

This issue highlights a fundamental tension in computing architecture: the different priorities of embedded systems versus cloud infrastructure.

Embedded systems like the Raspberry Pi prioritize:

- Backward compatibility with legacy software

- Resource efficiency (memory, CPU cycles)

- Power consumption

- Broad hardware support

Cloud infrastructure prioritizes:

- Security isolation

- Performance consistency

- Feature completeness

- Future scalability

The CONFIG_ARM64_VA_BITS setting is a perfect example of this divide. Raspbian's choice of 39-bit VA is a reasonable tradeoff for a general-purpose, 32-bit-compatible embedded OS kernel. But if you're running gVisor, K3s, or other cloud-native tooling on your Pi, you'll want 48-bit VA.

This isn't just about making gVisor work—it's about what kind of computing we expect from edge devices. As edge computing becomes more sophisticated, we're seeing the boundaries between embedded and cloud systems blur, creating new challenges and opportunities.

Looking Forward: Edge Computing and Security

The Raspberry Pi 5 is rapidly becoming a preferred platform for edge computing experiments. Understanding kernel-level constraints like VA bits will give developers an edge when building secure, scalable systems on resource-constrained hardware.

Several trends are emerging from this situation:

Security-first edge computing: As edge devices handle more sensitive data, the demand for strong isolation like what gVisor provides will grow.

Custom kernel compilation: We're seeing more developers compiling custom kernels for edge devices, moving beyond the "just use the default OS" mentality.

OS specialization: Different edge use cases may require different OS optimizations, leading to more specialized distributions rather than one-size-fits-all solutions.

Hardware-software co-design: The limitations of current hardware are driving conversations about how future edge devices should be designed to better support modern software paradigms.

The gVisor and Raspberry Pi 5 issue is a microcosm of larger trends in computing. As we push more sophisticated workloads onto smaller devices, the mismatch between embedded design assumptions and cloud-native requirements will become increasingly apparent. Developers who understand these underlying tensions will be better positioned to build the next generation of edge computing systems.

For those interested in exploring this further, the gVisor project documentation provides excellent insights into userspace kernel design, and the Raspberry Pi kernel source offers a window into embedded systems optimization. As edge computing continues to evolve, understanding these fundamental tradeoffs will be essential for building secure, efficient systems that bridge the gap between cloud and embedded worlds.
