An empirical examination of unity builds versus traditional compilation methods on a large C++ codebase reveals significant performance trade-offs between full project compilation and incremental development workflows.

The Trade-Offs of Unity Builds: Compiling Large C++ Projects

In the world of C/C++ development, compilation times have long been a source of frustration, particularly for large projects. A recent experiment by a developer using the Inkscape codebase provides valuable insights into an alternative compilation approach known as "unity build"—a method that merges multiple translation units into a single compilation unit. The results reveal a fascinating trade-off between full build speed and incremental development efficiency.

Understanding Unity Builds

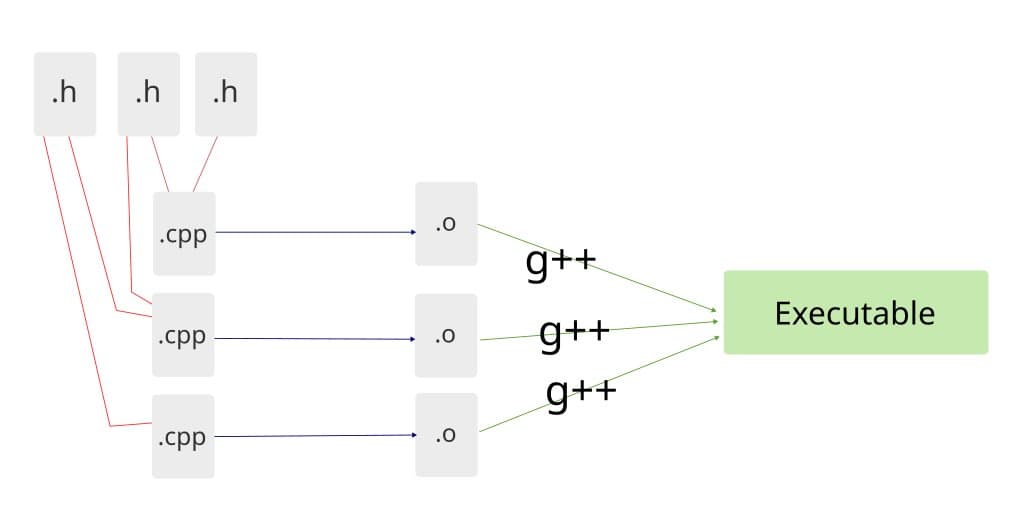

Unity build, or unified build, represents a departure from traditional compilation methods. Instead of compiling each source file separately and later linking the resulting object files, unity builds use the preprocessor's #include directive to merge multiple C/C++ files into one large file before compilation. This single, massive file is then passed directly to the compiler.

The traditional approach creates multiple intermediary .o files that are later linked into an executable, while unity builds merge all files during preprocessing, allowing the compiler to create the executable directly. This fundamental difference in process leads to distinct performance characteristics.
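As a rough illustration of the merging step, the sketch below generates the text of a unity source file from a list of paths. The helper name `make_unity_source` and the file names used with it are hypothetical and are not taken from the experiment's build script; the only technique shown is the one described above, namely one `#include` line per original source file.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Hypothetical helper: returns the text of a unity source file that pulls in
// every listed .cpp file through the preprocessor's #include directive.
std::string make_unity_source(const std::vector<std::string>& sources) {
    std::string out;
    for (const std::string& path : sources) {
        assert(!path.empty() && "each source path must be non-empty");
        out += "#include \"" + path + "\"\n";  // one line per original file
    }
    return out;
}
```

Saving the result of, say, `make_unity_source({"a.cpp", "b.cpp"})` as a single file and compiling only that file gives the compiler one large translation unit instead of two small ones.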

The Inkscape Experiment

To test these compilation approaches empirically, the author selected Inkscape, a substantial open-source C++ project with impressive scale:

- 1,317 C++ files with 453,842 lines of code

- 1,287 header files with 93,914 lines of code

- 76 C files with 51,776 lines of code

The experiment began with establishing baselines using different build methods:

- Regular CMake build: 34 minutes 47 seconds

- Custom build script (without CMake): 26 minutes 52 seconds

The custom script, which replicated the build process without CMake's overhead, finished almost 8 minutes sooner (26:52 versus 34:47), suggesting that build system overhead can significantly impact compilation times.

The Unity Build Revelation

When applying the unity build approach—creating separate all_*.cpp files that included all source files for each library—the results were dramatic:

Total build time: 2 minutes 45 seconds

This represented a roughly 10x speedup compared to the custom script (26:52) and a remarkable 12.6x improvement over the original CMake build (34:47). The most significant improvement came from compiling the main inkscape codebase (877 C++ files) as a single unit, which after preprocessing was a file of over 1 million lines, approximately 760,000 of them actual code.
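The speedup factors can be checked directly from the reported wall-clock times. The short sketch below does that arithmetic; the function and constant names are illustrative only.

```cpp
// Converts a "minutes:seconds" wall-clock time to seconds.
constexpr int to_seconds(int minutes, int seconds) {
    return minutes * 60 + seconds;
}

// How many times faster the build taking `fast_s` is than the one taking `slow_s`.
constexpr double speedup(int slow_s, int fast_s) {
    return static_cast<double>(slow_s) / fast_s;
}

constexpr int cmake_s  = to_seconds(34, 47);  // regular CMake build
constexpr int custom_s = to_seconds(26, 52);  // custom build script
constexpr int unity_s  = to_seconds(2, 45);   // unity build

constexpr double unity_vs_custom = speedup(custom_s, unity_s);  // about 9.8
constexpr double unity_vs_cmake  = speedup(cmake_s, unity_s);   // about 12.6
```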

Performance Analysis

The detailed measurements revealed several interesting patterns:

- The compiler processed approximately 59,000 lines per second during unity builds

- C files appeared to compile faster, but this was due to less preprocessing overhead rather than fundamental compiler differences

- When measuring actual source code (excluding headers), compilation rates varied significantly, with C files generally showing higher lines-per-second rates

The main inkscape compilation unit, despite containing 277,253 lines of source code, achieved a rate of about 2,020 lines per second, highlighting the compiler's ability to handle massive compilation units efficiently.
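Dividing the two reported figures gives the compile time that this rate implies for the main unit: a little over 137 seconds, or roughly 2 minutes 17 seconds. This is a derived estimate rather than a number reported by the author, and the constant names are illustrative.

```cpp
// Figures reported for the main inkscape unity compilation unit.
constexpr double source_lines     = 277253.0;  // lines of original source code
constexpr double lines_per_second = 2020.0;    // reported compilation rate

// Implied compile time for that single unit, in seconds (about 137 s).
constexpr double implied_seconds = source_lines / lines_per_second;
```

If the estimate holds, this one unit would account for most of the 2 minutes 45 seconds total.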

Optimization Levels and Build Systems

The experiment also explored how different optimization levels and build systems affected performance:

| Method | Time (min:sec) |
|---|---|
| cmake + make | 34:52 |
| cmake + make -j 12 | 5:48 |
| cmake + Ninja | 5:50 |
| Custom build script (-O0) | 26:52 |
| Custom build script (-O2) | 32:12 |
| Custom build script (-O3) | 31:08 |
| Unity build (-O0) | 2:57 |
| Unity build (-O2) | 6:08 |
| Unity build (-O3) | 6:17 |

Notably, the unity build took more than twice as long at -O2 and -O3 (6:08 and 6:17) as at -O0 (2:57), whereas the custom script slowed by only about 16-20% at the same levels. This suggests that the compiler's optimization phase consumes substantial resources when processing large compilation units.
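The relative cost of optimization can be computed from the table above. The sketch below expresses each optimized build as a multiple of the -O0 time for the same method; the names are illustrative.

```cpp
// Converts a "minutes:seconds" wall-clock time to seconds.
constexpr int to_seconds(int minutes, int seconds) {
    return minutes * 60 + seconds;
}

// Ratio of an optimized build's time to the -O0 time for the same method.
constexpr double slowdown(int optimized_s, int o0_s) {
    return static_cast<double>(optimized_s) / o0_s;
}

constexpr double unity_o2  = slowdown(to_seconds(6, 8),   to_seconds(2, 57));   // about 2.08
constexpr double unity_o3  = slowdown(to_seconds(6, 17),  to_seconds(2, 57));   // about 2.13
constexpr double custom_o2 = slowdown(to_seconds(32, 12), to_seconds(26, 52));  // about 1.20
constexpr double custom_o3 = slowdown(to_seconds(31, 8),  to_seconds(26, 52));  // about 1.16
```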

Incremental vs. Full Builds

Perhaps the most critical trade-off emerged when comparing incremental builds (changing a single line of code) versus full builds:

Changing one line in a .cpp file:

- Ninja: 2.7 seconds

- Make: 7.8 seconds

- Make with -j12: 5 seconds

Changing one line in a .h file:

- Ninja: 43 seconds

- Make: 3 minutes 30 seconds

- Make with -j12: 46 seconds

In contrast, a unity build cannot recompile individual files: any change forces a rebuild of the entire affected compilation unit, which in this experiment meant up to 2 minutes 57 seconds even at -O0.
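To put the gap in perspective, the sketch below counts how many incremental Ninja rebuilds fit into the time of one unity rebuild, using the timings reported above. It is a back-of-the-envelope comparison under the assumption that every unity rebuild costs the full 2:57; the names are illustrative.

```cpp
// Reported timings in seconds.
constexpr double unity_rebuild_s = 2 * 60 + 57;  // unity build at -O0
constexpr double ninja_cpp_s     = 2.7;          // one-line .cpp change with Ninja
constexpr double ninja_header_s  = 43.0;         // one-line .h change with Ninja

// Whole number of incremental rebuilds that fit inside one unity rebuild.
constexpr int rebuilds_per_unity(double incremental_s) {
    return static_cast<int>(unity_rebuild_s / incremental_s);
}

constexpr int cpp_edits    = rebuilds_per_unity(ninja_cpp_s);     // 65
constexpr int header_edits = rebuilds_per_unity(ninja_header_s);  // 4
```

Under that assumption, a developer could rebuild after about 65 single-file edits, or 4 header edits, in the time of one unity rebuild.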

Implications for C++ Development

These findings suggest several important considerations for C++ project development:

Full build optimization: For scenarios requiring complete rebuilds—such as continuous integration, distribution, or initial development setup—unity builds offer compelling performance advantages.

Development workflow: For day-to-day development with frequent small changes, traditional incremental builds remain superior, as they can recompile only affected files.

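Build system selection: For full builds, parallelism mattered far more than the choice of tool. Make with -j 12 (5:48) and Ninja (5:50) were essentially tied, and both were about six times faster than single-job Make (34:52). Ninja's advantage showed up in incremental builds, where a one-line .cpp change rebuilt in 2.7 seconds versus 5 seconds for Make with -j12.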

Optimization level impact: The performance characteristics of different optimization levels vary significantly between traditional and unity builds, suggesting that projects might benefit from different optimization strategies for different build scenarios.

Counter-Perspectives and Limitations

While the results are impressive, several limitations and counter-arguments should be considered:

Memory usage: Unity builds likely consume significantly more memory during compilation, which could become a limiting factor on systems with limited RAM.

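Debugging complexity: Diagnosing errors can become more challenging with unified files. Because the merge happens through #include, the compiler still reports original file names and line numbers, but an error may be caused by an interaction between files that were never written to share a translation unit, such as clashing names or leaked macros.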

Codebase suitability: Not all projects may benefit equally from unity builds, particularly those with complex interdependencies or template-heavy code.

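Multi-core utilization: The headline 12x figure compares single-job builds. With 12 parallel jobs, the traditional build finished in under 6 minutes (5:48 with Make -j 12, 5:50 with Ninja), about twice the unity build's 2:57 at -O0 and slightly faster than the unity build at -O2 or -O3.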
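On the codebase-suitability point, one well-known reason a project may not merge cleanly is that names with internal linkage, which are private to each file in a traditional build, end up in the same translation unit. The sketch below is a hypothetical illustration, not code from Inkscape: it imagines two files that each defined a file-local `buffer_size()` and shows one common workaround, giving each file's helpers their own namespace.

```cpp
// Imagine parser.cpp and lexer.cpp each defined `static int buffer_size()`.
// Compiled separately that is fine, because each definition is file-local.
// Included into one unity file, the two definitions would collide
// (a redefinition error), so here each lives in its own namespace.
namespace parser_detail {
constexpr int buffer_size() { return 4096; }
}  // namespace parser_detail

namespace lexer_detail {
constexpr int buffer_size() { return 256; }
}  // namespace lexer_detail

// Callers name the helper they mean explicitly.
constexpr int parser_capacity() { return parser_detail::buffer_size(); }
constexpr int lexer_capacity()  { return lexer_detail::buffer_size(); }
```

A codebase with many such file-local helpers may need this kind of cleanup before a unity build compiles at all.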

The Path Forward

The author's initial expectation of a 2-3x speedup was dramatically exceeded, suggesting that unity builds may offer even greater benefits than commonly assumed for large C++ projects. However, the elimination of incremental build capabilities presents a significant drawback for development workflows.

A potential middle ground might involve selective application of unity builds: using them for large, rarely modified libraries while keeping traditional compilation for frequently modified code. Some build systems already support this per target. CMake, for example, has offered a UNITY_BUILD target property since version 3.16, so unity compilation can be enabled for specific targets while others keep incremental compilation.

The experiment also highlights the importance of build system optimization. The nearly 8-minute difference between the CMake build and the custom script demonstrates that build system overhead can be substantial, suggesting that optimizing the build process itself may yield significant improvements.

As C++ continues to evolve with new language features and larger codebases, compilation techniques like unity builds may become increasingly important. However, the fundamental trade-off between full build speed and incremental development efficiency will likely remain a critical consideration for project architecture and build system design.

For developers interested in exploring unity builds further, the experiment's source code and detailed measurements provide an excellent starting point. The modified Inkscape build with unity support can be found at https://github.com/hereket/inkscape-unity-build, offering a practical reference for implementing similar approaches in other projects.
