LLMs

Why LLMs Aren't the Next Generation of Compilers

2/7/2026

LLMs

Rethinking Transformer Efficiency: FORTH-Style Postfix Outperforms Prefix in LLM Benchmarks

2/7/2026

LLMs

Datadog Integrates Google Agent Development Kit into LLM Observability Tools

2/6/2026

LLMs

Context Engineering for Coding Agents

2/5/2026

LLMs

Fragments: February 4 - AI, Code, and the State of Minnesota

2/4/2026

LLMs

The Hidden Quadratic: How LLM Agent Cache Reads Are Eating Your Budget

2/3/2026

LLMs

Inside Nano-vLLM: How Modern Inference Engines Transform Prompts into Tokens

2/2/2026

LLMs

AutoBe's Hardcore Function Calling Benchmark Pushes LLM Backend Generation to New Limits

2/2/2026

LLMs

LLMs as Emacs Elisp Assistants: Reducing the Friction of Infinite Customization

2/2/2026

LLMs

Arcee AI launches Trinity Large, a 400B-parameter open-weight model challenging Meta's Llama 4 Maverick

1/29/2026

LLMs

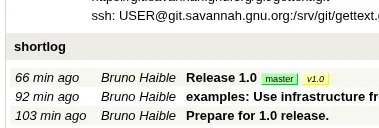

GNU gettext Reaches Version 1.0 After 30+ Years In Development - Adds LLM Features

1/29/2026

LLMs

The Double-Edged Sword of AI-Assisted Code Porting: Lessons from Translating TinyEMU to Go

1/28/2026

LLMs

Turns out I was wrong about TDD

1/25/2026