New ORCA benchmark tests show AI models are getting better at math but still can't reliably solve problems, with even top performers like Gemini 3 Flash only achieving C-grade accuracy.

New benchmark testing reveals that while today's leading AI models have improved their mathematical capabilities, they still fundamentally struggle with arithmetic and quantitative reasoning tasks. The findings, published by Omni Calculator's ORCA research team, show that even the best-performing models would receive only a C grade if assessed by traditional academic standards.

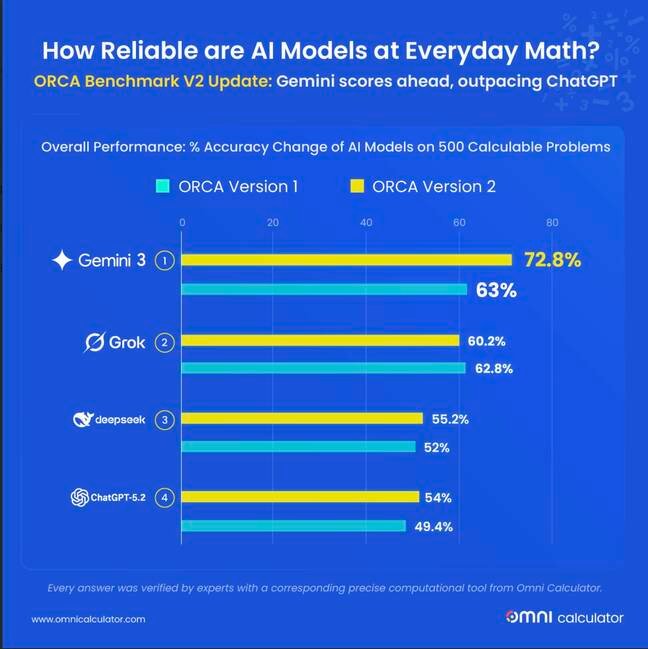

Gemini 3 Flash leads but still falls short

The latest ORCA Benchmark tests, which consist of 500 practical math questions across various domains, show Google's Gemini 3 Flash achieving the highest accuracy at 72.8 percent. This represents a 9.8 percentage point improvement from its predecessor, but still falls well short of the accuracy needed for reliable mathematical computation. DeepSeek V3.2 reached 55.2 percent accuracy, ChatGPT 5.2 achieved 54.0 percent, and notably, xAI's Grok 4.1 actually regressed to 60.2 percent accuracy, losing 2.6 percentage points from its previous version.

The fundamental limitation of prediction engines

According to Dawid Siuda, researcher at ORCA, the core issue lies in how these models operate. "AI models are essentially prediction engines rather than logic engines," Siuda explained. "Because they work on probability, they are basically guessing the next most likely number or word based on patterns they have seen before. It's like a student who memorizes every answer in a math book but never actually learns how to add."

This fundamental limitation means that models can produce correct answers through pattern recognition without understanding the underlying mathematical principles. The researchers note that it's entirely possible for a model to get a question right one day and wrong the next, simply due to the probabilistic nature of their responses.

Inconsistent performance across domains

The benchmark revealed significant variation in performance across different mathematical domains. Gemini 3 Flash showed particular strength in Math & Conversions, reaching 93.2 percent accuracy, up from 83 percent previously. However, other models showed concerning weaknesses. Grok 4.1 lost 9 percentage points in Health & Sports problems and 5.3 percentage points in Biology & Chemistry questions, suggesting recent updates may have deprioritized quantitative reasoning capabilities.

The instability problem

Beyond accuracy issues, the researchers identified a significant "instability" problem, measuring how often models changed their answers when asked the same question twice. Gemini 3 Flash proved the most consistent, changing only 46.1 percent of incorrect responses. ChatGPT changed its answer 65.2 percent of the time, while DeepSeek V3.2 changed answers for 68.8 percent of errors. This inconsistency makes AI models unreliable for applications requiring precise mathematical calculations.

Shifting error patterns

Interestingly, the nature of errors is evolving. Calculation errors now account for 39.8 percent of all mistakes, up from 33.4 percent, while rounding errors have decreased to 25.8 percent from 34.7 percent. The researchers conclude that AI models are getting better at making math look right through formatting and presentation, while still struggling with the actual arithmetic.

Potential solutions on the horizon

Several approaches may help address these limitations. Function calling, where AI models farm out arithmetic to deterministic sources, is already being implemented by major companies like Google and OpenAI. "The real headache happens with long, messy problems," Siuda noted. "The AI has to keep track of every little result at each stage, and it usually gets overwhelmed or confused."

Another promising avenue involves teaching models to verify responses through formal proofs. Google's DeepMind has developed an approach using reinforcement learning based on proofs developed with the Lean programming language and proof assistant, which scored a silver medal result on the International Mathematical Olympiad.

The current reality

For now, the ORCA researchers have a clear message: trust no AI for mathematical calculations. While these models continue to improve in many areas, their fundamental nature as prediction engines rather than logic engines means they will likely always struggle with the kind of precise, deterministic reasoning that mathematics requires. The gap between appearing to do math and actually doing math remains as wide as ever, even as the surface-level performance continues to improve.

Comments

Please log in or register to join the discussion