AMD's MLIR-AIE 1.3 release introduces a new C++ aiecc compiler alongside performance improvements and early Windows support, expanding Ryzen AI NPU capabilities beyond traditional AI workloads.

AMD has released MLIR-AIE 1.3, a major update to its compiler toolchain for AMD AI Engine devices, including Ryzen AI NPUs. Beyond the headline C++ aiecc compiler, the release improves AIE2 vector operations and runtime support and adds early native Windows support, continuing AMD's effort to open its NPUs to workloads other than AI.

Expanding Ryzen AI NPU Capabilities

The MLIR-AIE project provides Python APIs and other tools for programming AMD NPUs, as an alternative to the traditional Ryzen AI software workflows that focus on AI inference. The goal has been to open up Ryzen AI NPUs to digital signal processing and other non-AI/ML workloads, building on the versatility of LLVM and MLIR.

This expansion comes at a time when Ryzen AI NPUs are already seeing increased Linux support, with recent developments like Lemonade 10.0 server and FastFlowLM 0.9.35 adding Linux compatibility for running large language models.

The New C++ AIECC Compiler

The headline feature of MLIR-AIE 1.3 is the new C++ aiecc compiler, which serves as an alternative to the project's existing Python-based tooling. According to code comments in the new AIECC C++ file, this compiler acts as the main entry point for the AIE compiler driver, orchestrating the compilation flow for AIE devices.

The C++ implementation provides similar functionality to the Python aiecc.py tool and covers the full compilation flow:

- Command-line argument parsing using the LLVM CommandLine library

- MLIR module loading and parsing

- MLIR transformation pipeline execution

- Core compilation (xchesscc/peano)

- NPU instruction generation

- CDO/PDI/xclbin generation

- Multi-device support

The C++ aiecc compiler is described as offering several advantages over its Python counterpart, including better performance, C++17 support, and enhanced capabilities for MLIR module loading and parsing, NPU instruction generation, and ELF instruction generation.
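To make the staged flow listed above more concrete, here is a minimal Python sketch of a compiler driver that parses its arguments and then runs each stage for each target device. It only illustrates the general driver pattern: the `--device` flag, the `npu` default, and every function name are hypothetical and are not taken from aiecc, and each stage merely records that it ran.

```python
import argparse
from typing import List, Optional

# Stage names mirror the list in the article; the real driver's internals may differ.
STAGES = [
    "load and parse MLIR module",
    "run MLIR transformation pipeline",
    "compile cores (xchesscc/peano)",
    "generate NPU instructions",
    "generate CDO/PDI/xclbin",
]


def parse_args(argv: Optional[List[str]] = None) -> argparse.Namespace:
    """Parse a hypothetical command line (argparse stands in for LLVM CommandLine)."""
    parser = argparse.ArgumentParser(prog="driver-sketch")
    parser.add_argument("input", help="path to the input MLIR file")
    # Hypothetical flag: repeat it to target several devices.
    parser.add_argument("--device", action="append", default=None)
    return parser.parse_args(argv)


def run_driver(argv: Optional[List[str]] = None) -> List[str]:
    """Run every stage for every device and return a log of what was executed."""
    args = parse_args(argv)
    devices = args.device or ["npu"]  # placeholder default device name
    log: List[str] = []
    for stage in STAGES:
        for device in devices:
            # A real driver would invoke the tool for this stage here.
            log.append(f"{device}: {stage}")
    return log


if __name__ == "__main__":
    for line in run_driver(["design.mlir", "--device", "npu"]):
        print(line)
```

Keeping the stages in an ordered list makes the flow easy to inspect and extend, which is one plausible reason a driver like this is structured as a sequence of discrete steps.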

Performance and Feature Improvements

Beyond the new compiler, MLIR-AIE 1.3 includes substantial improvements to AIE2 vector operations. These enhancements focus on BF16 (Brain Floating Point 16) support, FMA (Fused Multiply-Add) operations, reductions, and tanh (hyperbolic tangent) functions.
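For readers unfamiliar with BF16, the format keeps the sign and 8-bit exponent of a 32-bit float but only 7 stored mantissa bits, so it is effectively the top half of a float32. The sketch below shows that relationship in plain Python using round-to-nearest-even. It illustrates the number format only; the helper names are made up for this example, and it makes no claim about how AIE2 hardware or MLIR-AIE rounds values.

```python
import math
import struct


def float_to_bf16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x (round-to-nearest-even).

    x must fit in float32 range; struct raises OverflowError otherwise.
    """
    if math.isnan(x):
        return 0x7FC0  # a canonical quiet NaN
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Add 0x7FFF plus the lowest kept bit so exact ties round to even.
    bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + bias) >> 16) & 0xFFFF


def bf16_bits_to_float(b: int) -> float:
    """Expand a bfloat16 bit pattern back to a Python float."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def bf16(x: float) -> float:
    """Round x to the nearest bfloat16 value."""
    return bf16_bits_to_float(float_to_bf16_bits(x))


if __name__ == "__main__":
    print(hex(float_to_bf16_bits(1.0)))  # 0x3f80
    print(bf16(3.14159))                 # 3.140625
    print(bf16(math.tanh(0.5)))          # tanh rounded to BF16 precision
```

The second line of output shows the cost of the narrower mantissa: 3.14159 becomes 3.140625, which is why vector kernels often pair BF16 inputs with wider accumulators for multiply-add and reduction work.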

The release also brings more robust runtime support and additional IR (Intermediate Representation) features, improving the overall development experience for those working with AMD's AI Engine devices.

Cross-Platform Support

One of the more practical additions in this release is early native Windows support, giving developers an alternative to working on Linux. This cross-platform capability aligns with AMD's broader strategy of making its AI Engine technology accessible across different development environments.

Ecosystem Integration

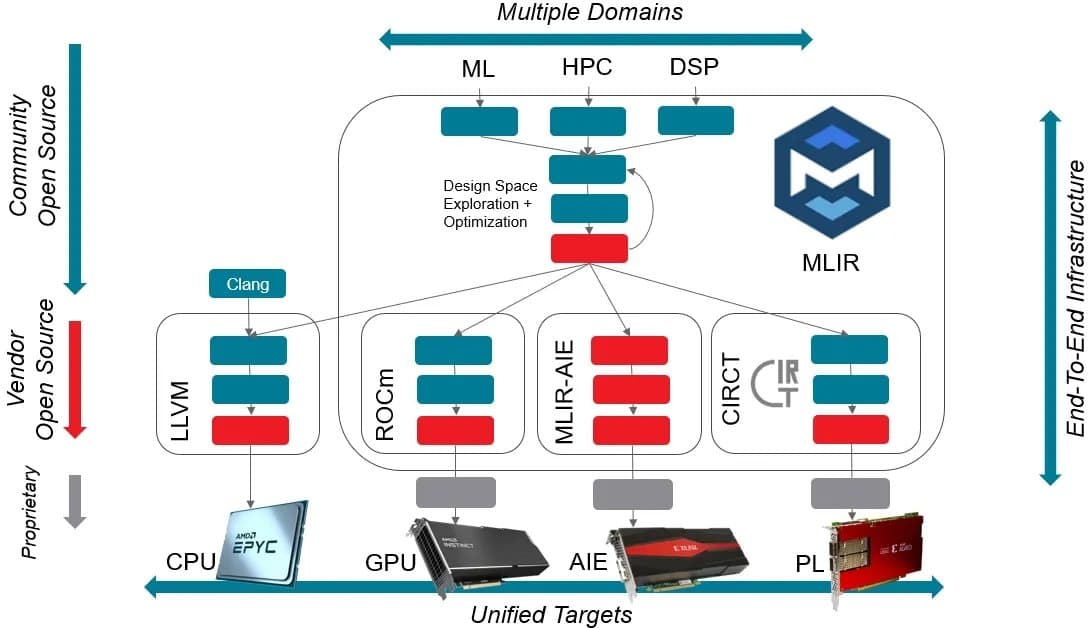

MLIR-AIE's approach leverages the broad support for MLIR in the software ecosystem and across hardware vendors. This strategy allows versatility in targeting other AMD products, including Radeon/Instinct GPUs via ROCm, CPUs via LLVM, and other AMD-Xilinx accelerator products.

The project also ties into Peano, AMD's LLVM-based compiler for AI Engine cores, creating a more cohesive development ecosystem for AMD's various accelerator technologies.

Availability

MLIR-AIE 1.3 is available now via GitHub, where developers can access downloads and detailed documentation about the new features and improvements. The README file provides comprehensive information about the new AIECC C++ compiler and other changes in this release.

This update represents AMD's continued investment in making its AI Engine technology more accessible and versatile, potentially opening new use cases for Ryzen AI NPUs beyond their original AI/ML focus.
