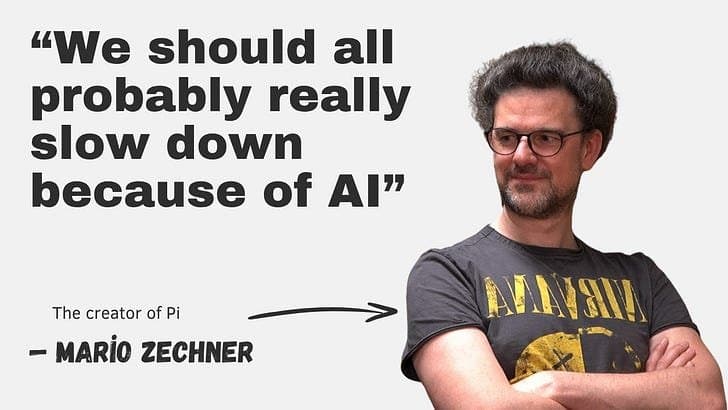

An in-depth exploration of Pi, a minimalist self-modifying AI coding agent created by Mario Zechner, and a discussion with Armin Ronacher about why human judgment remains crucial in an AI-driven development world.

Building Pi: The Self-Modifying AI Coding Agent and the Limits of Automation

In the latest episode of The Pragmatic Engineer Podcast, Mario Zechner, creator of Pi, and Armin Ronacher, creator of Flask, discuss AI coding, its limitations, and the enduring importance of human judgment in software development.

The Genesis of Pi

Pi emerged from Mario Zechner's frustration with Claude Code. Initially a big fan of Claude Code, Mario observed that as the team behind it added more features and increased velocity, bugs multiplied and the tool's behavior became unpredictable. This led him to create Pi with a different philosophy: minimalism and stability.

"I wanted an AI harness that behaves in a stable, consistent way," Mario explains. "The addition of new features caused Claude Code to act unpredictably, so I resolved to add as few features as possible to Pi."

Pi is built on the principle of self-modification, allowing users to create specialized harnesses for specific tasks. This approach represents a preview of how self-modifiable software might evolve in the future.

The Philosophy of Specialized Tools

Mario emphasizes that different projects require different harness types, as "the same hammer is not ideal for every single construction job."

"It should be MUCH easier to build specialized tools for specific tasks," Mario argues. "Pi is built with the goal of allowing the creation of specialized harnesses. It can modify itself so that a user can create the bespoke harness needed for any task."

The Risks of AI Agents

Both Mario and Armin express significant concerns about the risks of over-reliance on AI agents:

Automation Bias

"Automation bias is one of the biggest risks of working with AI agents," Mario warns. "Once devs confirm that an AI agent can produce acceptable code, they start to review its output less often, even though agents can – and do! – produce slop."

He advises being far more skeptical with agents and cautions that "the quality of their output isn't guaranteed, however well they performed previously."

Declining Code Quality

From his conversations with 30+ engineering teams, Armin has observed a troubling trend: "AI agents decrease code quality, but this is not on purpose. Code quality is down everywhere, and serious projects are shipping with 'vibe slop.'"

The causes include:

- Keeping agentic output clean requires deliberate effort

- Many developers aren't clear on how to maintain quality

- PR review fatigue

- Automation bias (assuming AI agents invariably generate good code)

Junior Engineers vs. AI Agents

Mario argues that junior engineers are more valuable than AI agents: "Unlike humans, agents don't retain lessons in the same way, nor feel the pain of bad code. Junior engineers do, and the pain of maintenance teaches them to simplify interfaces and avoid bad abstractions – which are both qualities of an effective senior engineer."

The Problem of Refactoring

"Agents refactor less because they feel no 'pain,'" Mario explains. "Humans rewrite bad interfaces because maintaining them hurts, whereas agents will obliviously churn out and extend a terrible structure, ad infinitum. This is a big reason why AI agents keep adding more tech debt."

Complexity and Decision-Making

Armin observes a new trend: "AI makes it harder for senior engineers to reject pointless complexity. Historically, senior engineers kept software complexity at bay simply by saying 'no' a lot. But these days, more junior engineers and product managers deploy agent-scripted counterarguments when a senior colleague kicks an idea to the curb. This makes decision-making exhausting, and more bad ideas make it into production as a result."

The Value of Friction

Counterintuitively, Armin argues that some friction in development processes is beneficial: "Frictionless shipping can actually be harmful. Some friction is desirable; for example, multi-reviewer approvals on critical services, SLO gates (different gates based on the service level objective offered), and migration checklists. The good thing about friction is that it makes humans stop and think."
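As a rough illustration of the kinds of friction Armin lists, the sketch below models a release gate that demands more human approvals for services with stricter SLOs and blocks migrations until a checklist is complete. Everything in it is an assumption made for illustration: the `Change` fields, the SLO thresholds, and the approval counts are hypothetical and do not come from the podcast or from any real tool.

```python
from dataclasses import dataclass

# Hypothetical policy table: (minimum SLO in percent, approvals required).
# Ordered from strictest to loosest; the numbers are illustrative only.
REQUIRED_APPROVALS = [
    (99.99, 3),
    (99.9, 2),
    (0.0, 1),
]


@dataclass
class Change:
    service_slo: float    # availability target of the affected service, in percent
    approvals: int        # distinct human reviewers who approved the change
    has_migration: bool   # whether the change includes a data or schema migration
    checklist_done: bool  # whether the migration checklist was completed


def required_approvals(slo: float) -> int:
    """Look up how many approvals a service with this SLO needs."""
    for threshold, needed in REQUIRED_APPROVALS:
        if slo >= threshold:
            return needed
    return 1


def gate(change: Change) -> list[str]:
    """Return the reasons a change is blocked; an empty list means it may ship."""
    reasons = []
    needed = required_approvals(change.service_slo)
    if change.approvals < needed:
        reasons.append(f"needs {needed} approvals, has {change.approvals}")
    if change.has_migration and not change.checklist_done:
        reasons.append("migration checklist incomplete")
    return reasons


if __name__ == "__main__":
    risky = Change(service_slo=99.99, approvals=2, has_migration=True, checklist_done=False)
    for reason in gate(risky):
        print("blocked:", reason)
```

The gate returns reasons rather than a bare pass or fail, so that a blocked change gives the humans involved something concrete to stop and think about.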

Staying Grounded in an AI-Driven World

When asked how he maintains perspective while building one of the most popular AI agent harnesses, Mario credits factors beyond technology: living in Austria, being a father, and enjoying the great outdoors are his antidotes to all the hype.

The Future of AI in Development

Looking ahead, both Mario and Armin emphasize that while AI tools like Pi have value, they should augment rather than replace human judgment. The conversation touches on open source's ability to withstand a tidal wave of agent-generated code, the challenges of working with code written by non-engineers, and the importance of maintaining quality standards in an increasingly automated development world.

Readers who want to explore Pi further can visit the official Pi website. OpenClaw, which is built on Pi, has its own site.

This conversation serves as an important reminder that while AI tools continue to evolve, the human element of software development – judgment, experience, and the ability to feel the pain of bad code – remains irreplaceable.
