LinkedIn has introduced a Cognitive Memory Agent (CMA) as part of its generative AI application stack to enable stateful, context-aware AI systems that retain and reuse knowledge across interactions. The system is designed to power applications such as its Hiring Assistant, addressing a fundamental limitation of large language model-based workflows: statelessness and the resulting loss of continuity across sessions.

CMA functions as a shared memory infrastructure layer between application agents and underlying language models. Instead of reconstructing context through repeated prompting, agents can persist, retrieve, and update memory through a dedicated system. This enables continuity across sessions, reduces redundant reasoning, and improves personalization in production environments where user context evolves.

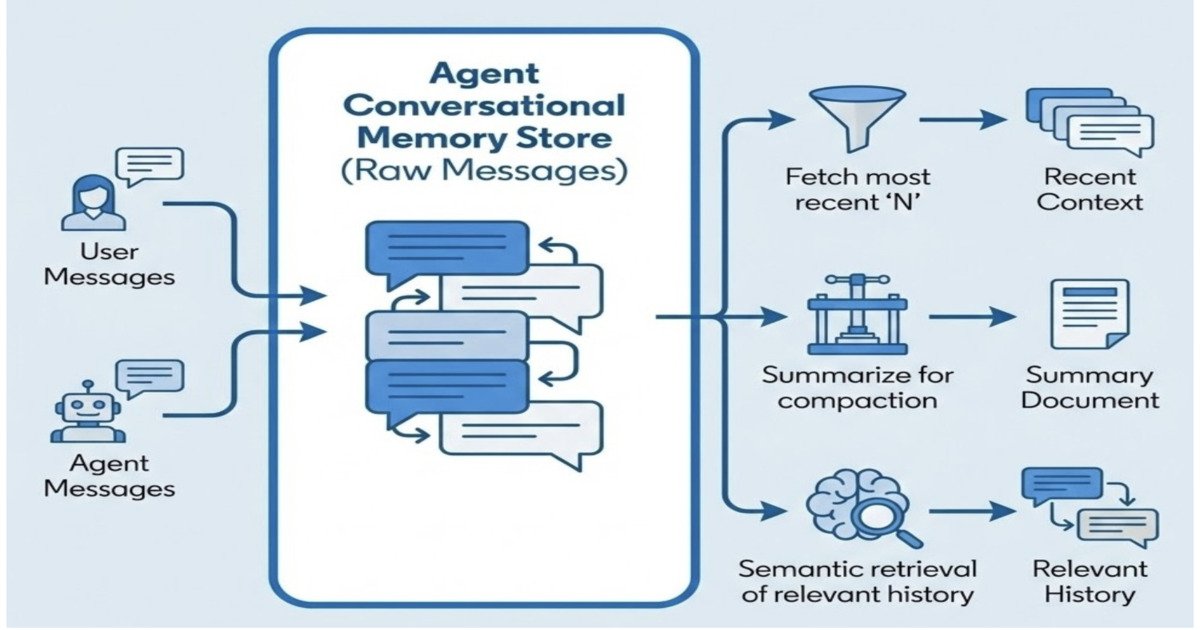

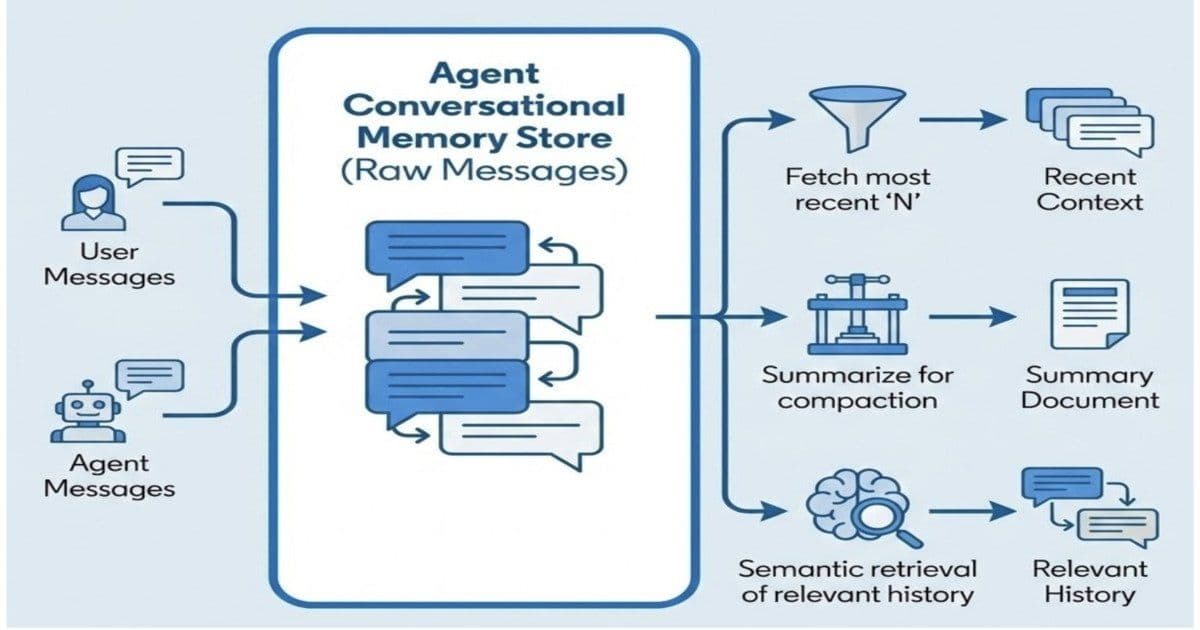

Conversational memory layer illustration (Source: LinkedIn Blog Post)

The architecture organizes memory into three distinct layers. Episodic memory captures interaction history and conversational events, allowing agents to recall past exchanges. Semantic memory stores structured knowledge derived from interactions, enabling reasoning over persistent facts about users, entities, or preferences. Procedural memory encodes learned workflows and behavioral patterns, helping agents improve task execution strategies over time. Together, these layers shift agent behavior from single-turn responses to longitudinal adaptation.
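As a rough illustration of the three layers described above, the following Python sketch models them as fields of a single container. The class name `AgentMemory`, its methods, and the sample data are assumptions made for illustration; the source does not describe LinkedIn's actual schema or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AgentMemory:
    """Hypothetical container for the three layers; not LinkedIn's schema."""

    # Episodic: ordered log of interaction events.
    episodic: List[str] = field(default_factory=list)
    # Semantic: persistent facts about users, entities, or preferences.
    semantic: Dict[str, str] = field(default_factory=dict)
    # Procedural: learned workflows, stored as task name -> ordered steps.
    procedural: Dict[str, List[str]] = field(default_factory=dict)

    def record_event(self, event: str) -> None:
        self.episodic.append(event)

    def learn_fact(self, key: str, value: str) -> None:
        self.semantic[key] = value

    def learn_workflow(self, task: str, steps: List[str]) -> None:
        # Copy the list so later changes by the caller do not leak in.
        self.procedural[task] = list(steps)


# Illustrative usage with made-up recruiting data.
memory = AgentMemory()
memory.record_event("recruiter asked for backend candidates in Seattle")
memory.learn_fact("preferred_location", "Seattle")
memory.learn_workflow("source_candidates", ["search", "rank", "request review"])
```

Keeping the layers as separate fields reflects that each one has a different shape and update pattern: an append-only log, a keyed fact table, and named step sequences.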

Xiaofeng Wang, an engineer at LinkedIn, noted in a post, "Memory is one of the most challenging and impactful pieces of building production agents," adding that it enables real personalization, continuity, and adaptation at scale.

CMA also plays a critical role in multi-agent systems. Rather than each agent maintaining an isolated context, CMA provides a shared memory substrate accessible across specialized agents responsible for planning, reasoning, and execution. This shared layer reduces state duplication, improves coordination, and ensures consistency in outputs across distributed workflows.
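The shared-substrate idea can be sketched as two agents reading and writing one store instead of each holding private state. Everything here (`SharedMemory`, `PlannerAgent`, `ExecutorAgent`, and the in-process lock) is a hypothetical simplification; a production system would presumably sit behind a networked service, which the source does not detail.

```python
import threading
from typing import Any, Dict, List


class SharedMemory:
    """Hypothetical in-process stand-in for a shared memory service."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._state: Dict[str, Any] = {}

    def write(self, key: str, value: Any) -> None:
        with self._lock:
            self._state[key] = value

    def read(self, key: str, default: Any = None) -> Any:
        with self._lock:
            return self._state.get(key, default)


class PlannerAgent:
    def __init__(self, memory: SharedMemory) -> None:
        self.memory = memory

    def plan(self, goal: str) -> None:
        # The plan goes into shared memory rather than agent-local state.
        self.memory.write("plan", [f"search: {goal}", f"rank: {goal}"])


class ExecutorAgent:
    def __init__(self, memory: SharedMemory) -> None:
        self.memory = memory

    def run(self) -> List[str]:
        # The executor sees whatever the planner last wrote.
        return [f"done {step}" for step in self.memory.read("plan", [])]


shared = SharedMemory()
PlannerAgent(shared).plan("backend engineers")
results = ExecutorAgent(shared).run()
```

Because both agents hold a reference to the same store, there is a single copy of the plan, which is the state-duplication benefit the paragraph above describes.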

From a systems perspective, CMA integrates multiple retrieval and lifecycle management mechanisms. Recent context retrieval supports short-term relevance, while semantic search enables access to long-term historical interactions. Memory compaction through summarization helps control storage growth and maintain performance at scale. These mechanisms introduce core engineering challenges around relevance ranking, staleness management, and consistency of evolving user context.
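The three mechanisms named above (recent-context retrieval, semantic search, and compaction through summarization) can be sketched in a few functions. This is a toy under stated assumptions: word-overlap (Jaccard) scoring stands in for embedding-based semantic search, and the `summarize` callback stands in for an LLM summarizer. None of the function names come from the source.

```python
from typing import Callable, List, Set


def tokens(text: str) -> Set[str]:
    return set(text.lower().split())


def recent(entries: List[str], n: int) -> List[str]:
    """Short-term retrieval: the n most recent entries, oldest first."""
    return entries[-n:] if n > 0 else []


def search(entries: List[str], query: str, k: int) -> List[str]:
    """Long-term retrieval ranked by Jaccard word overlap.

    Assumption: a real system would likely rank by embedding similarity.
    """
    q = tokens(query)

    def score(entry: str) -> float:
        t = tokens(entry)
        union = q | t
        return len(q & t) / len(union) if union else 0.0

    return sorted(entries, key=score, reverse=True)[:k]


def compact(
    entries: List[str],
    keep_last: int,
    summarize: Callable[[List[str]], str],
) -> List[str]:
    """Replace everything except the last keep_last entries with one summary."""
    cut = len(entries) - keep_last
    if cut <= 0:
        return list(entries)
    return [summarize(entries[:cut])] + entries[cut:]


log = [
    "recruiter wants backend engineers",
    "candidate prefers remote work",
    "recruiter rejected junior profiles",
    "interview scheduled for friday",
]
latest = recent(log, 2)
best = search(log, "remote work preference", 1)
smaller = compact(log, 2, lambda old: f"summary of {len(old)} events")
```

Compaction trades detail for bounded growth: once older entries are folded into a summary, later searches can only match what the summary preserved, which is one source of the relevance and staleness challenges mentioned above.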

Karthik Ramgopal, Distinguished Engineer at LinkedIn, emphasized the shift toward persistent context in agentic systems, stating, "Good agentic AI isn't stateless: It remembers, adapts, and compounds. One of the key capabilities enabling this is memory that lives beyond context windows."

Operationally, persistent memory systems introduce classic distributed-systems trade-offs. Determining what to store, when to retrieve it, and how to handle staleness becomes central to system correctness. Subhojit Banerjee, an MLOps Data Engineer, highlighted this: "Cache invalidation is one of the hardest problems in computer science, and glad you made the caveat clear. The obvious challenge in extracting this memory is correctly identifying episode boundaries, staleness, and conflict resolution."
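To make the staleness and conflict-resolution concerns concrete, here is a minimal sketch that uses a time-to-live check for staleness and last-write-wins by observation time for conflicts. Both policies are assumptions chosen for simplicity, and `FactStore` is a hypothetical name; the source does not say which policies CMA applies.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Fact:
    value: str
    observed_at: float  # seconds on any consistent clock


class FactStore:
    """Hypothetical store: TTL staleness plus last-write-wins conflicts."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl_seconds = ttl_seconds
        self._facts: Dict[str, Fact] = {}

    def upsert(self, key: str, value: str, observed_at: float) -> bool:
        """Store the fact unless an equally new or newer one exists."""
        current = self._facts.get(key)
        if current is not None and current.observed_at >= observed_at:
            return False  # conflict resolved in favor of the existing fact
        self._facts[key] = Fact(value, observed_at)
        return True

    def get(self, key: str, now: float) -> Optional[str]:
        """Return the value, or None if it is missing or older than the TTL."""
        fact = self._facts.get(key)
        if fact is None or now - fact.observed_at > self.ttl_seconds:
            return None
        return fact.value


store = FactStore(ttl_seconds=3600)
store.upsert("preferred_location", "Seattle", observed_at=100.0)
store.upsert("preferred_location", "Remote", observed_at=200.0)
late_arrival_accepted = store.upsert("preferred_location", "Austin", observed_at=150.0)
```

Last-write-wins is easy to reason about but silently discards late-arriving observations, as the rejected `"Austin"` update shows; whether that is acceptable depends on the application.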

In user-facing applications such as recruiting, LinkedIn also incorporates human validation into the workflow. This hybrid approach helps ensure that AI-generated outputs, augmented by persistent memory, remain aligned with user intent and business requirements, particularly in high-stakes decision environments.

CMA reflects a broader architectural shift in AI systems from stateless generation to stateful, memory-driven agent design. By externalizing memory into a dedicated infrastructure layer, LinkedIn positions CMA as a horizontal platform for building adaptive, personalized, and collaborative agentic systems at scale. The direction highlights a growing industry consensus: production-grade AI systems are not defined by models alone, but by the memory, context management, and infrastructure layers that surround them.

About the Author

Leela Kumili

Leela is a Lead Software Engineer at Starbucks with deep expertise in building scalable, cloud-native systems and distributed platforms. She drives architecture, delivery, and operational excellence across the Rewards Platform, leading efforts to modernize systems, improve scalability, and enhance reliability. In addition to her technical leadership, Leela serves as an AI Champion for the organization, identifying opportunities to improve developer productivity and workflows using LLM-based tools and establishing best practices for AI adoption. She is passionate about building production-ready systems, enhancing developer experience, and mentoring engineers to grow in both technical and strategic impact. Her interests include platform engineering, distributed systems, developer productivity, and bridging technical solutions with business and product goals.