As AI makes mass pull requests trivial to generate, Good Egg provides data-driven trust scoring for GitHub contributors by analyzing their contribution history across the ecosystem.

The democratization of software development through AI tools has created an unexpected challenge: the signal-to-noise ratio in open source contributions is rapidly deteriorating. When anyone can generate dozens of pull requests with minimal effort, how do maintainers distinguish genuine contributors from automated noise? Enter Good Egg, a trust scoring system that analyzes a contributor's entire GitHub footprint to provide context about their reliability and investment in the ecosystem.

The Problem: AI-Generated PRs and Eroded Trust Signals

The proliferation of AI coding assistants has made it trivial to generate pull requests at scale. While this democratizes contribution opportunities, it simultaneously floods maintainers with submissions that may lack the thoughtful engagement traditionally associated with quality contributions. A pull request that took minutes to generate through an AI tool carries fundamentally different weight than one crafted through hours of careful analysis and testing.

Good Egg addresses this by shifting from manual vouching to data-driven assessment. Instead of relying on subjective impressions or requiring maintainers to research contributor histories manually, it automatically mines a contributor's existing track record across the GitHub ecosystem. This approach recognizes that genuine investment in open source typically leaves a trail of consistent, quality contributions across multiple projects.

How Good Egg Works

The system offers three distinct scoring models, each taking a different approach to measuring contributor trustworthiness:

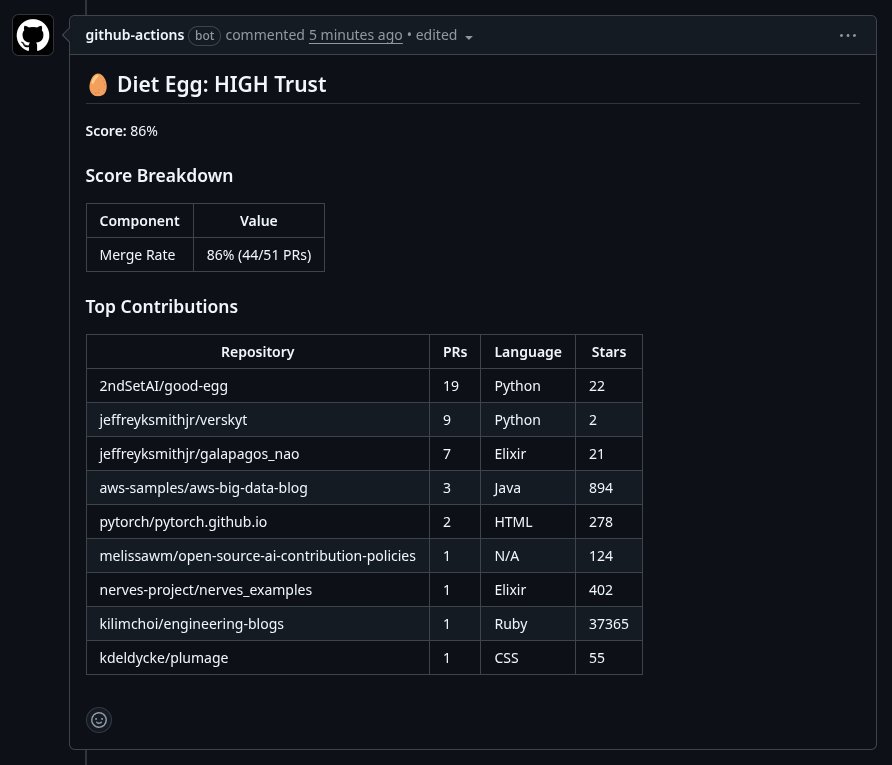

Diet Egg (v3) - The default model takes a straightforward approach: it calculates the all-time merge rate by dividing merged pull requests by all resolved pull requests (merged plus closed without merging). This simple metric captures whether a contributor's submissions tend to be accepted or rejected across their entire GitHub history.
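The Diet Egg formula fits in a few lines. The Python sketch below is an illustration, not Good Egg's source: the function name is hypothetical, leaving still-open PRs out follows from the "merged plus closed" denominator, and returning None when a contributor has no resolved PRs is an assumption about how the undefined case might be handled (for example, by reporting UNKNOWN).

```python
from typing import Optional


def merge_rate(merged_prs: int, closed_unmerged_prs: int) -> Optional[float]:
    """All-time merge rate: merged / (merged + closed without merging).

    Open PRs are left out because they have not been accepted or rejected yet.
    Returns None when there are no resolved PRs, since the ratio is undefined.
    """
    if merged_prs < 0 or closed_unmerged_prs < 0:
        raise ValueError("PR counts must be non-negative")
    resolved = merged_prs + closed_unmerged_prs
    if resolved == 0:
        return None
    return merged_prs / resolved


# A contributor with 30 merged and 10 rejected PRs has a 0.75 merge rate.
print(merge_rate(30, 10))
```

The simplicity is the point: a single ratio is easy to explain to contributors and cheap to compute from public PR data.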

Better Egg (v2) - This more sophisticated model combines graph scoring with merge rate and account age through logistic regression. It builds a weighted contribution graph that captures not just raw numbers but the quality and breadth of a contributor's engagement across the ecosystem.
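To make "combines ... through logistic regression" concrete, here is a minimal sketch of how the three inputs could be folded into one probability-like score. The coefficients are invented placeholders, not Good Egg's fitted weights, and the log transform on account age is an assumption; only the choice of inputs (graph score, merge rate, account age) and the use of a logistic function come from the description above. The function name `better_egg_score` is hypothetical.

```python
import math

# Hypothetical coefficients for illustration only; a real model would learn
# these from labeled data (e.g. whether past PRs were merged).
BIAS = -5.0
WEIGHTS = {
    "graph_score": 3.0,
    "merge_rate": 2.5,
    "log_account_age_days": 0.4,
}


def sigmoid(z: float) -> float:
    """Logistic function mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def better_egg_score(graph_score: float, merge_rate: float, account_age_days: int) -> float:
    """Combine features linearly, then squash with the logistic function."""
    features = {
        "graph_score": graph_score,
        "merge_rate": merge_rate,
        # log1p compresses age so a 10-year-old account does not
        # count ten times as much as a 1-year-old one.
        "log_account_age_days": math.log1p(account_age_days),
    }
    z = BIAS + sum(WEIGHTS[name] * value for name, value in features.items())
    return sigmoid(z)


veteran = better_egg_score(graph_score=0.9, merge_rate=0.9, account_age_days=2000)
newcomer = better_egg_score(graph_score=0.1, merge_rate=0.2, account_age_days=30)
print(f"veteran={veteran:.2f} newcomer={newcomer:.2f}")
```

Because the logistic output always lies between 0 and 1, it can be compared directly against fixed trust thresholds regardless of how the individual features are scaled.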

Good Egg (v1) - The original model relies purely on graph-based scoring of contribution history, applying network analysis to how contributors interact with projects and maintainers over time.
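The article does not specify the graph algorithm behind v1, so the following only illustrates what graph-based scoring can look like, using a standard technique swapped in for clarity: personalized PageRank over a contributor-repository graph. Weighting edges by merged PR counts and seeding the random walk on repositories the maintainer already trusts are both assumptions, as is the example data.

```python
from collections import defaultdict
from typing import Dict, Hashable, Iterable, Set, Tuple

Edge = Tuple[Hashable, Hashable, float]


def personalized_pagerank(
    edges: Iterable[Edge],
    seeds: Set[Hashable],
    damping: float = 0.85,
    iterations: int = 50,
) -> Dict[Hashable, float]:
    """Personalized PageRank on an undirected, weighted graph.

    Each edge (a, b, w) links two nodes with weight w > 0. Random walkers
    follow edges in proportion to weight and, with probability
    1 - damping, jump back to one of the seed nodes.
    """
    neighbors: Dict[Hashable, Dict[Hashable, float]] = defaultdict(dict)
    for a, b, w in edges:
        if w <= 0:
            continue  # ignore non-positive weights to avoid division by zero
        neighbors[a][b] = neighbors[a].get(b, 0.0) + w
        neighbors[b][a] = neighbors[b].get(a, 0.0) + w

    nodes = list(neighbors)
    present_seeds = [n for n in nodes if n in seeds]
    if not present_seeds:
        raise ValueError("at least one seed must appear in the graph")
    teleport = {n: 0.0 for n in nodes}
    for n in present_seeds:
        teleport[n] = 1.0 / len(present_seeds)

    rank = dict(teleport)
    for _ in range(iterations):
        new_rank = {n: (1 - damping) * teleport[n] for n in nodes}
        for node in nodes:
            out_weight = sum(neighbors[node].values())
            for nbr, w in neighbors[node].items():
                new_rank[nbr] += damping * rank[node] * w / out_weight
        rank = new_rank
    return rank


# Hypothetical graph: edge weight = number of merged PRs by a user in a repo.
edges = [
    ("alice", "org/core", 12),
    ("alice", "org/docs", 3),
    ("bob", "org/docs", 1),
    ("carol", "spam/repo", 40),
]
scores = personalized_pagerank(edges, seeds={"org/core"})
for user in ("alice", "bob", "carol"):
    print(user, round(scores[user], 4))
```

In this toy graph carol's 40 merged PRs earn no score at all, because her only repository is disconnected from the trusted seed. That is the kind of property that makes graph scoring harder to inflate with raw volume than a plain count.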

Trust Levels and Fresh Egg Advisory

Good Egg categorizes contributors into distinct trust levels:

- HIGH: Established contributors with strong cross-project track records

- MEDIUM: Some contribution history but limited breadth or recency

- LOW: Little or no prior contribution history; manual review recommended

- UNKNOWN: Insufficient data for meaningful scoring

- BOT: Automatically detected bot accounts (Dependabot, Renovate, etc.)

- EXISTING_CONTRIBUTOR: Authors who already have merged PRs in the target repository; scoring is skipped for them
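Because BOT and EXISTING_CONTRIBUTOR bypass scoring, a classifier naturally checks them before consulting any score. The sketch below shows one way to express that in Python. The enum mirrors the levels listed above, and the defaults match Good Egg's documented thresholds of 0.7 (high) and 0.3 (medium), but the check order, the inclusive comparisons, and the "[bot]" login-suffix heuristic are assumptions, not Good Egg's actual detection logic.

```python
from enum import Enum
from typing import Optional


class TrustLevel(Enum):
    HIGH = "HIGH"
    MEDIUM = "MEDIUM"
    LOW = "LOW"
    UNKNOWN = "UNKNOWN"
    BOT = "BOT"
    EXISTING_CONTRIBUTOR = "EXISTING_CONTRIBUTOR"


def looks_like_bot(login: str) -> bool:
    """Heuristic: GitHub App accounts have logins like 'dependabot[bot]'."""
    return login.endswith("[bot]")


def classify(
    login: str,
    score: Optional[float],
    has_merged_pr_in_repo: bool,
    high: float = 0.7,
    medium: float = 0.3,
) -> TrustLevel:
    if looks_like_bot(login):
        return TrustLevel.BOT
    if has_merged_pr_in_repo:
        return TrustLevel.EXISTING_CONTRIBUTOR  # scoring is skipped entirely
    if score is None:
        return TrustLevel.UNKNOWN  # not enough data to compute a score
    if score >= high:
        return TrustLevel.HIGH
    if score >= medium:
        return TrustLevel.MEDIUM
    return TrustLevel.LOW


print(classify("renovate[bot]", None, False))  # TrustLevel.BOT
print(classify("alice", 0.82, False))          # TrustLevel.HIGH
print(classify("newbie", 0.12, False))         # TrustLevel.LOW
```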

A particularly thoughtful feature is the Fresh Egg advisory, which flags accounts less than 365 days old. This isn't a penalty but rather an informational signal, as validation data shows fresh accounts correlate with lower merge rates. This allows maintainers to apply appropriate scrutiny without automatically dismissing potentially valuable new contributors.
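Since the advisory is a separate flag rather than a score adjustment, it can be computed independently of whichever model is selected. A minimal sketch, assuming the account creation time comes from the `created_at` field that GitHub's REST API returns for users (an ISO 8601 UTC string such as "2011-01-25T18:44:36Z"); the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

FRESH_ACCOUNT_DAYS = 365  # threshold stated for the Fresh Egg advisory


def parse_github_timestamp(value: str) -> datetime:
    """Parse '2011-01-25T18:44:36Z' into a timezone-aware UTC datetime."""
    # fromisoformat only accepts a trailing 'Z' on Python 3.11+, so normalize it.
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def is_fresh_egg(created_at: datetime, now: Optional[datetime] = None) -> bool:
    """True if the account is younger than FRESH_ACCOUNT_DAYS.

    The result is advisory only: it is reported alongside the trust level
    and does not change the score.
    """
    if now is None:
        now = datetime.now(timezone.utc)
    return now - created_at < timedelta(days=FRESH_ACCOUNT_DAYS)


reference = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(is_fresh_egg(parse_github_timestamp("2024-09-01T00:00:00Z"), reference))  # True
print(is_fresh_egg(parse_github_timestamp("2019-05-20T12:00:00Z"), reference))  # False
```

Passing `now` explicitly keeps the check deterministic in tests; in production it defaults to the current UTC time.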

Implementation Flexibility

Good Egg supports several integration options:

Quick Start with uv: The command uvx good-egg score <username> --repo <owner/repo> allows immediate testing without installation, requiring only a GitHub personal access token.

Python Library: For programmatic integration, the async library provides direct access to scoring functions, enabling custom workflows and analysis.

GitHub Action: Native integration into pull request workflows automatically scores contributors when PRs are opened, reopened, or synchronized. The action requires minimal configuration; its behavior can be customized through inputs, and its results are exposed as outputs for use in later workflow steps.

MCP Server: Integration with Claude Desktop through the Model Context Protocol allows AI assistants to leverage Good Egg's scoring capabilities within their workflows.

Configuration and Customization

The system offers extensive configuration options through environment variables prefixed with GOOD_EGG_ and YAML configuration files. Maintainers can adjust trust thresholds (defaulting to 0.7 for high trust and 0.3 for medium trust) and select scoring models based on their specific needs and risk tolerance.
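As an illustration of how prefixed environment variables can override those defaults, here is a minimal loader. The variable names after the GOOD_EGG_ prefix (HIGH_TRUST_THRESHOLD, MEDIUM_TRUST_THRESHOLD, SCORING_MODEL) are hypothetical placeholders, so check Good Egg's documentation for the real keys; YAML loading is omitted to keep the example dependency-free. The defaults match the documented 0.7 and 0.3 thresholds and the v3 (Diet Egg) default model.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

VALID_MODELS = {"v1", "v2", "v3"}  # Good Egg, Better Egg, Diet Egg


@dataclass(frozen=True)
class TrustConfig:
    high_threshold: float = 0.7
    medium_threshold: float = 0.3
    scoring_model: str = "v3"  # Diet Egg is the default model


def load_config(env: Optional[Mapping[str, str]] = None, prefix: str = "GOOD_EGG_") -> TrustConfig:
    """Build a TrustConfig, letting prefixed environment variables override defaults.

    The variable names are hypothetical placeholders for illustration.
    """
    env = os.environ if env is None else env
    defaults = TrustConfig()
    high = float(env.get(f"{prefix}HIGH_TRUST_THRESHOLD", defaults.high_threshold))
    medium = float(env.get(f"{prefix}MEDIUM_TRUST_THRESHOLD", defaults.medium_threshold))
    model = env.get(f"{prefix}SCORING_MODEL", defaults.scoring_model)

    # Reject configurations that would make the trust bands overlap or invert.
    if not 0.0 <= medium <= high <= 1.0:
        raise ValueError(f"expected 0 <= medium ({medium}) <= high ({high}) <= 1")
    if model not in VALID_MODELS:
        raise ValueError(f"unknown scoring model {model!r}; expected one of {sorted(VALID_MODELS)}")
    return TrustConfig(high, medium, model)


# A stricter project might raise the bar for HIGH trust:
print(load_config({"GOOD_EGG_HIGH_TRUST_THRESHOLD": "0.8"}))
```

Validating the thresholds at load time means a misconfigured workflow fails loudly instead of silently labeling every contributor MEDIUM.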

The Broader Context: Trust in an AI-Generated World

Good Egg represents a fascinating evolution in how we establish trust in digital communities. As AI tools become increasingly capable of generating human-like content and interactions, the need for systems that can distinguish genuine human investment from automated activity becomes paramount.

This isn't just about filtering spam or low-quality contributions. It's about preserving the social fabric of open source communities, where trust, reputation, and demonstrated commitment have traditionally played crucial roles in collaboration and decision-making. By quantifying these traditionally qualitative aspects, Good Egg provides a bridge between the efficiency gains of AI tools and the human values that make open source communities thrive.

The system acknowledges an important nuance: not all valuable contributions come from established contributors. Fresh accounts with low scores may still submit contributions worth considering. The goal isn't to create barriers but to provide context that enables better decision-making.

Practical Implications for Maintainers

For open source maintainers, Good Egg offers several practical benefits:

Efficiency: Automatically flagging low-trust contributors helps maintainers decide where to focus review time, reducing the effort spent on low-quality or potentially harmful contributions.

Consistency: Data-driven scoring applies the same criteria to every contributor, reducing inconsistency and individual bias in maintainer decisions.

Risk Management: Identifying bot accounts and low-trust contributors helps prevent potential security issues or spam from entering the codebase.

Community Building: With clear signals about contributors' track records, maintainers can more effectively nurture promising new contributors while maintaining project standards.

Looking Forward

As AI continues to transform software development, tools like Good Egg will likely become increasingly important. The challenge isn't just technical but philosophical: how do we preserve the human elements of collaboration and trust in an environment where machines can increasingly mimic human behavior?

Good Egg's approach, which focuses on demonstrated track records rather than real-time assessment, offers one compelling answer. It recognizes that genuine investment in open source communities leaves traces that AI-generated activity typically cannot replicate, at least not without substantial effort and time.

The system also raises interesting questions about the future of contribution assessment. As AI tools become more sophisticated, will merge rates become less meaningful? Will new metrics emerge to capture different aspects of contribution quality? Good Egg's modular architecture, supporting multiple scoring models, positions it well to evolve alongside these changes.

For now, Good Egg provides a practical solution to an immediate problem while also contributing to the broader conversation about trust, authenticity, and community in an AI-augmented world. It's a reminder that even as technology transforms how we create software, the human elements of trust and reputation remain as important as ever; they just need new tools to measure and manage them effectively.
