Google researchers and MIT collaborators have developed a predictive framework for scaling multi-agent systems that reveals critical trade-offs between tool usage and coordination strategies, enabling organizations to optimize their agentic architectures based on specific task requirements.

Google Publishes Scaling Principles for Agentic Architectures

Researchers from Google and MIT have published a comprehensive paper detailing a predictive framework for scaling multi-agent systems that moves beyond heuristic approaches to quantitative principles. This research provides organizations with a methodology to select optimal agentic architectures based on specific task requirements, addressing a critical challenge as enterprises increasingly adopt multi-agent systems for complex workflows.

The Scaling Framework: Beyond Heuristics

The framework developed by Google's research team introduces a systematic approach to understanding how multi-agent systems scale based on several predictive factors:

- The underlying LLM's intelligence index

- The baseline performance of a single agent

- The number of agents deployed

- The number of tools available

- Coordination metrics between agents

Unlike previous approaches that relied on trial-and-error or general principles, this framework provides mathematical models to predict performance outcomes before implementation. The researchers developed a regression model with 20 terms based on nine predictor variables and their interactions, carefully excluding interactions without clear mechanistic justification to avoid overfitting.
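To make the structure of such a model concrete, the following Python sketch builds a term list from main effects plus a whitelist of interactions, then evaluates a prediction. The predictor names, the choice of "justified" interactions, and any coefficients passed in are hypothetical placeholders, not the paper's nine predictors or its fitted values.

```python
from itertools import combinations
from math import prod

# Hypothetical predictor names (the paper uses nine; these four are placeholders).
PREDICTORS = ["intelligence_index", "single_agent_baseline", "num_agents", "num_tools"]

# Assumed whitelist: only interactions with a mechanistic story are kept.
JUSTIFIED_INTERACTIONS = {
    frozenset({"num_agents", "num_tools"}),
    frozenset({"single_agent_baseline", "num_agents"}),
}

def build_terms():
    """Return the intercept, every main effect, and only the whitelisted pairs."""
    terms = [()] + [(name,) for name in PREDICTORS]
    terms += [pair for pair in combinations(PREDICTORS, 2)
              if frozenset(pair) in JUSTIFIED_INTERACTIONS]
    return terms

def predict_score(coefficients, config):
    """Sum coefficient * product of the predictor values named in each term."""
    return sum(coef * prod(config[name] for name in term)
               for term, coef in coefficients.items())
```

With four predictors there are six possible pairwise interactions; the whitelist keeps two, so `build_terms()` returns seven terms instead of eleven. Pruning terms this way is what keeps the number of fitted coefficients small relative to the data.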

Three Dominant Effects in Multi-Agent Scaling

The research identified three primary effects that govern how multi-agent systems scale:

Tool-coordination trade-off: Tasks that require many tools perform worse in multi-agent setups, because coordination overhead grows with the number of tools. Organizations designing these systems have to weigh tool breadth against coordination cost.

Capability saturation: Adding additional agents yields diminishing returns when the single-agent baseline performance exceeds a certain threshold. This effect helps organizations determine the optimal number of agents for a given task without over-provisioning.

Topology-dependent error amplification: Centralized orchestration reduces error amplification compared to decentralized approaches, though this comes with different trade-offs in terms of communication overhead and single points of failure.
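One way to read the first two effects is as negative interaction terms on the benefit of adding an agent. The sketch below is only an illustration of that reading: the three coefficients are invented to show the sign pattern and are not values from the paper.

```python
# Hypothetical coefficients, chosen only to illustrate the sign pattern.
B_AGENTS = 0.05               # assumed gain from one extra agent in isolation
B_AGENTS_X_TOOLS = -0.004     # tool-coordination trade-off (assumed magnitude)
B_AGENTS_X_BASELINE = -0.06   # capability saturation (assumed magnitude)

def marginal_gain_per_agent(num_tools: int, single_agent_baseline: float) -> float:
    """Predicted change in score from adding one agent, under a linear model
    with agent-by-tool and agent-by-baseline interaction terms."""
    return (B_AGENTS
            + B_AGENTS_X_TOOLS * num_tools
            + B_AGENTS_X_BASELINE * single_agent_baseline)

# Few tools, weak baseline: an extra agent is predicted to help.
light_task = marginal_gain_per_agent(num_tools=2, single_agent_baseline=0.2)
# Many tools: coordination overhead outweighs the gain.
tool_heavy = marginal_gain_per_agent(num_tools=16, single_agent_baseline=0.2)
# Strong single-agent baseline: little headroom left for extra agents.
saturated = marginal_gain_per_agent(num_tools=2, single_agent_baseline=0.9)
```

Under these assumed numbers, `light_task` is positive while `tool_heavy` and `saturated` are negative, which mirrors the qualitative claims above without asserting anything about the real effect sizes.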

Architecture Classification and Coordination Strategies

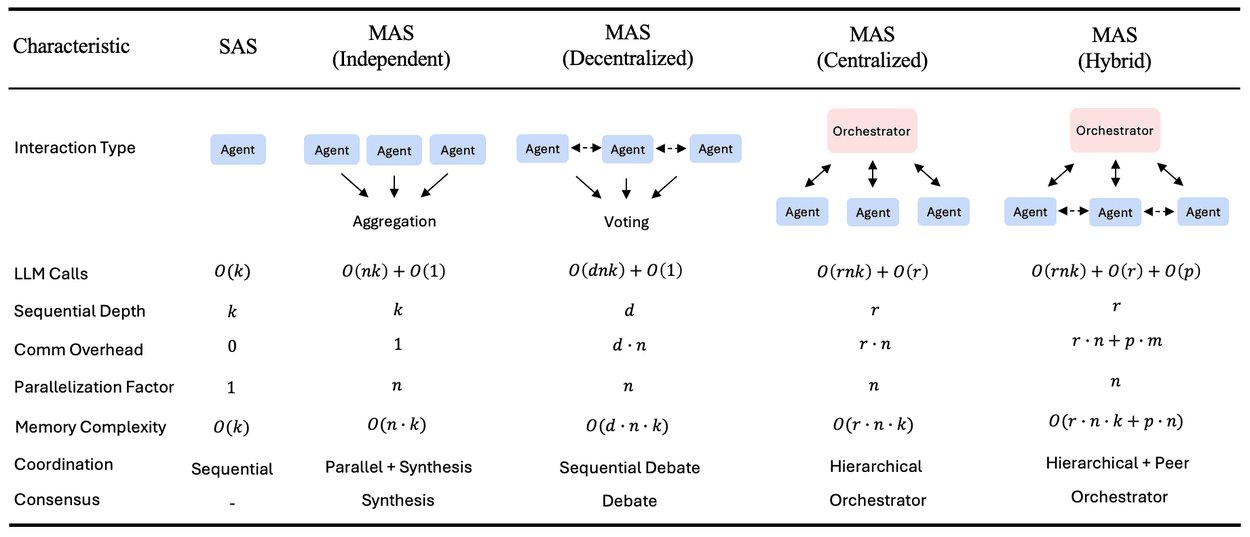

Google classified multi-agent architectures into four categories based on coordination patterns:

- Independent: No inter-agent coordination, simplest but least effective for complex tasks

- Centralized: Agents communicate only with a central orchestrator, reducing error amplification

- Decentralized: Peer-to-peer coordination, which spreads work across agents but leaves more room for errors to amplify

- Hybrid: Combines centralized orchestration with peer-to-peer coordination

Each architecture type has different configuration parameters, computational complexities, and memory requirements, creating a complex decision matrix for organizations implementing these systems.
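The four categories can be sketched as communication graphs. The Python below is a minimal illustration, assuming a fully connected peer mesh for the decentralized case and "orchestrator plus peer mesh" for the hybrid case; the paper's exact definitions may differ, and all names here are hypothetical.

```python
from enum import Enum
from itertools import combinations

class Topology(Enum):
    INDEPENDENT = "independent"
    CENTRALIZED = "centralized"
    DECENTRALIZED = "decentralized"
    HYBRID = "hybrid"

ORCHESTRATOR = "orchestrator"  # hypothetical name for the central coordinator

def communication_links(topology, agents):
    """Return the set of (sender, receiver) pairs that may exchange messages."""
    hub = {(ORCHESTRATOR, agent) for agent in agents}
    peers = set(combinations(agents, 2))  # assumption: every pair of agents can talk
    if topology is Topology.INDEPENDENT:
        return set()
    if topology is Topology.CENTRALIZED:
        return hub
    if topology is Topology.DECENTRALIZED:
        return peers
    return hub | peers  # HYBRID, under the assumption stated above
```

For four agents this gives 0, 4, 6, and 10 links respectively, which shows why the link count (and with it the coordination cost) grows linearly for centralized designs but quadratically once peer-to-peer channels are allowed.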

Task-Dependent Coordination Strategies

One of the most significant findings is that the optimal coordination strategy varies by task type:

- Financial reasoning tasks benefit from centralized orchestration, where a central coordinator can maintain consistent logic and reduce error propagation

- Web navigation tasks perform better with decentralized strategies, allowing parallel exploration and reducing bottlenecks

When evaluated on held-out test data, the scaling framework successfully predicted the optimal coordination strategy 87% of the time, demonstrating its practical value for real-world implementations.
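A figure like this can be computed by comparing, for each held-out case, the strategy with the highest predicted score against the strategy with the highest observed score. The sketch below shows that calculation in Python; the function names and the sample numbers are hypothetical and are not the paper's evaluation code or data.

```python
def pick_strategy(scores):
    """Return the strategy name with the highest score."""
    return max(scores, key=scores.get)

def selection_accuracy(cases):
    """Fraction of cases where the predicted best strategy matches the observed best.

    cases: list of (predicted_scores, observed_scores) pairs, each a dict
    mapping strategy name to score.
    """
    hits = sum(pick_strategy(pred) == pick_strategy(obs) for pred, obs in cases)
    return hits / len(cases)

# Made-up held-out cases for illustration only.
held_out = [
    ({"centralized": 0.7, "decentralized": 0.5}, {"centralized": 0.8, "decentralized": 0.6}),
    ({"centralized": 0.4, "decentralized": 0.6}, {"centralized": 0.3, "decentralized": 0.7}),
    ({"centralized": 0.6, "decentralized": 0.5}, {"centralized": 0.4, "decentralized": 0.5}),
    ({"centralized": 0.2, "decentralized": 0.9}, {"centralized": 0.1, "decentralized": 0.8}),
]
accuracy = selection_accuracy(held_out)
```

Note that this metric only checks whether the top-ranked strategy is right; it says nothing about how close the predicted scores are to the observed ones.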

Business Implications and Implementation Considerations

For organizations adopting multi-agent systems, this research provides concrete guidance for architecture decisions:

Resource allocation: Understanding capability saturation helps prevent over-provisioning of agents, optimizing cloud resource utilization and associated costs.

Tool selection: The tool-coordination trade-off suggests that organizations should carefully evaluate which tools are essential versus which create coordination overhead.

Orchestration strategy: The task-dependent nature of optimal coordination means organizations must analyze their specific use cases rather than adopting a one-size-fits-all approach.

Error management: Topology-dependent error amplification considerations should influence architecture decisions based on the criticality and error tolerance of specific applications.

Limitations and Future Research Directions

The Google team acknowledges several limitations in their current model:

- "Tool-heavy" tasks cause inefficiencies in multi-agent coordination, suggesting the need for specialized coordination protocols for tool-intensive scenarios

- The model may not capture all edge cases in complex, real-world environments

- Additional research is needed for specialized domains with unique requirements

These limitations point toward opportunities for further refinement and domain-specific adaptations of the framework.

Industry Context and Competitive Landscape

Google's research builds on and differentiates from other industry approaches:

- Amazon's multi-agent collaboration framework for Amazon Bedrock enables specialized agents to work under a supervisor's coordination

- Google's previous guide on eight essential design patterns for multi-agent systems provided concrete explanations with sample code for their Agent Development Kit

- The research positions Google's approach as more quantitative and predictive compared to competitors' more heuristic or pattern-based methodologies

For organizations evaluating multi-agent solutions, this research provides a framework for comparing approaches beyond marketing claims, focusing instead on measurable performance characteristics.

Practical Implementation Advice

Based on the research findings and community feedback, organizations should consider the following when implementing multi-agent systems:

Start with task analysis: Before selecting an architecture, thoroughly analyze the specific requirements and characteristics of the tasks the system will perform.

Benchmark single-agent performance: Establish a baseline with a single agent to understand the potential gains from multi-agent approaches and identify capability saturation points.

Consider hybrid approaches: For complex workflows, consider hybrid coordination strategies that can adapt based on task phases or components.

Implement monitoring: Because error amplification depends on topology, implement robust monitoring that detects errors early and coordinates their handling across agents.

Iterative optimization: Use the framework's predictive capabilities to model different configurations before implementation, then refine based on actual performance data.
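The benchmarking and iterative-optimization steps can be combined into a simple shortlist procedure: score each candidate configuration with a predictive model and keep only those expected to beat the single-agent baseline by a meaningful margin. The Python sketch below assumes a caller-supplied `predict` function and a `min_gain` threshold; both are hypothetical and stand in for whatever model and tolerance an organization actually uses.

```python
from itertools import product

def shortlist(predict, topologies, agent_counts, single_agent_score, min_gain):
    """Return (topology, num_agents) pairs whose predicted score beats the
    single-agent baseline by at least min_gain, best first."""
    candidates = [
        (topology, n)
        for topology, n in product(topologies, agent_counts)
        if predict(topology, n) >= single_agent_score + min_gain
    ]
    return sorted(candidates, key=lambda c: predict(*c), reverse=True)

# Toy predictor backed by made-up scores, for illustration only.
TOY_SCORES = {
    ("centralized", 2): 0.62, ("centralized", 4): 0.60,
    ("decentralized", 2): 0.52, ("decentralized", 4): 0.50,
}

def toy_predict(topology, n):
    return TOY_SCORES[(topology, n)]

ranked = shortlist(toy_predict, ["centralized", "decentralized"], [2, 4],
                   single_agent_score=0.55, min_gain=0.03)
```

An empty shortlist is itself a useful result: it suggests the single agent is already close to saturation for that task, so adding agents is unlikely to pay for its coordination cost. Whatever survives the shortlist should still be validated against measured performance.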

As the researchers put it: "As foundational models like Gemini continue to advance, our research suggests that smarter models don't replace the need for multi-agent systems, they accelerate it, but only when the architecture is right. By moving from heuristics to quantitative principles, we can build the next generation of AI agents that are not just more numerous, but smarter, safer, and more efficient."

For organizations developing AI strategies, this research provides a foundation for making informed decisions about multi-agent system design, moving beyond theoretical benefits to practical, quantifiable outcomes that align with business objectives and technical constraints.
