Google has announced significant updates to its Home platform, addressing long-standing user frustrations while introducing a new Live Search feature that uses Gemini to analyze and describe live camera feeds in real time.

Google's recent Home platform updates mark a significant evolution in the company's approach to smart home technology, addressing years of user frustrations while introducing a feature that could redefine how people interact with their connected devices. At the center of these updates is Live Search, which uses Google's Gemini AI to analyze and describe live camera feeds in real time, offering a glimpse into the future of AI-powered home assistance.

The Home platform has long been a source of contention among users, with complaints ranging from inconsistent device integration to unintuitive controls and limited functionality. Google's latest update appears to directly confront these issues, implementing a redesigned interface that centralizes device control and simplifies automation setup. This represents a notable admission from Google that its previous smart home experience failed to meet user expectations, despite the growing popularity of connected devices.

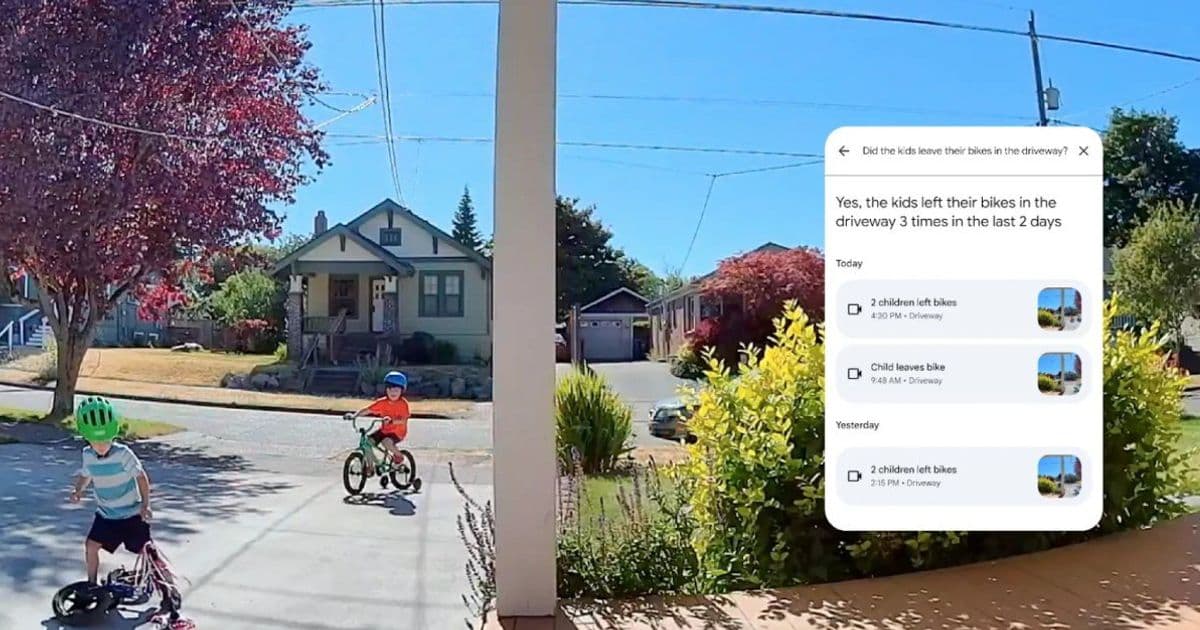

Live Search emerges as the most innovative addition to the platform, transforming smart cameras from simple recording devices into intelligent observers. The feature allows users to point their device's camera at any scene and ask natural language questions about what they're seeing. For instance, a user could inquire "What items are on the kitchen counter?" or "Is there any mail on the table?" and receive immediate, descriptive responses from Gemini. This functionality extends beyond basic object recognition, providing contextual information about scenes and potentially identifying specific items, people, or activities.

The technical implementation behind Live Search sits at the intersection of computer vision and natural language processing. When activated, the system processes visual input through a series of neural networks to identify objects, people, and spatial relationships. This information is then passed to Gemini, which formulates a natural language response based on its training data and contextual understanding. The result is a system that can interpret complex scenes and describe them in real time.
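To make the two-stage flow described above more concrete, here is a minimal Python sketch. It is not Google's implementation, and it calls no real Home or Gemini API. Every name in it (`Detection`, `describe_layout`, `build_prompt`, `answer_question`, `echo_model`) is hypothetical, the detections are hard-coded stand-ins for what a vision model might return, and the language model is replaced by a stub that simply echoes its prompt.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Detection:
    """One object found in a camera frame (hypothetical structure)."""
    label: str
    x: float           # horizontal center of the box: 0.0 (left) to 1.0 (right)
    confidence: float  # detector confidence between 0.0 and 1.0


def describe_layout(detections: List[Detection],
                    min_confidence: float = 0.5) -> List[str]:
    """Stage 1: drop low-confidence detections and order the rest left to right."""
    kept = sorted(
        (d for d in detections if d.confidence >= min_confidence),
        key=lambda d: d.x,
    )
    return [f"{d.label} (confidence {d.confidence:.2f})" for d in kept]


def build_prompt(question: str, facts: List[str]) -> str:
    """Stage 2a: combine the scene facts with the user's question."""
    scene = "; ".join(facts) if facts else "no objects detected"
    return f"Scene, left to right: {scene}.\nQuestion: {question}"


def answer_question(question: str,
                    detections: List[Detection],
                    language_model: Callable[[str], str]) -> str:
    """Stage 2b: hand the prompt to whatever language model is plugged in."""
    return language_model(build_prompt(question, describe_layout(detections)))


def echo_model(prompt: str) -> str:
    """Stand-in for a real language model: it returns the prompt unchanged."""
    return prompt


if __name__ == "__main__":
    # Hard-coded detections standing in for the output of a vision model.
    frame_detections = [
        Detection("mug", 0.8, 0.91),
        Detection("envelope", 0.2, 0.77),
        Detection("shadow", 0.5, 0.20),  # below the threshold, so it is dropped
    ]
    print(answer_question("Is there any mail on the table?",
                          frame_detections, echo_model))
```

Passing the language model in as a plain callable keeps the vision stage and the language stage separate, so either could be swapped out. A production system would likely pass much richer scene information than a list of labels.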

Community reactions to these updates have been varied, reflecting both excitement and skepticism. On platforms like Reddit and Twitter, tech enthusiasts have welcomed the fixes to longstanding annoyances while expressing curiosity about Live Search's capabilities. Early adopters have shared positive experiences with the improved interface, noting that device management now feels more intuitive and responsive. However, concerns about privacy and data security remain prominent, with many questioning how Google handles and stores information from live camera feeds.

Adoption signals suggest Google may be onto something significant. The company reports that internal testing showed high engagement rates with Live Search, with users spending an average of 3 to 5 minutes per session exploring the feature's capabilities. Additionally, Google has secured partnerships with several smart camera manufacturers to ensure broad compatibility, indicating confidence in the technology's market potential. These partnerships could help establish Live Search as a de facto standard for camera-based AI analysis in the smart home ecosystem.

Counter-perspectives highlight significant challenges that Google must overcome to make Live Search successful. Privacy advocates have raised serious concerns about the implications of constant visual monitoring, particularly in home environments where people expect a degree of privacy. The feature's accuracy also remains a question mark, as current AI systems often struggle with complex scenes, unusual objects, or poor lighting conditions. Some industry observers note that while the technology is impressive, it may ultimately be a solution in search of a problem, as most users haven't expressed a strong need for AI-powered camera analysis.

From a competitive standpoint, Google's move puts pressure on other smart home platforms to advance their AI capabilities. Amazon's Alexa and Apple's HomeKit have similar camera integration features but lack the sophisticated AI analysis that Live Search promises. This could potentially shift the competitive dynamics in the smart home market, with AI functionality becoming a key differentiator. Google's deep investment in AI technology gives it a potential advantage in this space, though competitors are rapidly developing their own capabilities.

The timing of these updates is noteworthy, coming as Google faces increasing competition in the AI space from companies like OpenAI and Anthropic. By integrating Gemini directly into its smart home ecosystem, Google is creating practical applications for its AI technology beyond chatbots and content generation. This strategy could help establish Gemini as a versatile AI platform with real-world utility, differentiating it from competitors that focus primarily on conversational AI.

For developers, the Live Search feature opens new possibilities for smart home applications. The API could enable third-party developers to create innovative uses for camera-based AI analysis, from home security enhancements to accessibility tools for visually impaired users. Google has indicated plans to release developer documentation and SDKs for the feature in the coming months, suggesting the company is committed to building an ecosystem around this technology.

Looking ahead, the success of these updates will likely depend on three factors: privacy protections, accuracy improvements, and practical utility. If Google can address privacy concerns while delivering reliable functionality, Live Search could become a defining feature of its smart home ecosystem. However, if the technology proves unreliable or raises too many privacy questions, it may remain a niche feature rather than a mainstream selling point.

Google's Home updates reflect a broader trend in the tech industry toward more practical AI applications. Rather than focusing solely on conversational AI or content generation, companies are increasingly exploring how AI can enhance everyday experiences in tangible ways. The smart home represents an ideal testing ground for these applications, as it combines physical environments with digital interfaces that can benefit from intelligent assistance.

As with many technological advancements, the ultimate value of Live Search may not be in its technical sophistication but in how well it solves real problems for users. If the feature can genuinely improve the smart home experience by providing useful information or assistance, it could set a new standard for AI integration in connected devices. If not, it may join the long list of overhyped technologies that failed to deliver on their promise.
