Intel and SambaNova have announced a joint heterogeneous AI inference platform that splits workloads across different hardware types, with Intel's Xeon 6 processors playing a central role in agentic workloads and system orchestration.

Intel and SambaNova on Wednesday announced a joint, production-ready heterogeneous inference architecture that uses AI accelerators or GPUs for prefill, SambaNova's SN50 reconfigurable dataflow units (RDUs) for decode, and Xeon 6 processors for agentic tools and system orchestration. The platform is designed to address as broad a set of workloads as possible, with the aim of taking market share from Nvidia and from emerging players.

Breaking Down the Heterogeneous Architecture

The new platform represents a strategic shift in how AI inference workloads are distributed across different types of silicon. Rather than relying on a single type of accelerator, the architecture separates inference into distinct stages handled by different hardware:

- Prefill stage: AI GPUs or AI accelerators ingest long prompts and build key-value caches

- Decode stage: SambaNova's SN50 RDU handles token decoding and generation

- Agentic operations: Xeon 6 processors run agent-related operations like code compilation, execution, and output validation

- System orchestration: Xeon 6 also coordinates and distributes workloads across the heterogeneous hardware stack

This approach mirrors Nvidia's strategy with its upcoming Rubin platform, which similarly splits workloads between its Rubin CPX and heavy-duty Rubin GPU with HBM4 memory. However, Intel's partnership with SambaNova offers a crucial advantage: the Rubin CPX is not coming to market, while Intel's Xeon 6 processors are already shipping and widely deployed.
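To make the division of labor concrete, the stage-to-hardware split described above can be sketched as a small dispatcher. This is an illustrative sketch only, not the Intel or SambaNova software stack: the `Stage` enum, the pool names, and the `Orchestrator` class are hypothetical names invented for this example, and the real platform's scheduling interface has not been described in the announcement.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    PREFILL = "prefill"        # ingest the prompt, build the key-value cache
    DECODE = "decode"          # generate output tokens
    AGENT_TOOL = "agent_tool"  # compile, execute, and validate code


# Stage-to-hardware mapping as described in the announcement.
# Pool names are hypothetical labels, not product identifiers.
STAGE_TO_POOL = {
    Stage.PREFILL: "gpu_or_accelerator",
    Stage.DECODE: "sn50_rdu",
    Stage.AGENT_TOOL: "xeon6_cpu",
}


@dataclass
class Orchestrator:
    """Stands in for the orchestration role the article assigns to Xeon 6."""

    log: list = field(default_factory=list)

    def dispatch(self, request_id: str, stage: Stage) -> str:
        # Look up the hardware pool for this stage and record the decision.
        pool = STAGE_TO_POOL[stage]
        self.log.append((request_id, stage.value, pool))
        return pool

    def run_request(self, request_id: str, uses_tools: bool) -> list:
        # Walk one request through its stages in order; agentic requests
        # add a CPU-side tool step after decoding.
        stages = [Stage.PREFILL, Stage.DECODE]
        if uses_tools:
            stages.append(Stage.AGENT_TOOL)
        return [self.dispatch(request_id, stage) for stage in stages]


if __name__ == "__main__":
    orchestrator = Orchestrator()
    print(orchestrator.run_request("req-1", uses_tools=True))
```

A plain chat request would stop after decode, while a coding-agent request would also visit the CPU pool, which is the case the Xeon 6 claims in the announcement are aimed at.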

Technical Performance Claims

According to SambaNova's internal testing, Xeon 6 demonstrates significant performance advantages in key workloads:

- 50% faster LLVM compilation compared to Arm-based server CPUs

- 70% higher performance in vector database workloads compared to competing x86 processors (specifically AMD EPYC)

These gains are particularly relevant for coding agents and similar agentic applications that require rapid compilation and execution of code, as well as efficient vector database operations for context management.

Market Implications and Deployment Strategy

The heterogeneous inference platform is scheduled to be available in the second half of 2026 to enterprises, cloud operators, and sovereign AI programs seeking scalable inference platforms. The timing positions the solution as a mid-term alternative to Nvidia's platforms in an AI inference market that Nvidia currently dominates.

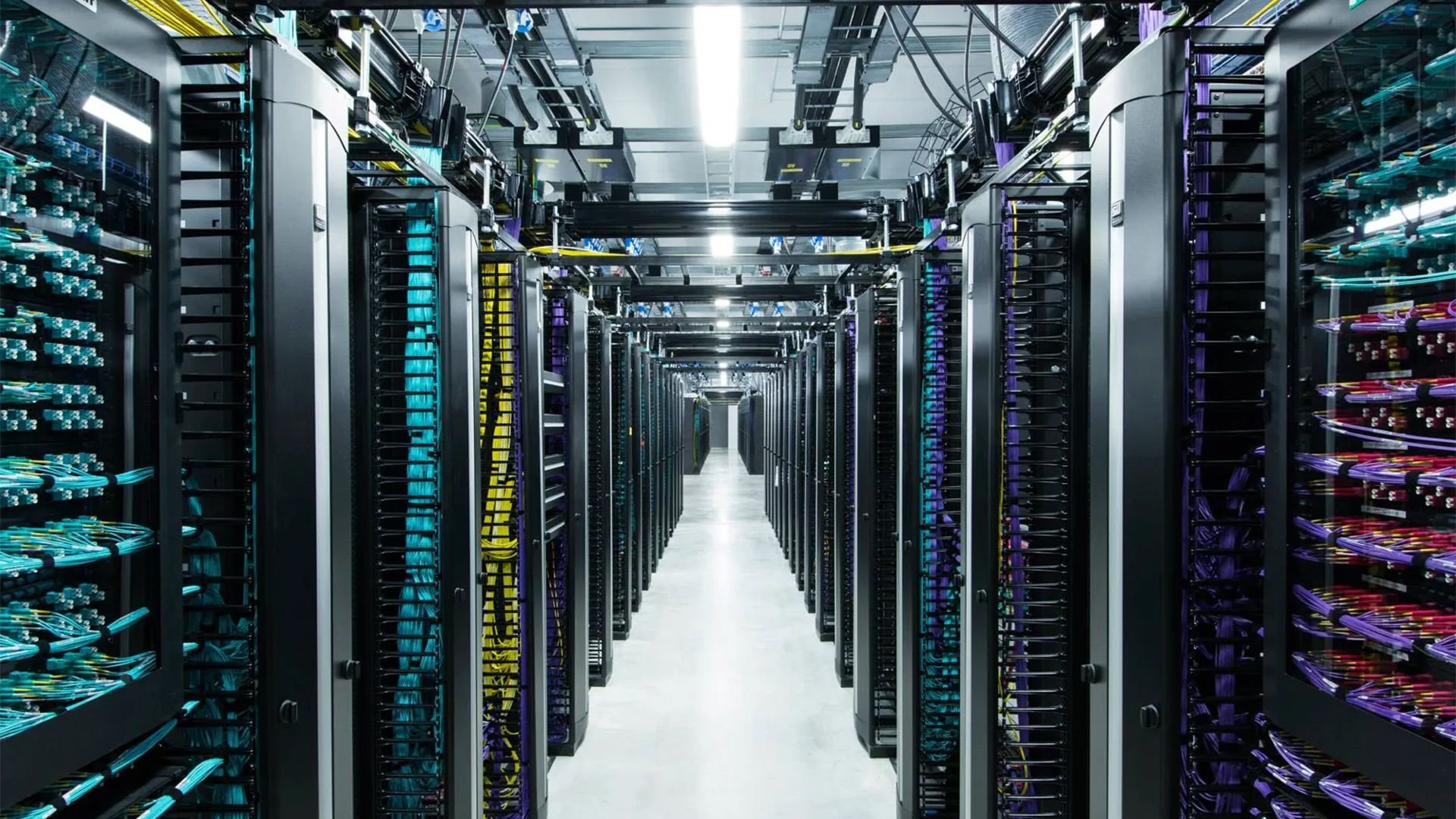

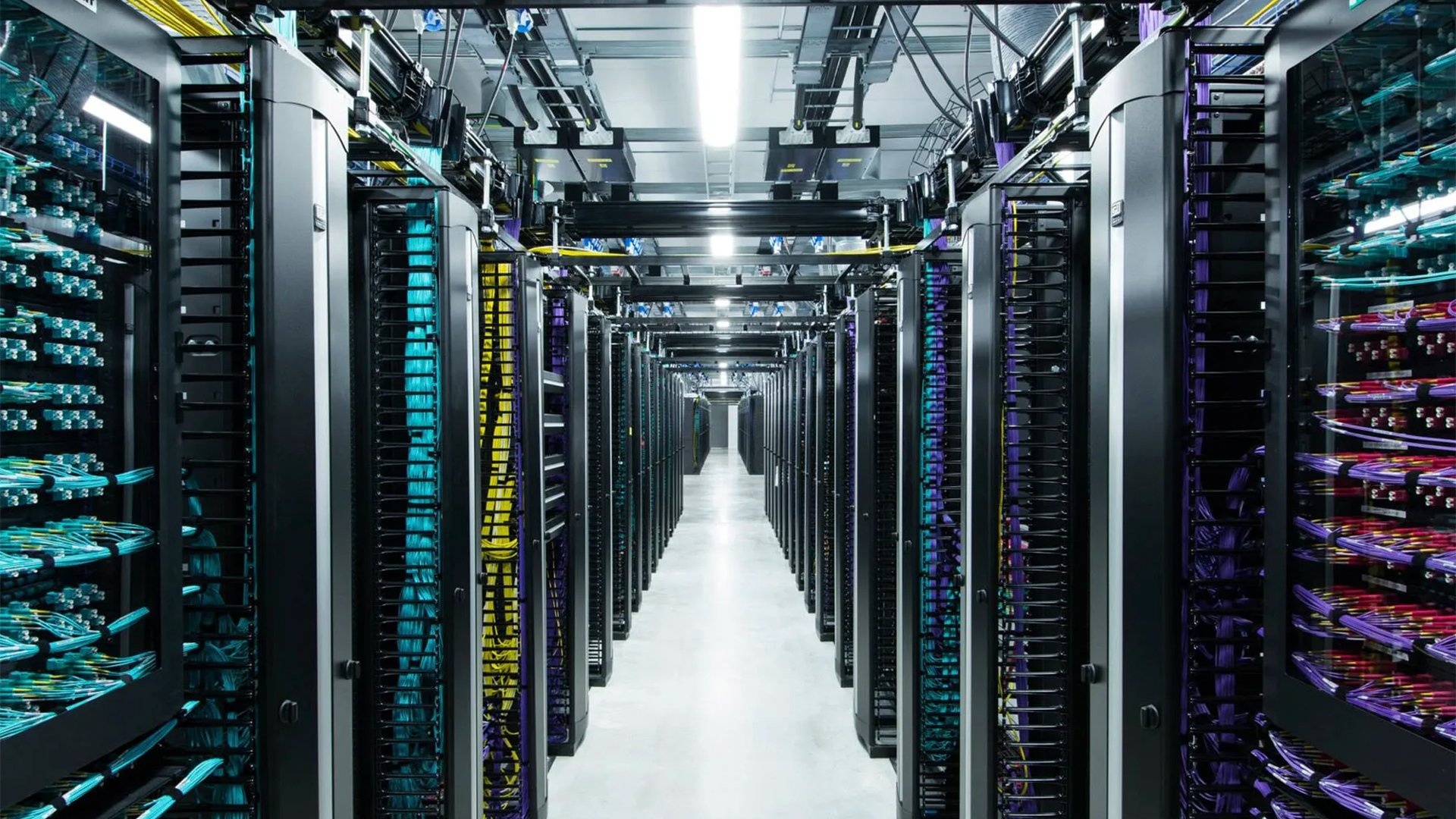

Perhaps the most significant advantage of this joint architecture is its deployment flexibility. SambaNova SN50 and Xeon-based servers are drop-in compatible with data centers that can handle 30 kW power delivery, which encompasses the vast majority of enterprise data centers. This eliminates the need for the costly infrastructure upgrades that many high-performance AI accelerators require.

Strategic Significance for Intel

For Intel, the partnership represents more than just another product announcement. As Kevork Kechichian, Executive Vice President and General Manager of the Data Center Group at Intel Corporation, noted: "The data center software ecosystem is built on x86, and it runs on Xeon—providing a mature, proven foundation that developers, enterprises, and cloud providers rely on at scale."

This statement underscores Intel's strategy of leveraging its entrenched position in the x86 ecosystem while expanding into AI-specific workloads. By positioning Xeon 6 as the central orchestrator and agentic workload processor, Intel ensures its processors remain essential even in highly specialized AI deployments.

The Broader AI Inference Landscape

The announcement comes amid growing recognition that AI inference is a very large and still-expanding market opportunity. While training large models captures headlines, inference (the actual running of AI models to generate responses) represents the bulk of AI computing demand and operational costs.

Nvidia has dominated both training and inference markets, but the heterogeneous approach pursued by Intel and SambaNova suggests the inference market may fragment as different hardware types prove optimal for different stages of the inference pipeline. This fragmentation could provide opportunities for specialized players like SambaNova while keeping Intel relevant through its Xeon processors' role in system orchestration and agentic workloads.

The platform's focus on coding agents and similar agentic workloads also reflects where the industry sees the next wave of AI demand. As enterprises move beyond simple chat interfaces to AI systems that can autonomously execute tasks, the computational requirements become more complex, potentially favoring architectures that can efficiently distribute these workloads across specialized hardware.

For enterprises and cloud operators, the heterogeneous inference platform offers a path to building sophisticated AI capabilities without being locked into a single vendor's ecosystem. The drop-in compatibility with existing data center infrastructure reduces barriers to adoption, while the specialized hardware components promise performance advantages for demanding workloads.

The partnership between Intel and SambaNova represents a calculated move to challenge Nvidia's dominance by offering a more flexible, potentially more cost-effective alternative for AI inference. Whether this heterogeneous approach can capture significant market share remains to be seen, but it certainly adds another dimension to the competitive landscape in AI infrastructure.
