Andrej Karpathy introduces autoresearch, a framework in which AI agents autonomously run LLM training experiments, editing the training code, testing each change, and keeping only what improves results. The approach could change how AI research is conducted.

AI Agents Take the Helm: Karpathy's Autoresearch Signals New Era in Machine Learning Development

The landscape of AI research may be on the cusp of another transformation. Andrej Karpathy's latest project, autoresearch, proposes a paradigm shift in which AI agents conduct LLM training experiments autonomously. The concept is both simple and profound: give an AI agent control over a small but real LLM training setup and let it modify the code, train for brief intervals, evaluate the results, and iterate overnight without human intervention.

The Autonomy Equation

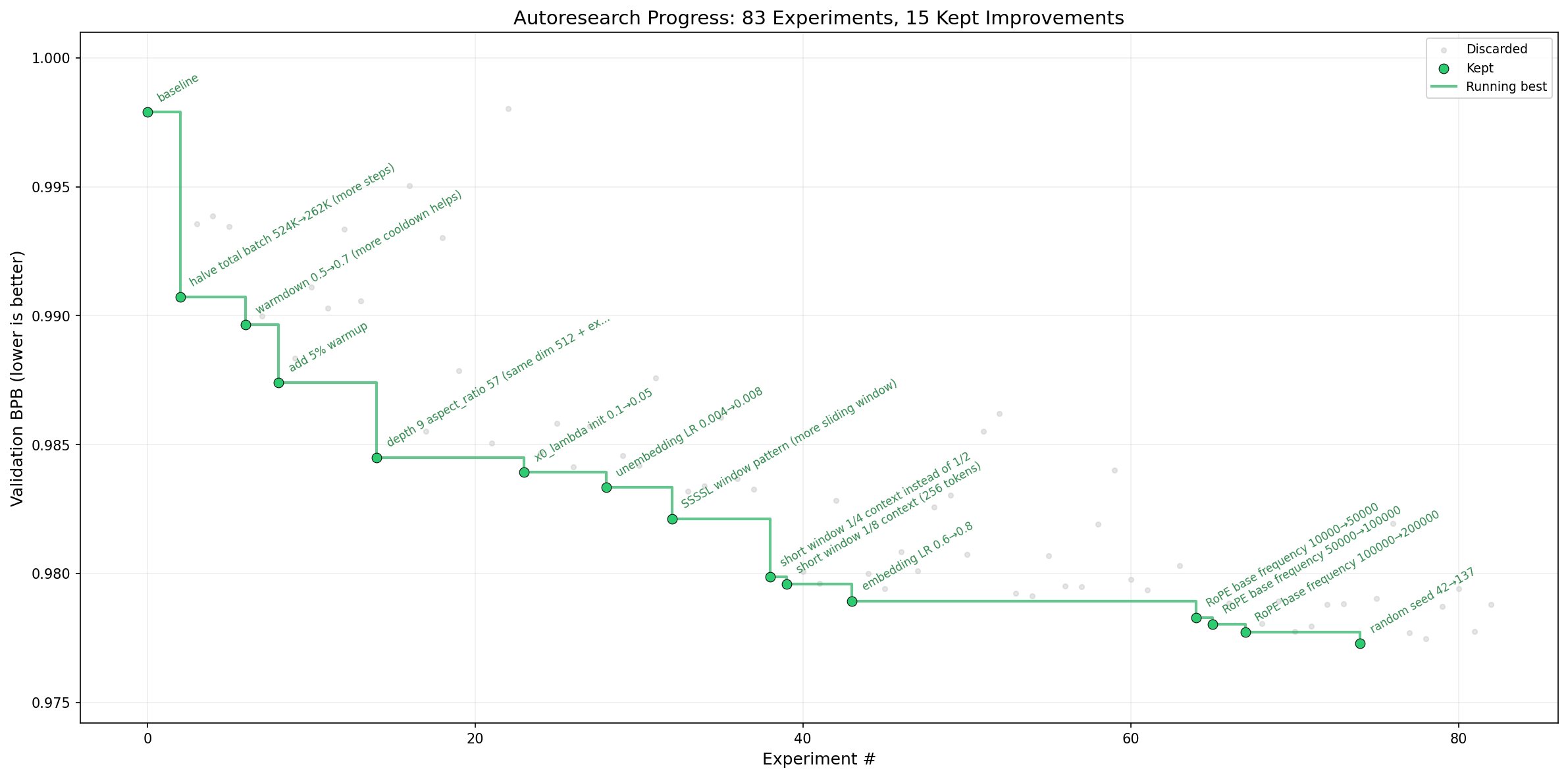

At its core, autoresearch challenges traditional research methodology by handing the experiment loop itself to AI agents, with every run limited to a fixed 5-minute training budget. Progress is measured in validation bits per byte (val_bpb), a metric that does not depend on the model's vocabulary size, so experiments that change the architecture or tokenizer can still be compared fairly.
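To make the metric concrete, here is a minimal sketch of how bits per byte is typically computed, assuming the validation loss is summed in nats over every predicted token and the byte count comes from the UTF-8 encoding of the same text. The function names `bits_per_byte` and `val_bpb` are hypothetical and are not taken from prepare.py.

```python
import math


def bits_per_byte(total_nll_nats: float, total_bytes: int) -> float:
    """Convert summed cross-entropy (natural log) into bits per byte.

    Normalizing by raw UTF-8 bytes rather than by token count means a model
    with a larger vocabulary (fewer, longer tokens) is not rewarded or
    penalized just for how it splits the text.
    """
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    # nats -> bits is a division by ln(2); then average over bytes.
    return total_nll_nats / (math.log(2) * total_bytes)


def val_bpb(token_losses: list[float], texts: list[str]) -> float:
    # token_losses: per-token negative log-likelihoods (in nats) on the validation set.
    # texts: the validation text those tokens encode (HYPOTHETICAL input format).
    total_nll = sum(token_losses)
    total_bytes = sum(len(t.encode("utf-8")) for t in texts)
    return bits_per_byte(total_nll, total_bytes)


# 8 tokens, each costing exactly 1 bit (ln 2 nats), over 8 bytes of text: about 1.0 bpb.
print(val_bpb([math.log(2)] * 8, ["abcdefgh"]))
```

Because the denominator is bytes, two models that tokenize the same validation text differently are still scored on the same scale.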

"The idea: give an AI agent a small but real LLM training setup and let it experiment autonomously overnight," explains Karpathy in the project's README. "It modifies the code, trains for 5 minutes, checks if the result improved, keeps or discards, and repeats. You wake up in the morning to a log of experiments and (hopefully) a better model."
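The modify, train, check, keep-or-discard cycle is essentially greedy hill climbing on val_bpb. The sketch below illustrates only that control flow. `run_training` and `propose_change` are hypothetical placeholders (a toy loss function and a random hyperparameter tweak) standing in for a real 5-minute run of train.py and an AI agent's code edits.

```python
import random


def run_training(config: dict) -> float:
    # HYPOTHETICAL stand-in for a 5-minute run of train.py: a toy loss surface
    # with its minimum at lr=0.003, depth=8. Lower is better, as with val_bpb.
    return 1.0 + 1e4 * (config["lr"] - 0.003) ** 2 + 0.01 * (config["depth"] - 8) ** 2


def propose_change(config: dict, rng: random.Random) -> dict:
    # HYPOTHETICAL "agent edit": perturb one hyperparameter of a copy of the config.
    candidate = dict(config)
    key = rng.choice(sorted(candidate))
    if key == "lr":
        candidate["lr"] *= rng.choice([0.5, 0.8, 1.25, 2.0])
    else:
        candidate["depth"] = max(1, candidate["depth"] + rng.choice([-2, -1, 1, 2]))
    return candidate


def research_loop(initial: dict, n_experiments: int, seed: int = 0):
    """Greedy keep-or-discard loop: try a change, keep it only if the score improves."""
    rng = random.Random(seed)
    best, best_score = dict(initial), run_training(initial)
    log = []  # (experiment index, config tried, score, kept?)
    for i in range(n_experiments):
        candidate = propose_change(best, rng)
        score = run_training(candidate)
        kept = score < best_score  # strict improvement only; ties are discarded
        if kept:
            best, best_score = candidate, score
        log.append((i, candidate, score, kept))
    return best, best_score, log


best, best_score, log = research_loop({"lr": 0.01, "depth": 4}, n_experiments=100)
print(best, round(best_score, 4), sum(kept for *_, kept in log), "changes kept")
```

The returned `log` plays the role of the "log of experiments" you wake up to: every attempt is recorded, but only improvements change the baseline.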

The implementation centers on three key files:

- prepare.py — Contains fixed constants, data preparation, and runtime utilities

- train.py — The single file the AI agent modifies, containing the full GPT model, optimizer, and training loop

- program.md — Instructions for the AI agent, which humans can iterate on

Research Organization as Code

What makes autoresearch particularly intriguing is its approach to research organization. Rather than directly modifying Python files, users program the AI agents through Markdown files that set up the autonomous research framework. This creates a meta-layer where the research process itself becomes a programmable system.

"The training code here is a simplified single-GPU implementation of nanochat," Karpathy notes. "The core idea is that you're not touching any of the Python files like you normally would as a researcher. Instead, you are programming the program.md Markdown files that provide context to the AI agents and set up your autonomous research org."

This approach allows for the creation of increasingly sophisticated research organizations. As the project evolves, researchers could develop "research org code" that achieves faster progress, coordinate multiple agents, or implement complex experimentation strategies—all through these seemingly simple instruction files.

Practical Implementation and Accessibility

The project is designed with practicality in mind, requiring only a single NVIDIA GPU (tested on an H100), Python 3.10+, and the uv package manager. Because each run trains for 5 minutes, the system completes roughly 12 experiments per hour and about 100 over a typical night's sleep, a rapid iteration cycle that could accelerate discovery.
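The throughput figures follow from simple arithmetic. This sketch assumes an 8-hour night and treats per-run overhead (startup, compilation, evaluation, which the 5-minute budget excludes) as a hypothetical parameter; real overhead will push the count somewhat below the ideal.

```python
def experiments_in_window(window_hours: float, train_minutes: float = 5.0,
                          overhead_minutes: float = 0.0) -> int:
    # Each experiment costs its fixed training budget plus any overhead
    # outside that budget (HYPOTHETICAL parameter; the real value varies).
    per_experiment = train_minutes + overhead_minutes
    return int(window_hours * 60 // per_experiment)


print(experiments_in_window(1))                        # 12
print(experiments_in_window(8))                        # 96
print(experiments_in_window(8, overhead_minutes=1.0))  # 80
```

An 8-hour night yields 96 runs with zero overhead, which is where "roughly 100" comes from.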

"By design, training runs for a fixed 5-minute time budget (wall clock, excluding startup/compilation), regardless of the details of your compute," Karpathy explains. "The metric is val_bpb (validation bits per byte) — lower is better, and vocab-size-independent so architectural changes are fairly compared."
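A minimal sketch of a fixed wall-clock budget, assuming the training loop can be expressed as a repeated step function. `train_with_time_budget`, `step_fn`, and `setup_fn` are hypothetical names, not the actual structure of train.py.

```python
import time


def train_with_time_budget(step_fn, budget_seconds: float, setup_fn=None) -> int:
    """Run training steps until a wall-clock budget is used up.

    setup_fn (e.g. model construction or a compilation warm-up) runs before
    the clock starts, so it does not count against the budget. The budget is
    checked before each step, so the final step may run slightly past it.
    Returns the number of steps completed.
    """
    if setup_fn is not None:
        setup_fn()
    start = time.monotonic()  # monotonic clock: unaffected by system clock changes
    steps = 0
    while time.monotonic() - start < budget_seconds:
        step_fn()
        steps += 1
    return steps


# Toy usage: each "step" sleeps 10 ms; the 0.5 s setup is excluded from the 0.2 s budget.
steps = train_with_time_budget(lambda: time.sleep(0.01), budget_seconds=0.2,
                               setup_fn=lambda: time.sleep(0.5))
print(steps, "steps")
```

Under this design a faster GPU simply completes more steps within the same budget, so experiments are compared on equal time rather than equal step counts.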

The project's self-contained nature minimizes dependencies, requiring only PyTorch and a few small packages. This design choice reduces complexity while maintaining the flexibility needed for autonomous experimentation.

Community Response and Early Adoption

Since its release, autoresearch has sparked considerable discussion within the AI research community. The project is a natural extension of Karpathy's earlier efforts to make AI more accessible and autonomous, building on his work at OpenAI and Tesla and on his educational projects.

"This is exactly the direction research should be heading," commented one AI researcher on social media. "Automating the experimentation process allows us to explore a much broader design space than human researchers ever could."

The project has already seen notable forks, including miolini/autoresearch-macos, indicating community interest in extending it to other platforms. Karpathy, however, notes that the current implementation targets a single NVIDIA GPU and that broader platform support could bloat the codebase.

Critical Perspectives and Limitations

Despite the enthusiasm, some researchers have raised concerns about the approach. The fixed 5-minute training budget enables rapid iteration but limits the depth of each experiment: some architectural changes or training dynamics may only show their benefits after much longer training runs.

"The time budget constraint is both a strength and a weakness," observed Dr. Elena Rodriguez, a machine learning researcher not affiliated with the project. "It enables quick comparisons but might miss important long-term dynamics that only emerge after extended training."

Others question whether the current approach can truly capture the nuances of research intuition that human scientists bring to the table. The system operates on predefined metrics and objectives, potentially overlooking serendipitous discoveries that arise from human creativity and interdisciplinary thinking.

"This automates what we can measure, but doesn't necessarily advance what we can imagine," commented another researcher. "The most groundbreaking research often comes from connecting seemingly unrelated concepts in ways that automated systems might not discover."

The Path Forward

Karpathy presents autoresearch as a demonstration rather than a fully supported product, acknowledging that he's "not 100% sure that I want to take this on personally right now." The project's GitHub page invites community contributions, suggesting that its evolution may depend on collective effort rather than centralized development.

The potential implications of successful autonomous research systems are profound. If AI agents can effectively conduct and accelerate research, we might see exponential improvements in AI capabilities, with each generation of systems capable of developing more advanced successors. This recursive improvement cycle could dramatically accelerate the timeline for AI development.

"The default program.md in this repo is intentionally kept as a bare bones baseline, though it's obvious how one would iterate on it over time to find the 'research org code' that achieves the fastest research progress, how you'd add more agents to the mix, etc.," Karpathy suggests, pointing toward the project's evolutionary potential.

As the field continues to evolve, autoresearch represents both a practical tool and a thought experiment about the future of AI research. Whether it becomes a widely adopted research methodology or serves as an inspiration for other approaches, it highlights a growing trend toward greater autonomy in AI systems—one that may ultimately redefine our relationship with the technology we're creating.
