The second edition of Martin Kleppmann's influential book on distributed systems is now available, reflecting how the cloud has transformed data-intensive application design. We explore the key updates and insights from the author who bridges industry and academia.

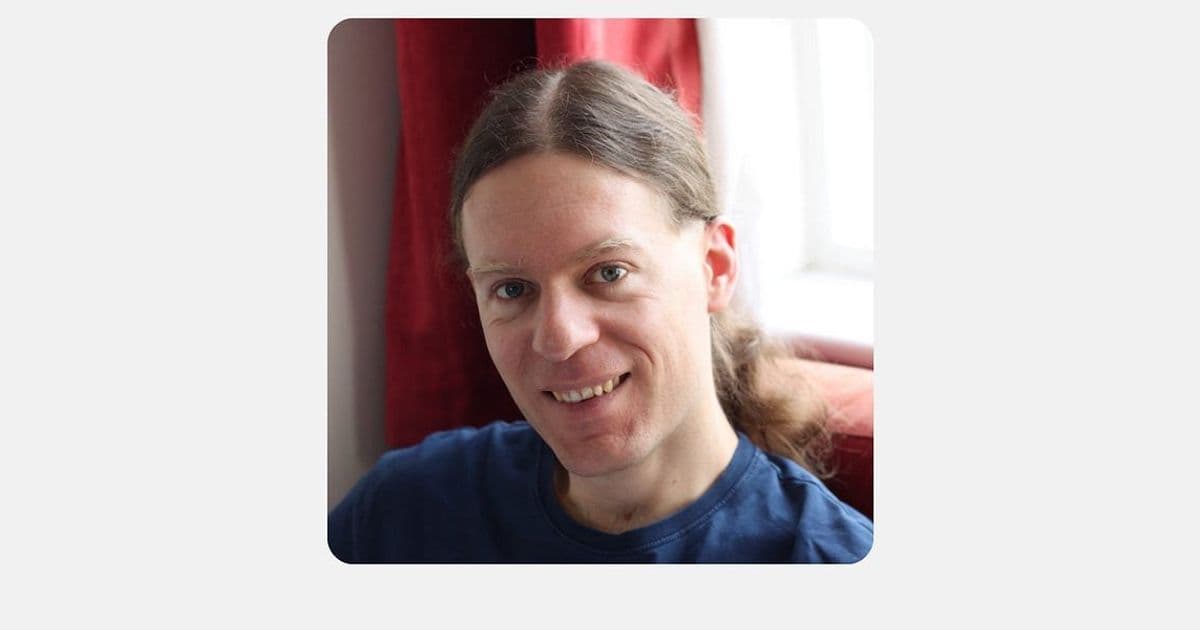

Martin Kleppmann, researcher and author of the seminal book Designing Data-Intensive Applications, has just released a second, heavily updated edition of this work that has become essential reading for software engineers working with distributed systems. The book, first published in 2017, has been updated to reflect how the cloud has fundamentally changed what it means to build and scale data-intensive applications.

The updated edition comes from an author uniquely positioned to understand both industry and academic perspectives. Kleppmann built his career in tech startups, including Rapportive (which was sold to LinkedIn), before transitioning to academia. This dual perspective informs his approach to system design, emphasizing practical understanding of tradeoffs rather than prescribing "best practices." As he explains, "There is no 'best practice' in deciding whether to go multi-region or multi-cloud. This decision is a tradeoff between risk and costs. It's a business decision to be made."

What's New in the Second Edition

The second edition reflects significant changes in the data systems landscape since the first edition:

- Reduced emphasis on MapReduce: While the first edition covered MapReduce extensively, the second edition has largely removed it. "Practically nobody uses it these days," Kleppmann notes, as technologies like Spark and Flink have replaced it. MapReduce is included only as a reference for understanding partitioned batch systems.

- Increased focus on replication for fault tolerance: The cloud has reduced the need for manual sharding for most teams, as machines grow larger and more workloads fit on a single machine. "Sharding across machines is increasingly a specialist concern; replication for fault tolerance, however, is still relevant at every scale," explains Kleppmann.

- Updated coverage of cloud scaling approaches: The book now reflects how the cloud has changed what it means to scale, including the challenges of scaling down efficiently when there's less traffic.

- New material on formal verification: With the rise of AI-assisted code generation, formal verification may become more practical and widely adopted.

- Expanded coverage of local-first software: Building applications that work well offline and give users true ownership of their data presents unique engineering challenges.

Why the Book Matters

Kleppmann wrote the book out of his own experience at Rapportive, where his team was "drowning" in design decisions without proper foundations. "We hit database performance problems and were searching in the dark, with no idea what to do," he recalls.

"This is not a book for people who build databases or even infrastructure," Kleppmann maintains, "but it's helpful for application developers to develop an intuition for making good design decisions and debugging performance issues they will encounter."

One of the book's strengths is how it helps engineers articulate tradeoffs in a way that enables business leaders to make informed decisions. These tradeoffs include reputational and societal risks, not just technical ones. As Kleppmann puts it, "An engineer's job is increasingly about surfacing risks — including societal ones — to decision-makers."

Key Insights for Practitioners

From the recent conversation and book, several insights stand out for practitioners:

Understanding system internals as a superpower: Application developers who understand how their systems work internally can make better decisions and debug issues more effectively.

Tradeoffs over best practices: System design decisions, especially regarding multi-region and multi-cloud deployments, should be based on specific business needs rather than following trends.

Scaling down is as challenging as scaling up: Building systems that can efficiently scale down when traffic decreases is an important but often overlooked problem. Solutions like serverless architectures are valuable for this purpose.

Distributed systems theory makes deliberately paranoid assumptions: The theory places no upper bound on network delays or clock differences, and it allows nodes to crash at any time. These extreme assumptions are intentional because reality occasionally hits the extremes.

AI may change formal verification: Kleppmann believes that the combination of LLMs generating large amounts of code and improving at writing formal proofs could make formal verification more practical and widely adopted.

Local-first software presents difficult challenges: Decentralized access control becomes complex without a central server to arbitrate. For example, a revoked user might make concurrent edits that different devices will disagree about.
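The revoked-user scenario can be made concrete with a small sketch. It is an illustration under stated assumptions, not a method from the book: the `Op` and `Device` classes, the user names, and the naive rule of checking permissions at arrival time are all hypothetical. Two devices receive the same two concurrent operations in different orders and end up with different documents.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Op:
    kind: str     # "edit" or "revoke" (hypothetical operation types)
    author: str   # user who issued the operation
    payload: str  # text to append for "edit", user to remove for "revoke"


@dataclass
class Device:
    writers: set = field(default_factory=lambda: {"alice", "bob"})
    doc: list = field(default_factory=list)

    def apply(self, op: Op) -> None:
        # Naive rule (an assumption of this sketch): check permissions
        # as this device sees them at the moment the op arrives.
        if op.author not in self.writers:
            return  # drop ops from users this device believes are revoked
        if op.kind == "edit":
            self.doc.append(op.payload)
        elif op.kind == "revoke":
            self.writers.discard(op.payload)


# Two concurrent operations: Alice revokes Bob while Bob is editing.
revoke_bob = Op("revoke", "alice", "bob")
bob_edit = Op("edit", "bob", "bob's paragraph")

device_a, device_b = Device(), Device()

# Device A happens to receive Bob's edit first, then the revocation.
for op in (bob_edit, revoke_bob):
    device_a.apply(op)

# Device B receives the same operations in the opposite order.
for op in (revoke_bob, bob_edit):
    device_b.apply(op)

print(device_a.doc)  # ["bob's paragraph"]
print(device_b.doc)  # []
```

Both devices agree that Bob is no longer a writer, yet they disagree on whether his edit belongs in the document. A central server would settle this by imposing a single order; without one, the devices need some other deterministic rule that every replica applies identically.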

Bridging Industry and Academia

Kleppmann has worked in both industry and academia and is critical of how the two fields often dismiss each other. "The tech industry calls academia 'theoretical' and misses useful research. Academia, in turn, often calls industry work just engineering and misses the interesting problems they solve," he observes.

His experience in both worlds has shaped his perspective. "The best PhD students I work with have a few years of real engineering experience," he notes. This practical understanding helps ground academic research in real-world problems.

For those interested in distributed systems, the second edition of Designing Data-Intensive Applications offers a comprehensive overview of the field, revised for the cloud era. The book is available through O'Reilly, and Kleppmann maintains a website with additional resources and his research papers.

The conversation also touches on Kleppmann's current research into using cryptography to improve transparency in supply chains without exposing sensitive data, and his work as an advisor to Bluesky, a distributed social network project.

For engineers working with data-intensive applications, the updated edition provides both foundational knowledge and practical insights for navigating the complex tradeoffs of modern system design.
