Meta unveils four new MTIA chips with 25x compute increase and 4.5x HBM bandwidth growth, aiming to disrupt Nvidia's dominance in AI inference through rapid six-month development cycles.

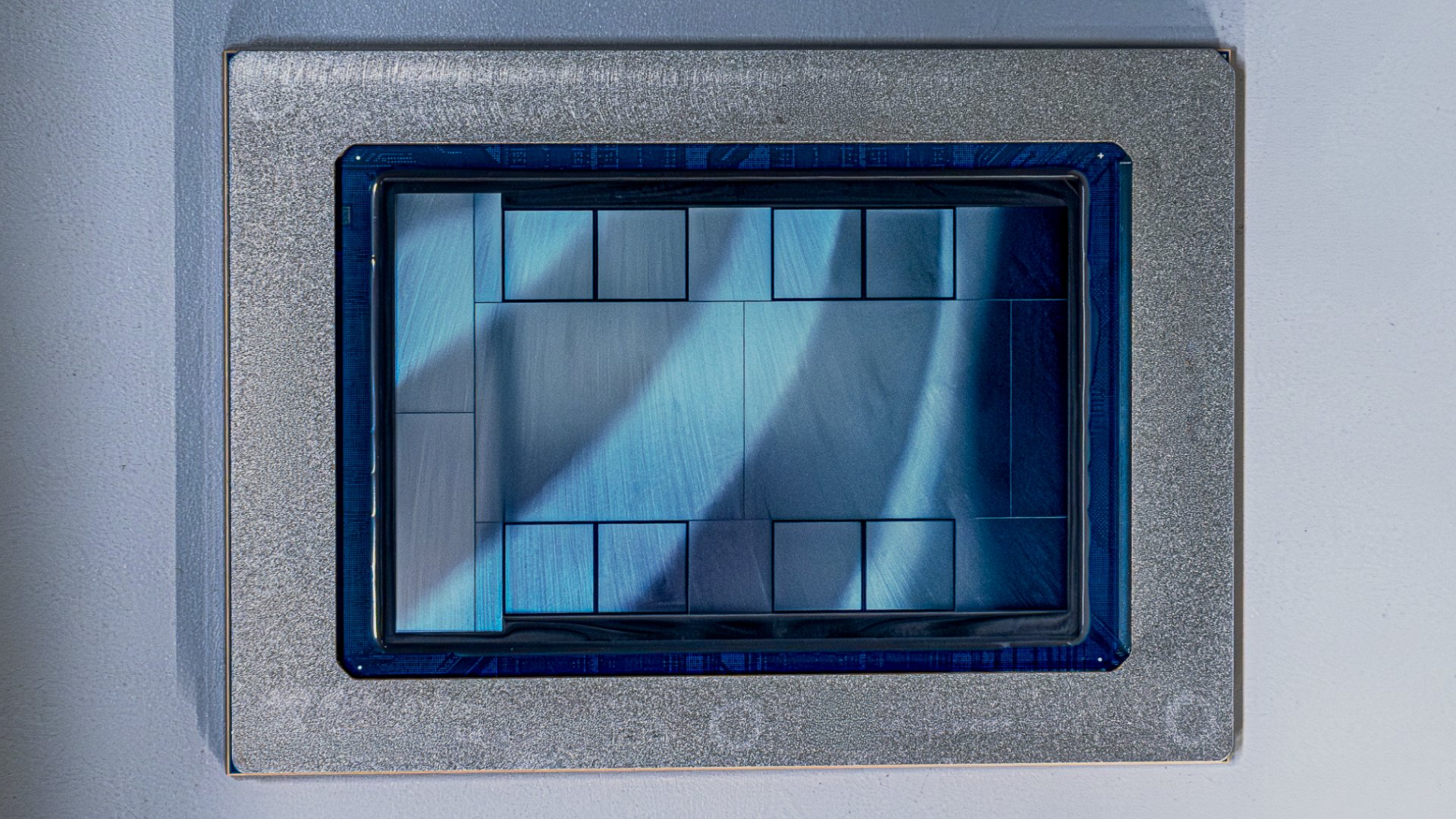

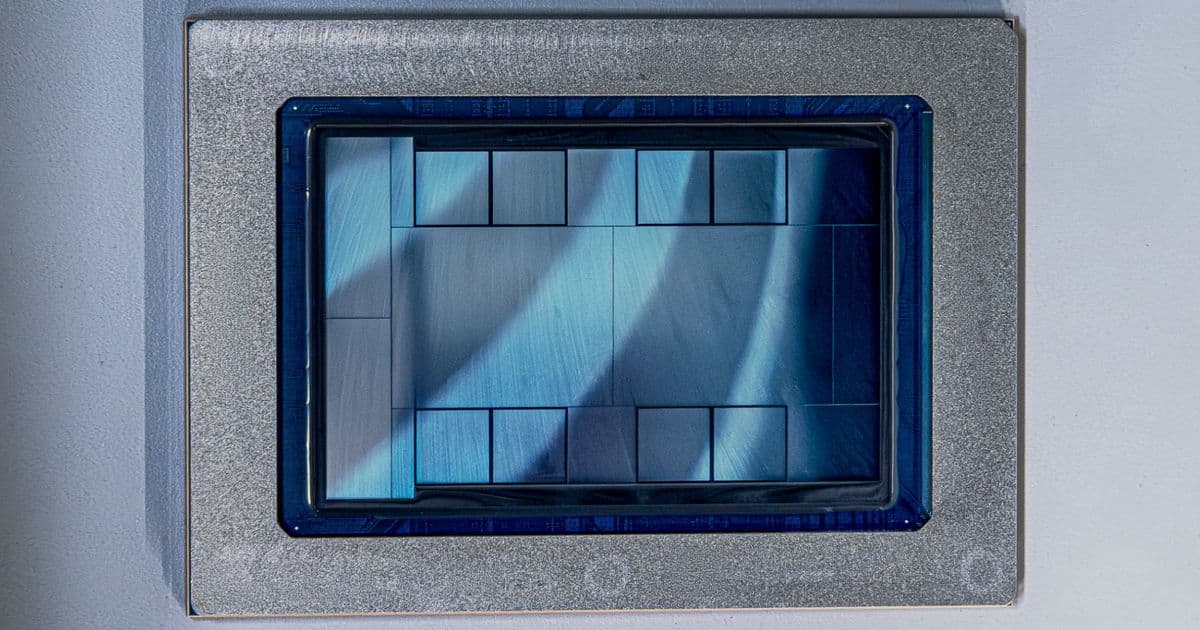

Meta today announced four successive generations of its in-house Meta Training and Inference Accelerator (MTIA) chips, all developed in partnership with Broadcom and either already in production or scheduled for deployment within the next two years. The company revealed MTIA 300, 400, 450, and 500 chips, targeting everything from ranking and recommendations training to high-performance AI inference workloads.

The four-chip roadmap represents Meta's aggressive strategy to reduce dependence on Nvidia's GPUs while maintaining control over its AI infrastructure. According to Meta's press release, the company has prioritized "rapid, iterative development," an inference-first focus, and frictionless adoption by building natively on industry standards.

Technical Evolution Across Four Generations

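Meta's technical blog reveals substantial performance improvements across the MTIA family. From MTIA 300 to MTIA 500, HBM bandwidth increases 4.5 times while compute FLOPs increase 25 times. The 25x figure appears to compare MTIA 500's MX4 peak (30 PFLOPS) with MTIA 300's FP8/MX8 peak (1.2 PFLOPS), since MTIA 300 lists no MX4 number; at FP8/MX8 precision alone, the specification table shows roughly an 8x gain. Delivered over roughly two years of releases, that pace far exceeds the gains of a conventional one-to-two-year chip cycle.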
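The multipliers can be checked against the specification table later in this article. A small Python sketch, assuming the 25x headline pairs MTIA 500's MX4 peak with MTIA 300's FP8/MX8 peak (the only pairing in the table that yields exactly 25x):

```python
# Peak figures copied from the specification table (PFLOPS and TB/s).
# MTIA 300 lists no MX4 figure, hence None.
SPECS = {
    "MTIA 300": {"hbm_tbps": 6.1, "fp8_pflops": 1.2, "mx4_pflops": None},
    "MTIA 500": {"hbm_tbps": 27.6, "fp8_pflops": 10.0, "mx4_pflops": 30.0},
}


def growth(new: float, old: float) -> float:
    """Return the new/old multiplier rounded to two decimals."""
    return round(new / old, 2)


old, new = SPECS["MTIA 300"], SPECS["MTIA 500"]

bandwidth_growth = growth(new["hbm_tbps"], old["hbm_tbps"])
# Assumed pairing behind the headline 25x: MX4 on MTIA 500 vs FP8/MX8 on MTIA 300.
headline_compute_growth = growth(new["mx4_pflops"], old["fp8_pflops"])
# Like-for-like comparison at FP8/MX8 precision.
fp8_compute_growth = growth(new["fp8_pflops"], old["fp8_pflops"])

print(f"HBM bandwidth:      {bandwidth_growth}x")
print(f"MX4 vs FP8 compute: {headline_compute_growth}x")
print(f"FP8 vs FP8 compute: {fp8_compute_growth}x")
```

Run as-is, this prints 4.52x, 25.0x and 8.33x, which shows how much of the headline compute gain comes from the move to a lower-precision format rather than from the silicon alone.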

MTIA 300 is already in production for ranking and recommendations training, while MTIA 400 is currently in lab testing ahead of data center deployment. The more advanced MTIA 450 and 500 chips are targeted at AI inference workloads, with mass deployment scheduled for early 2027 and later in 2027, respectively.

HBM Bandwidth: The Critical Bottleneck

Meta's focus on HBM bandwidth addresses a fundamental challenge in transformer inference. The company notes that HBM bandwidth, not raw FLOPs, is the main bottleneck during the decode phase of transformer inference. Each decode step produces one token per sequence but must stream the model weights and the KV cache from HBM, performing only a few arithmetic operations per byte loaded, so memory bandwidth rather than compute sets the token rate. This insight drives Meta's architectural decisions: mainstream GPUs are architected to maximize FLOPs for large-scale pre-training, carrying cost and power overhead that Meta considers unnecessary for inference workloads.
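A roofline-style estimate makes the bandwidth argument concrete. The sketch below uses MTIA 450's FP8/MX8 and HBM peaks from the specification table; the 70B-parameter dense model with FP8 weights is a hypothetical example, not a Meta workload, and the bound ignores KV-cache traffic:

```python
# Roofline-style estimate of why decode is bandwidth-bound.
PEAK_FP8_FLOPS = 7e15       # MTIA 450: 7 PFLOPS FP8/MX8 (spec table)
HBM_BYTES_PER_S = 18.4e12   # MTIA 450: 18.4 TB/s (spec table)

PARAMS = 70e9               # hypothetical dense model, 70B parameters
BYTES_PER_PARAM = 1         # FP8 weights
FLOPS_PER_PARAM = 2         # one multiply + one add per weight per token


def ridge_point(peak_flops: float, bandwidth: float) -> float:
    """FLOPs per byte a kernel needs before it becomes compute-bound."""
    return peak_flops / bandwidth


def min_decode_step_seconds(weight_bytes: float, bandwidth: float) -> float:
    """Lower bound per decode step: every weight byte is read from HBM once.

    KV-cache reads are ignored, so real step times are longer.
    """
    return weight_bytes / bandwidth


ridge = ridge_point(PEAK_FP8_FLOPS, HBM_BYTES_PER_S)
decode_intensity = FLOPS_PER_PARAM / BYTES_PER_PARAM  # at batch size 1
step_s = min_decode_step_seconds(PARAMS * BYTES_PER_PARAM, HBM_BYTES_PER_S)

print(f"ridge point:      {ridge:.0f} FLOPs/byte")
print(f"decode intensity: {decode_intensity:.0f} FLOPs/byte")
print(f"bandwidth-bound:  {decode_intensity < ridge}")
print(f"min step time:    {step_s * 1e3:.2f} ms (~{1 / step_s:.0f} tokens/s)")
```

At batch size 1 the decode intensity (about 2 FLOPs/byte) sits far below the ridge point (about 380 FLOPs/byte), so the compute units mostly wait on memory. Batching raises the intensity because one weight read serves several sequences, but KV-cache reads are per sequence and do not amortize the same way.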

MTIA 450 doubles the HBM bandwidth of MTIA 400, with Meta describing it as "much higher than that of existing leading commercial products," presumably a reference to Nvidia data center GPUs such as the H100 and H200. MTIA 500 then adds another 50% HBM bandwidth on top of MTIA 450, along with up to 80% more HBM capacity.
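These generation-over-generation percentages can be checked against the specification table. In the Python sketch below, the "up to 80%" capacity figure corresponds to the top of MTIA 500's 384-512 GB range, which works out to about 78% over MTIA 450's 288 GB:

```python
# HBM figures from the spec table: bandwidth in TB/s, capacity in GB.
# MTIA 500's capacity is listed as a 384-512 GB range.
HBM_BW_TBPS = {"MTIA 400": 9.2, "MTIA 450": 18.4, "MTIA 500": 27.6}
HBM_CAP_GB = {"MTIA 450": 288, "MTIA 500": (384, 512)}


def pct_increase(new: float, old: float) -> float:
    """Percentage increase from old to new, rounded to one decimal."""
    return round((new / old - 1) * 100, 1)


bw_450 = pct_increase(HBM_BW_TBPS["MTIA 450"], HBM_BW_TBPS["MTIA 400"])
bw_500 = pct_increase(HBM_BW_TBPS["MTIA 500"], HBM_BW_TBPS["MTIA 450"])
cap_low, cap_high = (
    pct_increase(c, HBM_CAP_GB["MTIA 450"]) for c in HBM_CAP_GB["MTIA 500"]
)

print(f"MTIA 450 vs 400 bandwidth: +{bw_450}%")
print(f"MTIA 500 vs 450 bandwidth: +{bw_500}%")
print(f"MTIA 500 vs 450 capacity:  +{cap_low}% to +{cap_high}%")
```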

Performance Specifications


| Spec | MTIA 300 | MTIA 400 | MTIA 450 | MTIA 500 |
|---|---|---|---|---|
| Workload Focus | R&R Training | General AI Inference | AI Inference | AI Inference |
| Module TDP | 800 W | 1,200 W | 1,400 W | 1,700 W |
| HBM Bandwidth | 6.1 TB/s | 9.2 TB/s | 18.4 TB/s | 27.6 TB/s |
| HBM Capacity | 216 GB | 288 GB | 288 GB | 384-512 GB |
| MX4 Performance | - | 12 PFLOPS | 21 PFLOPS | 30 PFLOPS |
| FP8/MX8 Performance | 1.2 PFLOPS | 6 PFLOPS | 7 PFLOPS | 10 PFLOPS |
| BF16 Performance | 0.6 PFLOPS | 3 PFLOPS | 3.5 PFLOPS | 5 PFLOPS |

Architectural Innovations

The MTIA family incorporates several architectural innovations specifically designed for inference workloads. Hardware acceleration for FlashAttention and mixture-of-experts feed-forward network computation represents a targeted approach to the most common inference patterns in Meta's applications.
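FlashAttention's central trick is to compute attention over tiles of keys and values with an "online" softmax, so the full score matrix never has to be materialized in memory. The pure-Python sketch below shows that idea for a single query; it illustrates the published algorithm, not how MTIA's hardware implements it, and the example inputs are arbitrary:

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def _scores(q: Vector, keys: Sequence[Vector]) -> List[float]:
    """Scaled dot-product scores q.k / sqrt(d) for each key."""
    scale = 1.0 / math.sqrt(len(q))
    return [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in keys]


def reference_attention(q: Vector, keys: Sequence[Vector], values: Sequence[Vector]) -> List[float]:
    """Standard softmax attention for one query, materializing all scores."""
    s = _scores(q, keys)
    m = max(s)
    w = [math.exp(x - m) for x in s]
    total = sum(w)
    dim = len(values[0])
    return [sum(wi * v[d] for wi, v in zip(w, values)) / total for d in range(dim)]


def online_softmax_attention(
    q: Vector, keys: Sequence[Vector], values: Sequence[Vector], block: int = 2
) -> List[float]:
    """Same result, consuming keys/values one block at a time.

    Only a running max (m), running denominator (l) and unnormalized
    output (acc) are kept between blocks.
    """
    dim = len(values[0])
    m, l = -math.inf, 0.0
    acc = [0.0] * dim
    for start in range(0, len(keys), block):
        s = _scores(q, keys[start:start + block])
        m_new = max(m, max(s))
        # Rescale earlier partial sums to the new running max
        # (exp(-inf) == 0.0, so this is a no-op on the first block).
        c = math.exp(m - m_new)
        l *= c
        acc = [a * c for a in acc]
        for x, v in zip(s, values[start:start + block]):
            p = math.exp(x - m_new)
            l += p
            acc = [a + p * vd for a, vd in zip(acc, v)]
        m = m_new
    return [a / l for a in acc]


q = [0.5, -1.0, 2.0]
keys = [[1.0, 0.0, 0.5], [0.2, 0.3, -1.0], [2.0, 1.0, 1.0], [-0.5, 0.5, 3.0], [0.0, -2.0, 0.1]]
values = [[1.0, 2.0], [0.0, -1.0], [3.0, 0.5], [-2.0, 4.0], [0.5, 0.5]]

ref = reference_attention(q, keys, values)
tiled = online_softmax_attention(q, keys, values, block=2)
print(ref)
print(tiled)
```

Both functions return the same vector up to floating-point rounding; the tiled version simply never holds more than one block of scores at a time, which is what makes the computation a good fit for on-chip memory.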

Meta has also co-designed custom low-precision data types for inference, with MTIA 450 supporting the MX4 format. Its MX4 throughput is six times its FP16/BF16 throughput (21 vs. 3.5 PFLOPS in the table), and mixed low-precision computation avoids the software overhead of converting between data types.
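The article does not spell out MX4's encoding. Assuming it resembles the MXFP4 format from the OCP Microscaling (MX) specification (blocks of 32 four-bit E2M1 values sharing one power-of-two scale), quantization can be sketched as below. Meta's co-designed variant may differ in block size, scale encoding or rounding, and the sample data is arbitrary:

```python
import math
from typing import List, Tuple

# Assumption: MXFP4-style layout, 32 FP4 (E2M1) elements per shared scale.
BLOCK_SIZE = 32
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
E2M1_MAX = 6.0
E2M1_EMAX = 2  # exponent of the largest normal value: 6.0 = 1.5 * 2**2


def quantize_block(block: List[float]) -> Tuple[int, List[float]]:
    """Return (shared exponent, FP4 element values) for one block."""
    max_abs = max(abs(x) for x in block)
    if max_abs == 0.0:
        return 0, [0.0] * len(block)
    shared_exp = math.floor(math.log2(max_abs)) - E2M1_EMAX
    scale = 2.0 ** shared_exp
    elements = []
    for x in block:
        mag = min(abs(x) / scale, E2M1_MAX)  # saturate instead of overflowing
        # Nearest representable magnitude; ties go to the smaller value here
        # (the spec's round-half-to-even is omitted for brevity).
        nearest = min(E2M1_MAGNITUDES, key=lambda v: abs(v - mag))
        elements.append(math.copysign(nearest, x))
    return shared_exp, elements


def dequantize_block(shared_exp: int, elements: List[float]) -> List[float]:
    """Multiply each FP4 element by the block's shared power-of-two scale."""
    scale = 2.0 ** shared_exp
    return [e * scale for e in elements]


def mx4_roundtrip(values: List[float]) -> List[float]:
    """Quantize and dequantize a vector block by block."""
    out: List[float] = []
    for start in range(0, len(values), BLOCK_SIZE):
        exp, elems = quantize_block(values[start:start + BLOCK_SIZE])
        out.extend(dequantize_block(exp, elems))
    return out


data = [0.1 * i for i in range(-16, 16)]  # one 32-element block
restored = mx4_roundtrip(data)
worst = max(abs(a - b) for a, b in zip(data, restored))
print(f"max abs error: {worst:.3f}")
```

Because the scale is shared per block, a single large value reduces the resolution available to its neighbours, which is one reason such formats keep blocks small.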

Six-Month Development Cadence

Perhaps most striking is Meta's development timeline. The company claims a roughly six-month chip cadence, significantly faster than the industry's typical one-to-two-year cycle. This rapid iteration is enabled by a modular design approach in which MTIA 400, 450, and 500 all use the same chassis, rack, and network infrastructure.

This modularity means each new chip generation drops into the existing physical footprint for easy interchange, allowing Meta to continuously upgrade its infrastructure without wholesale replacement of supporting systems.

Software Ecosystem Integration

Meta's software strategy emphasizes compatibility and ease of deployment. The software stack runs natively on PyTorch, vLLM, and Triton, with support for torch.compile and torch.export. This allows production models to be deployed simultaneously on both GPUs and MTIA without MTIA-specific rewrites.

This approach addresses one of the primary barriers to custom accelerator adoption: the need to rewrite existing models and pipelines. By maintaining compatibility with standard frameworks, Meta can gradually transition workloads to MTIA without disrupting its development workflows.

Market Implications and Strategic Context

Meta says it has already deployed hundreds of thousands of MTIA chips across its apps for inference on organic content and ads. This existing deployment provides valuable real-world data for optimizing subsequent generations.

The announcement comes just two weeks after Meta disclosed a long-term, $100 billion AI infrastructure agreement with AMD, suggesting a broader effort to reduce dependence on Nvidia across different parts of Meta's AI stack while keeping MTIA at the core of inference workloads.

Silicon Partnership with Broadcom

The partnership with Broadcom is a strategic choice in how Meta builds its chips. Broadcom contributes custom-silicon design and implementation services, while the chips themselves are fabricated at a foundry such as TSMC. This lets Meta draw on Broadcom's custom ASIC expertise while maintaining control over the architecture.

This approach sits between designing chips entirely in-house and buying merchant silicon from providers such as Nvidia. The Broadcom partnership gives Meta an experienced partner for turning its own architecture into production silicon tailored to its specific requirements.

Competitive Positioning

Meta's MTIA strategy positions its chips as a direct alternative to Nvidia GPUs for inference in Meta's own data centers, where power efficiency and cost per inference matter more than raw training performance. By focusing on inference-specific optimizations, Meta aims to deliver better performance per watt and lower total cost of ownership than general-purpose GPUs.
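The source gives no GPU figures to compare against, but the table allows a rough look at how peak efficiency trends across MTIA generations. A Python sketch, assuming peak throughput divided by module TDP is a usable proxy (it ignores real utilization, host and cooling power, and so says nothing definitive about total cost of ownership):

```python
# Peak figures from the spec table: module TDP (W), FP8/MX8 (PFLOPS), HBM (TB/s).
SPECS = {
    "MTIA 300": (800, 1.2, 6.1),
    "MTIA 400": (1200, 6.0, 9.2),
    "MTIA 450": (1400, 7.0, 18.4),
    "MTIA 500": (1700, 10.0, 27.6),
}


def efficiency(tdp_w: float, fp8_pflops: float, hbm_tbps: float) -> tuple:
    """Peak FP8 TFLOPS per watt and peak HBM GB/s per watt at module TDP."""
    return fp8_pflops * 1000 / tdp_w, hbm_tbps * 1000 / tdp_w


results = {name: efficiency(*spec) for name, spec in SPECS.items()}
for name, (tflops_w, gbps_w) in results.items():
    print(f"{name}: {tflops_w:.2f} FP8 TFLOPS/W, {gbps_w:.1f} HBM GB/s per W")
```

On these peak numbers, FP8 throughput per watt is flat from MTIA 400 to MTIA 450 while HBM bandwidth per watt rises by roughly 70%, which fits Meta's stated emphasis on bandwidth for decode.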

The six-month cadence also allows Meta to respond more quickly to changing inference patterns and model architectures, potentially giving it an advantage over competitors with longer development cycles.

Future Outlook

With MTIA 450 and 500 scheduled for deployment in 2027, Meta is establishing a foundation for the next phase of AI infrastructure. The company's ability to maintain its rapid development cadence while scaling production will be critical to its success in reducing dependence on merchant silicon providers.

The MTIA roadmap represents a significant bet on inference becoming an increasingly important workload as AI models move from training to deployment at scale. If successful, Meta's approach could influence how other companies approach custom silicon for AI workloads, potentially reshaping the competitive landscape in the AI hardware market.

Meta's aggressive timeline and substantial performance improvements suggest the company is committed to this strategy for the long term, with implications that extend beyond its own infrastructure to the broader AI hardware ecosystem.
