Nvidia's GPU Technology Conference returns with major announcements expected around Groq integration, Rubin GPUs, Vera CPUs, and next-gen datacenter systems as the AI chipmaker addresses growing token generation demands.

Nvidia's GPU Technology Conference (GTC) kicks off next week in San Jose, and expectations are running high for what CEO Jensen Huang will unveil at what some call the "AI Burning Man." The annual event has become one of tech's most-watched conferences as Nvidia's datacenter business continues to dominate the AI hardware landscape.

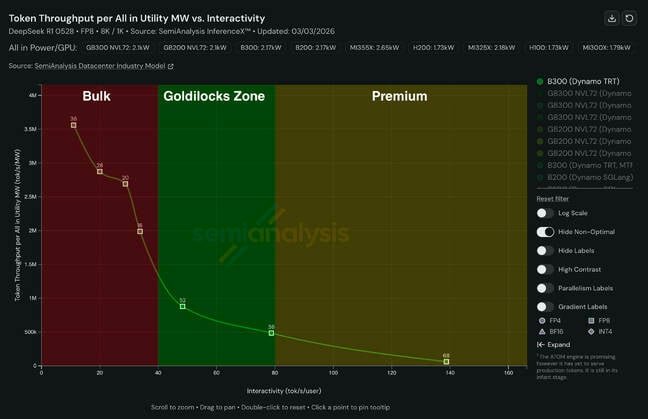

The timing is particularly interesting given Nvidia's December acquisition of Groq for $20 billion. Market analysis from SemiAnalysis shows Groq's dataflow architecture fills a critical gap in Nvidia's current portfolio. The firm's latest InferenceX benchmarks reveal three distinct performance categories: bulk token generation on the left of the chart, expensive low-latency tokens on the right, and the "goldilocks zone" in the middle.

While Nvidia's NVL72 rack systems scale well for lower per-user token generation rates, they become increasingly inefficient as user interactivity rises. By contrast, SRAM-heavy architectures like those from Groq and Cerebras excel in latency-sensitive scenarios, achieving generation rates of 500 to 1,000 tokens per second or more, far beyond what GPU-based architectures can deliver. This capability is precisely why Cerebras won OpenAI's business to power its Codex model earlier this year.

Until the Groq acquisition, Nvidia's challenge was matching Cerebras' performance. By combining GPU technology and CUDA software libraries with Groq's dataflow architecture, Nvidia can dramatically raise the Pareto curve, reducing cost per token while boosting output speeds. At GTC, expect Nvidia to announce limited support for Groq's existing architecture, arriving relatively quickly.
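For readers unfamiliar with the term, a Pareto curve here is the set of configurations that no other configuration beats on both interactivity and cost efficiency at once. The sketch below shows one way to compute it. The `Config` type, the field names, and every number in `SAMPLE` are hypothetical placeholders for illustration; they are not InferenceX results.

```python
from typing import NamedTuple


class Config(NamedTuple):
    name: str
    tokens_per_sec_per_user: float    # interactivity, higher is better
    tokens_per_sec_per_dollar: float  # cost efficiency, higher is better


def pareto_frontier(configs: list[Config]) -> list[Config]:
    """Return the configs not dominated on both axes, least interactive first."""
    # Walk from most to least interactive (ties broken by efficiency) and keep
    # a point only if it beats every more-interactive point on efficiency.
    ordered = sorted(
        configs,
        key=lambda c: (c.tokens_per_sec_per_user, c.tokens_per_sec_per_dollar),
        reverse=True,
    )
    frontier: list[Config] = []
    best_efficiency = float("-inf")
    for c in ordered:
        if c.tokens_per_sec_per_dollar > best_efficiency:
            frontier.append(c)
            best_efficiency = c.tokens_per_sec_per_dollar
    return frontier[::-1]


# Made-up illustrative points, NOT measured data.
SAMPLE = [
    Config("bulk", 50, 100),
    Config("middle", 200, 40),
    Config("low_latency", 800, 5),
    Config("dominated", 150, 30),  # slower AND less efficient than "middle"
]

frontier_names = [c.name for c in pareto_frontier(SAMPLE)]
print(frontier_names)
```

"Raising" the curve means adding hardware whose points land above and to the right of the existing frontier, so that older configurations become dominated.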

On the silicon front, Nvidia already revealed its Rubin GPUs at CES in January. These chips pack up to 288 GB of HBM4 memory with 22 TB/s of bandwidth and deliver 35 to 50 petaFLOPS of dense NVFP4 performance, a 5x uplift over Blackwell-generation parts. Rubin will be available in eight-way HGX platforms, the 72-module NVL72 rack system, and CPX variants for large-context and video processing workflows.
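One rough way to read the 22 TB/s figure is as a ceiling on single-stream generation speed: when a dense model serves one user at a time, each new token requires reading the model weights from memory once, so bandwidth divided by weight size bounds tokens per second. The sketch below is a back-of-the-envelope estimate only. The 70-billion-parameter model size is a hypothetical assumption, and the calculation ignores KV-cache traffic, compute limits, batching, and sharding across GPUs.

```python
def decode_upper_bound(bandwidth_bytes_per_sec: float, weight_bytes: float) -> float:
    """Bandwidth-bound ceiling on tokens/s for one user on one device."""
    return bandwidth_bytes_per_sec / weight_bytes


HBM4_BANDWIDTH = 22e12   # 22 TB/s, the Rubin figure quoted in the article
PARAMS = 70e9            # hypothetical dense model size (assumption)
BYTES_PER_PARAM = 0.5    # 4-bit weights, in the spirit of NVFP4

weight_bytes = PARAMS * BYTES_PER_PARAM
bound = decode_upper_bound(HBM4_BANDWIDTH, weight_bytes)
print(f"{bound:.0f} tokens/s upper bound")  # about 629 under these assumptions
```

Real systems land well below this ceiling, which is part of why architectures that keep weights in on-chip SRAM have an edge at high per-user speeds.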

With Rubin's thermal design power estimated at 1.8 kW or higher, liquid cooling is mandatory. Some buyers may resist this requirement, potentially benefiting AMD's air-cooled alternatives. However, Nvidia could counter with a single-die, air-cooled version featuring five or six HBM stacks instead of eight, still delivering a 2.5x performance uplift over Blackwell without liquid cooling requirements.

Nvidia's CPU ambitions will also take center stage with more details on the standalone Vera CPU. First teased at last year's GTC, Vera features 88 custom Arm cores with simultaneous multithreading and confidential computing features previously exclusive to x86 platforms. While initially packaged as part of the Vera-Rubin superchip, Nvidia will offer it as a standalone processor competing with Intel and AMD in mainstream applications. Meta is already evaluating Vera CPUs for its datacenters after deploying Grace at scale.

Looking further ahead, expect Huang to share details about Kyber racks and Feynman GPUs, slated for 2027 and 2028 respectively. The 600 kW Kyber crams 144 GPU sockets, each with four Rubin Ultra GPU dies, into a standard rack form factor. Nvidia revealed Kyber partly because datacenter operators were already struggling with the 120 kW NVL72 systems from the previous year. With a yearly release cadence, Nvidia must telegraph its next moves years in advance, and it will likely set new power and cooling targets exceeding a megawatt per rack for Feynman.
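To put those rack figures in perspective, the arithmetic can be worked through directly. The sketch below uses only numbers quoted in this article. Note that the per-socket values are whole-rack budgets divided evenly, so they include CPUs, switches, and power conversion overhead rather than GPU power alone.

```python
KYBER_RACK_KW = 600     # Kyber rack power
KYBER_SOCKETS = 144     # GPU sockets per Kyber rack
DIES_PER_SOCKET = 4     # Rubin Ultra dies per socket
NVL72_RACK_KW = 120     # NVL72 rack power
NVL72_MODULES = 72      # GPU modules per NVL72 rack

kyber_dies = KYBER_SOCKETS * DIES_PER_SOCKET          # 576 dies per rack
kyber_kw_per_socket = KYBER_RACK_KW / KYBER_SOCKETS   # about 4.17 kW
nvl72_kw_per_module = NVL72_RACK_KW / NVL72_MODULES   # about 1.67 kW
rack_power_ratio = KYBER_RACK_KW / NVL72_RACK_KW      # 5x the rack power

print(f"Kyber: {kyber_dies} dies, {kyber_kw_per_socket:.2f} kW per socket")
print(f"NVL72: {nvl72_kw_per_module:.2f} kW per module")
print(f"Rack power ratio: {rack_power_ratio:.1f}x")
```

In other words, Kyber asks operators to deliver five times the power to the same floor footprint, and roughly 2.5 times as much per socket.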

On the consumer front, rumors persist about an Arm-based system-on-chip for PCs. Nvidia's DGX Spark and GB10 partner systems already use such a chip in workstation-class mini-PCs running Linux. Recent reports suggest Nvidia is working with Lenovo, Dell, and even Intel to bring integrated Nvidia graphics to the Windows PC market. While gamers might hope for RTX 50 Super series cards, memory market conditions make their appearance at GTC unlikely.

Beyond hardware, OpenClaw—the agentic framework Huang reportedly called "the most important software release probably ever"—will be a major talking point. Nvidia is reportedly developing its own safer version called NemoClaw. Robotics will also feature prominently, with updates on the Isaac GR00T platform and Omniverse digital twin platform, which has evolved from Metaverse hype into a serious tool for simulating physical processes before real-world implementation.

With AI datacenters becoming increasingly critical infrastructure, GTC 2026 promises to showcase how Nvidia plans to maintain its dominance in an industry where performance, power, and cooling demands keep climbing steeply. The conference runs March 17-21 in San Jose, with El Reg on the ground to bring you the latest developments from what has become one of tech's most consequential annual events.
