Veracode's latest report reveals a troubling trend: more vulnerabilities are being created than fixed as AI accelerates development, leaving security debt at crisis levels.

Veracode has posted its annual State of Software Security report, based on data from 1.6 million applications tested on its cloud platform, finding that more vulnerabilities are being created than are being fixed, and that high-velocity development with AI is making comprehensive security unattainable.

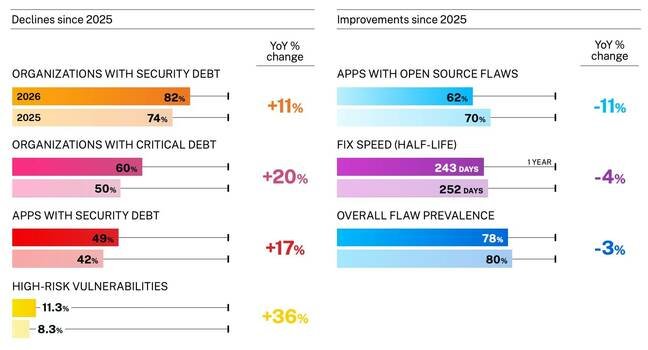

The company defines security debt as "known vulnerabilities left unresolved for more than a year" and reckons this now affects 82 percent of companies, up from 74 percent a year ago. High-risk vulnerabilities, meaning flaws that are both severe and likely to be exploited, have risen from 8.3 percent to 11.3 percent. The figures are from a combination of static analysis (analyzing the code), dynamic analysis (testing runtime behavior), software composition analysis (examining software components such as library dependencies), and manual penetration testing.

There is also some good news. The proportion of apps with open source vulnerabilities has fallen from 70 percent to 62 percent, and the overall "flaw prevalence" is down from 80 percent to 78 percent. The researchers cite increasing use of testing tools as one of the factors behind the worsening figures, suggesting that part of the rise reflects problems being spotted that might previously have been missed. The number of false positives is unknown, so the figures may not be as bad as they first appear.

According to Veracode, though, an accelerating pace of software releases also means that new code is being added more quickly than existing vulnerabilities are addressed. The researchers see growing technical complexity too, attributed to more AI-generated code, which makes remediation more difficult.

Nailing down the impact of AI is difficult, since the software security company also suggests that AI tools can help identify vulnerabilities and automate fixes. And the researchers note that malicious actors might succeed with AI penetration tools, or manipulate models via techniques such as prompt injection.

Veracode makes the usual nod to the importance of human oversight of AI tools, though exactly what that means is uncertain. In Cloudflare's latest AI coding effort, for example, in which a significant application was built in a week with no human review of most of the code, it seems inevitable that security is either neglected or entrusted largely to AI despite its known flaws. AI tools are also good at generating false positives, creating a burden for human code reviewers that may be unmanageable.

"The velocity of development in the AI era makes comprehensive security unattainable," the report states, a bleak conclusion. Further, "the remediation gap has reached crisis proportions; incremental improvements insufficient; transformational change required."

What that change should be remains elusive; one suspects that the industry will promote more AI tooling as the answer, despite evidence from reports like this one that it is currently failing to improve matters. ®
