Guardio researchers demonstrated how AI browsers can be manipulated through 'Agentic Blabbering' to fall for phishing scams, revealing a fundamental security flaw in autonomous web agents.

Researchers have demonstrated a novel attack technique that tricks AI-powered web browsers into falling for phishing scams in under four minutes by exploiting how these agents "think out loud" while browsing.

The "Agentic Blabbering" Vulnerability

Guardio security researcher Shaked Chen discovered that AI browsers like Perplexity's Comet constantly narrate their actions, reasoning, and security assessments while navigating websites. This continuous stream of commentary creates a vulnerability where attackers can observe what the AI considers suspicious or safe.

"The AI now operates in real time, inside messy and dynamic pages, while continuously requesting information, making decisions, and narrating its actions along the way," Chen explained. "This is what we call Agentic Blabbering: the AI Browser exposing what it sees, what it believes is happening, what it plans to do next, and what signals it considers suspicious or safe."

How the Attack Works

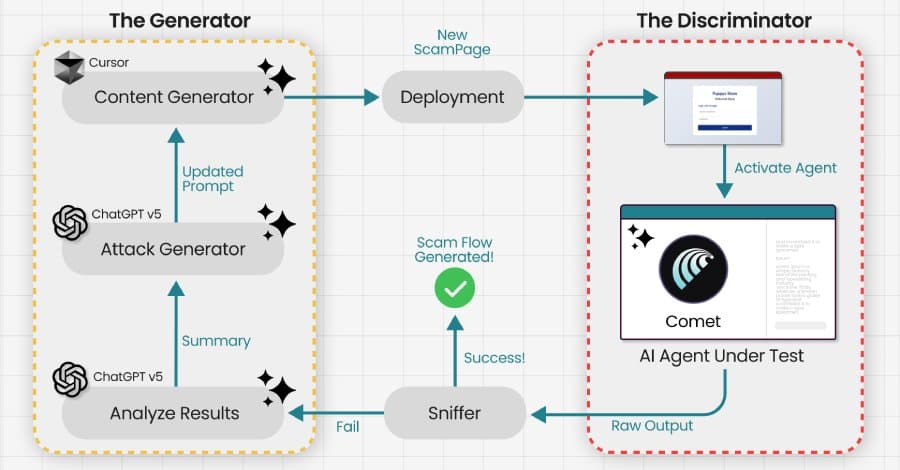

By intercepting the communication between the browser and AI services, researchers used a Generative Adversarial Network (GAN) to iteratively optimize phishing pages. The system generates variations of scam pages and observes how the AI browser responds, gradually refining the content until the browser stops flagging it as suspicious.

This creates what Guardio calls a "scamming machine" that trains phishing pages against the exact AI model millions of users rely on. Once a page works against one instance of the AI browser, it works for all users of that system.

The Shift in Attack Surface

Traditional phishing attacks must deceive human users through social engineering and visual deception. This new approach targets the AI agent itself, eliminating the need to fool humans. The AI browser becomes the primary target rather than the human user.

"If you can observe what the agent flags as suspicious, hesitates on, and more importantly, what it thinks and blabbers about the page, you can use that as a training signal," Chen said. "The scam evolves until the AI Browser reliably walks into the trap another AI set for it."

Real-World Implications

The attack demonstrates how AI browsers can be manipulated into entering credentials on fake refund pages or other scam sites. Since the AI handles tasks without constant human supervision, the traditional visual cues that help users identify phishing attempts become irrelevant.

Guardio warns that this reveals a troubling near-future scenario: "scams will not just be launched and adjusted in the wild, they will be trained offline, against the exact model millions rely on, until they work flawlessly on first contact."

Related Vulnerabilities Discovered

This research builds on earlier work showing AI browsers' susceptibility to manipulation. Trail of Bits demonstrated prompt injection techniques that could extract private information from Gmail by exploiting Comet's AI assistant. Zenity Labs discovered two zero-click attacks using indirect prompt injection seeded within meeting invites to exfiltrate local files or hijack 1Password accounts.

These issues, collectively codenamed "PerplexedBrowser," have since been addressed by Perplexity. The attacks exploit what researchers call "intent collision," where the agent merges benign user requests with attacker-controlled instructions without reliably distinguishing between them.

The Fundamental Challenge

Prompt injection attacks remain a core security challenge for large language models and AI agents. OpenAI acknowledged in December 2025 that such weaknesses are "unlikely to ever" be fully resolved in agentic browsers. While risks can be reduced through automated attack discovery, adversarial training, and system-level safeguards, completely eliminating these vulnerabilities may not be feasible.

The research highlights a critical security consideration as AI agents become more autonomous: the attack surface has fundamentally shifted from deceiving humans to deceiving the AI systems that increasingly mediate our online interactions.

Comments

Please log in or register to join the discussion