Criminal enterprises in Southeast Asia are recruiting "AI face models" to facilitate crypto and romance scams, with some listings demanding more than 100 video calls per day.

Criminal enterprises operating in Southeast Asian scam hubs are increasingly recruiting "AI face models" to facilitate sophisticated crypto and romance scams, according to a Wired investigation that uncovered dozens of Telegram channels advertising these positions.

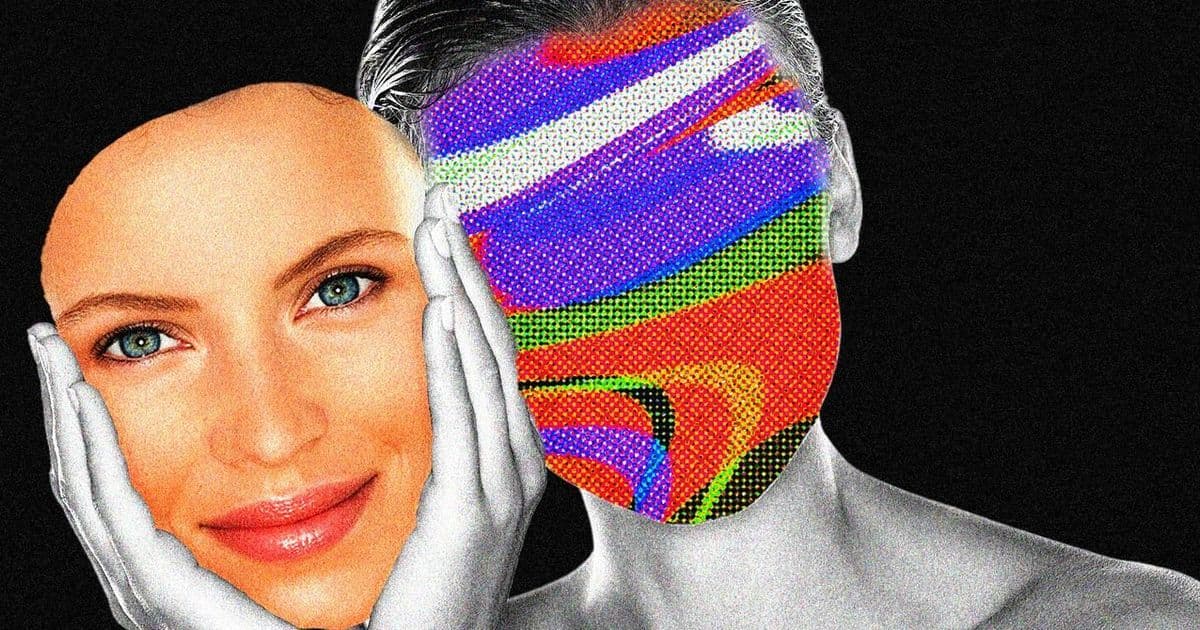

The job listings show scammers using artificial intelligence and deepfake technology to make fraudulent schemes more convincing. The "AI face models" are human actors whose likeness and voice are manipulated by AI systems to produce realistic video calls and interactions with potential victims.

Some listings demand extraordinary workloads, requiring models to conduct more than 100 video calls per day. That volume suggests the operations are running at industrial scale, targeting victims across multiple time zones and demographics.

How the Scam Operations Work

The recruitment process typically occurs through Telegram channels and other encrypted messaging platforms. Job postings often promise high daily earnings, sometimes claiming models can make hundreds of dollars per day for relatively simple work. However, the reality appears far more exploitative.

Once recruited, these models are typically required to:

- Conduct lengthy video calls with potential victims

- Maintain consistent personas across multiple interactions

- Follow scripted conversations designed to build trust

- Gradually introduce cryptocurrency investment opportunities

- Facilitate the transfer of funds to scam accounts

The use of AI technology allows scammers to maintain consistent appearances and voices across different calls, making it harder for victims to detect fraud. Some operations reportedly use deepfake technology to alter the models' appearances or create entirely synthetic personas.

The Scale of the Problem

Southeast Asia has become a hub for large-scale scam operations, with criminal enterprises operating out of countries such as Cambodia, Myanmar, and Laos. These operations often involve human trafficking victims who are forced to work in scam call centers under coercive conditions.

The addition of "AI face models" represents an evolution in these scams, making them more sophisticated and harder to detect. Law enforcement officials have noted that the quality of these scams has improved dramatically, with victims reporting highly convincing interactions that closely mimic legitimate relationships or investment opportunities.

Industry Response

Cybersecurity experts and law enforcement agencies are increasingly concerned about the use of AI in facilitating fraud. The technology lowers the barrier to entry for sophisticated scams while making them more convincing to potential victims.

Several cryptocurrency exchanges and financial institutions have implemented new verification procedures to combat these types of scams, including enhanced video verification and behavioral analysis. However, the rapid advancement of AI technology continues to challenge these safeguards.

Victim Demographics

While romance scams traditionally targeted older adults, these AI-enhanced operations appear to be casting a wider net. The use of professional actors and AI technology allows scammers to create personas that appeal to various demographics, including younger investors interested in cryptocurrency opportunities.

The crypto angle has proven particularly effective, as many victims are already familiar with digital assets and may be more willing to engage in what appear to be legitimate investment discussions. Scammers often create elaborate fake trading platforms and use manipulated data to show victims "profits" that encourage them to invest more.

Legal and Ethical Implications

The recruitment of "AI face models" raises significant legal and ethical questions. While some participants may be aware they're working for scam operations, others may be misled about the nature of the work or coerced through debt bondage or threats.

International law enforcement agencies are working to dismantle these operations, but the decentralized nature of the recruitment and the use of encrypted communication platforms make it challenging to track and prosecute offenders.

Prevention and Protection

Experts recommend several strategies for protecting against these sophisticated scams:

- Be skeptical of unsolicited investment opportunities, especially those involving cryptocurrency

- Verify the identity of people you meet online through multiple channels

- Be cautious of requests for money or financial information from new online relationships

- Use reverse image searches to check if profile pictures appear elsewhere online

- Report suspicious activity to relevant authorities and platforms

As AI technology continues to advance, the line between legitimate and fraudulent online interactions may become increasingly blurred, making awareness and skepticism essential tools for digital safety.

The Wired investigation highlights how criminal enterprises are adapting to technological advances, using AI not just for automation but to create more convincing and scalable fraud operations that pose significant challenges to both victims and law enforcement.
