A reflective exploration of how deep understanding remains the most powerful tool for programmers, despite growing complexity and the allure of abstraction.

In the contemporary landscape of coding agents and increasingly sophisticated abstractions, Gerald Sussman's observation that programmers no longer construct systems from known parts but instead perform 'basic science on the functionality of foreign libraries' resonates with particular force. This perspective, shared by Andy Wingo in a recent Mastodon post, suggests a fundamental shift in the nature of programming since the days when MIT taught Scheme in introductory courses. Sussman himself explains the transition as a response to how engineering evolved: from an era when code ran close to the metal and was understandable all the way down, to our current reality where we must experiment with libraries to discern their actual behavior.

This assertion provokes complex reactions. Modern software development undeniably involves navigating leaky abstractions and inadequate documentation. But the implication that we have lost the capacity for deep understanding contradicts my own experience as a programmer who came of age in precisely the era Sussman describes as a turning point.

My journey into programming began in the early 1990s, a time when the tools available to aspiring developers simultaneously offered unprecedented power and imposed formidable barriers to comprehension. Starting with BASIC on 8-bit computers like the VIC-20 and Apple II, programming meant sequencing commands that produced visible results. When BASIC lacked a necessary command, the only option was to accept the limitation. Assembly language existed in a different universe, one I reached only by accidentally dropping into a machine code monitor, which typically ended with a frantic Ctrl+Reset to return to the safety of BASIC.

The transition to a 286 and QuickBASIC represented both progress and frustration. While more commands enabled greater capability, the absence of graphics documentation in help files created new obstacles. The eventual discovery of Bulletin Board Systems (BBSes) and shareware compilers revealed alternative approaches to VGA programming, yet understanding remained elusive. Even when I succeeded in getting pixels on the screen through a self-designed paint program that used simple 'width, height, pixels' formats, the underlying mechanisms remained shrouded in mystery.

The acquisition of a 486 and internet access exacerbated this challenge. The accumulated knowledge of previous generations suddenly became obsolete as entirely new layers of complexity emerged. Books on assembly language and Win32 programming filled me with despair, while newsgroup discussions about DPMI, real mode, and protected mode made the task of displaying a single pixel seem impossibly daunting. This was precisely the timeframe Sussman identifies as the moment engineering fundamentals began to change irrevocably.

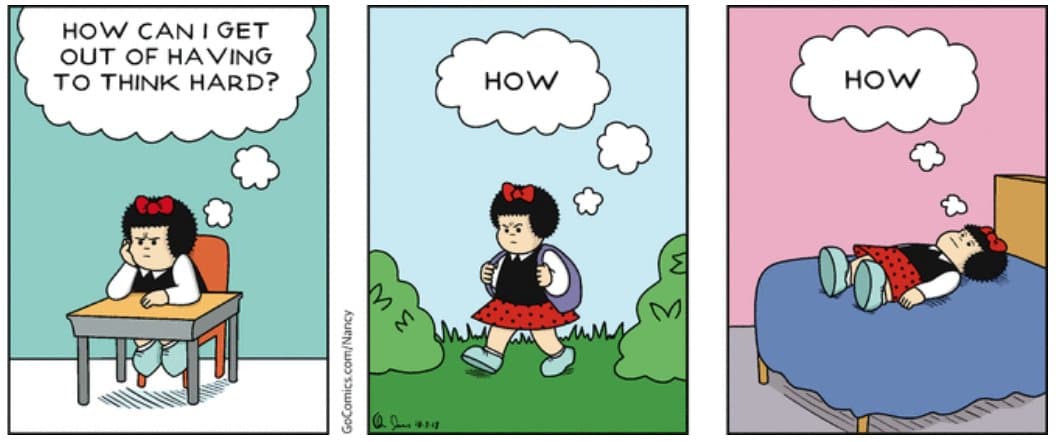

As a teenager learning these technologies, my response was predictable: I sought to avoid understanding at all costs. What I desired most was the ability to make the computer 'Do Thing' without comprehending the underlying mechanisms. The overwhelming volume of incomprehensible concepts created a powerful incentive to seek libraries and languages with simple interfaces that would solve problems without requiring deep thought.

This mindset produced what I now recognize as a characteristic approach to problem-solving: creating workarounds rather than solutions. My proudest accomplishment from this era was 'Easymik,' a library I developed to simplify the integration of Mikmod and Allegro. Rather than understanding the flexible APIs and proper integration methods, I identified values that worked and created wrapper functions that eliminated all flexibility. When Mikalleg failed to interoperate with Allegro's compressed data files, I implemented a solution that wrote decompressed data to disk, instructed Mikmod to load it, and then deleted the temporary file—knowing it was suboptimal but satisfied by its effectiveness.
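The temporary-file workaround described above can be sketched roughly as follows. This is a minimal illustration in Python rather than the original C, and it is not the Easymik code: `load_via_temp_file` and `fake_loader` are hypothetical names, with `fake_loader` standing in for any loading function that only accepts a file path.

```python
import os
import tempfile


def load_via_temp_file(data: bytes, load_from_path):
    """Hand in-memory bytes to a loader that only accepts a file path."""
    # delete=False so the file can be closed and then reopened by path
    tmp = tempfile.NamedTemporaryFile(delete=False, suffix=".mod")
    try:
        tmp.write(data)
        tmp.close()  # flush to disk before the loader opens the file
        return load_from_path(tmp.name)
    finally:
        tmp.close()  # harmless if the file is already closed
        os.unlink(tmp.name)  # remove the file even if the loader raised


def fake_loader(path: str) -> int:
    # Hypothetical stand-in for a path-only loader: returns the byte count
    with open(path, "rb") as f:
        return len(f.read())
```

The cost is an extra round trip through the disk on every load. It works, but it sidesteps the question of whether the loader could have read from memory directly.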

This approach reflected a deeper aspiration: to become a 'Real Programmer.' I recognized the tools Real Programmers used (C and assembly) and the form their programs took (.exe files, special data formats), yet I lacked understanding of why these conventions existed. The closer I could approximate these patterns, the more legitimate my programming identity would become, and the more likely I would be to achieve mastery over the computer's capabilities.

If presented with a 'magic box' capable of generating incomprehensible code that mostly worked, I would have embraced it enthusiastically. The available resources were so inadequate that such a tool would have represented liberation rather than limitation. I could have remained trapped in this cycle of superficial understanding for many more years had certain pivotal experiences not redirected my trajectory.

The first such experience was an improbable programming job working with QNX while still in high school. QNX represented everything MS-DOS and Windows were not: clear, elegant, understandable, and reliable. Rob Krten's book demonstrated that operating systems could be designed with comprehensibility as a primary goal. While I never managed to master Win32 programming, I could understand Photon's design principles. This experience proved that systems need not be inherently opaque; good design could make complexity manageable.

The second significant shift came during my next role, where I attempted to apply the design principles I had been studying. Tasked with patching numerous bespoke tools to support a new peripheral, I recognized that two core use cases underpinned all the variations. I proposed a unified codebase to replace them all, building an infinitely configurable system via XML. While solving 80% of the problem proved straightforward, the remaining 20% required increasingly convoluted approaches, including defining a bidirectional algebra for serial number strings.

The resulting system, while functional for the first use case, encountered catastrophic performance issues with the second. My dynamic Value class, designed to allow flexible data schema definitions in XML rather than C++, consumed hundreds of kilobytes of logs and rendered my development system unusable. Eventually, I was forced to hardcode the schema, reintroducing the product-specific code I had worked months to eliminate. This experience demonstrated that elegant design principles, when applied without deep understanding, could produce systems of greater complexity than those they replaced.

These experiences led me to a profound realization: what I had secretly yearned for throughout my career was not better tools or techniques, but escape from the necessity of learning things that felt too difficult. The conviction that some magical approach would eliminate the need for understanding had driven significant life decisions, including an international move to work on a tree-merging algorithm. It was during this project that I finally confronted the fear that had silently guided my career: the terror of deeply engaging with complex problems.

The breakthrough came when I acknowledged that attempting to solve problems without understanding them only makes them harder. I adopted Polya's problem-solving method, starting from its first principle: understand the problem before attempting a solution. This approach transformed my work on the tree-merging algorithm. After years of incremental progress and ad-hoc testing, I finally examined the core assumptions that had guided the existing implementation. By questioning these premises and rebuilding the system from scratch, I produced an implementation that worked flawlessly where years of patching had yielded only marginal improvements.

This experience established the principle that now guides my professional practice: the ability to understand what is actually happening in a system represents an unreasonably effective skill that grows more valuable with time. While experimentation and 'doing basic science' certainly have their place, true satisfaction comes from examining source code to understand actual behavior rather than documented behavior.

Returning to Sussman's observation, I acknowledge his correct identification of the 1990s as a period of exploding complexity in both software and electrical engineering. However, I cannot accept that our current situation represents a deterioration in comparison to that era. The critical difference lies in accessibility: in the 1990s, the inner workings of essential components like Windows 95 were secret. Programmers worked with documentation, and when behavior contradicted expectations, debugging often involved little more than trial and error. Reverse engineering an operating system was not a viable approach for most developers.

Today's software landscape differs fundamentally. While libraries have grown larger and more numerous, most are open source. When behavior contradicts expectations, determining the cause is orders of magnitude easier than in previous decades. Modern components typically exhibit greater reliability, either through language design choices or the gradual elimination of bugs over time. Worthwhile dependencies usually possess coherent design principles that can be learned.

My experience with Android UI programming illustrates this point vividly. When struggling with layout bugs where UI recomputation failed to occur despite documentation suggesting otherwise, I eventually examined the source code. This revealed that requestLayout and forceLayout merely set flags on view objects rather than 'scheduling' anything as the documentation implied. Understanding the actual recursive propagation of these flags through the view hierarchy clarified the underlying mechanism and enabled proper implementation.
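The flag mechanism can be illustrated with a toy model. This is a simplified sketch in Python, not Android's source: the `View` class, `force_layout_only`, `request_layout`, and `layout_pass` are hypothetical names, and the model assumes that a layout pass skips any view whose flag is not set and that the request stops climbing once it reaches an ancestor that is already flagged.

```python
class View:
    """Toy view node carrying a single 'needs layout' flag."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.children = []
        self.needs_layout = False
        if parent is not None:
            parent.children.append(self)

    def force_layout_only(self):
        # Sets the flag on this view and nothing else
        self.needs_layout = True

    def request_layout(self):
        # Sets the flag here, then recurses upward until it reaches
        # the root or an ancestor that is already flagged
        self.needs_layout = True
        if self.parent is not None and not self.parent.needs_layout:
            self.parent.request_layout()

    def layout_pass(self, visited):
        # Walks down from this view, skipping unflagged subtrees
        if not self.needs_layout:
            return
        visited.append(self.name)
        self.needs_layout = False
        for child in self.children:
            child.layout_pass(visited)


root = View("root")
panel = View("panel", root)
label = View("label", panel)

label.force_layout_only()
skipped = []
root.layout_pass(skipped)  # root is unflagged, so nothing is visited

label.request_layout()
visited = []
root.layout_pass(visited)  # flags now reach the root, so the walk descends
```

In this model, flagging only the leaf changes nothing, because the pass starts at an unflagged root and returns immediately. Nothing is scheduled by either call; whether any work happens depends entirely on which flags are set when a pass eventually runs.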

This experience demonstrates that while experimentation might produce working code, reading the source builds genuine understanding that prevents future problems and enables confident modification. The Android layout system's actual behavior, once understood, made debugging straightforward and revealed the purpose of seemingly mysterious methods.

The contemporary software ecosystem does not represent a descent into unknowable complexity. Rather, it offers unprecedented opportunities for understanding through open source availability, improved documentation practices, and more thoughtful design. While challenges certainly remain, particularly in dependency selection and integration, the fundamental principle remains unchanged: systems can still be constructed from understandable components.

Programming in 2025 need not involve fumbling in the dark. The tools exist to illuminate even the most complex systems, if we are willing to use them. The most powerful 'magic box' a programmer can possess is not one that generates incomprehensible solutions, but the commitment to understand problems deeply before attempting to solve them. This commitment, more than any language, framework, or methodology, remains the surest path to mastery in our increasingly complex technical landscape.
