Traditional secrets management creates operational overhead that only large organizations can bear, but AI agents have exposed a fundamental flaw in how we handle API keys. A simple HTTP proxy that injects secrets as headers offers most of the security benefits without the complexity, making this approach ideal for organizations of any size.

The proliferation of AI agents has forced us to confront a problem that has always existed but was previously manageable: API keys are simultaneously too powerful and too fragile. When you hand an agent an API key directly, you're not just granting access to make API calls—you're potentially giving that agent the ability to exfiltrate the key and share it with others, creating a security vulnerability that persists until you manually rotate credentials.

This problem has become acute because different AI models react unpredictably to secrets. Some models refuse to work when presented with an API key, treating it as "exposed" and declining to proceed. Others will happily write the key to persistent memory, potentially using it in future sessions and wasting precious context window space on revoked credentials. The result is a frustrating experience where the same agent that can write sophisticated code suddenly becomes paralyzed by a simple authentication token.

But this isn't really an agent problem—it's a fundamental flaw in how we've been handling secrets all along. API keys grant not just the ability to make API calls, but the power to delegate that ability to others by sharing the key. This design made sense when we were building traditional services with human operators who could be trusted to handle credentials responsibly. It makes far less sense in a world where automated agents need to interact with services on our behalf.

The traditional solutions have been inadequate. Large organizations centralize secrets management in dedicated services, creating significant operational overhead and engineering complexity that smaller teams cannot justify. OAuth, while theoretically sound, is notoriously complex to implement and rarely automated in practice. Some services compound the problem by encouraging practices like GitHub's 90-day expiring personal access tokens—long enough to forget about them, short enough to cause mysterious failures during vacations.

What's particularly frustrating is that inter-server OAuth, as commonly implemented, doesn't help with agents at all. Creating the client credentials typically requires a human signed in through a web browser session, a step deliberately designed to be difficult to automate. I've never encountered a service that provides an OAUTH_CLIENT_SECRET via an API, which makes OAuth impractical for automated workflows.

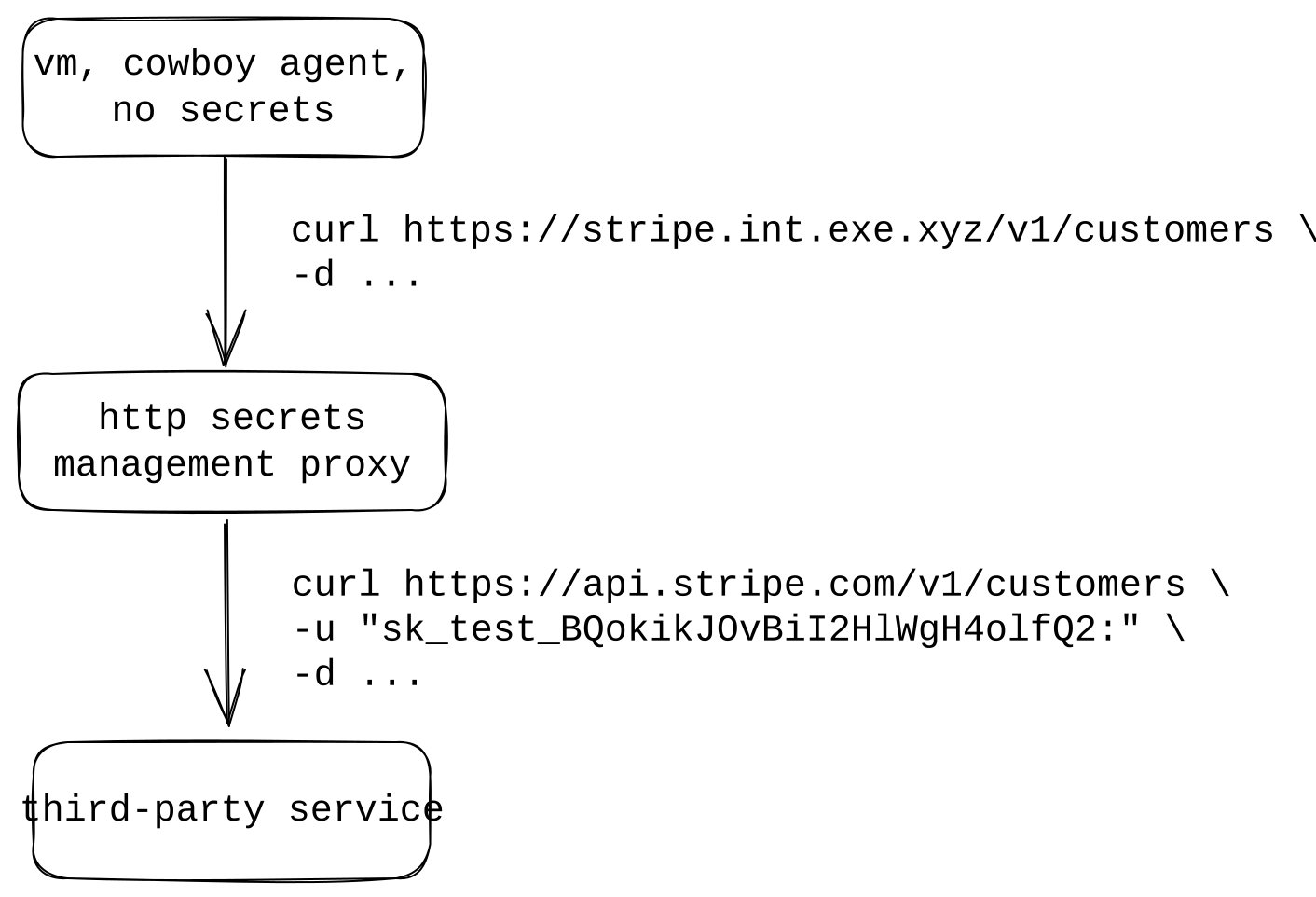

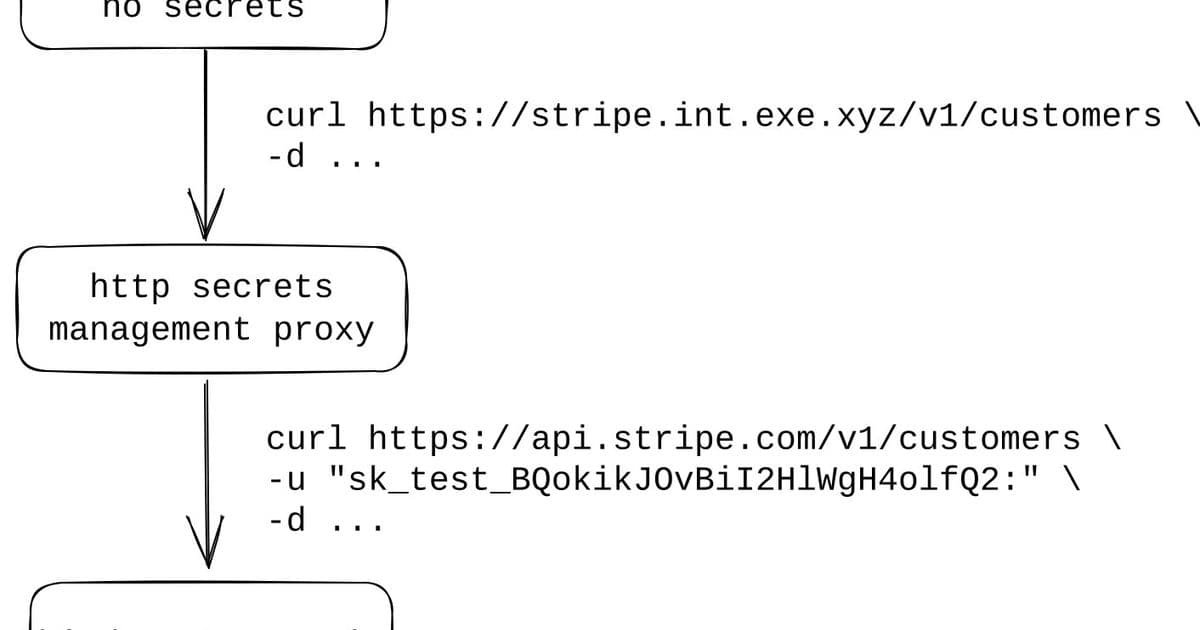

There's a simpler solution hiding in plain sight: HTTP proxies that inject secrets as headers. Many APIs already communicate over HTTP and expect secrets in headers. Stripe, for example, accepts HTTP Basic auth with the API key as the username and an empty password, which curl expresses as `-u "sk_test_BQokikJOvBiI2HlWgH4olfQ2:"`. Instead of storing these secrets in environment variables or configuration files, we can route requests through a proxy that injects the appropriate headers automatically.
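The injection step itself is small. The following is a minimal sketch, not any particular product's implementation: the `SECRETS` table, the host `api.example.com`, the key value, and the function names are all hypothetical. It builds the same `Authorization` header that curl's `-u "key:"` would send and discards any `Authorization` header the caller supplied.

```python
import base64

# Hypothetical secret table held only by the proxy process, keyed by upstream host.
SECRETS = {"api.example.com": "sk_test_hypothetical"}


def basic_auth_header(api_key: str) -> str:
    """Encode 'key:' (key as username, empty password) as an HTTP Basic credential."""
    token = base64.b64encode(f"{api_key}:".encode()).decode()
    return f"Basic {token}"


def inject_secret(upstream_host: str, headers: dict[str, str]) -> dict[str, str]:
    """Return a copy of headers with the proxy's credential for upstream_host added."""
    api_key = SECRETS.get(upstream_host)
    if api_key is None:
        raise KeyError(f"no secret configured for {upstream_host}")
    # Drop whatever Authorization header the caller sent; only the proxy sets it.
    out = {k: v for k, v in headers.items() if k.lower() != "authorization"}
    out["Authorization"] = basic_auth_header(api_key)
    return out
```

The caller's other headers pass through unchanged, so an agent can still set `Accept` or `Content-Type` without ever seeing the key.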

This approach transforms how we think about secrets access. Rather than granting direct access to the secret itself, we grant access to a proxy that can use the secret on our behalf. The ability to use a secret becomes equivalent to the ability to reach the secrets HTTP proxy, not the ability to read and exfiltrate the secret itself. This is a crucial distinction that dramatically reduces the attack surface.
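To make the distinction concrete, here is a rough end-to-end sketch using only the Python standard library. It assumes a single upstream, GET requests only, and a hypothetical bearer token; the handler and function names are illustrative. A stand-in upstream echoes back the `Authorization` header it received, and the agent-side request carries no credential at all.

```python
import http.client
import http.server
import threading


def make_injecting_handler(upstream_host: str, upstream_port: int, auth_header: str):
    """Build a handler that forwards GET requests upstream with auth_header injected."""

    class InjectingHandler(http.server.BaseHTTPRequestHandler):
        def do_GET(self):
            # The secret is attached here, inside the proxy, never by the caller.
            conn = http.client.HTTPConnection(upstream_host, upstream_port, timeout=5)
            conn.request("GET", self.path, headers={"Authorization": auth_header})
            resp = conn.getresponse()
            body = resp.read()
            status = resp.status
            conn.close()
            self.send_response(status)
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, fmt, *args):
            pass  # keep the demo quiet

    return InjectingHandler


class EchoAuthUpstream(http.server.BaseHTTPRequestHandler):
    """Stand-in for a real API: replies with the Authorization header it saw."""

    def do_GET(self):
        body = (self.headers.get("Authorization") or "").encode()
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, fmt, *args):
        pass


def start_server(handler_cls) -> http.server.HTTPServer:
    """Serve handler_cls on an ephemeral localhost port in a background thread."""
    server = http.server.HTTPServer(("127.0.0.1", 0), handler_cls)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def call_through_proxy() -> str:
    """Act as the agent: send a credential-free request to the proxy."""
    upstream = start_server(EchoAuthUpstream)
    proxy = start_server(
        make_injecting_handler("127.0.0.1", upstream.server_port, "Bearer hypothetical-secret")
    )
    try:
        conn = http.client.HTTPConnection("127.0.0.1", proxy.server_port, timeout=5)
        conn.request("GET", "/v1/ping")  # note: no Authorization header
        seen_by_upstream = conn.getresponse().read().decode()
        conn.close()
        return seen_by_upstream
    finally:
        for server in (proxy, upstream):
            server.shutdown()
            server.server_close()
```

The agent's code only knows the proxy's address. A real proxy would also need other HTTP methods, request bodies, TLS to the upstream, and error handling, all of which this sketch leaves out.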

The beauty of this solution is that it covers any secret that travels as an HTTP header, which in practice is almost all of them. While complex secrets management products provide this as part of a larger suite of features, the HTTP proxy component is actually one of the easier parts to implement and delivers a disproportionate amount of the security benefit. It's the kind of pragmatic solution that works for organizations of any size without requiring the overhead of enterprise-grade secrets management systems.

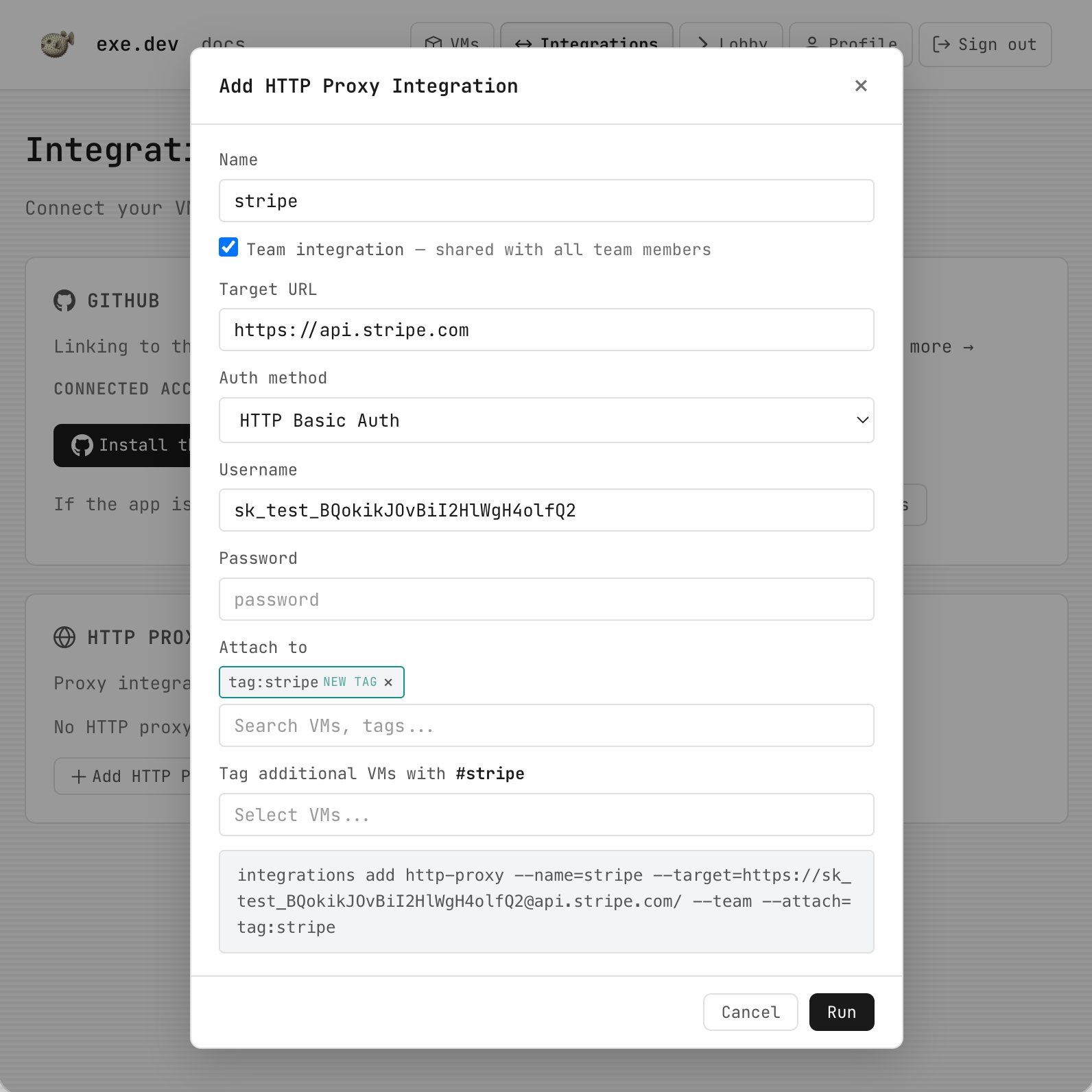

This is the thinking behind integrations in exe.dev, which automates the HTTP proxy approach. By assigning integrations to tags and tagging the VMs that need access, you get automatic secrets injection without writing or managing proxy infrastructure yourself. When you clone a VM, you get a fresh workspace with agents and integrations already configured. For GitHub specifically, exe.dev built a GitHub App to manage OAuth automatically, eliminating the need for manual key rotation.
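The tag model can be pictured as a simple set intersection. This is an illustrative sketch only and does not reflect exe.dev's actual configuration format or API: the `Integration` type, its fields, and the example names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Integration:
    """Hypothetical record: a named secret-injecting proxy and the tags it is assigned to."""
    name: str
    upstream_host: str
    tags: frozenset[str]


def integrations_for_vm(vm_tags: set[str], integrations: list[Integration]) -> list[str]:
    """Return the names of integrations sharing at least one tag with the VM."""
    return sorted(i.name for i in integrations if i.tags & vm_tags)


# Hypothetical example data.
INTEGRATIONS = [
    Integration("payments", "api.example-payments.test", frozenset({"billing"})),
    Integration("source-control", "api.example-git.test", frozenset({"dev", "ci"})),
]
```

Under this model a cloned VM that keeps its tags resolves to the same integrations as the original, and no secret has to be copied into the new VM.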

The HTTP proxy approach to secrets management represents a fundamental shift in how we think about authentication in an agent-driven world. Instead of treating API keys as something to be carefully guarded and manually rotated, we treat them as credentials that can be safely managed by infrastructure we control. This isn't just a workaround for agent compatibility—it's a better way to handle secrets that addresses the underlying security concerns while dramatically reducing operational complexity.

The implications extend beyond just making agents work better. This approach forces us to reconsider what we mean by "access" to a service. If the ability to use a secret is equivalent to the ability to reach a proxy, then our security model shifts from protecting individual credentials to protecting network access to trusted infrastructure. This is a more robust security posture that aligns with how modern applications actually work.
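If access means reachability, the proxy's admission check becomes the security boundary. One way to sketch that check, assuming the proxy can see each caller's source address and that trusted machines sit in a known private range (the `10.0.0.0/24` network and the function name are assumptions for illustration):

```python
import ipaddress

# Hypothetical: the private network where trusted VMs live.
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/24")]


def may_use_proxy(client_ip: str, allowed=ALLOWED_NETWORKS) -> bool:
    """Return True if the caller's address falls inside an allowed network."""
    addr = ipaddress.ip_address(client_ip)
    return any(addr in net for net in allowed)
```

Revoking an agent's access then means removing its machine from the allowed network rather than rotating the underlying key.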

For organizations struggling with secrets management in the age of AI agents, the HTTP proxy solution offers a pragmatic middle ground. It provides most of the security benefits of enterprise secrets management without the operational overhead, and it solves the agent compatibility problem without requiring complex OAuth flows or manual credential rotation. It's a reminder that sometimes the best solutions are the simplest ones—we just needed the right problem to make them obvious.

The HTTP proxy topology shows how secrets flow through controlled infrastructure rather than being exposed directly to agents. This architectural pattern represents a fundamental improvement in how we think about authentication and authorization in distributed systems.

Setting up HTTP integrations in exe.dev demonstrates how this pattern can be automated, removing the operational burden while maintaining security. The ability to tag VMs and automatically inject secrets based on those tags creates a scalable, manageable approach to secrets distribution that works whether you're running one VM or hundreds.
