A critical examination of how vanity metrics like lines of code are creating a technical debt crisis in software development, with insights on measuring true value through user outcomes, feedback loops, and utility.

In 1982, Bill Atkinson, the architect behind QuickDraw and a lead designer for the Apple Lisa, found himself at odds with a new management initiative. Lisa's managers had decided to track "Lines of Code" (LOC) as a proxy for productivity. Atkinson had just spent weeks rewriting QuickDraw's region-calculation engine, replacing a clunky original algorithm with one that was more elegant, faster, and significantly smaller. When it came time to fill out his weekly status report, he didn't enter a positive number in the box for "Lines of Code Written." He simply wrote: -2,000.

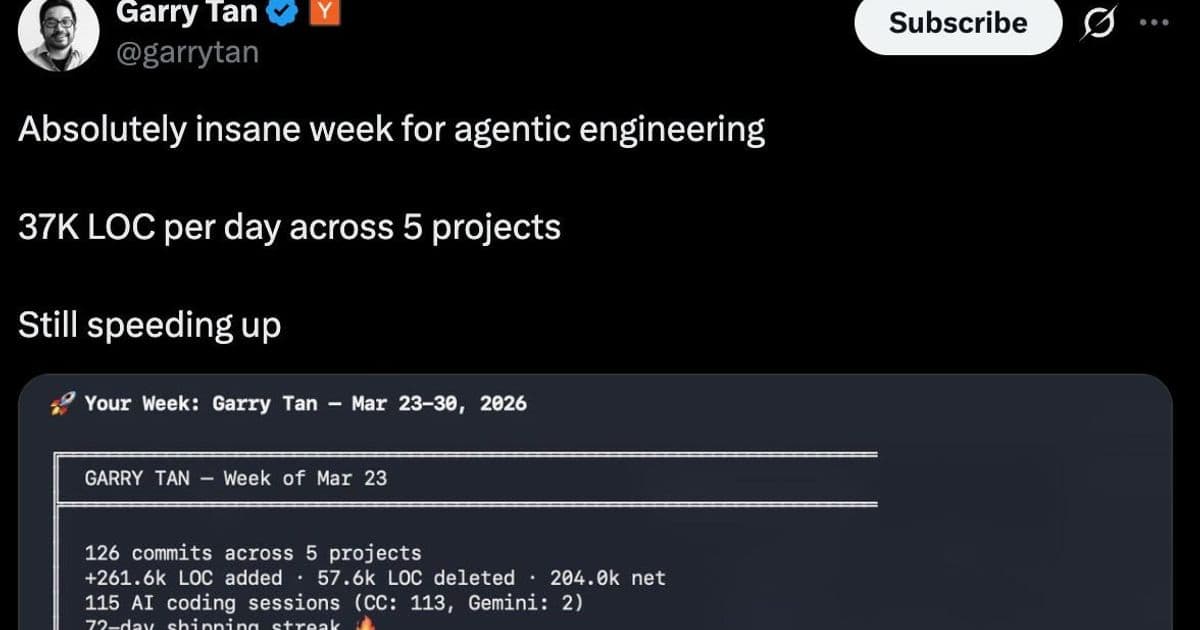

While history never repeats, it often rhymes. More than forty years later, we have come full circle, with LOC once again being used as a measure of developer productivity. If this were a one-off opinion from a fringe voice, it could be dismissed as a misunderstanding of the craft. But when Garry Tan, the CEO of Y Combinator, tweets a dashboard boasting 37,000 lines of code per day as proof that "agentic engineering" is speeding things up, it's clear the rot has reached the highest levels of tech leadership.

This idea has metastasized across Slack channels, forums, and boardrooms: the belief that AI has magically "solved" software by making it effortless to generate in massive quantities. We are mistaking token burn for value creation. We are celebrating the expansion of our attack surface and the explosion of our maintenance burden as if it were progress.

I'm writing this in the hope that those in leadership might step back from the dashboard and realize that vanity metrics like LOC will never tell you how much value was created—only how much technical debt was financed.

This raises a natural question: if we cannot use quantity as a metric, how do we define the value of software, and of those who are paid to create it? Drawing on my own experience and on ideas borrowed from experts, I offer three suggestions.

User Outcomes: The "Windows" Litmus Test

The most obvious way to define valuable software is by what a user gets out of using it. This sounds like a platitude until you realize that all software without users is, by definition, worthless. The source code itself holds zero intrinsic value. If you don't believe this, imagine Microsoft open-sourced the entirety of Windows tomorrow. Would Windows users immediately jump to a new startup selling that exact same code? Of course not.

The source code isn't what makes Windows valuable—it is the Outcome the user achieves. An average user pays for Windows because they can open a laptop and work immediately. They are paying for Distribution, Support, and Trust. They are paying for a "Job" to be "Done."

No matter how many tens of thousands of lines you generate with an AI agent, no customer will ever pay you more for them. In fact, if those lines don't move the needle on the user's "Time to Joy," you haven't created a single cent of value; you've just increased your own overhead.

Time-to-Value: The Physics of a Feedback Loop

If you want to understand why your expensive engineering team feels slow, stop looking at their output and start looking at their feedback loops. The quintessential catalyst for software value isn't a new framework or an AI agent—it's the refinement of the loop: the time it takes for a developer to make a change and see if it actually worked.

I learned this lesson by accident. Years ago, I took a "step back in time" to help a family member with a website running on a basic LAMP stack managed via FTP. Coming from a world of enterprise Kubernetes clusters and hour-long CI/CD pipelines, I expected to be frustrated. Instead, I was faster than I'd been in years. Because there were no massive dependency graphs or 20-minute build steps, I could try an idea and see the result in seconds. I was in a constant state of flow.

In his research on developer effectiveness, Tim Cochran emphasizes these "micro-feedback loops"—the actions a developer performs 100 or 200 times a day. When those loops are fast, you get "Flow." When they are slow, you get "Friction."

This is where the "37k lines of code" metric becomes truly dangerous. More code means more dependencies. More dependencies mean slower test suites. Slower test suites mean broken feedback loops. And the cost of a broken loop is more than the wait itself: studies of workplace interruptions suggest it takes an average of about 23 minutes to return to focused work after being interrupted, whether by a slow build or by a failing agent-generated test.

If your AI agent is dumping 37,000 lines of code into your repo, it isn't adding value; it's adding "noise" that clogs the pipes. It forces human engineers to spend their day waiting for CI, debugging "hallucinated" edge cases, and losing their flow. Instead of measuring how many lines the AI wrote today, measure how long it takes a human to validate those lines. If the "Time-to-Value" is increasing, your productivity is actually decreasing, regardless of what the LOC dashboard says.
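To make "measure how long it takes a human to validate those lines" concrete, here is a minimal Python sketch. It assumes you can export, for each change (for example, each pull request), the number of lines added and the hours between the change being proposed and a human confirming it works. The `Change` record, its fields, and the sample numbers are hypothetical, not any real tool's API. The point it illustrates: line counts can climb sharply while the median time-to-validate also climbs, which by the argument above means productivity fell.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Change:
    # Hypothetical record exported from your VCS/CI tooling (an assumption, not a real API).
    lines_added: int
    hours_to_validate: float  # from "change proposed" to "a human confirmed it works"


def summarize(changes: list[Change]) -> dict[str, float]:
    """Total lines added versus the median time a human needed to validate them."""
    if not changes:
        raise ValueError("need at least one change to summarize")
    return {
        "lines_added": sum(c.lines_added for c in changes),
        "median_hours_to_validate": median([c.hours_to_validate for c in changes]),
    }


def time_to_value_trend(before: list[Change], after: list[Change]) -> str:
    """Compare median validation time across two periods, ignoring line counts entirely."""
    b = summarize(before)["median_hours_to_validate"]
    a = summarize(after)["median_hours_to_validate"]
    if a > b:
        return "slower"
    if a < b:
        return "faster"
    return "unchanged"


# Hypothetical sample data: a period before and after adopting a coding agent.
before = [Change(120, 2.0), Change(80, 1.5), Change(200, 3.0)]
after = [Change(4000, 9.0), Change(6000, 12.0), Change(2500, 6.5)]

print(summarize(before))  # {'lines_added': 400, 'median_hours_to_validate': 2.0}
print(summarize(after))   # {'lines_added': 12500, 'median_hours_to_validate': 9.0}
print(time_to_value_trend(before, after))  # slower
```

The design choice is deliberate: `time_to_value_trend` never looks at `lines_added`, so a large jump in output cannot mask a slower loop.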

Utility to Maintenance: Use It or Lose It

In the pre-agentic era, building a feature was expensive. That high cost acted as a natural filter. Because it took weeks of human engineering to implement an idea, we were forced to validate it first. We spoke to users, ran prototypes, and asked: "Is this worth the effort?"

That "friction" was actually a massive business benefit. It prevented us from cluttering the product with "invasive species" features that no one asked for but everyone has to maintain.

Now, with agents capable of pumping out thousands of lines of code in a single afternoon, that filter has vanished. New features are being shoved into products without a second thought. But here is the reality: If you are generating tons of code for features that aren't being used, you aren't creating value. You are burning money and financing technical debt.

Think of your product as a garden. A healthy plant provides shade, food, or beauty; a weed just takes up space and nutrients. Every unused AI-generated feature is a weed. It makes your documentation longer, your UI more confusing, and your test suites slower.
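One way to find the weeds is to compare how many users actually touch each feature with how much code it carries. The Python sketch below assumes you have per-feature usage data (here, hypothetical `monthly_active_users` counts) and a rough line count per feature. The `Feature` type, the 5% adoption threshold, and the feature names are illustrative assumptions, not an established standard.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    # Hypothetical per-feature data; real numbers would come from product analytics.
    name: str
    lines_of_code: int
    monthly_active_users: int


def find_weeds(features: list[Feature], total_active_users: int,
               min_adoption: float = 0.05) -> list[Feature]:
    """Features used by fewer than `min_adoption` of active users, biggest codebases first."""
    if total_active_users <= 0:
        raise ValueError("total_active_users must be positive")
    weeds = [f for f in features
             if f.monthly_active_users / total_active_users < min_adoption]
    return sorted(weeds, key=lambda f: f.lines_of_code, reverse=True)


def share_of_code_in_weeds(features: list[Feature], weeds: list[Feature]) -> float:
    """Fraction of all feature code that lives in rarely used features."""
    total = sum(f.lines_of_code for f in features)
    return sum(f.lines_of_code for f in weeds) / total if total else 0.0


# Hypothetical sample data for a product with 10,000 active users.
features = [
    Feature("export_csv", 300, 4200),
    Feature("ai_summary", 9000, 35),
    Feature("dark_mode", 150, 6100),
    Feature("legacy_import", 2200, 120),
]
weeds = find_weeds(features, total_active_users=10_000)
print([f.name for f in weeds])                             # ['ai_summary', 'legacy_import']
print(round(share_of_code_in_weeds(features, weeds), 2))  # 0.96
```

Sorting by lines of code puts the most expensive weeds first, which is where pruning saves the most maintenance.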

Conclusion

To be clear, I am not anti-AI. These tools are the equivalent of high-end power tools for a master tradesperson. They have slashed the time it takes to solve niche problems, automated the tedious boilerplate required for compilation, and can replicate a human-defined pattern across a codebase in seconds. Used correctly, they are a massive win for the individual engineer.

However, we are biologically wired for the path of least resistance. Evolution taught us that cognitive energy is a scarce resource. If we can avoid thinking deeply about a complex architectural trade-off by pressing a "magic solve it for me" button, we usually will.

The danger is that today's models are just good enough to hide the rot. In a corporate environment driven by quarterly goals, a massive pile of technical debt scheduled for next year is often ignored in favor of the "velocity" shown on a dashboard today.

When leadership adopts "Lines of Code" as a success metric, they aren't just rewarding laziness—they are incentivizing the industrialization of bloat. We must return to the fundamental truth: The source code is not the product. The product is the Outcome the user achieves. The code is merely the expensive, high-maintenance machinery required to deliver that outcome.

If you can deliver a $1,000,000 outcome with 10 lines of code, you are a hero. If you deliver that same outcome with 37,000 lines, you have just created a $1,000,000 liability.

As we move deeper into this "agentic" era, the winners won't be the companies that burn the most tokens or generate the most files. The winners will be the ones with the discipline to measure what matters: Does the user's life get better, or did we just make our repo bigger?
