A UK datacenter successfully cut AI power consumption by up to 40% during a five-day trial, responding to National Grid signals without disrupting critical workloads.

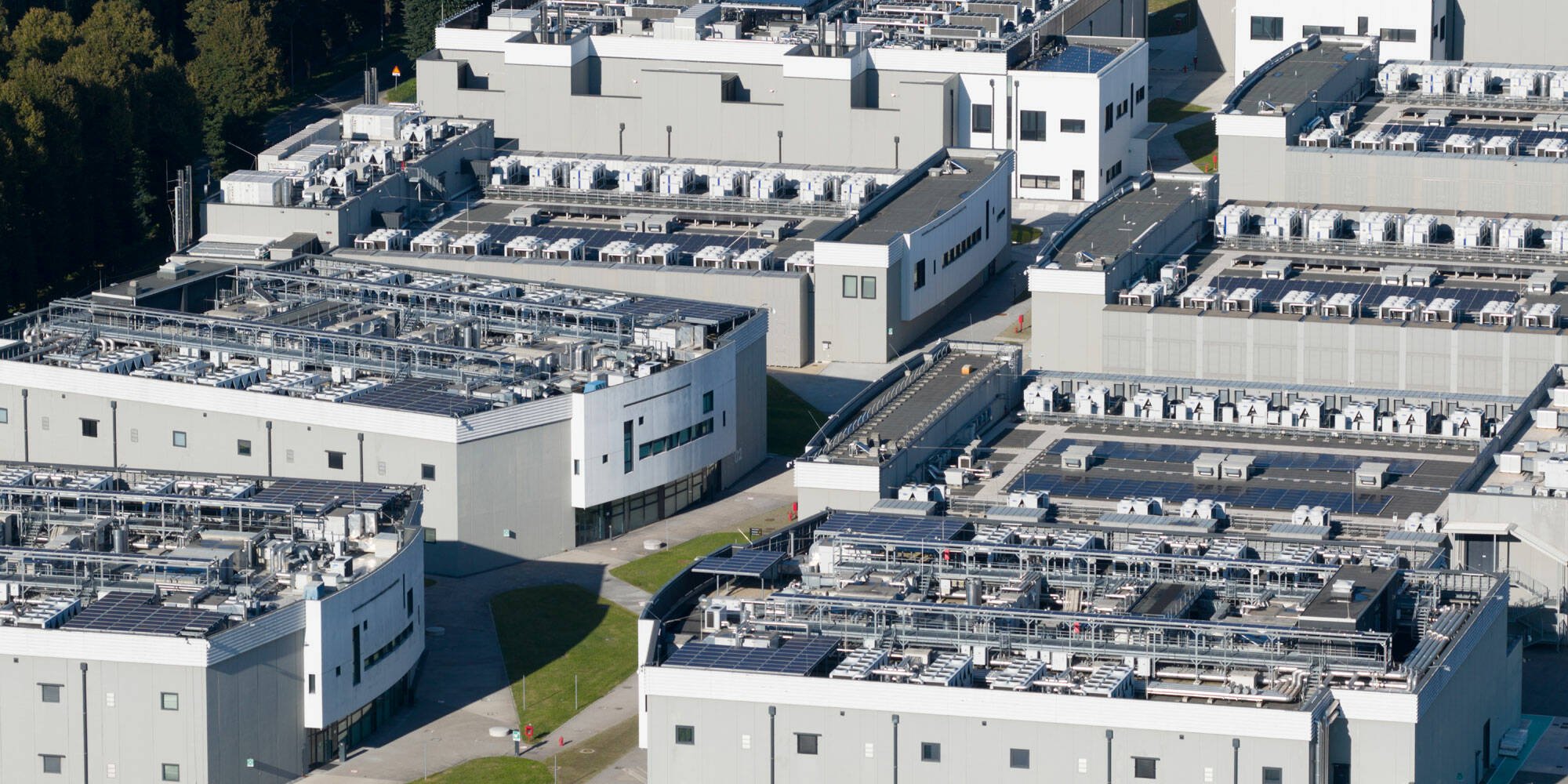

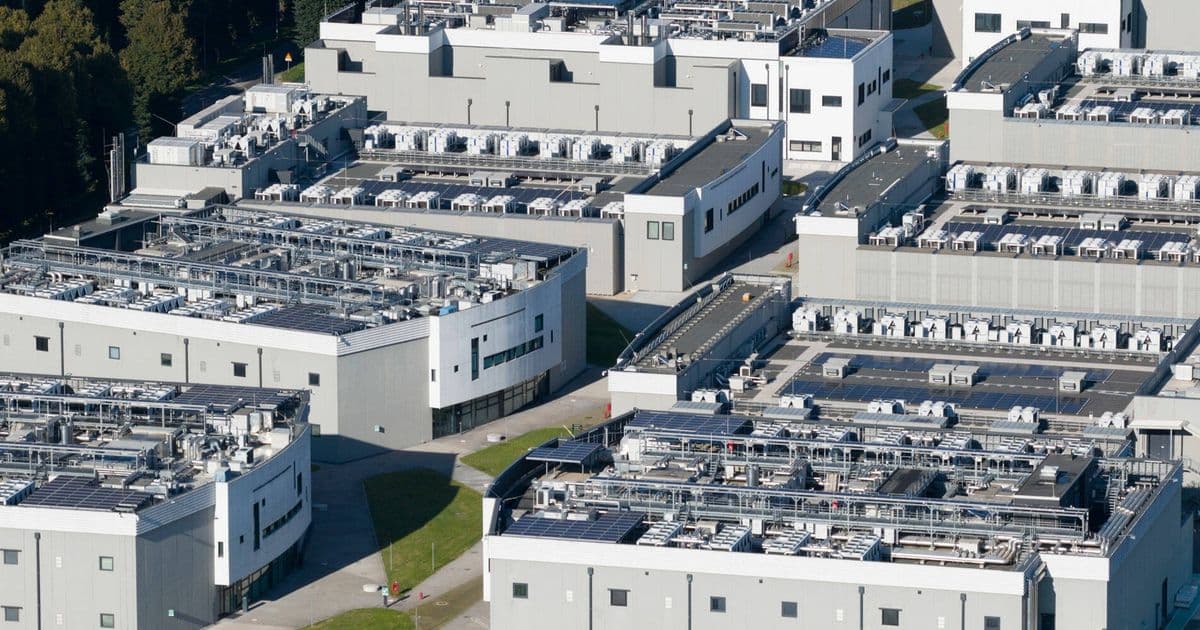

A UK datacenter has demonstrated it can reduce AI infrastructure power consumption by up to 40 percent in response to grid events, without disrupting critical workloads. The trial, conducted over five days last December, involved a cluster of Nvidia Blackwell Ultra GPUs installed in a datacenter near London operated by GPU-as-a-service provider Nebius.

The demonstration sent more than 200 simulated grid event notifications to the site to test whether it could dynamically adjust the cluster's power consumption. It did, cutting power demand by up to 40 percent while key tasks continued to run as normal, according to energy provider National Grid.

The project involved multiple partners: National Grid and Nebius operated the infrastructure, Emerald AI supplied the software that enabled the dynamic power management, and the Electric Power Research Institute (EPRI) contributed through its Datacenter Flexible Load Initiative (DCFlex).

A whitepaper provided by National Grid reveals that power control was achieved through sophisticated workload management rather than simply powering down infrastructure. The system paused or deprioritized jobs running on the GPUs, or shifted workloads to later times. This approach allowed the datacenter to maintain operational continuity while responding to grid demands.
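To make the idea concrete, here is a minimal sketch of priority-based load shedding. It is not Emerald AI's software, whose internals the article does not describe. The job names, power figures, and the `jobs_to_pause` helper are all hypothetical, and the sketch assumes a paused job draws no power, which real hardware would not quite achieve.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_kw: float  # estimated draw while running (illustrative figure)
    critical: bool   # critical jobs are never paused


def jobs_to_pause(jobs, target_kw):
    """Pick non-critical jobs to pause until total draw is at or below target_kw.

    Returns (names of paused jobs, remaining draw in kW).
    """
    total = sum(j.power_kw for j in jobs)
    paused = []
    flexible = [j for j in jobs if not j.critical]
    # Pause the largest flexible jobs first so fewer jobs are disturbed.
    for job in sorted(flexible, key=lambda j: j.power_kw, reverse=True):
        if total <= target_kw:
            break
        paused.append(job.name)
        total -= job.power_kw
    return paused, total


# Hypothetical job mix summing to 130 kW; the target is a 40 percent cut.
jobs = [
    Job("inference-api", 40.0, critical=True),
    Job("llama-finetune", 50.0, critical=False),
    Job("qwen-pretrain", 30.0, critical=False),
    Job("eval-batch", 10.0, critical=False),
]
paused, remaining_kw = jobs_to_pause(jobs, target_kw=78.0)
print(paused, remaining_kw)
```

A greedy choice like this can overshoot the requested cut. A production system would more plausibly throttle or deprioritize jobs to land closer to the target.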

Google is already implementing similar strategies in the United States, announcing last year that it would pause non-essential AI workloads to protect power grids during peak demand periods. The UK trial suggests the approach can also work with commercially representative AI workloads.

Not all AI workloads are equally sensitive to power management. While inference tasks are latency-sensitive and require immediate responses, training and fine-tuning workloads are more throughput-intensive and include natural "flex points" like checkpoint intervals where processing can be paused without data loss. The trial used commercially representative AI training workloads including gpt-oss, Llama, and Qwen models to approximate production conditions.
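The checkpoint "flex point" idea can be illustrated with a small helper that defers a pause request to the next checkpoint boundary, so no training progress is lost. This is a sketch under assumed conventions: the function name and the fixed checkpoint interval are hypothetical and not taken from the whitepaper.

```python
def next_safe_pause_step(current_step, checkpoint_every):
    """Return the first step >= current_step that falls on a checkpoint boundary."""
    remainder = current_step % checkpoint_every
    if remainder == 0:
        return current_step  # already at a checkpoint, safe to pause now
    return current_step + (checkpoint_every - remainder)


# A pause requested at step 1234, with checkpoints every 500 steps,
# would take effect at step 1500.
pause_at = next_safe_pause_step(1234, 500)
```

The trade-off is response time: the longer the checkpoint interval, the longer a job may take to reach a safe pause point after a grid signal arrives.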

For the power experiments, National Grid Electricity Transmission (NGET) and EPRI submitted grid signals through an event submission portal. These signals specified the notice period, power reduction percentage, ramp-down duration, ramp-up duration, and overall event duration. Some tests involved "surprise" signals that came with no advance notice or that required an immediate response, while others emulated real-world demand spikes like those seen when British households put the kettle on during football halftime.
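The five signal parameters map naturally onto a target power profile over time. The sketch below is one possible reading, not the portal's actual format: the `GridEvent` field names are hypothetical, ramps are assumed to be linear, and the event duration is assumed to mean the time held at the reduced level, excluding the ramps.

```python
from dataclasses import dataclass


@dataclass
class GridEvent:
    notice_s: float       # notice period before the ramp-down starts
    reduction_pct: float  # requested power reduction, in percent
    ramp_down_s: float    # time allowed to reach the reduced level
    ramp_up_s: float      # time allowed to return to baseline
    duration_s: float     # time held at the reduced level (assumption)


def target_power_kw(event, baseline_kw, t_s):
    """Target power t_s seconds after the signal is received (linear ramps assumed)."""
    floor_kw = baseline_kw * (1 - event.reduction_pct / 100)
    t = t_s - event.notice_s
    if t <= 0:
        return baseline_kw  # still inside the notice period
    if t < event.ramp_down_s:
        return baseline_kw - (baseline_kw - floor_kw) * t / event.ramp_down_s
    t -= event.ramp_down_s
    if t < event.duration_s:
        return floor_kw  # holding at the reduced level
    t -= event.duration_s
    if t < event.ramp_up_s:
        return floor_kw + (baseline_kw - floor_kw) * t / event.ramp_up_s
    return baseline_kw  # event is over


# Illustrative event: 1 minute of notice, 40 percent cut, 2-minute ramps, 10-minute hold.
event = GridEvent(notice_s=60, reduction_pct=40, ramp_down_s=120, ramp_up_s=120, duration_s=600)
profile = [target_power_kw(event, 130.0, t) for t in (0, 120, 400, 840, 2000)]
```

A "surprise" signal in this model is simply one with `notice_s` set to zero, and an immediate-response signal additionally sets `ramp_down_s` to zero.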

The tests were carried out as the Nebius "AI Factory" was being brought online, involving a 130 kW compute cluster roughly equivalent to the power consumption of 400 UK households. According to the whitepaper, the cluster achieved 100 percent compliance with all requested power targets and ramp rates.
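The figures quoted above can be sanity-checked with some quick arithmetic. The inputs come from the article; the variable names are illustrative, and the per-household figure is an average draw implied by the comparison, not a number stated in the whitepaper.

```python
cluster_kw = 130
households = 400
max_reduction_pct = 40

# Average draw per household implied by the comparison, in watts.
per_household_w = cluster_kw * 1000 / households

# Power shed at the maximum reported reduction, and what remains.
shed_kw = cluster_kw * max_reduction_pct / 100
floor_kw = cluster_kw - shed_kw

print(per_household_w, shed_kw, floor_kw)
```

That works out to 325 W per household on average, and a maximum cut of 52 kW that leaves 78 kW running.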

This success suggests these systems could help alleviate the problems caused by AI's enormous power consumption. By replacing the rigid "firm load" models of the past with measurement-based flexibility, grid operators and policymakers can create new options for delivering capacity efficiently, the report concludes.

"As the UK's digital economy accelerates, there's concern that datacenters could add pressure to an already constrained system. This trial proves the opposite can be true. High-performance datacenters don't have to place additional strain on the grid," claimed National Grid Partners president Steve Smith.

However, the achievement comes with important caveats. As previously reported, new generating capacity isn't being added at the same rate datacenters are being built, and some developers face waits of years to get grid connections and for local substations to be upgraded. The ability to flex power consumption only matters if the power can be delivered in the first place.

The trial represents a significant step toward making AI infrastructure more grid-friendly, but it also highlights how hard it is to balance technological advancement against the limits of energy infrastructure. As AI workloads continue to grow, solutions that allow datacenters to respond dynamically to grid conditions may become essential rather than optional.
