Docker has evolved from a Linux containerization tool to a cross-platform development platform, enabling developers to build, share, and run applications seamlessly across diverse environments.

Docker has become an indispensable tool in modern software development, transforming how developers build, share, and deploy applications. Since its launch in 2013, Docker has grown from a simple Linux containerization system into a comprehensive cross-platform development platform that powers everything from cloud-native applications to AI workloads.

The Problem Docker Solved

Before Docker, developers faced a persistent challenge: how to ensure applications run consistently across different environments. The traditional approach involved manually installing operating systems, managing dependencies, and configuring software stacks—a process that was time-consuming, error-prone, and difficult to reproduce.

Docker simplified this by allowing developers to package applications and all their dependencies into portable "containers" that could run anywhere Docker was installed. Unlike virtual machines, which each run a full guest operating system, Docker containers share the host OS kernel while maintaining isolation through Linux namespaces and control groups.

How Docker Works Under the Hood

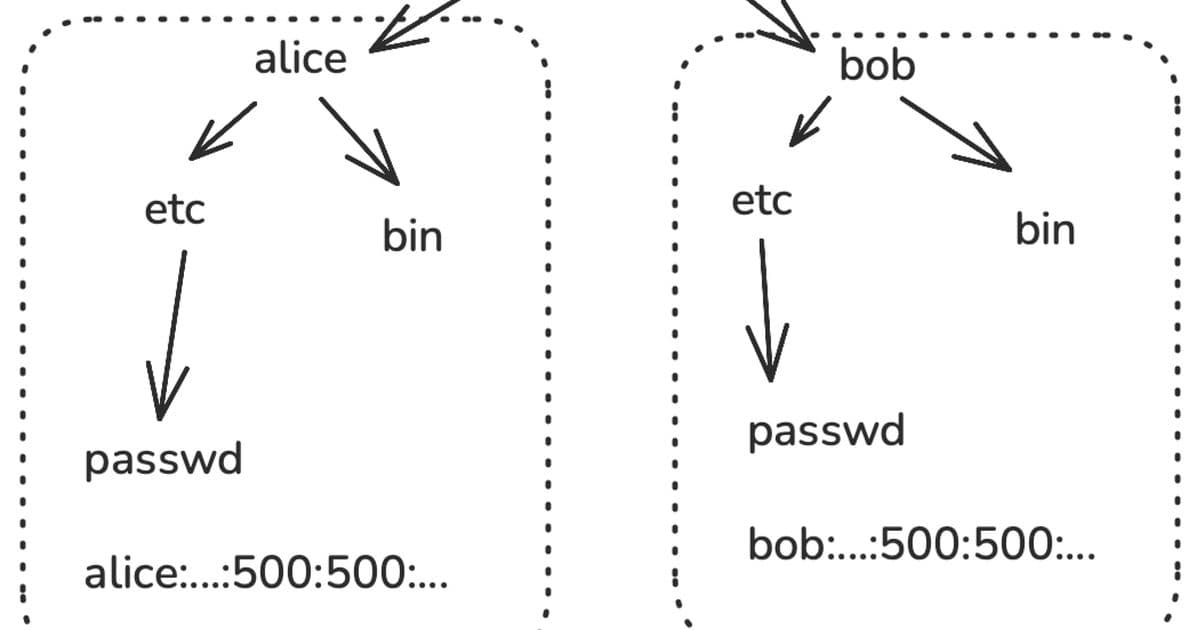

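The magic of Docker lies in its use of Linux kernel features. Namespaces provide isolation by giving each container its own view of system resources: its own filesystem mounts, network stack, process tree, and user IDs, separate from those of other containers and the host system. Control groups complement this by limiting how much CPU, memory, and I/O each container can consume. Because every container still shares the host kernel, this isolation is lighter than what a virtual machine provides.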
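To make the idea of namespaces concrete, here is a minimal sketch that lists the namespaces a process belongs to by reading the symlinks under `/proc/<pid>/ns`. It assumes a Linux host with `/proc` mounted and returns an empty result elsewhere; the helper name `namespace_ids` is purely illustrative and not part of Docker.

```python
import os


def namespace_ids(pid="self"):
    """Return {namespace_name: kernel identifier} for a process, or {} if unavailable."""
    ns_dir = f"/proc/{pid}/ns"
    ids = {}
    try:
        names = sorted(os.listdir(ns_dir))
    except OSError:
        # Not Linux, /proc not mounted, or no such process.
        return ids
    for name in names:
        try:
            # Each entry is a symlink such as "pid:[4026531836]".
            ids[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            # Entry not readable with the current privileges; skip it.
            continue
    return ids


if __name__ == "__main__":
    for name, ident in namespace_ids().items():
        print(f"{name:20} {ident}")
```

Running this once on the host and once inside a container would typically show different identifiers for entries such as `pid`, `net`, and `mnt`, which is what gives each container its own view of those resources.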

Docker builds on this with a layered filesystem architecture. When you create a Docker image using a Dockerfile, each instruction that changes the filesystem (such as RUN, COPY, or ADD) creates a new layer, while other instructions only record metadata. These layers are cached and shared across images, making builds faster and storage more efficient. The Open Container Initiative has standardized this image format, ensuring compatibility across different implementations.
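The caching and sharing of layers can be illustrated with a toy model. The sketch below is not Docker's actual algorithm: it assumes every instruction yields one layer and derives each layer's ID by hashing the instruction text together with its parent's ID, whereas the real build cache also checksums the files used by COPY and ADD. The names `layer_ids` and `build` are hypothetical.

```python
import hashlib


def layer_ids(instructions):
    """Derive a chain of layer IDs: each ID hashes the instruction plus its parent ID."""
    ids = []
    parent = ""
    for instruction in instructions:
        digest = hashlib.sha256(f"{parent}\n{instruction}".encode()).hexdigest()
        ids.append(digest)
        parent = digest
    return ids


def build(instructions, cache):
    """Return (layer IDs, number of layers reused from the cache), updating the cache."""
    ids = layer_ids(instructions)
    hits = 0
    for layer in ids:
        if layer in cache:
            hits += 1
        else:
            cache.add(layer)
    return ids, hits


# Two hypothetical images that differ only in their final instruction.
app_a = ["FROM python:3.12-slim", "RUN pip install flask", "COPY a.py /app/"]
app_b = ["FROM python:3.12-slim", "RUN pip install flask", "COPY b.py /app/"]

cache = set()
ids_a, hits_a = build(app_a, cache)
ids_b, hits_b = build(app_b, cache)
print(f"first build reused {hits_a} layers, second build reused {hits_b}")
```

Because a layer's ID depends on everything before it, changing an early instruction invalidates every later layer. This is why Dockerfiles usually place rarely changing steps first.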

Evolution Beyond Linux

The biggest challenge Docker faced was enabling development on macOS and Windows while targeting Linux for deployment. The solution was ingenious: embed a Linux virtual machine within a desktop application. On macOS this was done with a library virtual machine monitor called HyperKit, while Docker Desktop for Windows relies on Hyper-V or WSL 2.

This approach, combined with technologies like SLIRP for network translation and virtio-fs for filesystem sharing, created a seamless experience where developers could use the same Docker commands regardless of their host operating system. The architecture has proven so successful that it's now the standard way to run containers on desktop platforms.

Adapting to Modern Workloads

As computing has evolved, so has Docker. The rise of ARM processors required multi-architecture support, achieved through multi-architecture manifests, which let one image name resolve to a different build for each platform, and transparent binary translation using QEMU for images built for a foreign architecture. AI and machine learning workloads brought GPU support challenges, addressed through the Container Device Interface (CDI), which allows dynamic mounting of GPU-specific libraries and devices.
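As a rough illustration of how a multi-architecture manifest is used, the sketch below picks the entry matching a given platform from a structure shaped like an OCI image index. The digests are placeholders rather than real images, and `select_manifest` is a hypothetical helper, not a Docker API. A real client also handles variants such as `arm/v7` and falls back to emulation when no native entry exists.

```python
# A trimmed-down structure shaped like an OCI image index.
# The digests below are placeholders, not real image digests.
INDEX = {
    "schemaVersion": 2,
    "mediaType": "application/vnd.oci.image.index.v1+json",
    "manifests": [
        {
            "mediaType": "application/vnd.oci.image.manifest.v1+json",
            "digest": "sha256:" + "a" * 64,
            "platform": {"os": "linux", "architecture": "amd64"},
        },
        {
            "mediaType": "application/vnd.oci.image.manifest.v1+json",
            "digest": "sha256:" + "b" * 64,
            "platform": {"os": "linux", "architecture": "arm64"},
        },
    ],
}


def select_manifest(index, os_name, architecture):
    """Return the digest of the first manifest matching the platform, or None."""
    for entry in index.get("manifests", []):
        platform = entry.get("platform", {})
        if (
            platform.get("os") == os_name
            and platform.get("architecture") == architecture
        ):
            return entry["digest"]
    return None


if __name__ == "__main__":
    print(select_manifest(INDEX, "linux", "arm64"))
```

The same image name can therefore resolve to different binaries on an x86 server and an ARM laptop without the user changing any command.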

Security has also advanced significantly. Docker now integrates with trusted execution environments and confidential computing technologies, allowing containers to run in hardware-protected environments even when the host OS might be compromised.

The Developer Experience

Despite all the technical complexity under the hood, Docker's core workflow remains remarkably simple: write a Dockerfile, build an image, and run containers. This simplicity, combined with powerful features like volume mounting for persistent data, port forwarding for networking, and environment variable injection for configuration, has made Docker the de facto standard for containerization.
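The three features mentioned above map onto the `-v`, `-p`, and `-e` flags of `docker run`. The following sketch assembles such a command line without executing it, so it runs even where Docker is not installed. The function name `docker_run_args`, the image, and the paths are illustrative assumptions.

```python
import shlex


def docker_run_args(image, volumes=None, ports=None, env=None):
    """Build an argv list for `docker run` with volumes, ports, and environment variables."""
    args = ["docker", "run", "--rm"]
    # -v host_path:container_path mounts persistent data into the container.
    for host_path, container_path in (volumes or {}).items():
        args += ["-v", f"{host_path}:{container_path}"]
    # -p host_port:container_port forwards a host port to the container.
    for host_port, container_port in (ports or {}).items():
        args += ["-p", f"{host_port}:{container_port}"]
    # -e KEY=VALUE injects configuration as environment variables.
    for key, value in (env or {}).items():
        args += ["-e", f"{key}={value}"]
    args.append(image)
    return args


if __name__ == "__main__":
    argv = docker_run_args(
        "nginx:latest",
        volumes={"/srv/site": "/usr/share/nginx/html"},
        ports={8080: 80},
        env={"TZ": "UTC"},
    )
    print(shlex.join(argv))
```

Passing the resulting list to `subprocess.run` would start the container on a machine with Docker installed. Building the arguments as a list rather than a single string avoids shell-quoting problems.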

Docker Hub hosts over 14 million images and serves billions of pulls monthly, demonstrating the massive scale of the ecosystem. From small startups to large enterprises, developers rely on Docker to streamline their workflows and ensure consistent deployments.

Looking Forward

As we move into an era of AI-driven development and heterogeneous computing, Docker continues to evolve. The platform now supports edge computing scenarios, integrates with AI development tools, and provides the isolation needed for multi-tenant cloud environments.

What started as a solution to dependency hell has become the foundation of modern cloud-native architecture, enabling technologies like Kubernetes and shaping how we think about software deployment. Docker's success lies not just in its technical innovation, but in its ability to make complex systems accessible to developers worldwide.

The future of Docker is about maintaining that accessibility while adding the capabilities needed for emerging workloads—whether that's running containers on spacecraft, managing AI model deployments, or securing applications in confidential computing environments. The goal remains the same: help developers ship code faster and with greater confidence, regardless of where it needs to run.
