Early adopters report running ROCm successfully on AMD's Strix Halo platform, where up to 128GB of memory can be shared dynamically between CPU and GPU, potentially democratizing high-performance AI computing.

AMD's ROCm platform continues to gain traction among developers and researchers, with early adopters reporting success configuring memory sharing on Strix Halo hardware. The setup lets system memory be divided flexibly between CPU and GPU, addressing a key bottleneck in AI and machine learning workflows.

Strix Halo allows up to 128GB of memory to be dynamically shared between the CPU and GPU, a significant advantage for workloads that require large memory pools. Unlike discrete GPUs, whose memory is fixed and separate from system memory, Strix Halo draws GPU memory from the same physical pool as the CPU, so the split can be adjusted to workload demands.

"The CPU is not able to use the GPU reserved memory. The GPU can use the total of Reserved + GTT, but utilizing both simultaneously can be less efficient than a single large GTT pool due to fragmentation and addressing overhead," noted one early implementer in a technical blog post.

Setting up the technology requires careful configuration, including BIOS updates and specific kernel parameters. Users report success with Ubuntu 24.04 LTS, following AMD's official installation guidelines. The BIOS must be updated to enable GPU functionality, and reserved video memory should be set to a low value (as low as 512MB) to allow maximum memory sharing through the Graphics Translation Table (GTT).
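One way to confirm that the reserved-memory and GTT settings took effect is to read the totals the amdgpu driver exposes through sysfs. The following is a minimal sketch, assuming the GPU appears as `card0` (the index can differ per system) and that the driver provides the `mem_info_vram_total` and `mem_info_gtt_total` files, which report sizes in bytes. The function name is illustrative and not taken from the blog post.

```python
from pathlib import Path

GIB = 1024 ** 3


def read_amdgpu_memory(device_dir: str = "/sys/class/drm/card0/device") -> dict:
    """Return reserved VRAM and GTT totals in GiB, for whichever files exist.

    Assumption: the GPU is card0. Pass another directory if it is not.
    """
    base = Path(device_dir)
    totals = {}
    for key in ("vram_total", "gtt_total"):
        info_file = base / f"mem_info_{key}"
        if info_file.exists():
            # The driver reports these values in bytes.
            totals[f"{key}_gib"] = int(info_file.read_text().strip()) / GIB
    return totals


if __name__ == "__main__":
    for name, value in read_amdgpu_memory().items():
        print(f"{name}: {value:.1f}")
```

With reserved video memory set to 512MB, the reported VRAM total should be small while the GTT total reflects the much larger shared pool.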

"I was able to play with PyTorch and run Qwen3.6 on llama.cpp with a large context window," the blogger reported. "There were some rough edges, but I think it was quite worth it."

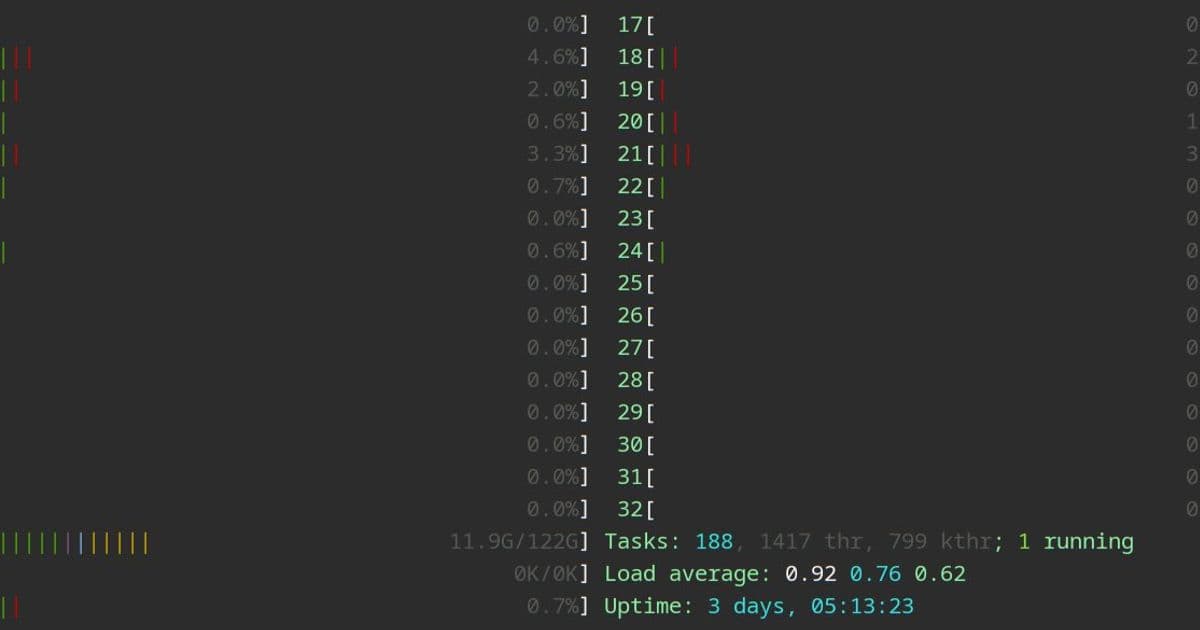

The setup involves adding kernel parameters such as `ttm.pages_limit=32768000` and `amdgpu.gttsize=114688` to the GRUB configuration, which raises the amount of system memory the GPU is allowed to map through GTT. Users should leave 4-12GB of memory reserved for the CPU to maintain system stability.
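The two parameters use different units, which makes them easy to misread. The sketch below shows the arithmetic, assuming `ttm.pages_limit` counts 4 KiB pages (the x86-64 default page size) and `amdgpu.gttsize` is given in MiB. The helper names are illustrative and do not come from the blog post.

```python
PAGE_SIZE_BYTES = 4096  # assumption: 4 KiB pages
MIB = 1024 ** 2
GIB = 1024 ** 3


def gtt_kernel_params(gtt_gib: int) -> str:
    """Build the kernel parameter string for a GTT pool of gtt_gib GiB."""
    gtt_bytes = gtt_gib * GIB
    pages_limit = gtt_bytes // PAGE_SIZE_BYTES  # size expressed in pages
    gttsize_mib = gtt_bytes // MIB              # size expressed in MiB
    return f"ttm.pages_limit={pages_limit} amdgpu.gttsize={gttsize_mib}"


def decode_params(pages_limit: int, gttsize_mib: int) -> tuple:
    """Convert both parameters back to GiB for a sanity check."""
    return (pages_limit * PAGE_SIZE_BYTES / GIB, gttsize_mib * MIB / GIB)


if __name__ == "__main__":
    print(gtt_kernel_params(112))
    print(decode_params(32768000, 114688))
```

Under these assumptions, the values quoted above decode to a page limit of 125 GiB and a GTT size of 112 GiB.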

For AI development, PyTorch can be installed with the uv package manager using ROCm-specific builds. The blogger provided a working configuration that includes PyTorch 2.11.0 with ROCm 7.2 support and the necessary Triton dependencies.
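After installation, a quick check can confirm that the ROCm build of PyTorch sees the GPU and how much memory it reports. This is a minimal sketch rather than the blogger's own script. It relies on the fact that ROCm builds of PyTorch expose the GPU through the `torch.cuda` namespace, and it returns early if PyTorch is not installed.

```python
def rocm_report() -> dict:
    """Collect basic facts about the installed PyTorch build and GPU."""
    try:
        import torch
    except ImportError:
        return {"torch_installed": False}

    report = {
        "torch_installed": True,
        "torch_version": torch.__version__,
        "hip_version": torch.version.hip,  # None on non-ROCm builds
        "gpu_available": torch.cuda.is_available(),
    }
    if report["gpu_available"]:
        # mem_get_info returns (free_bytes, total_bytes) for the current device.
        free_bytes, total_bytes = torch.cuda.mem_get_info()
        report["device_name"] = torch.cuda.get_device_name(0)
        report["free_gib"] = round(free_bytes / 1024 ** 3, 1)
        report["total_gib"] = round(total_bytes / 1024 ** 3, 1)
    return report


if __name__ == "__main__":
    for key, value in rocm_report().items():
        print(f"{key}: {value}")
```

If `hip_version` is `None`, the installed wheel is not a ROCm build, which usually points to the wrong package index having been used.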

Running large language models becomes more feasible with this memory sharing approach. The blogger demonstrated running Qwen3.6 through llama.cpp in a Podman container, with a context window of 327,680 tokens. The configuration enables flash attention and disables memory mapping of the model file for better performance.
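The options described above map onto llama.cpp command-line flags. The sketch below assembles such a command as an argument list. It is not the blogger's exact invocation: the model path is hypothetical, the Podman wrapper is omitted, and the `--flash-attn on` form assumes a recent llama.cpp build (older builds take `--flash-attn` as a bare flag).

```python
import shlex


def llama_server_args(model_path: str, ctx_size: int = 327680,
                      flash_attn: bool = True, use_mmap: bool = False) -> list:
    """Build an argument list for llama-server with the options discussed."""
    args = ["llama-server", "--model", model_path, "--ctx-size", str(ctx_size)]
    if flash_attn:
        # Assumption: recent builds accept on/off/auto as the value here.
        args += ["--flash-attn", "on"]
    if not use_mmap:
        # Load the model into memory instead of memory-mapping the file.
        args.append("--no-mmap")
    return args


if __name__ == "__main__":
    # Hypothetical model path, for illustration only.
    print(shlex.join(llama_server_args("/models/model.gguf")))
```

Returning a list rather than a single string keeps the arguments safe to pass to `subprocess.run` without shell quoting issues.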

"Some legacy games or software sadly might see the GPU memory as 512MB and refuse to work, this has not happened to me so far though," the blogger noted, acknowledging potential compatibility challenges.

The integration with development environments like Openade further extends the utility of this technology, allowing developers to work with local AI models more efficiently.

As AMD continues to develop ROCm and technologies like Strix Halo, the company appears to be positioning itself as a viable alternative to NVIDIA in the AI/ML space. The ability to leverage existing system memory more efficiently could lower barriers to entry for high-performance computing, particularly for researchers and developers working with large models.

The implementation challenges suggest that while the technology is promising, it still requires technical expertise to deploy effectively. As the platform matures, we may see more streamlined installation processes and improved compatibility with existing software ecosystems.

For developers interested in exploring ROCm and Strix Halo, AMD's official documentation provides installation instructions, while the ROCm GitHub repository offers source code and community support.
