Anthropic faces mounting challenges as Chinese AI models dominate market share while the company's strict safety measures alienate security researchers and drive users to cheaper alternatives.

Anthropic, the AI company behind Claude, is rushing toward a public offering as early as Q4 2026, but faces mounting headwinds from Chinese competition, financial pressures, and its own safety-focused approach that may be alienating key users.

Financial Pressure Mounts

The company's financial picture remains troubling. Despite raising $30 billion in capital, Anthropic has generated only $5 billion in revenue while spending $10 billion on inference and training alone, according to a legal filing by CFO Krishna Rao earlier this month. Recent cost-saving measures designed to reduce token demand during peak hours have done little to inspire confidence among investors.

Chinese Competition Gains Ground

A recent US-China Economic and Security Review Commission report highlights how Chinese AI labs have narrowed performance gaps with Western models and developed key architectural advances that are now industry standards. This assessment is reflected in market share data from LLM Rankings, which tracks model popularity on OpenRouter.

Currently, the top six positions are occupied by Chinese companies: MiMo-V2-Pro (Xiaomi), Step 3.5 Flash (stepfun), DeepSeek V3.2 (DeepSeek), MiniMax M2.7 (MiniMax), MiniMax M2.5 (MiniMax), and GLM 5 Turbo (z.ai). Anthropic's Claude Opus 4.6 and Claude Sonnet 4.6 sit at seventh and eighth place.

More concerning for Anthropic is the sharp decline in its market share, from 29.1 percent on March 22, 2025 to just 13.3 percent on March 21, 2026. Cost comparisons show the severity of the challenge: when Kilo Code compared Claude Opus 4.6 to MiniMax M2.7, it found MiniMax delivered 90 percent of the quality for just 7 percent of the cost ($0.27 versus $3.67).
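
For readers who want to check the arithmetic behind these figures, here is a minimal sketch in Python. It uses only the numbers quoted above; the variable names are illustrative, and it assumes the two dollar amounts are costs for the same workload, which the comparison implies but does not spell out.

```python
# Figures as quoted in the article; variable names are illustrative only.
minimax_cost_usd = 0.27   # MiniMax M2.7 cost in the Kilo Code comparison
claude_cost_usd = 3.67    # Claude Opus cost in the same comparison
share_2025_pct = 29.1     # Anthropic share on March 22, 2025
share_2026_pct = 13.3     # Anthropic share on March 21, 2026

# MiniMax cost expressed as a percentage of the Claude cost.
cost_ratio_pct = minimax_cost_usd / claude_cost_usd * 100

# Absolute drop in percentage points, and the drop relative to the starting share.
share_drop_points = share_2025_pct - share_2026_pct
share_drop_relative_pct = share_drop_points / share_2025_pct * 100

print(f"MiniMax cost as a share of Claude cost: {cost_ratio_pct:.1f}%")
print(f"Market share drop: {share_drop_points:.1f} percentage points")
print(f"Relative decline: {share_drop_relative_pct:.1f}%")
```

The quoted "7 percent" matches the cost ratio of roughly 7.4 percent, and the 15.8-point fall means Anthropic lost a little over half of the share it held a year earlier.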

Safety Measures Backfire

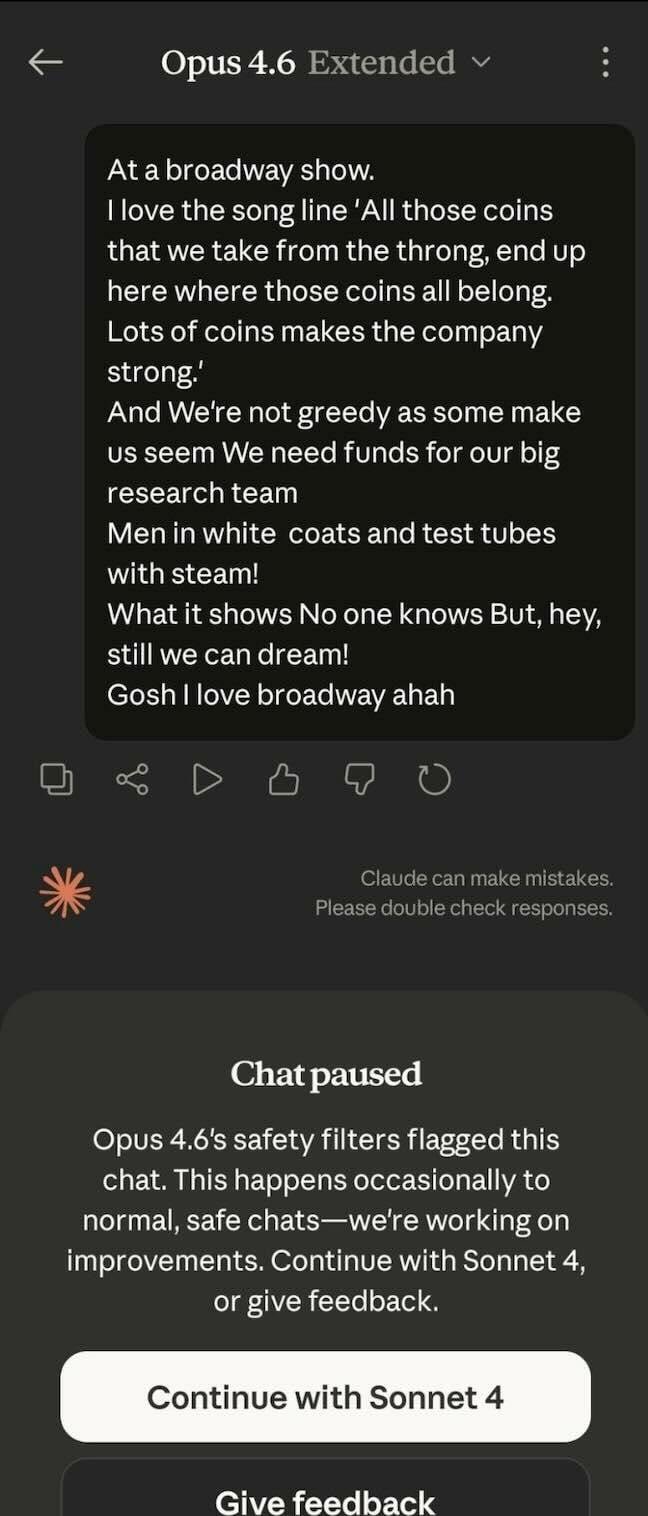

Anthropic's commitment to safety, while central to its brand identity, appears to be creating problems. The company recently added new cyber safeguards for Claude Opus 4.6, designed to automatically detect and block requests that may indicate prohibited cybersecurity usage under its Usage Policy.

However, these guardrails are causing significant issues for security researchers. Multiple security professionals have reported that Claude has become "very, very, very heavily censored," with one researcher noting the CBRN (Chemical, Biological, Radiological, and Nuclear) blocker has been "cranked way up," triggering numerous false positives.

To illustrate the model's hypersensitivity, researchers provided a screenshot showing Claude Opus flagging a discussion about the Tony Award-winning musical Urinetown as unsafe content.

Anthropic acknowledges these issues, conceding that "in some cases, these guardrails may also block dual-use cybersecurity activities with legitimate defensive purposes, such as vulnerability discovery." The company offers a form for security professionals to petition for exemptions, but sources report that not everyone who applies gets cleared, and the process is time-consuming.

Exodus of Security Researchers

Several security researchers have abandoned Claude recently. One researcher who requested anonymity reported knowing around seven people who have cancelled their subscriptions due to increased refusal rates for security and vulnerability work. Another described how Claude not only refuses to answer questions but actively avoids topics and attempts to steer conversations away from certain subjects even in research contexts.

A third researcher revealed they've switched to MiniMax, describing it as "a distilled version of Claude" that is "cheap and as good as, if not better, than Claude's best models right now." This sentiment reflects a broader trend where users prioritize functionality and cost over the origin of the model.

The Path Forward

As Anthropic prepares for its public offering, it faces a complex challenge: maintaining its safety-focused brand identity while addressing the needs of security researchers and competing with significantly cheaper alternatives from China. The company's appeal for "a coordinated response across the AI industry, cloud providers, and policymakers" to address intellectual property concerns may not be enough to sustain the pricing needed to reach positive cash flow.

With some users already going elsewhere, Anthropic's rush to go public may be complicated by the very safety measures that helped build its reputation, as well as the aggressive pricing and improving quality of Chinese competitors.

The question remains whether Anthropic can balance its safety commitments with the practical needs of its user base and the harsh realities of market competition before its planned public offering.
