Simon Willison's Claude Token Counter tool now lets users compare token counts across different Claude models, revealing that Opus 4.7's updated tokenizer can raise costs by more than 40% despite identical per-token pricing.

When Anthropic released Claude Opus 4.7, it also changed the tokenizer, a move that could significantly affect how developers budget for their AI applications.

The Tokenizer Change That Changes Everything

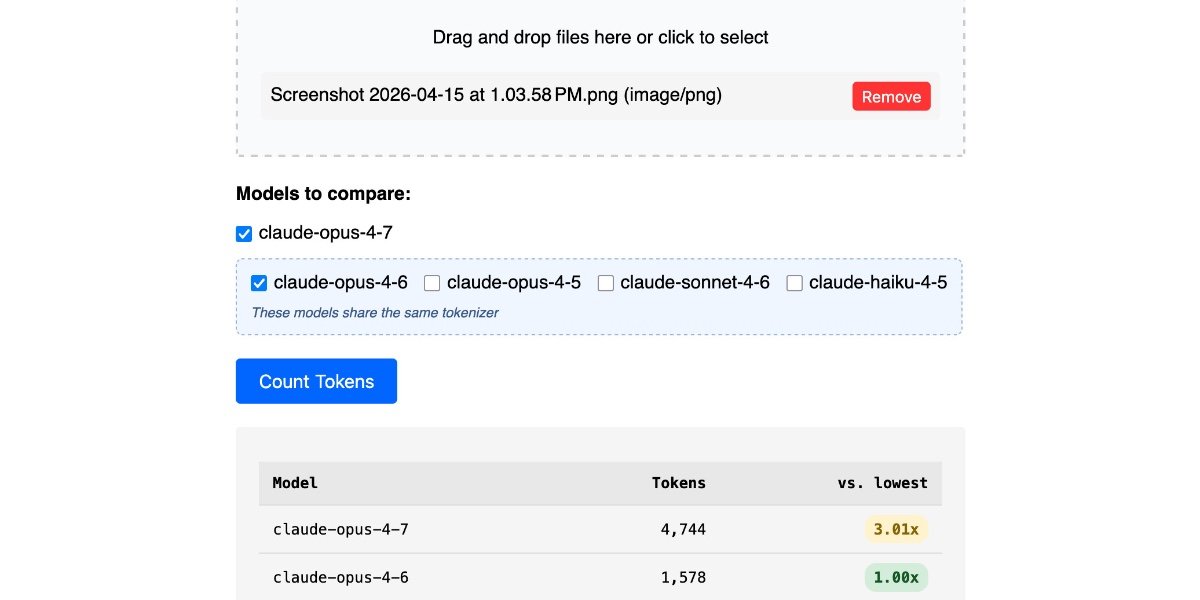

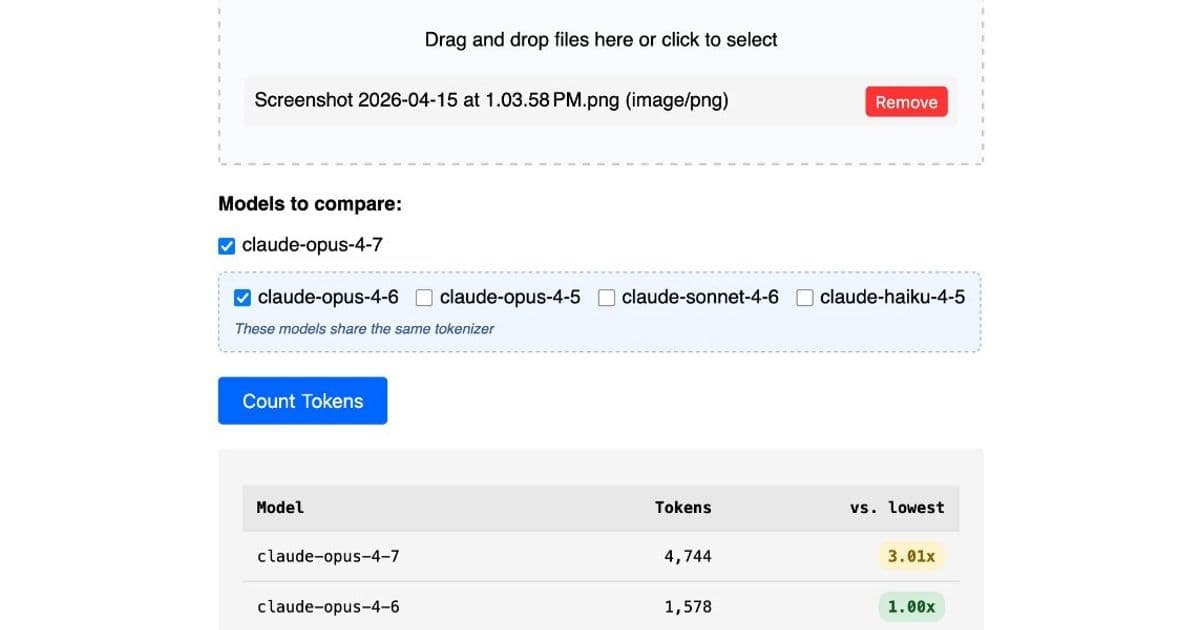

The token counting API accepts any Claude model ID, so Willison's tool offers all four notable current models: Opus 4.7, Opus 4.6, Sonnet 4.6, and Haiku 4.5. Comparing Opus 4.7 with Opus 4.6 reveals a stark difference.
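This is not Willison's code. It is a minimal Python sketch of how the same cross-model comparison could be scripted, assuming a recent version of the official `anthropic` SDK and its `messages.count_tokens` method. The model ID strings are assumptions and should be checked against Anthropic's current model list. The counting function is passed in as a parameter so the comparison logic can be exercised without an API key.

```python
from typing import Callable, Optional, Sequence

# Assumed model IDs; confirm the exact strings against Anthropic's model list.
MODELS = ["claude-opus-4-7", "claude-opus-4-6", "claude-sonnet-4-6", "claude-haiku-4-5"]


def anthropic_count(model: str, text: str) -> int:
    """Ask the token counting endpoint how many input tokens `text` uses on `model`."""
    import anthropic  # imported lazily so the comparison logic works without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    result = client.messages.count_tokens(
        model=model,
        messages=[{"role": "user", "content": text}],
    )
    return result.input_tokens


def compare_token_counts(
    text: str,
    models: Sequence[str],
    count_fn: Callable[[str, str], int] = anthropic_count,
    baseline: Optional[str] = None,
) -> dict[str, tuple[int, float]]:
    """Return {model: (token_count, ratio_vs_baseline)} for the same input text.

    The baseline defaults to the first model in `models`.
    """
    counts = {model: count_fn(model, text) for model in models}
    base = counts[baseline if baseline is not None else models[0]]
    return {model: (count, count / base) for model, count in counts.items()}
```

For example, `compare_token_counts(prompt_text, MODELS, baseline="claude-opus-4-6")` would return each model's token count alongside its ratio to Opus 4.6, which is the kind of figure the 1.46x result below expresses.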

When Willison pasted the Opus 4.7 system prompt into the tool, the result was eye-opening: Opus 4.7's tokenizer produced 1.46 times as many tokens as Opus 4.6's. Because Anthropic kept the same pricing, $5 per million input tokens and $25 per million output tokens, processing that same prompt on Opus 4.7 costs about 46% more.
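The arithmetic behind that figure is simple: at an unchanged per-token price, cost scales linearly with token count, so a 1.46x token ratio means 46% more spent on the same input. The short Python sketch below shows this for a hypothetical prompt that tokenizes to 10,000 tokens on Opus 4.6; the prompt size is illustrative, and the 1.46 ratio is the one Willison measured on a single prompt, not a universal constant.

```python
# List prices quoted for both Opus 4.6 and Opus 4.7 (USD per million tokens).
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def request_cost(input_tokens: int, output_tokens: int = 0) -> float:
    """Dollar cost of one request at the list prices above."""
    return (input_tokens * INPUT_PRICE_PER_MTOK + output_tokens * OUTPUT_PRICE_PER_MTOK) / 1_000_000


def percent_increase(old: float, new: float) -> float:
    """Relative change from `old` to `new`, in percent."""
    return (new / old - 1) * 100


# Hypothetical prompt that tokenizes to 10,000 tokens on Opus 4.6.
old_tokens = 10_000
new_tokens = round(old_tokens * 1.46)  # ratio Willison measured on one prompt

old_cost = request_cost(old_tokens)
new_cost = request_cost(new_tokens)
print(f"Opus 4.6: ${old_cost:.4f}  Opus 4.7: ${new_cost:.4f}  (+{percent_increase(old_cost, new_cost):.0f}%)")
```

This prints `Opus 4.6: $0.0500  Opus 4.7: $0.0730  (+46%)`. The per-token price never changes; only the token count does.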

Anthropic had warned about this tradeoff in its announcement, noting that "the same input can map to more tokens—roughly 1.0–1.35× depending on the content type." Willison's 1.46x result exceeds the top of that range, suggesting the real-world impact may be higher still for some inputs.

Image Processing Gets a Resolution Boost

Opus 4.7 also brings improved image support, accepting images up to 2,576 pixels on the long edge (approximately 3.75 megapixels), more than three times the resolution of prior Claude models. The higher resolution has its own token implications.

Willison tested a 3,456×2,234 pixel, 3.7MB PNG and found a dramatic 3.01x increase in token count for Opus 4.7 compared to Opus 4.6. He later determined that this was entirely due to the higher resolution support rather than the tokenizer: with a smaller 682×318 pixel image, the counts were effectively identical, 314 tokens with Opus 4.7 versus 310 with Opus 4.6.
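Why only the large image changed can be illustrated with simple downscaling arithmetic. The Python sketch below is an approximation under stated assumptions, not Anthropic's actual algorithm: it assumes images are scaled down (preserving aspect ratio) until they fit a long-edge limit and a total-pixel limit, and that an image costs roughly (width × height) / 750 tokens, the estimate Anthropic's vision documentation gives for earlier models. The earlier limits used here (1,568 pixels on the long edge, about 1.15 megapixels) also come from that documentation; whether the same formula holds for Opus 4.7 is an assumption. The estimate ignores message overhead, so it will not match counted totals exactly.

```python
import math

# Assumed limits as (max long edge in px, max total pixels). Opus 4.7 values come
# from the article; the earlier values are from Anthropic's vision docs for
# previous models and may have changed.
PREVIOUS_LIMITS = (1568, 1_150_000)
OPUS_4_7_LIMITS = (2576, 3_750_000)


def fit_within(width: int, height: int, max_long_edge: int, max_pixels: int) -> tuple[float, float]:
    """Scale an image down (never up), preserving aspect ratio, until it fits both limits."""
    scale = min(
        1.0,
        max_long_edge / max(width, height),
        math.sqrt(max_pixels / (width * height)),
    )
    return width * scale, height * scale


def estimate_image_tokens(width: int, height: int, limits: tuple[int, int]) -> int:
    """Rough estimate: (width * height) / 750 after resizing, rounded up."""
    w, h = fit_within(width, height, *limits)
    return math.ceil(w * h / 750)


for w, h in [(3456, 2234), (682, 318)]:
    old = estimate_image_tokens(w, h, PREVIOUS_LIMITS)
    new = estimate_image_tokens(w, h, OPUS_4_7_LIMITS)
    print(f"{w}x{h}: ~{old} -> ~{new} tokens ({new / old:.2f}x)")
```

Under these assumptions the small image fits both sets of limits and gets the same estimate, while the large image is downscaled far less aggressively under the Opus 4.7 limits, giving a ratio of a little over 3x. That is in the same ballpark as the 3.01x Willison measured.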

Why This Matters for Developers

These findings highlight a crucial consideration for developers building on Claude: model upgrades don't always mean better value. The tokenizer change in Opus 4.7 shows that an unchanged per-token price does not guarantee an unchanged bill, because if the same input maps to more tokens, every request that sends it costs more.

Comparing token counts across models is therefore essential for cost optimization. With real counts for their own prompts, developers can make informed decisions about which model version best balances performance needs against budget constraints.

Willison's tool adds transparency where pricing can be opaque: the per-token price is published, but how many tokens a given input will consume is not obvious until it is measured. As models continue to evolve, that visibility will matter more for managing AI infrastructure costs.

For teams running large-scale applications or processing high volumes of text and images, these token count differences can add up to significant budget changes over time. The roughly 46% increase Willison measured on a text prompt, and the roughly 3x increase for large images that Opus 4.7 now processes at higher resolution, are not trivial considerations when planning AI deployments.
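One way to turn per-request ratios into a budget figure is to scale each content type in a workload by its own measured token ratio. The Python sketch below does this for a hypothetical workload of 800 million text tokens and 200 million image tokens per month, as counted on the older model. It uses Willison's 1.46x text ratio and a 1.0x image ratio, which matches his small-image result and assumes the images already fit within the earlier resolution limits. All of these inputs are illustrative; a real projection would use ratios measured on the team's own prompts and images.

```python
from dataclasses import dataclass

INPUT_PRICE_PER_MTOK = 5.00  # USD; same list price for Opus 4.6 and Opus 4.7


@dataclass
class MonthlyWorkload:
    """Hypothetical monthly input volume, counted with the old model's tokenizer."""
    text_tokens: int
    image_tokens: int


def projected_input_cost(workload: MonthlyWorkload, text_ratio: float, image_ratio: float) -> float:
    """Monthly input cost after migration, scaling each content type by its own token ratio."""
    new_tokens = workload.text_tokens * text_ratio + workload.image_tokens * image_ratio
    return new_tokens * INPUT_PRICE_PER_MTOK / 1_000_000


workload = MonthlyWorkload(text_tokens=800_000_000, image_tokens=200_000_000)
before = projected_input_cost(workload, text_ratio=1.0, image_ratio=1.0)
after = projected_input_cost(workload, text_ratio=1.46, image_ratio=1.0)
print(f"before: ${before:,.0f}/month, after: ${after:,.0f}/month")
```

With these inputs the projection goes from $5,000 to $6,840 per month, a 36.8% increase rather than 46%, because only the text share of the workload is affected. Keeping the ratios separate makes it easy to see which content type drives the change.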

The Claude Token Counter update is a practical response to these changes, letting developers measure the impact on their own prompts and images before they migrate.
