Meta reports 4x higher bug detection rates using Just-in-Time testing that dynamically generates tests during code review, addressing the maintenance challenges of traditional test suites in AI-assisted development environments.

Meta has reported improved software quality using a Just-in-Time (JiT) testing approach that dynamically generates tests during code review instead of relying on long-lived, manually maintained test suites. According to Meta's engineering blog and accompanying research, the approach improves bug detection by approximately 4x in AI-assisted development environments.

The shift is driven by agentic workflows where AI systems increasingly generate or modify large portions of code. In this environment, traditional test suites face higher maintenance overhead and reduced effectiveness, as brittle assertions and outdated coverage struggle to keep up with rapid changes.

As Ankit K., ICT Systems Test Engineer, observes: "AI generating code and tests faster than humans can maintain them makes JiT testing almost inevitable."

How Just-in-Time Testing Works

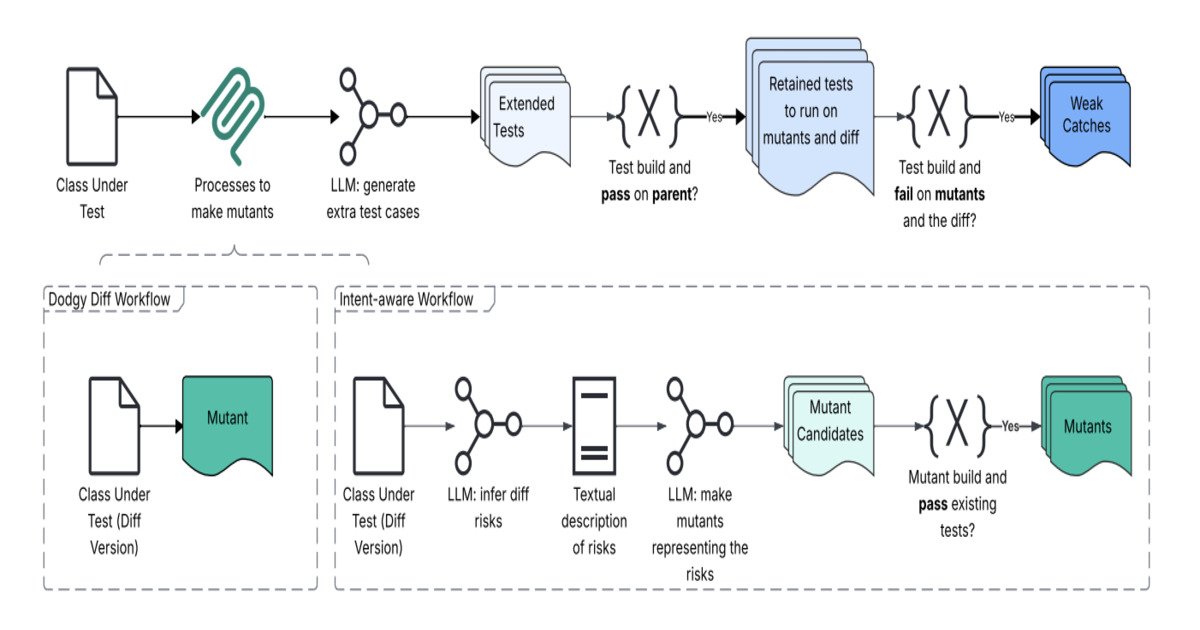

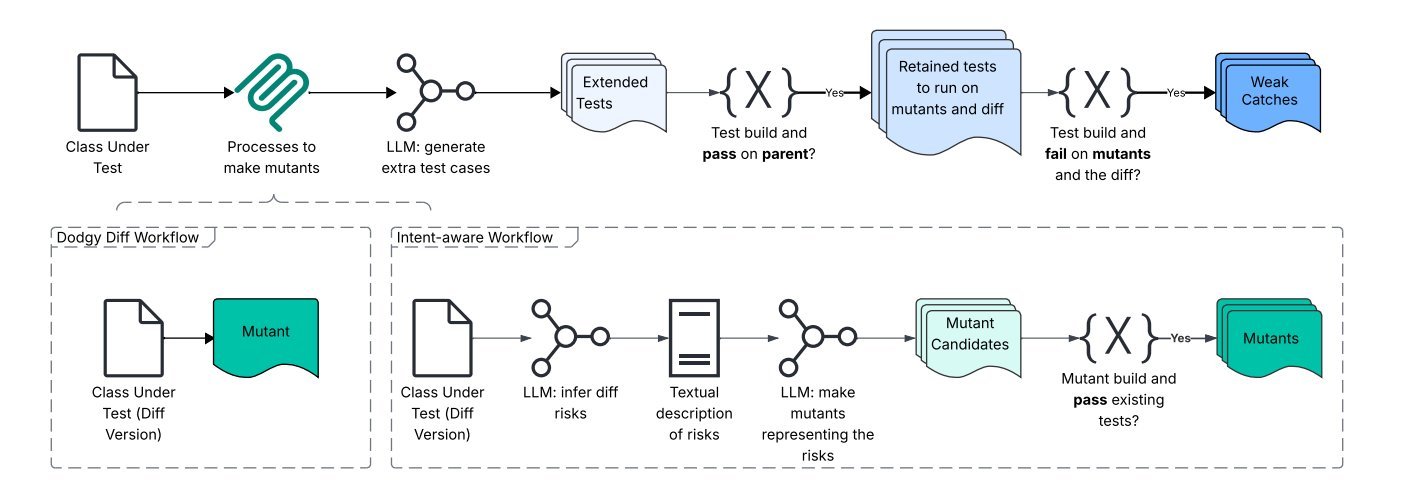

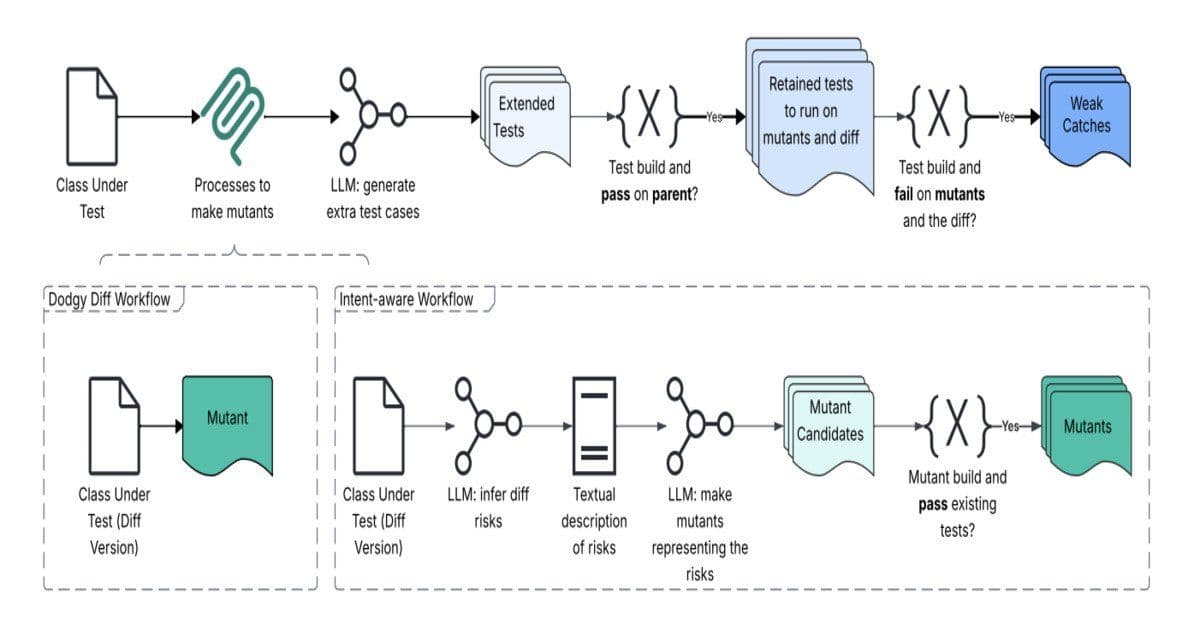

JiT testing addresses these maintenance problems by generating tests at pull request time, based on the specific code diff. Rather than validating against a static suite, the system infers developer intent, identifies potential failure modes, and constructs targeted tests designed to fail when regressions exist.

The system specifically looks for regression-catching tests: tests that pass on the parent revision but fail on the proposed change. It finds them through a pipeline that combines large language models, program analysis, and mutation testing, in which synthetic defects are injected to check whether the generated tests detect them.

As Mark Harman, Research Scientist at Meta, notes: "This work represents a fundamental shift from 'hardening' tests that pass today to 'catching' tests that find tomorrow's bugs."

The Dodgy Diff Architecture

A key component is the Dodgy Diff and intent-aware workflow architecture, which reframes a code change as a semantic signal rather than a textual diff. The system analyzes the diff to extract behavioral intent and risk areas, then performs intent reconstruction and change-risk modeling to understand what could break as a result.

These signals feed into a mutation engine that generates dodgy variants of the code, simulating realistic failure scenarios. An LLM-based test synthesis layer then generates tests aligned with inferred intent, followed by filtering to remove noisy or low-value tests before surfacing results in the pull request.
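The mutate, synthesize, and filter stages described above can be sketched as a toy Python pipeline. This is an illustration under stated assumptions, not Meta's system: the mutants are hand-written, the candidate tests stand in for LLM output, and the filter rule (keep tests that pass on the original code and fail on at least one mutant) is one plausible reading of "removing noisy or low-value tests". All names are hypothetical.

```python
from typing import Callable, Dict, List

Impl = Callable[[int], bool]
Test = Callable[[Impl], bool]


def is_adult(age: int) -> bool:
    """Original code under test."""
    return age >= 18


# Hand-written "dodgy" variants standing in for a mutation engine.
MUTANTS: Dict[str, Impl] = {
    "boundary_shifted": lambda age: age > 18,
    "comparison_flipped": lambda age: age <= 18,
    "threshold_changed": lambda age: age >= 21,
}

# Candidate tests standing in for the LLM-based synthesis layer.
CANDIDATES: Dict[str, Test] = {
    "boundary_18": lambda impl: impl(18) is True,
    "clearly_adult": lambda impl: impl(40) is True,
    "returns_bool": lambda impl: isinstance(impl(5), bool),
    "wrong_expectation": lambda impl: impl(17) is True,
}


def killed_mutants(test: Test, mutants: Dict[str, Impl]) -> List[str]:
    """Names of the mutants on which the test fails, i.e. the ones it detects."""
    return sorted(name for name, mutant in mutants.items() if not test(mutant))


def filter_tests(original: Impl,
                 mutants: Dict[str, Impl],
                 candidates: Dict[str, Test]) -> Dict[str, List[str]]:
    """Keep tests that pass on the original code and detect at least one mutant."""
    kept: Dict[str, List[str]] = {}
    for name, test in candidates.items():
        if not test(original):
            continue  # fails on unmodified code: treated as noise
        kills = killed_mutants(test, mutants)
        if kills:  # a test that detects no mutant is treated as low value
            kept[name] = kills
    return kept


print(filter_tests(is_adult, MUTANTS, CANDIDATES))
```

In this toy run, `returns_bool` is dropped because it detects no mutant and `wrong_expectation` is dropped because it fails on the original code. The two surviving tests are the ones that exercise behavior a realistic defect would change.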

Results and Impact

Meta reports that the system was evaluated on over 22,000 generated tests. Results show a 4x improvement in bug detection over baseline-generated tests and up to 20x improvement in detecting meaningful failures compared to coincidental outcomes. In one evaluation subset, 41 issues were identified, of which 8 were confirmed as real defects, including several with potential production impact.
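For a sense of scale, the confirmed share of that evaluation subset can be worked out directly from the two reported counts. The variable names below are illustrative.

```python
# Counts reported for one evaluation subset.
issues_identified = 41
confirmed_defects = 8

# Fraction of surfaced issues that were confirmed as real defects.
confirmed_share = confirmed_defects / issues_identified
print(f"{confirmed_share:.1%}")  # 19.5%
```

Roughly one in five of the issues surfaced in that subset was confirmed as a real defect.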

Mark Harman, in another LinkedIn post, emphasized: "Mutation testing, after decades of purely intellectual impact, confined to academic circles, is finally breaking out into industry and transforming practical, scalable Software Testing 2.0."

Benefits for AI-Driven Development

Catching JiT tests are designed for AI-driven development: they are generated per change to detect serious, unexpected bugs and need no ongoing maintenance. Because they are regenerated as the code evolves, they avoid the brittleness of long-lived test suites and shift effort from humans to machines. Human review is required only when a meaningful issue is surfaced.

This reframes testing toward change-specific fault detection rather than static correctness validation.

The approach is particularly relevant as AI-assisted coding becomes more prevalent in development workflows. By removing the maintenance burden of traditional test suites while improving detection rates, JiT testing could become standard practice for organizations that use AI in their development processes.
