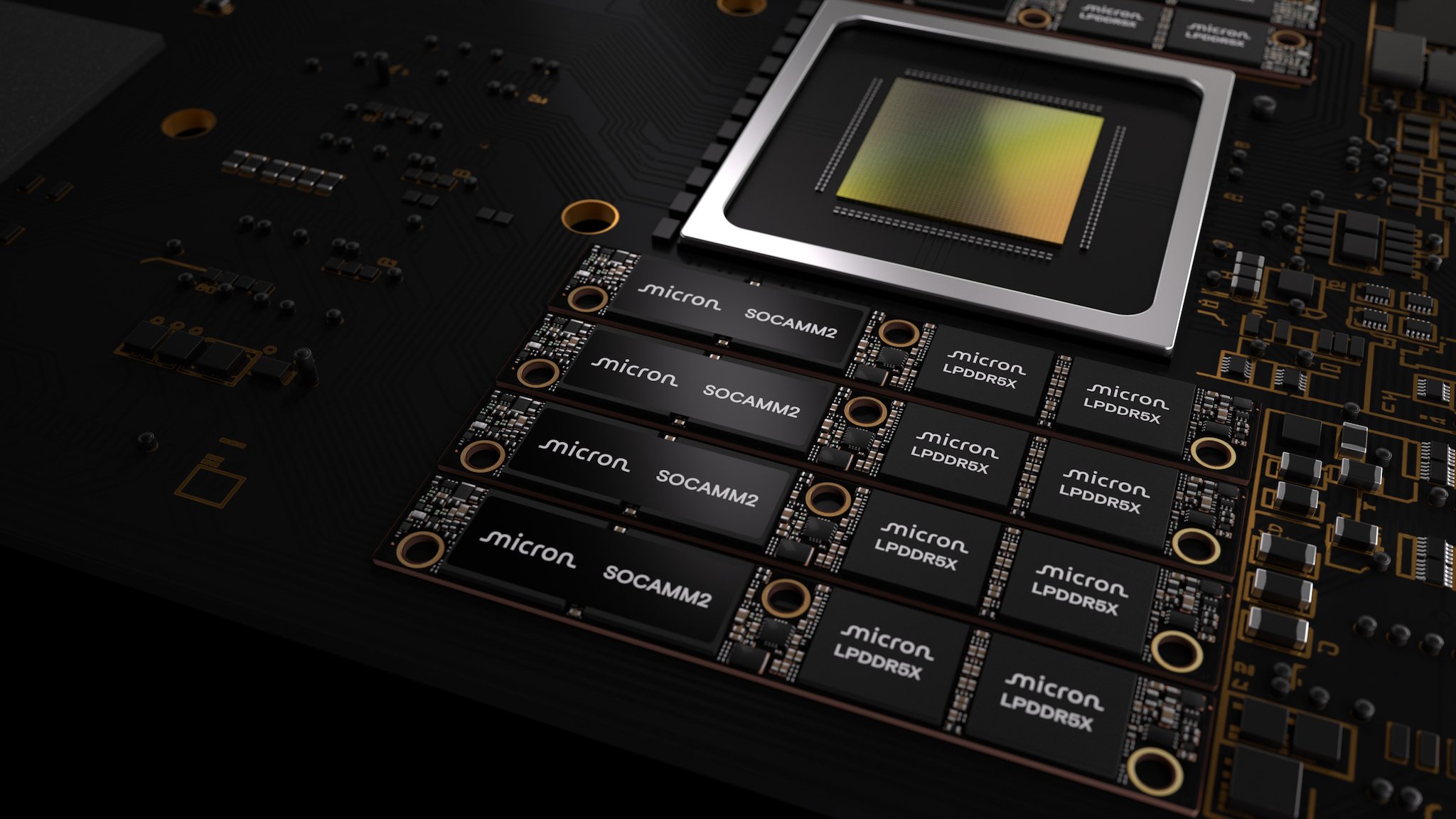

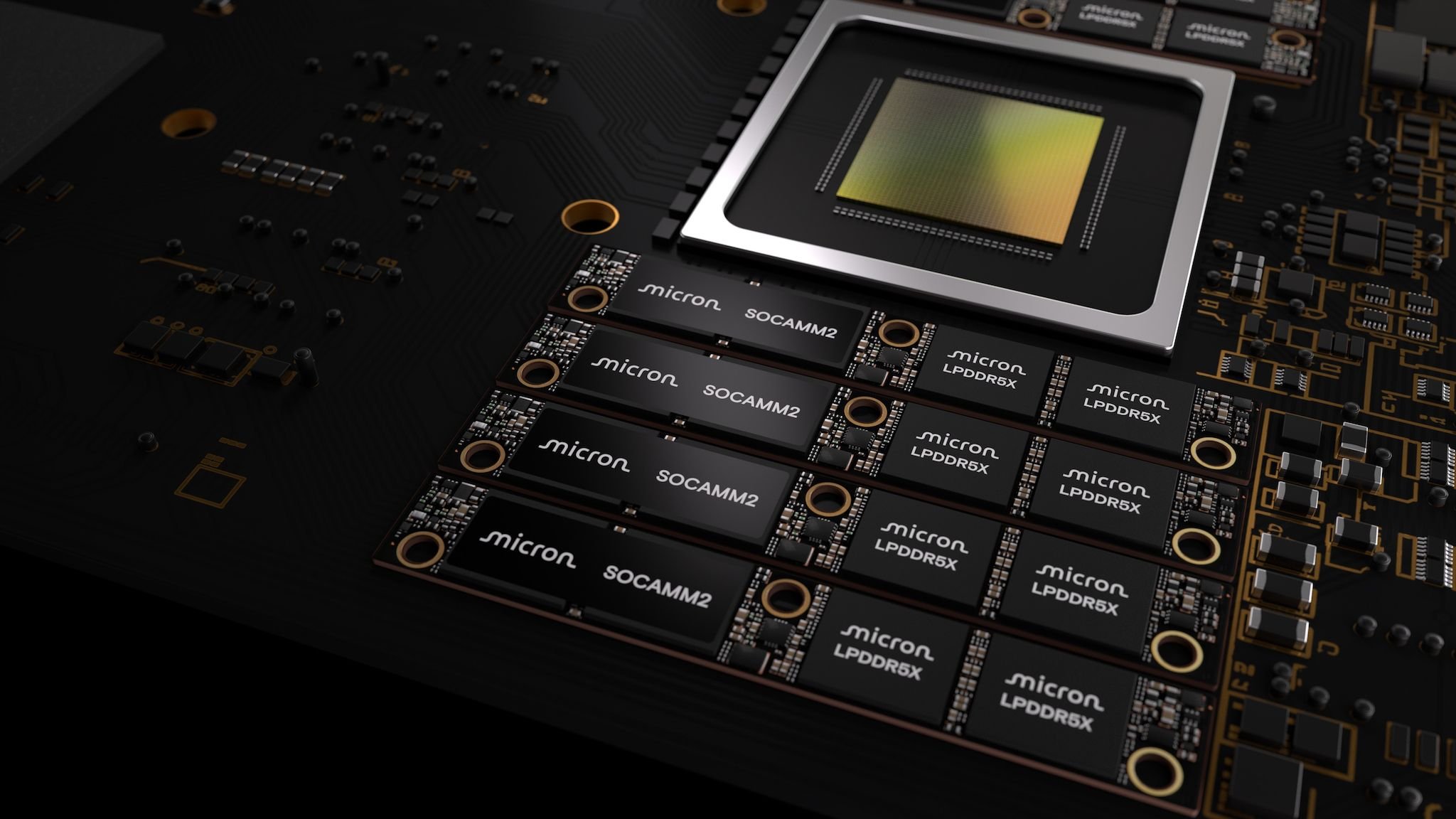

Micron unveils industry-first 256GB SOCAMM2 memory packages, enabling 2TB RAM configurations per CPU and 66% better power efficiency than traditional RDIMMs for next-generation AI servers.

Micron has unveiled what it claims are the industry's first 256GB SOCAMM2 memory modules, marking a significant leap in memory density for AI datacenters. The new modules, which are now being sampled to customers, represent a 33% increase over the previous 192GB SOCAMM2 modules released just six months ago, pushing the boundaries of what's possible in high-performance computing environments.

(Image credit: Micron)

The implications for AI infrastructure are substantial. With these modules, a single CPU can access up to 2TB of memory, and a typical Nvidia NVL72 rack, which houses 36 CPUs, can support 72TB of RAM across its CPUs. This capacity matters for AI workloads that need large context windows and fast access to large datasets.
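The capacity figures follow from simple multiplication. The sketch below assumes binary units (1TB = 1,024GB), under which 2TB per CPU corresponds to eight 256GB modules. The article does not state the module count per CPU directly, so that number is inferred here rather than quoted.

```python
# Capacity arithmetic behind the per-CPU, per-rack, and generational figures.
# Assumption: 1TB = 1024GB (binary units), so "2TB per CPU" implies
# 2048 / 256 = 8 modules. The module count itself is inferred, not quoted.

MODULE_GB = 256           # new Micron SOCAMM2 module
PREVIOUS_MODULE_GB = 192  # previous-generation SOCAMM2 module
CPU_TB = 2                # per-CPU capacity quoted in the article
CPUS_PER_NVL72 = 36       # CPUs in an Nvidia NVL72 rack


def modules_per_cpu(cpu_tb: int, module_gb: int) -> int:
    """Modules needed to reach cpu_tb terabytes (1TB = 1024GB)."""
    total_gb = cpu_tb * 1024
    if total_gb % module_gb:
        raise ValueError("capacity is not a whole number of modules")
    return total_gb // module_gb


def rack_capacity_tb(cpu_tb: int, cpus: int) -> int:
    """Total CPU memory in a rack, in TB."""
    return cpu_tb * cpus


def generational_gain_pct(new_gb: int, old_gb: int) -> float:
    """Percentage capacity increase from old_gb to new_gb."""
    return (new_gb / old_gb - 1) * 100


print(modules_per_cpu(CPU_TB, MODULE_GB))                              # 8
print(rack_capacity_tb(CPU_TB, CPUS_PER_NVL72))                        # 72
print(round(generational_gain_pct(MODULE_GB, PREVIOUS_MODULE_GB), 1))  # 33.3
```

The same helpers make it easy to check the 33% generational claim: 256GB over 192GB is a one-third increase.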

Technical Breakthrough: Monolithic 32Gb LPDDR5X Dies

What makes these 256GB SOCAMM2 modules particularly noteworthy is their use of Micron's 32Gb (4GB) LPDDR5X monolithic dies. In semiconductor terminology, "monolithic" means that all the memory cells and relevant circuitry are integrated onto a single die, rather than using multiple smaller dies. This approach offers several advantages:

- Improved signal integrity: Fewer die-to-die interconnects leave fewer points where signals can degrade, which also helps reduce latency and power consumption

- Enhanced thermal performance: Heat originates from fewer dies, which can simplify thermal management compared with stacking several smaller dies

- Better scalability: Monolithic designs can theoretically scale to higher capacities more efficiently
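The announcement does not give a die count per module, but one can be estimated from the stated densities. This minimal sketch assumes all of the module's capacity comes from identical 32Gb dies and ignores any extra dies a real module might carry; the 16Gb case is a hypothetical comparison showing what "multiple smaller dies" would mean, not a Micron product.

```python
# Estimate how many monolithic dies a module of a given capacity implies.
# Assumption: capacity comes entirely from identical dies (no spare/ECC dies).

BITS_PER_BYTE = 8


def die_capacity_gb(die_gbit: int) -> float:
    """Convert a die density in gigabits (Gb) to gigabytes (GB)."""
    return die_gbit / BITS_PER_BYTE


def dies_per_module(module_gb: int, die_gbit: int) -> int:
    """Number of identical dies needed to build a module of module_gb GB."""
    module_gbit = module_gb * BITS_PER_BYTE
    if module_gbit % die_gbit:
        raise ValueError("module capacity is not a whole number of dies")
    return module_gbit // die_gbit


print(die_capacity_gb(32))       # 4.0  -> a 32Gb die holds 4GB
print(dies_per_module(256, 32))  # 64   -> 32Gb monolithic dies
print(dies_per_module(256, 16))  # 128  -> hypothetical 16Gb dies, for comparison
```

Halving the die density doubles the number of dies, and with it the number of die-to-die connections the bullets above refer to.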

Power Efficiency Revolution

Beyond raw capacity, Micron emphasizes that these SOCAMM2 modules deliver 66% better power efficiency compared to traditional Registered DIMMs (RDIMMs). This efficiency gain is critical for AI datacenters where power consumption is a major operational cost and cooling challenge.

(Image credit: Micron)

The power efficiency improvements come from several factors:

- LPDDR5X technology: Low-power DDR (LPDDR) memory, originally developed for mobile devices, is inherently more power-efficient than the standard DDR5 used in RDIMMs

- Monolithic die architecture: Reduced interconnects mean less power wasted on signal transmission

- SOCAMM2 optimized design: The form factor itself is engineered for minimal power consumption
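"66% better power efficiency" can be read in more than one way. A short sketch under two assumed readings shows how much the interpretation changes the result: a 66% gain in work per watt, or a 66% cut in power for the same work. Both readings are assumptions; the results are normalized ratios against an RDIMM baseline, not measured wattages.

```python
# Two assumed readings of a "66% better power efficiency" claim,
# expressed as SOCAMM2 power relative to an RDIMM baseline of 1.0
# for the same amount of memory work.


def power_ratio_if_perf_per_watt_gain(gain: float) -> float:
    """Reading A: efficiency (work per watt) rises by `gain`,
    e.g. 0.66 -> 1.66x, so power for equal work is 1 / (1 + gain)."""
    return 1 / (1 + gain)


def power_ratio_if_power_reduction(reduction: float) -> float:
    """Reading B: the same work needs `reduction` less power,
    so power is 1 - reduction of the baseline."""
    return 1 - reduction


CLAIM = 0.66
print(round(power_ratio_if_perf_per_watt_gain(CLAIM), 3))  # 0.602
print(round(power_ratio_if_power_reduction(CLAIM), 2))     # 0.34
```

Under reading A, a SOCAMM2 configuration would draw about 60% of the equivalent RDIMM power; under reading B, about 34%.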

Liquid Cooling Compatibility

The SOCAMM2 form factor was specifically designed with liquid cooling in mind, addressing one of the biggest challenges in modern AI datacenters. As compute density increases, traditional air cooling becomes insufficient, making liquid cooling solutions increasingly necessary. The SOCAMM2 modules' compatibility with liquid cooling infrastructure makes them ideal for next-generation AI servers that push thermal limits.

AI Performance Implications

For AI workloads, memory capacity and speed directly translate to performance gains. Micron highlights several key benefits:

Larger Context Windows: With 2TB of RAM per CPU, AI models can process vastly larger context windows. This is particularly important for:

- Large language models that need to maintain coherence over extended conversations

- Multimodal models that process text, images, and other data simultaneously

- Complex reasoning tasks that require maintaining extensive state information
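Context length and memory capacity are linked mainly through the key-value (KV) cache that transformer models keep for every token in the context. The sketch below estimates that cache size. It assumes the cache is held in CPU-attached memory and uses a hypothetical model shape, since the article names no specific model or software stack.

```python
# Estimate how many tokens of KV cache fit in a memory budget.
# Assumptions: KV cache stored in CPU-attached memory; model shape is
# hypothetical; weights, activations and allocator overhead are ignored.
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    n_layers: int
    n_kv_heads: int
    head_dim: int
    bytes_per_elem: int  # 2 for FP16/BF16

    def kv_bytes_per_token(self) -> int:
        # Factor of 2: one key vector and one value vector
        # per layer and per KV head.
        return 2 * self.n_layers * self.n_kv_heads * self.head_dim * self.bytes_per_elem


def max_cached_tokens(budget_bytes: int, model: ModelShape) -> int:
    """Upper bound on tokens whose KV cache fits in budget_bytes."""
    return budget_bytes // model.kv_bytes_per_token()


TIB = 1024 ** 4
hypothetical = ModelShape(n_layers=80, n_kv_heads=8, head_dim=128, bytes_per_elem=2)

print(hypothetical.kv_bytes_per_token())         # 327680 bytes (320 KiB) per token
print(max_cached_tokens(2 * TIB, hypothetical))  # 6710886 tokens in 2TB
```

Because the budget is shared by every concurrent sequence, the same figure also caps batch size multiplied by context length, which is where capacity connects to throughput. In practice, model weights and runtime overhead claim part of the memory, so the real limit is lower.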

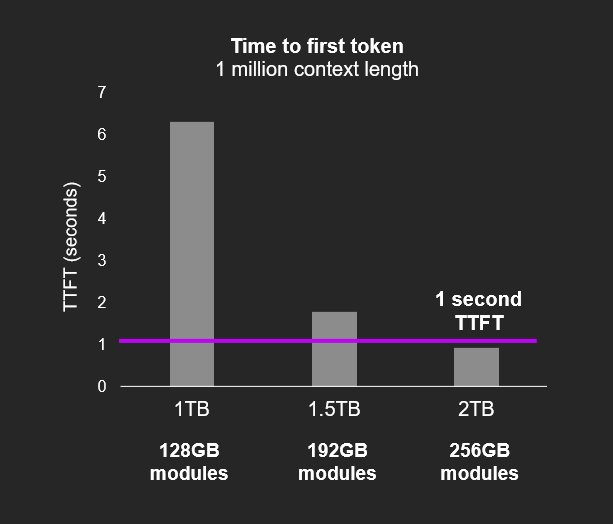

Reduced TTFT (Time To First Token): Keeping more model parameters and context in memory means AI systems spend less time fetching data from slower storage before they start generating a response. In competitive AI services, even milliseconds matter for user experience.

Improved Model Throughput: With more memory available, models can process larger batches of data without spilling to slower storage, improving overall throughput.

The SOCAMM2 Partnership

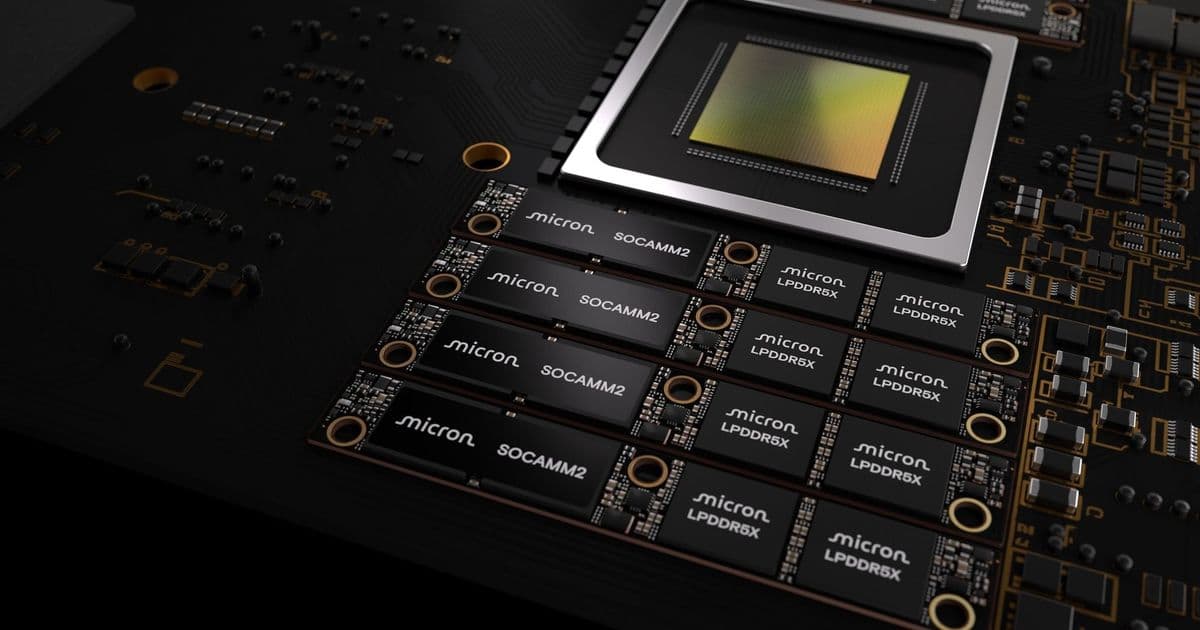

The SOCAMM2 standard emerged from a collaboration between Nvidia and major memory manufacturers including Micron, Samsung, and SK hynix. Originally, Nvidia designed the SOCAMM standard but encountered thermal challenges when implementing it in high-density servers.

Recognizing the need for expertise in memory design, Nvidia partnered with established memory manufacturers to create SOCAMM2. This collaboration has resulted in modules that not only meet the density requirements but also address the thermal and power challenges that come with such high-capacity memory configurations.

Market Impact and Future Outlook

The timing of this announcement is significant given the current AI infrastructure boom. Companies are investing hundreds of billions of dollars in AI datacenters, and memory capacity is becoming as critical as compute power in determining overall system performance.

As AI models continue to grow in size and complexity, the demand for high-capacity, efficient memory solutions will only increase. Micron's 256GB SOCAMM2 modules position the company at the forefront of this trend, potentially giving it an advantage in the competitive AI infrastructure market.

(Image credit: SK hynix)

The advancement also signals the ongoing evolution of memory technology. While traditional DIMM form factors have served the industry well for decades, specialized form factors like SOCAMM2 are emerging to meet the unique demands of AI and high-performance computing workloads.

Technical Specifications at a Glance

- Capacity: 256GB per module

- Technology: 32Gb (4GB) LPDDR5X monolithic dies

- Form Factor: SOCAMM2 (Nvidia collaboration)

- Power Efficiency: 66% better than RDIMMs

- Cooling: Liquid cooling compatible

- Target Market: AI datacenters, high-performance computing

Industry Context

This announcement comes amid a broader shift in the memory industry toward specialized solutions for AI workloads. High Bandwidth Memory (HBM) has been gaining traction for GPU memory, while solutions like SOCAMM2 address the CPU memory needs in AI servers.

The competition in this space is intense, with Samsung and SK hynix also developing advanced memory solutions. However, Micron's claim of being first to market with 256GB SOCAMM2 modules could provide a competitive advantage as customers begin designing their next-generation AI infrastructure.

As AI continues to transform industries from healthcare to finance to entertainment, the infrastructure supporting these workloads will only become more critical. Micron's 256GB SOCAMM2 modules represent a significant step forward in that infrastructure, enabling the massive memory capacities that tomorrow's AI systems will require.

For datacenter operators and AI companies, these modules offer a compelling combination of capacity, efficiency, and performance that could influence infrastructure decisions for years to come. As samples begin shipping to customers, the industry will be watching closely to see how this technology performs in real-world deployments and how competitors respond to this significant advancement in memory technology.
