OpenAI's defense contract raises questions about AI ethics as legal language redefines prohibited activities

Mike Masnick of Techdirt has identified a concerning pattern in OpenAI's Department of Defense (DOD) agreement that shows how legal language can be used to circumvent ethical boundaries. According to his analysis, OpenAI's stated "red lines" in the agreement rest on legal terms that the NSA has spent decades redefining, so that activities that appear prohibited on the surface are in practice permitted.

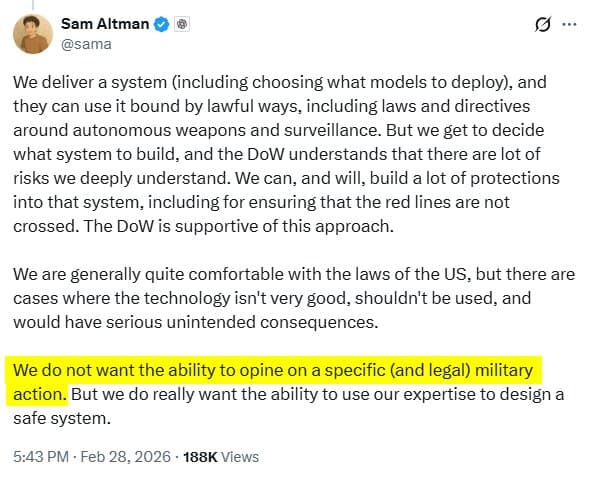

This revelation comes at a time when OpenAI is facing intense scrutiny over its defense contracts. Just days ago, Sam Altman defended the company's DOD deal during an all-hands meeting, calling the backlash "painful" while claiming OpenAI doesn't "get to make operational decisions" regarding how its technology is used by the Department of Defense.

The legal maneuvering Masnick describes is particularly troubling because it shows how an organization can keep up the appearance of ethical constraints while enabling activities that violate those same principles. Through decades of redefinition by the NSA, terms that seem to prohibit an activity have come to permit it.

This pattern of legal redefinition is not unique to OpenAI or the NSA. It reflects a broader trend in technology ethics: companies publish ethical guidelines, then look for legal pathways around them when the guidelines conflict with business interests or government contracts.

The timing of this revelation is significant. OpenAI recently announced GPT-5.3 Instant, which it claims delivers more accurate answers and better-contextualized results when searching the web. The company is simultaneously expanding its government partnerships while facing internal and external criticism about the ethical implications of those partnerships.

Masnick's analysis raises fundamental questions about the effectiveness of self-imposed ethical boundaries in the AI industry. If legal language can be redefined to permit activities that appear to be prohibited, what value do these ethical guidelines actually provide? The answer appears to be that they offer public relations value more than any actual constraint on behavior.

The broader implications extend beyond OpenAI. As AI companies increasingly pursue government contracts and defense partnerships, the tension between stated ethical principles and actual business practices will likely intensify. A legal framework that allows prohibited activities to be redefined lets companies claim the ethical high ground while engaging in practices that many would consider unethical.

This situation also highlights the challenges of regulating AI development and deployment. When legal language can be manipulated to permit activities that appear to be prohibited, traditional regulatory approaches may prove ineffective. The solution may require not just legal reform but also cultural and institutional changes in how we approach technology ethics.

The revelation about OpenAI's DOD agreement serves as a reminder that in the world of AI development and government contracting, appearances can be deceiving. What looks like an ethical boundary on paper may be nothing more than a legal construct that can be redefined to permit the very activities it claims to prohibit.

As the AI industry continues to evolve and expand its government partnerships, the need for genuine ethical frameworks that cannot be circumvented through legal maneuvering becomes increasingly apparent. The current system, where legal language can be used to redefine prohibited activities, undermines public trust and raises serious questions about the future of AI ethics and governance.
