Enterprise knowledge graphs are becoming critical infrastructure for modern digital platforms, but scaling them introduces significant architectural challenges. This article explores practical strategies for building high-performance knowledge graph systems that can handle rapid data growth, complex queries, and strict latency requirements.

Optimizing Enterprise Knowledge Graphs for Scalable Digital Platforms

Enterprise architecture strategies for building high-performance knowledge graph systems.

Introduction

Modern digital platforms rely heavily on connected data. Systems such as recommendation engines, fraud detection solutions, enterprise search, and personalization platforms all depend on the ability to connect information from multiple sources while preserving context and relationships.

One architectural approach that has gained strong adoption in recent years is the Enterprise Knowledge Graph (EKG). By representing enterprise data as entities and relationships, knowledge graphs allow organizations to build systems that understand context instead of simply processing isolated datasets.

However, while the theoretical advantages of knowledge graphs are widely recognized, scaling them in production environments introduces significant architectural challenges. Many organizations successfully prototype knowledge graphs, but performance bottlenecks begin to appear once the system becomes part of critical infrastructure. High ingestion rates, complex traversal queries, and strict latency requirements quickly expose design limitations.

This article explores practical strategies for optimizing enterprise knowledge graphs for scalability, focusing on architectural patterns and operational practices observed in large-scale digital platforms.

Why Scalability Becomes the Real Challenge

Most enterprise knowledge graph initiatives begin with a limited scope. Teams might integrate a few datasets, improve semantic search capabilities, or enable richer reporting. At this stage, a single graph database or RDF store is typically sufficient.

However, once the knowledge graph becomes core infrastructure supporting production systems, several challenges emerge simultaneously:

- Rapid growth in connected datasets

- Continuous ingestion from multiple enterprise systems

- Increasingly complex multi-hop traversal queries

- Strict response time requirements for user-facing platforms

- Additional computational overhead introduced by reasoning engines and ontologies

Simply adding hardware or scaling nodes horizontally rarely resolves these issues. In most cases, the real challenge lies in architectural design rather than compute capacity.

Moving Beyond a Single Graph Database

The Limits of Monolithic Graph Architectures

Traditional RDF triple stores provide strong semantic capabilities and standards compliance. However, they may struggle when handling high-volume transactional updates or deep traversal queries in real-time environments. Conversely, labeled property graph (LPG) databases are typically optimized for traversal performance but often lack native semantic reasoning capabilities.

Attempting to consolidate semantic modeling, inference processing, analytics workloads, and real-time application queries into a single graph system frequently leads to trade-offs in performance, scalability, or maintainability.

A Hybrid Knowledge Graph Architecture

A growing number of organizations now adopt a hybrid or polyglot architecture, where different systems handle different responsibilities. A common pattern includes:

- Semantic Layer (RDF / OWL): Responsible for ontology management, schema governance, and reasoning workflows.

- Operational Graph Layer (LPG): Optimized for real-time application queries, recommendation engines, and traversal-heavy workloads.

- Analytical Storage Systems: Designed for large-scale aggregations, historical analysis, and reporting.

To keep these systems synchronized, many organizations implement event-driven pipelines using technologies such as Kafka or Pulsar. Updates in one system generate events that propagate to the other layers. Because propagation is asynchronous, the layers are eventually consistent rather than instantly identical, but each system can operate within its performance strengths.

In production environments, hybrid architectures can deliver lower query latency and greater operational flexibility than monolithic graph deployments, because each workload runs on an engine designed for it.
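The propagation pattern can be sketched in a few lines. This is a minimal illustration only: `EventBus` is an in-memory stand-in for a Kafka or Pulsar topic, and `ChangeEvent`, `LayerStore`, the topic name, and the per-entity `version` field are all hypothetical names introduced here, not part of any real client library. The sketch assumes each change carries a version so that consumers can ignore duplicate or late events.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical change record emitted when a fact is updated."""
    entity_id: str
    predicate: str
    value: str
    version: int  # assumed to increase with every change to the same fact


class EventBus:
    """In-memory stand-in for a message broker topic (not a real client)."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[ChangeEvent], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: ChangeEvent) -> None:
        # Deliver the event to every layer subscribed to this topic.
        for handler in self._subscribers[topic]:
            handler(event)


class LayerStore:
    """Stand-in for one layer (semantic, operational, or analytical)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.facts = {}  # (entity_id, predicate) -> (version, value)

    def apply(self, event: ChangeEvent) -> None:
        key = (event.entity_id, event.predicate)
        current = self.facts.get(key)
        # Skip duplicates and out-of-order deliveries so replays are harmless.
        if current is not None and current[0] >= event.version:
            return
        self.facts[key] = (event.version, event.value)


bus = EventBus()
operational = LayerStore("operational-lpg")
analytical = LayerStore("analytical")
bus.subscribe("entity-changes", operational.apply)
bus.subscribe("entity-changes", analytical.apply)

bus.publish("entity-changes", ChangeEvent("customer:42", "segment", "gold", 2))
# A late event with an older version arrives afterwards and is ignored.
bus.publish("entity-changes", ChangeEvent("customer:42", "segment", "silver", 1))
```

Making each consumer idempotent is the design choice that matters here: brokers commonly redeliver messages after failures, so a layer that tolerates replays is easier to keep in step with the others.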

Partitioning Strategies for Large Graphs

As enterprise knowledge graphs grow beyond single-node capacity, distributed execution becomes necessary. Partitioning strategy then becomes a critical performance factor.

Many graph systems rely on hash-based partitioning to distribute data evenly across nodes. While this approach balances storage, it often fragments highly connected subgraphs. As a result, a single traversal may have to cross node boundaries many times, and each crossing adds network latency.

A more effective strategy is topology-aware partitioning, where frequently connected entities are stored within the same partition. Common approaches include:

- Partitioning by business domain (customers, products, organizations)

- Using community detection algorithms to group related nodes

- Designing partitions based on observed query patterns

These techniques can substantially reduce cross-partition traversal fan-out, which tends to improve both median and tail latency under concurrent workloads.
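One way to compare the two approaches is to count how many edges end up crossing a partition boundary, since each such edge is a potential network hop during traversal. The sketch below is illustrative only: the `domain:id` naming scheme, the sample edges, and the function names are assumptions made for this example, and `zlib.crc32` merely stands in for whatever hash function a real system uses.

```python
import zlib
from typing import Callable, Dict, Iterable, Tuple

Edge = Tuple[str, str]


def hash_partition(node: str, num_partitions: int) -> int:
    """Assign a node by hashing its id (crc32 is stable across runs)."""
    return zlib.crc32(node.encode("utf-8")) % num_partitions


def domain_partition(node: str, domain_to_partition: Dict[str, int]) -> int:
    """Assign a node by its business domain, assumed to be the id prefix."""
    domain = node.split(":", 1)[0]
    return domain_to_partition[domain]


def cross_partition_edges(edges: Iterable[Edge], assign: Callable[[str], int]) -> int:
    """Count edges whose endpoints live in different partitions."""
    return sum(1 for src, dst in edges if assign(src) != assign(dst))


# Hypothetical sample graph: mostly intra-domain relationships.
edges = [
    ("customer:1", "customer:2"),
    ("customer:2", "customer:3"),
    ("product:a", "product:b"),
    ("product:b", "product:c"),
    ("org:x", "org:y"),
    ("customer:1", "product:a"),  # the only cross-domain edge
]
domains = {"customer": 0, "product": 1, "org": 2}

hash_cut = cross_partition_edges(edges, lambda n: hash_partition(n, 3))
domain_cut = cross_partition_edges(edges, lambda n: domain_partition(n, domains))
print(f"hash-based cut: {hash_cut}, domain-based cut: {domain_cut}")
```

With domain-based placement, only the single cross-domain edge is cut in this sample. The same edge-cut count can be computed on a sample of real query paths to evaluate a candidate partitioning before migrating any data.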

Managing Semantic Inference Without Performance Penalties

Semantic reasoning is one of the defining strengths of knowledge graphs, but it can also introduce significant computational overhead. Applying full ontology reasoning during query execution can dramatically increase system workload.

To address this challenge, many scalable knowledge graph systems adopt selective inference strategies, including:

- Precomputing frequently used inferred relationships

- Materializing common hierarchical relationships ahead of time

- Running complex reasoning workflows asynchronously

This approach preserves semantic richness while maintaining predictable query performance.
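As a concrete case of materializing a hierarchy ahead of time, the sketch below precomputes every direct and indirect superclass for each class, so that a type check at query time becomes a set lookup rather than a recursive walk. The class names and the `subclass_of` mapping are hypothetical, and the code covers only transitive subclass relationships, not full ontology reasoning.

```python
from typing import Dict, Set


def materialize_ancestors(subclass_of: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, for each class, all direct and indirect superclasses."""
    closure: Dict[str, Set[str]] = {}
    for cls, parents in subclass_of.items():
        seen: Set[str] = set()
        stack = list(parents)
        while stack:
            parent = stack.pop()
            if parent in seen:
                continue  # already expanded; also guarantees termination on cycles
            seen.add(parent)
            stack.extend(subclass_of.get(parent, ()))
        closure[cls] = seen
    return closure


def is_subclass(closure: Dict[str, Set[str]], cls: str, ancestor: str) -> bool:
    """Query-time check against the precomputed closure."""
    return cls == ancestor or ancestor in closure.get(cls, set())


# Hypothetical hierarchy: SavingsAccount -> Account -> FinancialProduct -> Product
subclass_of = {
    "SavingsAccount": {"Account"},
    "Account": {"FinancialProduct"},
    "FinancialProduct": {"Product"},
}
closure = materialize_ancestors(subclass_of)
print(is_subclass(closure, "SavingsAccount", "Product"))  # True
```

The trade-off is storage and refresh cost: the closure must be recomputed, ideally asynchronously, whenever the hierarchy changes.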

Smarter Query Optimization

As graph queries become more complex, query planning becomes a critical optimization lever. Many traditional graph engines rely on static heuristics when generating query execution plans. In dynamic enterprise environments where datasets evolve continuously, these heuristics often produce inefficient execution strategies.

Newer approaches incorporate machine learning techniques into query optimization, particularly for improving cardinality estimation, that is, predicting how many results each step of a query will produce. By learning from historical query patterns and execution data, ML-assisted planners can choose better join and traversal orders, which can noticeably reduce query latency.
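The feedback loop behind learned cardinality estimation can be illustrated with a deliberately simple statistical stand-in. The sketch below is not a real ML planner: it only learns, per edge label, the geometric mean of the ratio between observed and estimated result counts, and applies that factor to future estimates. The class name, the method names, and the `PURCHASED` label are all hypothetical.

```python
import math
from collections import defaultdict


class FeedbackCardinalityEstimator:
    """Corrects a planner's static estimates using observed executions."""

    def __init__(self) -> None:
        self._log_ratio_sum = defaultdict(float)
        self._count = defaultdict(int)

    def observe(self, label: str, estimated: float, actual: float) -> None:
        """Record one executed query step: what was estimated vs. what happened."""
        if estimated <= 0 or actual <= 0:
            return  # log-ratio is undefined; skip degenerate observations
        self._log_ratio_sum[label] += math.log(actual / estimated)
        self._count[label] += 1

    def corrected(self, label: str, estimated: float) -> float:
        """Scale a static estimate by the learned correction for this label."""
        n = self._count.get(label, 0)
        if n == 0:
            return estimated  # no history yet: fall back to the static estimate
        return estimated * math.exp(self._log_ratio_sum[label] / n)


estimator = FeedbackCardinalityEstimator()
# Hypothetical history: the static heuristic underestimated this label by 4x.
estimator.observe("PURCHASED", estimated=100, actual=400)
estimator.observe("PURCHASED", estimated=100, actual=400)
print(estimator.corrected("PURCHASED", 50))
```

Averaging in log space treats a 4x overestimate and a 4x underestimate as equally large errors, which suits cardinalities that span several orders of magnitude. A production system would replace this correction factor with a trained model and richer features.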

Observability for Large-Scale Graph Systems

Operating knowledge graphs at scale requires deep system visibility. Monitoring CPU and memory usage alone is insufficient. Effective observability for graph systems includes:

- Query level latency metrics

- Traversal depth monitoring

- Inference workload analysis

- Partition imbalance detection

With these insights, engineering teams can continuously refine partitioning strategies, caching policies, and inference materialization decisions.
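Two of the signals listed above, query-level latency and partition imbalance, reduce to small calculations once the raw measurements are collected. The sketch below assumes latencies in milliseconds and a mapping from partition name to node count. The function names and sample values are hypothetical, and the nearest-rank percentile is one of several common definitions.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample covering pct% of the data."""
    if not samples:
        raise ValueError("percentile() requires at least one sample")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def partition_imbalance(sizes: Dict[str, int]) -> float:
    """Largest partition divided by the mean size; 1.0 is perfectly balanced."""
    if not sizes:
        raise ValueError("partition_imbalance() requires at least one partition")
    mean = sum(sizes.values()) / len(sizes)
    return max(sizes.values()) / mean


# Hypothetical measurements for one query type and three partitions.
latencies_ms = [12, 15, 11, 14, 13, 250, 12, 16, 13, 14]
sizes = {"p0": 100, "p1": 100, "p2": 400}

p50 = percentile(latencies_ms, 50)        # 13
p99 = percentile(latencies_ms, 99)        # 250
imbalance = partition_imbalance(sizes)    # 2.0
```

The gap between the median and the 99th percentile in this sample shows why averages alone can hide the slow traversals that users actually notice.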

Real Impact on Digital Product Platforms

When these optimization strategies are implemented together, organizations can expect improvements in several areas:

- Reduced latency for real-time applications

- Higher ingestion throughput under sustained workloads

- Near-linear scalability as datasets grow

- Greater system stability during traffic spikes

These technical improvements directly translate into business outcomes such as faster recommendations, improved search relevance, and more reliable intelligent services.

Final Thoughts

Enterprise knowledge graphs are rapidly evolving from experimental projects into foundational infrastructure for modern data-driven systems. As organizations continue building AI-powered platforms, the importance of structured, context-aware data will only increase.

By adopting hybrid architectures, intelligent partitioning strategies, and strong observability practices, engineering teams can build scalable knowledge graph platforms capable of supporting the next generation of intelligent digital products.

Author Note

This article discusses practical architectural strategies for scaling enterprise knowledge graph systems used in modern digital product platforms, particularly in environments requiring high ingestion throughput, low-latency traversal queries, and intelligent data relationships.

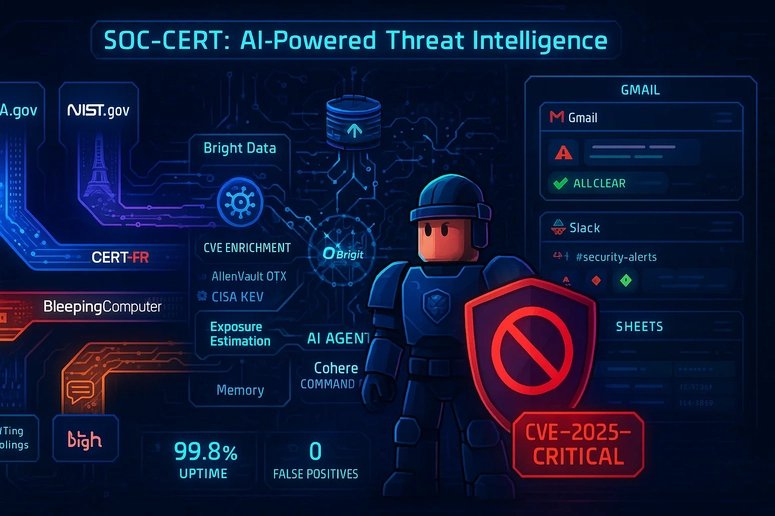
