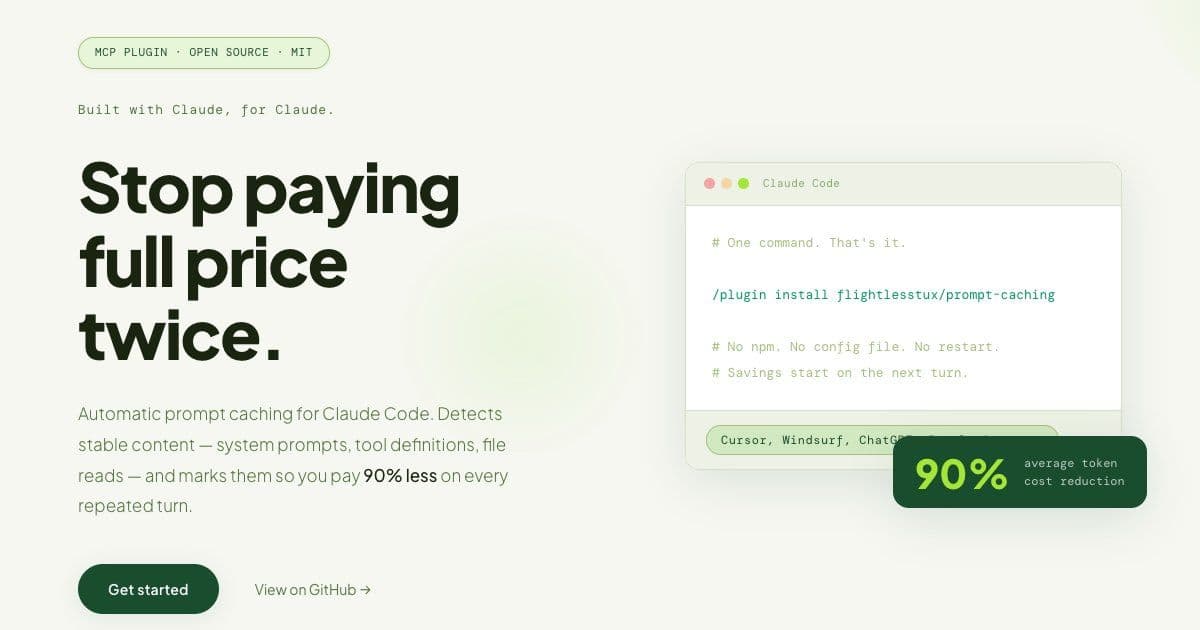

A new open-source plugin, prompt-caching, dramatically reduces token costs for Claude Code and other AI clients by implementing Anthropic's caching API with intelligent breakpoint placement, showing up to 90% savings in real-world usage scenarios.

Token costs have become a significant concern for developers working with large language models. prompt-caching targets that cost directly: it automates cache breakpoint placement on top of Anthropic's caching API, and the project reports savings of up to 90% for Claude Code users.

Anthropic's caching API stores stable content server-side for 5 minutes by default, and cache reads cost just 0.1× the normal input token price. The feature is already part of the API, but it only pays off when cache breakpoints are placed carefully. prompt-caching automates that placement, which developers would otherwise have to manage by hand.
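To make "cache breakpoint" concrete, here is a minimal sketch of a manually placed breakpoint in a Messages API request body. It only builds the payload and sends nothing. The helper name `build_cached_request`, the sample strings, and the model name are illustrative assumptions; this is not the plugin's code.

```python
from typing import Any


def build_cached_request(stable_context: str, question: str) -> dict[str, Any]:
    """Build a Messages API payload whose system block ends with a cache breakpoint."""
    return {
        "model": "claude-sonnet-4-5",  # placeholder model name; substitute your own
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": stable_context,
                # Breakpoint: the prompt prefix up to and including this block
                # is eligible for caching.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The volatile part of the prompt stays outside the cached prefix.
        "messages": [{"role": "user", "content": question}],
    }


payload = build_cached_request(
    "<style guide and type definitions>", "Why does parse() fail?"
)
print(payload["system"][0]["cache_control"])
```

With the official Python SDK, a payload of this shape could be passed as `anthropic.Anthropic().messages.create(**payload)`. Deciding which block deserves the breakpoint is the part the plugin aims to automate.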

The plugin introduces four intelligent operational modes:

BugFix Mode: Detects stack traces in messages, caching the buggy file and error context once. Subsequent interactions only pay for new questions, dramatically reducing costs when debugging the same issue.

Refactor Mode: Identifies refactor keywords and file lists, caching the before-pattern, style guides, and type definitions. Only per-file instructions need to be resent with each interaction.

File Tracking: Monitors read counts per file, automatically injecting cache breakpoints on the second read. All future reads of that content then cost just 0.1×.

Conversation Freeze: After a set number of turns, the plugin freezes every message before turn N−3 (where N is the current turn) as a cached prefix and sends only the last 3 turns fresh. The savings compound over extended conversations.
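The File Tracking and Conversation Freeze modes can be illustrated with a short sketch. Everything here is hypothetical: the names `FileReadTracker`, `freeze_prefix`, `threshold`, and `keep_fresh` are invented for illustration, each message is treated as one turn for simplicity, and the plugin's actual implementation may differ.

```python
from collections import Counter
from copy import deepcopy
from typing import Any


class FileReadTracker:
    """Counts reads per path and reports when a file becomes worth caching."""

    def __init__(self, threshold: int = 2) -> None:
        self.threshold = threshold  # assumption: breakpoint on the second read
        self.reads: Counter[str] = Counter()

    def record_read(self, path: str) -> bool:
        """Return True once the file has been read `threshold` times or more."""
        self.reads[path] += 1
        return self.reads[path] >= self.threshold


def freeze_prefix(
    messages: list[dict[str, Any]], keep_fresh: int = 3
) -> list[dict[str, Any]]:
    """Mark the last message before the final `keep_fresh` ones as a breakpoint."""
    frozen = deepcopy(messages)  # never mutate the caller's history
    if len(frozen) <= keep_fresh:
        return frozen  # nothing old enough to freeze
    boundary = frozen[len(frozen) - keep_fresh - 1]
    content = boundary["content"]
    if isinstance(content, str):
        # Plain-string content must become block form to carry cache_control.
        content = [{"type": "text", "text": content}]
    content[-1]["cache_control"] = {"type": "ephemeral"}
    boundary["content"] = content
    return frozen


tracker = FileReadTracker()
first = tracker.record_read("src/parser.py")   # first read: below threshold
second = tracker.record_read("src/parser.py")  # second read: cache from now on

history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"message {i}"}
    for i in range(6)
]
frozen_history = freeze_prefix(history)
```

In this sketch the breakpoint lands on the fourth-from-last message, so everything up to that point forms the cached prefix and the final three messages are sent at full price.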

Benchmarks measured on real Claude Code sessions with Sonnet show the following savings:

- Bug fix scenarios (single file, 20 turns): 85% reduction (184,000 to 28,400 tokens)

- Refactor scenarios (5 files, 15 turns): 80% reduction (310,000 to 61,200 tokens)

- General coding (40 turns): 92% reduction (890,000 to 71,200 tokens)

- Repeated file reads (5×5): 90% reduction (50,000 to 5,100 tokens)

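Cache creation costs 1.25× the normal input price, so the first turn is slightly more expensive. Each later turn that hits the cache pays only 0.1× for the cached content, which puts the cached path ahead from the second turn onward and makes the plugin most valuable in extended development sessions.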
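The break-even arithmetic can be checked with a small calculation. This sketch uses only the 1.25× write and 0.1× read multipliers quoted in this article and makes simplifying assumptions: a single stable prefix, a cache that stays warm between turns, and no accounting for output tokens or the fresh part of each prompt. The function name `relative_cost` is illustrative.

```python
def relative_cost(
    turns: int, write_multiplier: float = 1.25, read_multiplier: float = 0.1
) -> float:
    """Cost of re-sending one stable prefix over `turns` turns, relative to no caching.

    Turn 1 writes the cache; each later turn reads it.
    Without caching, every turn pays 1.0 for the prefix.
    """
    if turns < 1:
        raise ValueError("turns must be at least 1")
    cached = write_multiplier + (turns - 1) * read_multiplier
    return cached / turns


for n in (1, 2, 20):
    print(f"{n:>2} turns: {relative_cost(n):.2%} of the uncached prefix cost")
```

For a single turn the cached path costs more (1.25 against 1.0), while two turns already cost 1.35 prefix-equivalents against 2.0 uncached. These figures cover the cached prefix only and are not a reproduction of the benchmark numbers above.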

Installation is straightforward across multiple platforms. For Claude Code, users can install directly from GitHub while the plugin awaits approval in the official plugin marketplace. For Cursor, Windsurf, ChatGPT, Perplexity, Zed, Continue.dev, and any other MCP-compatible client, it installs through npm.

The project addresses common questions about its value proposition. While Claude Code does handle prompt caching automatically for its own API calls, this plugin serves developers building custom applications or agents with the Anthropic SDK, where raw SDK calls don't benefit from automatic caching without manual breakpoint placement.

Even with Anthropic's newer auto-caching feature, prompt-caching remains valuable for its observability tools, including get_cache_stats for tracking hit rates and cumulative savings, and analyze_cacheability for debugging cache misses.
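As an illustration of the kind of figure such a tool can report, the sketch below derives a hit rate from the `usage` fields that Anthropic's Messages API returns (`input_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`). The function `summarize_cache_usage` and the sample numbers are hypothetical; this is not how `get_cache_stats` is implemented.

```python
from typing import Iterable, Mapping


def summarize_cache_usage(usages: Iterable[Mapping[str, int]]) -> dict[str, float]:
    """Aggregate per-response usage records into cache totals and a hit rate."""
    read = written = uncached = 0
    for usage in usages:
        read += usage.get("cache_read_input_tokens", 0)
        written += usage.get("cache_creation_input_tokens", 0)
        uncached += usage.get("input_tokens", 0)  # tokens outside the cache
    total = read + written + uncached
    return {
        "total_input_tokens": total,
        "cache_read_tokens": read,
        "cache_write_tokens": written,
        # Share of all input tokens that were served from the cache.
        "hit_rate": read / total if total else 0.0,
    }


# Made-up usage records: turn 1 writes the cache, turn 2 reads it.
sample = [
    {"input_tokens": 100, "cache_creation_input_tokens": 900, "cache_read_input_tokens": 0},
    {"input_tokens": 100, "cache_creation_input_tokens": 0, "cache_read_input_tokens": 900},
]
stats = summarize_cache_usage(sample)
print(stats)
```

A hit rate that stays near zero across turns usually means the supposedly stable prefix is changing between requests, which is the kind of miss a cacheability analysis is meant to surface.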

The plugin supports Claude Opus 4.6/4.5/4.1/4, Sonnet 4.6/4.5/4/3.7, Haiku 4.5, Haiku 3.5, and Haiku 3 models. The default cache lifetime is 5 minutes (ephemeral) and is refreshed on each cache hit. A 1-hour TTL option is available at 2× the base input token price.

As an MIT-licensed open-source project with zero lock-in, prompt-caching offers developers a transparent, cost-effective way to optimize their interactions with Claude Code and compatible AI clients.

For developers interested in implementing this solution, the project is available on GitHub where contributions are welcome. The official Anthropic documentation provides additional context on the underlying caching technology.
