RCLI brings complete voice AI capabilities to macOS without cloud dependency, offering sub-200ms latency and local document processing through Apple Silicon optimization.

On-device AI is gaining momentum and challenging the long-held dominance of cloud-dependent services. RCLI, a new voice AI framework for macOS, exemplifies this trend by running a complete speech-to-text + large language model + text-to-speech pipeline entirely on-device.

At its core, RCLI represents a departure from conventional AI assistants that transmit user voice data to remote servers for processing. Instead, it leverages Apple Silicon's computational power to run all AI models locally, with a reported end-to-end latency under 200ms. It can also query personal documents through local RAG (Retrieval-Augmented Generation).

Technical Architecture and Performance

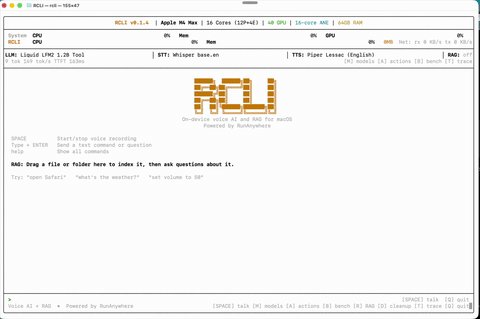

RCLI's architecture is built around three dedicated threads that work in concert to deliver real-time voice interaction:

- Speech Recognition Thread: Captures audio and runs voice activity detection (VAD) using Silero, with support for both streaming Zipformer and offline Whisper/Parakeet models

- Language Model Thread: Generates tokens using various LLMs including Qwen3, LFM2, and Llama 3.2, with KV cache continuation and Flash Attention optimization

- Speech Synthesis Thread: Implements double-buffered sentence-level synthesis where the next sentence renders while the current one plays
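The double-buffered synthesis in the last bullet can be pictured with a small producer/consumer sketch. This is a minimal illustration in Python, not RCLI's actual implementation: `synthesize`, `speak`, and the `play` callback are hypothetical names, and the "audio" is just placeholder bytes.

```python
import queue
import threading


def synthesize(sentence: str) -> bytes:
    """Hypothetical stand-in for a TTS call; returns placeholder 'audio' bytes."""
    return sentence.upper().encode("utf-8")


def speak(sentences, play):
    """Render upcoming sentences on a worker thread while the current one plays.

    The queue with maxsize=1 bounds how far synthesis can run ahead of playback.
    """
    ready = queue.Queue(maxsize=1)
    done = object()  # sentinel marking the end of the stream

    def producer():
        for sentence in sentences:
            ready.put(synthesize(sentence))  # blocks while the ready slot is full
        ready.put(done)

    worker = threading.Thread(target=producer, daemon=True)
    worker.start()
    while True:
        audio = ready.get()
        if audio is done:
            break
        play(audio)  # the worker keeps rendering the next sentence meanwhile
    worker.join()


played = []
speak(["Hello there.", "How can I help?"], played.append)
```

The design point is that playback never waits for a whole response to be synthesized, only for the next sentence.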

This parallel processing approach, combined with a 64 MB pre-allocated memory pool and lock-free ring buffers, enables zero-copy audio transfer and eliminates runtime malloc during inference, contributing to the system's impressive responsiveness.
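To make the ring-buffer idea concrete, here is a minimal single-producer/single-consumer sketch over storage that is allocated once. It is an illustration under stated assumptions, not RCLI's code: the `RingBuffer` class is hypothetical, Python integers stand in for the atomic indices a C or C++ implementation would use, and `read` copies bytes out for simplicity rather than being zero-copy.

```python
class RingBuffer:
    """Byte ring over pre-allocated storage (hypothetical sketch).

    Only the producer advances `_head` and only the consumer advances `_tail`,
    which is what lets a native implementation avoid locks.
    """

    def __init__(self, capacity: int):
        self._buf = bytearray(capacity)  # allocated once, up front
        self._cap = capacity
        self._head = 0  # total bytes ever written
        self._tail = 0  # total bytes ever read

    def write(self, data: bytes) -> int:
        """Copy in as much of `data` as fits; return the number of bytes accepted."""
        free = self._cap - (self._head - self._tail)
        n = min(len(data), free)
        pos = self._head % self._cap
        first = min(n, self._cap - pos)  # bytes before the wrap point
        self._buf[pos:pos + first] = data[:first]
        self._buf[0:n - first] = data[first:n]  # remainder wraps to the start
        self._head += n
        return n

    def read(self, n: int) -> bytes:
        """Return up to `n` buffered bytes in FIFO order."""
        n = min(n, self._head - self._tail)
        pos = self._tail % self._cap
        first = min(n, self._cap - pos)
        out = bytes(self._buf[pos:pos + first]) + bytes(self._buf[0:n - first])
        self._tail += n
        return out
```

Because every slice assignment replaces a span with one of equal length, the backing `bytearray` never grows after construction, which mirrors the "no allocation during inference" goal described above.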

The system's performance is further enhanced by MetalRT, a proprietary GPU inference engine developed by RunAnywhere specifically for Apple Silicon. MetalRT delivers up to 550 tokens per second for LLM inference and leverages Metal 3.1 features available on M3 and later chips. For M1/M2 users, RCLI automatically falls back to the open-source llama.cpp engine.

Practical Capabilities

RCLI goes beyond simple voice commands, offering 43 distinct macOS actions that can be triggered by voice or text input. These span multiple categories:

- Productivity: Create notes, reminders, run shortcuts

- Communication: Send messages, initiate FaceTime calls

- Media: Control Spotify, adjust volume, skip tracks

- System: Open apps, toggle dark mode, take screenshots

- Web: Search YouTube, open URLs, display maps

The document intelligence feature stands out: users can ingest PDFs, DOCX files, and plain text documents and then query them by voice. RCLI uses hybrid vector + BM25 retrieval, with a reported retrieval latency of approximately 4ms over more than 5,000 document chunks, enabling local Q&A over personal files.
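Hybrid retrieval means merging two result lists, one ranked by embedding similarity and one by BM25 keyword score. The article does not say how RCLI combines them, so the sketch below substitutes reciprocal rank fusion, a common choice, purely as an assumption. The function name and chunk ids are hypothetical.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of chunk ids into one ranked list.

    Each list contributes 1 / (k + rank) to a chunk's score, with rank
    starting at 1. Ties are broken by chunk id for a stable order.
    """
    scores = {}
    for ranking in rankings:
        for rank, chunk_id in enumerate(ranking, start=1):
            scores[chunk_id] = scores.get(chunk_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda c: (-scores[c], c))


vector_hits = ["c3", "c1", "c7"]  # hypothetical ids ordered by embedding similarity
bm25_hits = ["c1", "c9", "c3"]    # hypothetical ids ordered by BM25 score
fused = reciprocal_rank_fusion([vector_hits, bm25_hits])
```

Chunks that appear near the top of both lists rise to the front, which is the practical benefit of combining semantic and keyword search.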

Privacy Implications

The rise of on-device AI like RCLI reflects growing concerns about data privacy and the environmental impact of cloud computing. By processing all data locally, RCLI eliminates the risks associated with transmitting sensitive voice commands and personal documents to third-party servers. This approach resonates with users who have become increasingly wary of how their data is collected, stored, and potentially used by cloud AI providers.

"The shift toward on-device AI represents a fundamental change in how we think about AI accessibility and privacy," says tech analyst Sarah Chen. "Users are beginning to understand that they don't need to sacrifice personal data to benefit from advanced AI capabilities."

Limitations and Counter-Perspectives

Despite its impressive capabilities, RCLI has limitations that cloud-based solutions don't share. The requirement for Apple Silicon (M1 or later with macOS 13+) immediately excludes a significant portion of the Mac user base. Additionally, the proprietary nature of MetalRT raises questions about long-term accessibility and potential vendor lock-in.

Cloud AI proponents argue that on-device solutions cannot match the computational power of remote servers for complex tasks. "While local AI is great for privacy and responsiveness, cloud-based models still offer superior performance for sophisticated reasoning and multimodal tasks," explains AI researcher Dr. Michael Torres.

The resource requirements of running multiple AI models simultaneously also present challenges. Even with Apple Silicon's efficiency, users may notice performance impacts during intensive voice interactions, particularly when using larger language models. The default installation requires approximately 1 GB of storage, and additional models can significantly increase this footprint.

Community Adoption and Ecosystem Impact

The release of RCLI comes amid growing interest in local-first AI solutions. Tools like Ollama and LM Studio, along with open-weight models such as those from Mistral, have demonstrated the viability of running LLMs locally, but RCLI distinguishes itself by integrating the complete voice pipeline (STT, LLM, and TTS) into a cohesive, macOS-specific solution.

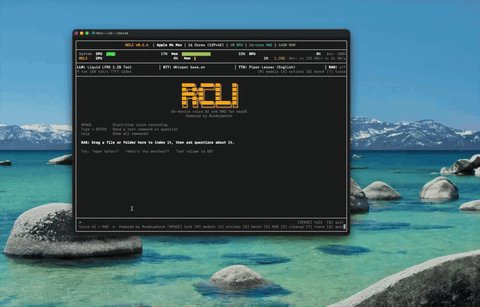

The project's GitHub repository has already attracted significant attention, with developers praising its technical implementation while requesting expanded platform support and open-sourcing of MetalRT. The interactive terminal user interface (TUI) provides a developer-friendly environment for experimenting with different models and configurations, potentially fostering a community of contributors extending its capabilities.

Future Trajectory

RCLI's emergence signals a broader trend toward specialized, hardware-optimized AI solutions. As on-device chips continue to improve in performance and efficiency, we can expect to see more applications that leverage local processing for both privacy and performance reasons.

The potential for cross-platform implementations is particularly intriguing. If MetalRT or similar technologies become available for other hardware platforms, the approach demonstrated by RCLI could revolutionize how AI assistants are designed across operating systems.

For now, RCLI stands as an impressive proof-of-concept for what's possible when AI processing moves from the cloud to the device. It represents a significant step toward making advanced AI capabilities more accessible, private, and responsive—qualities that may define the next generation of AI assistants.

To explore RCLI yourself, you can install it with a single command, `curl -fsSL https://raw.githubusercontent.com/RunanywhereAI/RCLI/main/install.sh | bash`, or via Homebrew after tapping the repository. The project is open source under the MIT License, though MetalRT remains proprietary.
