Noah Smith argues the Pentagon is justified in pressuring Anthropic over AI development, as nation-states must maintain a monopoly on force when AI becomes a potential superweapon.

The Pentagon's designation of Anthropic as a supply chain risk has sparked fierce debate about the role of AI companies in national security. While Anthropic CEO Dario Amodei has vowed to fight the designation in court, arguing it has a "narrow scope," the underlying logic of the Pentagon's position deserves serious consideration.

The Superweapon Argument

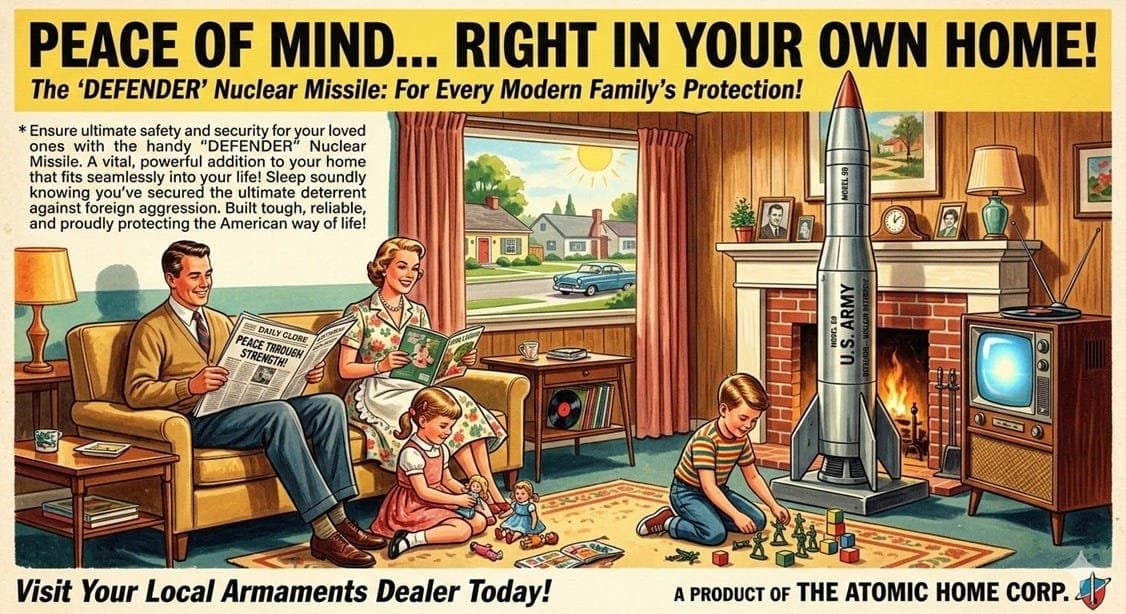

Smith's core thesis is straightforward: as AI systems become increasingly powerful, they may evolve into what amounts to a superweapon—potentially more consequential than nuclear arms. In this framework, allowing private companies to develop and control such technology without government oversight creates an unacceptable risk to national security.

The comparison to nuclear weapons is instructive. No one argues that private companies should be free to develop nuclear capabilities without government oversight. The same logic, Smith contends, should apply to AI systems that could potentially outthink human operators, manipulate information at scale, or even control critical infrastructure.

The Monopoly on Force

At the heart of Smith's argument is the principle that nation-states must maintain a monopoly on the legitimate use of force, a foundational concept in modern political theory associated with thinkers like Thomas Hobbes and Max Weber. When private entities develop capabilities that could rival or surpass state power, the social contract itself is threatened.

This isn't merely about preventing bad actors from getting powerful AI. It's about ensuring that the entities controlling potentially civilization-altering technology remain accountable to democratic institutions and international law.

Anthropic's Position

Anthropic's resistance to the Pentagon's designation appears to stem from several concerns. First, there's the practical business impact—being labeled a supply chain risk could complicate partnerships with other companies and potentially limit market opportunities.

Second, there's the philosophical objection to military applications of AI. Anthropic has positioned itself as a company focused on AI safety and beneficial AI development, and many of its employees likely oppose using their technology for military purposes.

However, Smith would likely argue that these concerns, while understandable, miss the larger picture. The question isn't whether we want AI used for military purposes—it's whether we want any entity other than accountable governments to control technology that could fundamentally reshape global power dynamics.

The Broader Context

The debate comes amid growing tensions between tech companies and the government over AI development. Microsoft and Google have both indicated they'll continue working with Anthropic on non-defense projects, suggesting potential industry-wide pushback against government oversight.

Meanwhile, the rapid advancement of AI capabilities—with models like Claude Opus 4.6 already demonstrating sophisticated bug-finding abilities—underscores how quickly the technology is evolving beyond our ability to fully understand or control it.

The Path Forward

The Pentagon's approach may be heavy-handed, but Smith's argument suggests it's also necessary. Rather than fighting the designation, AI companies might be better served by engaging constructively with government to establish frameworks that balance innovation with security.

The alternative—a world where powerful AI systems are developed entirely outside government oversight—represents a gamble with potentially catastrophic consequences. As Smith notes, when it comes to technologies that could reshape civilization, erring on the side of caution and accountability isn't just prudent—it's essential for preserving democratic governance in an AI-enabled world.

The debate over Anthropic and the Pentagon is really a debate about who should control the future. Smith's position is clear: if AI becomes a superweapon, it must remain under the control of nation-states, not private companies, no matter how well-intentioned those companies might be.
