LLMs

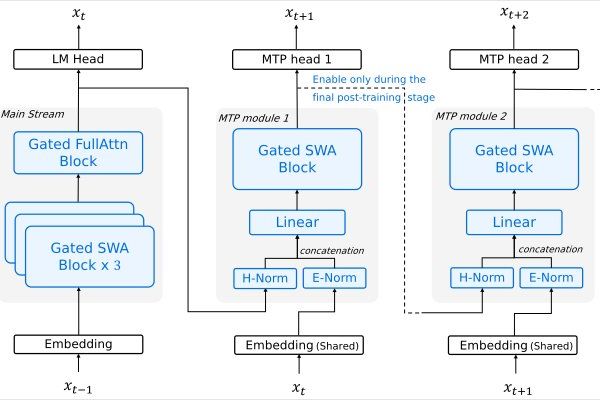

Alibaba's Qwen3.6-35B-A3B: A 35B-Parameter MoE Model That Challenges Dense Architectures

4/17/2026

Machine Learning

Visualizing Mixture of Experts: Inside the Black Box of Modern AI Models

4/13/2026

AI

Arcee AI Launches 399B-Parameter MoE Model Under Apache 2.0 License

4/3/2026

LLMs

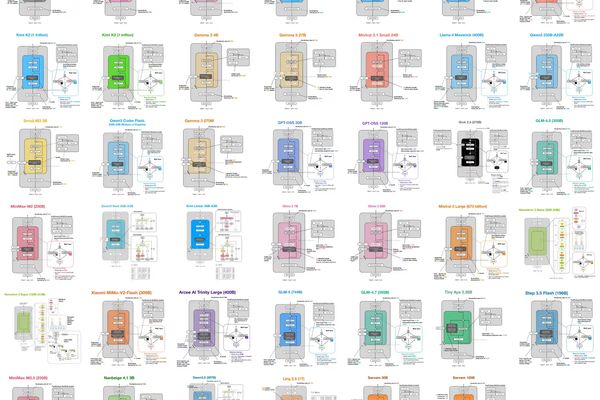

LLM Architecture Gallery | Sebastian Raschka, PhD

3/15/2026

LLMs

Sarvam AI Open-Sources 30B and 105B Reasoning Models

3/7/2026

AI

Microsoft Foundry Expands AI Arsenal with Qwen3.5 Medium Model Series

3/3/2026

LLMs

Step 3.5 Flash: Fast Enough to Think. Reliable Enough to Act.

2/19/2026

LLMs

Baidu's ERNIE 5.0: A 2.4 Trillion Parameter MoE Model, But What Does It Actually Do?

1/24/2026