Anthropic's sudden pricing change for Claude Code sparked confusion and backlash before being reversed, raising questions about transparency and strategy in the competitive AI coding assistant market.

Anthropic's recent pricing experiment with Claude Code has ignited a firestorm of controversy in the AI community, exposing the delicate balance between revenue optimization and user trust. What began as a quiet update to the claude.com/pricing page quickly escalated into a full-blown crisis of confidence, ultimately forcing the company to reverse course within hours.

The Pricing Puzzle

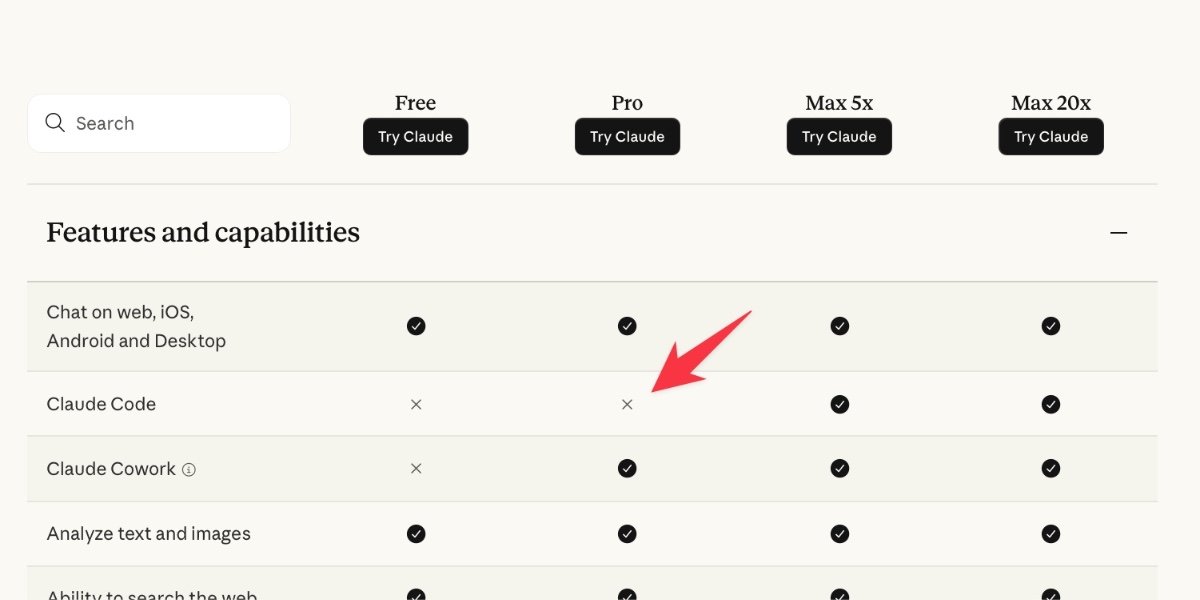

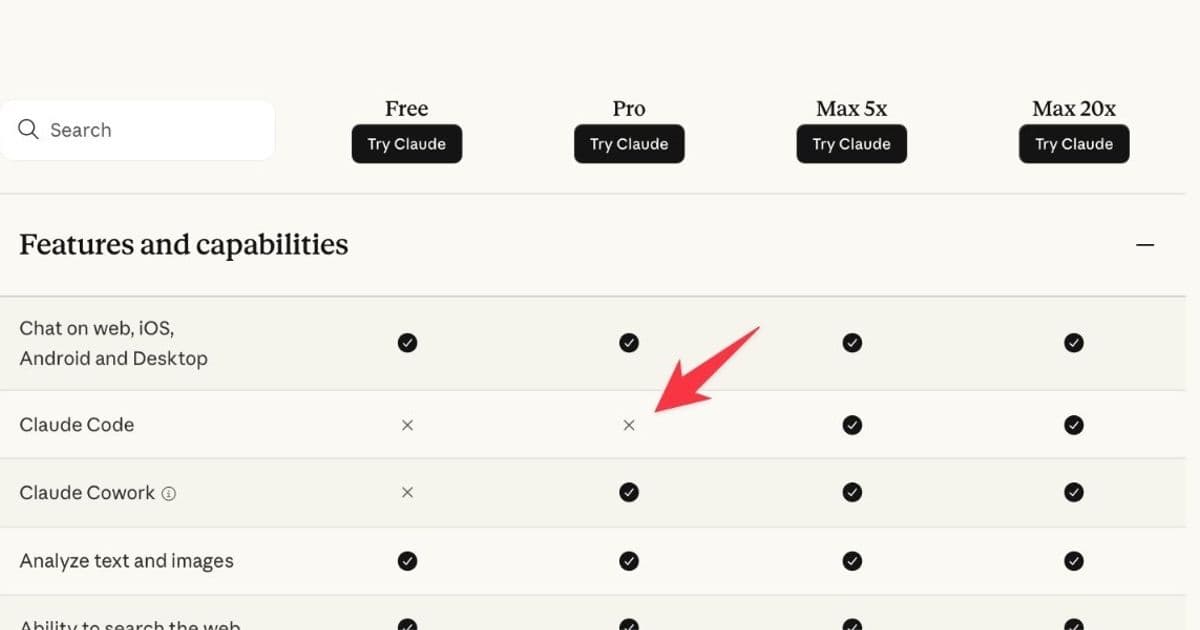

The confusion started when Anthropic's pricing page dropped Claude Code from the $20/month Pro plan, making it exclusive to the $100/month and $200/month Max plans. The change appeared without any official announcement, creating immediate uncertainty among users who had come to rely on the tool.

What made this particularly perplexing was the inconsistency across Anthropic's own documentation. While the pricing grid showed the change, their main "Choosing a Claude plan" page—which appears first in Google searches—remained unchanged. This disconnect suggested either poor coordination or a deliberate test that had escaped proper containment.

The "2% Test" Explanation Falls Flat

Anthropic's Head of Growth, Amol Avasare, attempted to clarify the situation on Twitter, claiming this was merely a test running on "~2% of new prosumer signups" that wouldn't affect existing subscribers. However, this explanation strained credibility. The Internet Archive had already captured the changed pricing page, and numerous users across Reddit, Hacker News, and Twitter reported seeing the same update.

Avasare later clarified that the confusion stemmed from the landing page and documentation being updated for the test, even though only 2% of new signups would actually see the experimental pricing. This explanation raises more questions than it answers—why expose 100% of visitors to changes that only 2% would experience?
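To make the gap between "2% of signups" and "100% of visitors" concrete, here is a minimal sketch of how a percentage rollout is commonly gated. It is not Anthropic's implementation: the experiment name, the user IDs, and the function names are all hypothetical, and only the 2% figure comes from the statements quoted above. The point is that bucketing of this kind decides per user which variant to show, so a test scoped this way would not normally require changing the public page for everyone.

```python
import hashlib

TEST_FRACTION = 0.02  # the "~2%" figure quoted above


def in_test_group(user_id: str, experiment: str = "pro-without-claude-code") -> bool:
    """Deterministically assign a user to the test group (hypothetical scheme)."""
    # Hash the experiment name together with the user ID so that each
    # experiment gets its own independent split of the user base.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < TEST_FRACTION


def pricing_page_variant(user_id: str) -> str:
    """Return which pricing page a given signup would be shown."""
    return "experimental" if in_test_group(user_id) else "control"


if __name__ == "__main__":
    sample = [f"user-{i}" for i in range(100_000)]
    share = sum(in_test_group(u) for u in sample) / len(sample)
    print(f"share in test group: {share:.3%}")
```

Because the assignment depends only on the hash, the same user always lands in the same group, and roughly 98% of signups keep seeing the unchanged control page.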

Strategic Missteps and Market Implications

The timing of this experiment proved particularly unfortunate for Anthropic. Claude Code had established itself as the category-defining coding agent, responsible for billions in annual revenue and earning a stellar reputation among developers. By threatening to quintuple the minimum price from $20 to $100, Anthropic created an opening for competitors.

OpenAI's Codex team wasted no time capitalizing on the confusion. Thibault Sottiaux, Codex's engineering lead, publicly emphasized that their tool would remain available on both free and $20 plans, positioning OpenAI as the more transparent and user-friendly alternative. His statement about transparency and trust being "two principles we will not break, even if it means momentarily earning less" was a direct jab at Anthropic's handling of the situation.

The Trust Deficit

Beyond the immediate pricing concerns, Anthropic's approach damaged something far more valuable: user trust. The lack of clear communication, the inconsistent documentation, and the apparent disconnect between different parts of the organization created a perception of opacity that runs counter to the company's stated values.

For educators and content creators like Simon Willison, who has produced over 105 posts teaching people to use Claude Code, the uncertainty poses a practical problem. Investing time in creating educational materials for a tool that might become unaffordable for most users represents a significant risk. Willison's planned tutorial for journalists at the NICAR data journalism conference exemplifies this dilemma—teaching a tool that starts at $100/month would exclude the very audience he aims to serve.

The Rapid Reversal

In a testament to the power of community feedback, Anthropic reversed the pricing change within hours of it going live. The checkbox for Claude Code reappeared in the Pro plan column, and the company began damage control by explaining the test's scope and promising to revert the public-facing documentation.

However, the damage to trust may prove more lasting than the pricing confusion itself. Users now question whether future changes might similarly appear without warning, and whether Anthropic's commitment to accessibility extends beyond marketing statements.

What This Means for the AI Industry

This incident highlights several critical lessons for AI companies navigating the transition from experimental technology to mainstream product:

Transparency matters more than ever. In an industry where user trust is paramount, opaque testing practices can backfire spectacularly. Users need to understand not just what changes are being made, but why and how they might affect them.

Pricing strategy requires careful consideration. The jump from $20 to $100 is more than just a fivefold increase: it crosses a psychological threshold that fundamentally changes the nature of the purchase. For many users, especially those outside high-salary countries, this difference is prohibitive.

Competitive dynamics are intensifying. OpenAI's rapid response demonstrates how quickly market leadership can shift in the AI space. Companies that prioritize user trust and clear communication may gain advantages over those perceived as opaque or manipulative.

The developer community has a voice. The swift backlash and Anthropic's quick reversal show that the developer community can influence company decisions when united in concern. This represents a new form of accountability in the tech industry.

Moving Forward

For Anthropic to rebuild trust, it will need to do more than simply revert the pricing change. A clear, public commitment to keeping Claude Code accessible on the $20/month plan would help, as would improved communication practices for future tests or changes.

Users like Willison, who pay for Claude Max and find it worthwhile, still care deeply about the accessibility of the broader ecosystem. The choice between investing in Claude Code versus alternatives like Codex now carries implications beyond personal preference—it affects which tools will be available to the communities these users teach and support.

As the AI coding assistant market continues to evolve, the companies that succeed will likely be those that balance revenue optimization with user trust, transparency with innovation, and competitive pressure with community responsibility. Anthropic's pricing experiment serves as a cautionary tale about what happens when that balance tips too far in one direction.

The incident also raises questions about the broader AI pricing landscape. As companies like Anthropic and OpenAI compete for market share, how they handle pricing changes, communicate with users, and respond to community feedback will increasingly determine their success. In an industry built on trust in complex technology, transparency isn't just good ethics—it's good business.
