This article examines the distributed systems principles behind WhatsApp's 1:1 messaging, analyzing how Erlang, persistent connections, and session routing enable low-latency delivery while exploring the inherent trade-offs in consistency, resource usage, and failure handling that emerge at massive scale.

WhatsApp's ability to deliver messages instantly to over two billion users masks sophisticated engineering trade-offs. What appears as a simple client-server interaction relies on carefully balanced distributed systems principles—each optimization addressing specific scale challenges while introducing new complexities. Understanding these choices reveals fundamental patterns applicable to any high-concurrency service.

The Concurrency Foundation: Erlang's Role

WhatsApp's backend is built on Erlang/OTP, a platform designed for telecom systems with extreme availability targets (nine nines is the often-cited figure). Erlang's lightweight processes, each starting at a few kilobytes of memory, and its preemptive scheduler make it practical to hold millions of concurrent connections per server. The actor model isolates failures: processes share no memory, so a crash in one does not corrupt the others, and supervision trees restart the process that failed.
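The restart behavior described above can be sketched in a few lines. This is a Python illustration of the idea only, not Erlang/OTP and not WhatsApp's code; `Supervisor`, `make_flaky_worker`, and the `max_restarts` limit are hypothetical names chosen for the example.

```python
from typing import Callable


class Supervisor:
    """Toy supervisor: reruns a crashed worker, up to max_restarts times."""

    def __init__(self, max_restarts: int = 3) -> None:
        self.max_restarts = max_restarts
        self.restarts = 0

    def run(self, worker: Callable[[], str]) -> str:
        while True:
            try:
                return worker()
            except Exception:
                self.restarts += 1
                if self.restarts > self.max_restarts:
                    # Too many crashes: give up and escalate to the caller,
                    # much as a supervisor escalates to its own parent.
                    raise


def make_flaky_worker(failures: int) -> Callable[[], str]:
    """Hypothetical worker that crashes `failures` times, then succeeds."""
    remaining = {"count": failures}

    def worker() -> str:
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise RuntimeError("simulated crash")
        return "ok"

    return worker
```

A real supervision tree also restarts workers with fresh state and can restart siblings together; the sketch only shows the bounded-restart-then-escalate pattern.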

Trade-off: This comes with operational costs. Erlang's syntax and OTP conventions create a steeper learning curve for teams accustomed to imperative languages. Talent pools are smaller, and debugging distributed Erlang systems requires specialized tools like dbg and observer. For WhatsApp, the payoff in fault tolerance justified this investment; for most startups, the operational overhead may outweigh benefits unless extreme reliability is non-negotiable.

Persistent Connections: Latency vs. Resource Pressure

Instead of opening a connection per request, as in a classic HTTP request-response cycle, WhatsApp keeps a long-lived TCP connection between each client and a gateway server. Messages then skip socket establishment and the secure-channel handshake, which on a mobile network can otherwise add several round trips (often hundreds of milliseconds) before any payload is sent.

Trade-off: Each persistent connection consumes server memory and file descriptors. At peak scale, WhatsApp manages hundreds of millions of simultaneous connections—requiring careful tuning of OS parameters (e.g., ulimit, TCP buffer sizes) and efficient connection multiplexing. Mobile networks exacerbate this: frequent network switches force reconnections, partially negating the benefit. Alternative approaches like QUIC (used in HTTP/3) offer similar latency improvements with better mobile handoff support but require broader client/server adoption.
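A back-of-the-envelope calculation makes the resource pressure concrete. The per-connection memory and per-server capacity used below are placeholder assumptions for illustration, not measured WhatsApp figures.

```python
def connection_memory_gib(connections: int, bytes_per_connection: int) -> float:
    """Memory needed to hold `connections` open sockets, in GiB."""
    return connections * bytes_per_connection / 2**30


def servers_needed(total_connections: int, connections_per_server: int) -> int:
    """Smallest number of gateways that can hold all connections (ceiling division)."""
    return (total_connections + connections_per_server - 1) // connections_per_server


# Assumed inputs: 16 KiB of kernel and application state per connection,
# and about one million connections per gateway.
per_server_gib = connection_memory_gib(1_048_576, 16 * 1024)
fleet_size = servers_needed(200_000_000, 1_000_000)
```

Under these assumptions each gateway needs about 16 GiB for connection state alone, and 200 million simultaneous connections need at least 200 gateways before any headroom for failover.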

Session Service: The Routing Indirection

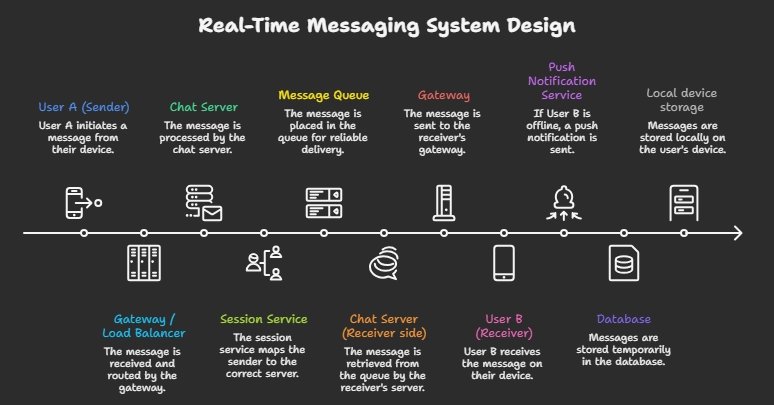

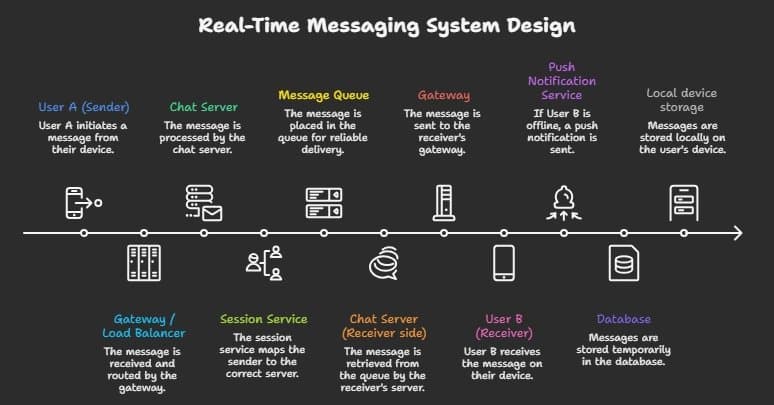

A critical innovation is the session service—a distributed hash map tracking which gateway server handles each user's active connection. When User A messages User B:

- A's gateway queries the session service for B's current server

- The message forwards directly to B's gateway

- B's gateway delivers to the device

This avoids broadcasting messages to all servers (a common pub/sub inefficiency) and enables horizontal scaling of gateways.
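The lookup-then-forward steps above can be sketched with an in-memory map standing in for the distributed session service. All class and function names here (`SessionService`, `Gateway`, `route`) are hypothetical, and the sketch ignores networking entirely.

```python
from typing import Dict, List, Optional


class SessionService:
    """In-memory stand-in for the distributed user -> gateway map."""

    def __init__(self) -> None:
        self._gateway_by_user: Dict[str, str] = {}

    def register(self, user_id: str, gateway_name: str) -> None:
        self._gateway_by_user[user_id] = gateway_name

    def lookup(self, user_id: str) -> Optional[str]:
        return self._gateway_by_user.get(user_id)


class Gateway:
    """Hypothetical gateway that records what it would push to each device."""

    def __init__(self, name: str, sessions: SessionService) -> None:
        self.name = name
        self._sessions = sessions
        self.delivered: Dict[str, List[str]] = {}

    def connect(self, user_id: str) -> None:
        # A new client connection registers this gateway as the user's route.
        self._sessions.register(user_id, self.name)
        self.delivered.setdefault(user_id, [])

    def deliver(self, user_id: str, message: str) -> None:
        self.delivered.setdefault(user_id, []).append(message)


def route(
    sessions: SessionService,
    gateways: Dict[str, Gateway],
    recipient: str,
    message: str,
) -> Optional[str]:
    """Forward to the recipient's gateway; return its name, or None if offline."""
    gateway_name = sessions.lookup(recipient)
    if gateway_name is None:
        return None
    gateways[gateway_name].deliver(recipient, message)
    return gateway_name
```

Only one gateway is touched per message, which is the point of the indirection: the cost of a send does not grow with the number of gateways.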

Trade-off: The session service becomes a potential bottleneck and single point of failure if not designed carefully. WhatsApp mitigates this by sharding the service across multiple clusters and using eventual consistency for updates—accepting brief routing inaccuracies during failover in exchange for availability. The system must also handle connection churn: when users switch networks, the session service updates must propagate quickly to prevent message misrouting.
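One common way to shard such a map is to hash the user ID to a shard index. The sketch below assumes simple modulo hashing and a hypothetical `ShardedSessions` class; it is not a description of WhatsApp's actual partitioning scheme.

```python
import hashlib
from typing import Dict, List, Optional


def shard_for(user_id: str, shard_count: int) -> int:
    """Map a user ID to a shard index using a stable hash.

    hashlib is used instead of the built-in hash(), which is salted per process.
    """
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


class ShardedSessions:
    """User -> gateway map split across independent shards (plain dicts here)."""

    def __init__(self, shard_count: int) -> None:
        self._shards: List[Dict[str, str]] = [{} for _ in range(shard_count)]

    def _shard(self, user_id: str) -> Dict[str, str]:
        return self._shards[shard_for(user_id, len(self._shards))]

    def register(self, user_id: str, gateway_name: str) -> None:
        self._shard(user_id)[user_id] = gateway_name

    def lookup(self, user_id: str) -> Optional[str]:
        return self._shard(user_id).get(user_id)

    def shard_sizes(self) -> List[int]:
        return [len(shard) for shard in self._shards]
```

Modulo hashing remaps most users when the shard count changes; consistent hashing is a frequent alternative when shards are added or removed often.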

Message Flow and Queuing: Managing Bursts

Messages follow the path Sender Gateway → Chat Server → Receiver Gateway → Receiver Device, with the session service consulted along the way to find the receiver's gateway. Crucially, chat servers place messages into durable queues (backed by a store such as Mnesia or Redis) before forwarding.

Trade-off: Queues absorb traffic spikes (e.g., during events) and decouple sender/receiver processing speeds, preventing overload. However, they introduce variable latency—messages may wait in queue during congestion. WhatsApp tunes queue depth and consumer count based on real-time metrics, accepting slightly higher p99 latency to guarantee delivery during surges. For latency-sensitive applications (e.g., trading), this trade-off would be unacceptable; for messaging, eventual delivery within seconds aligns with user expectations.
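A small discrete-time simulation shows how a queue trades latency for burst absorption. The tick-based model, the function name `simulate_queue`, and the example rates are assumptions made for illustration.

```python
from collections import deque
from typing import Deque, List


def simulate_queue(arrivals_per_tick: List[int], service_rate: int) -> List[int]:
    """Return each message's wait in ticks, in the order messages are served.

    arrivals_per_tick[t] messages arrive at tick t; up to service_rate
    messages are forwarded per tick, first in, first out.
    """
    if service_rate <= 0:
        raise ValueError("service_rate must be positive")
    queue: Deque[int] = deque()  # holds the arrival tick of each waiting message
    waits: List[int] = []
    tick = 0
    while tick < len(arrivals_per_tick) or queue:
        if tick < len(arrivals_per_tick):
            queue.extend([tick] * arrivals_per_tick[tick])
        for _ in range(min(service_rate, len(queue))):
            waits.append(tick - queue.popleft())
        tick += 1
    return waits


steady = simulate_queue([1, 1, 1], service_rate=2)  # no message waits
burst = simulate_queue([6, 0, 0], service_rate=2)   # tail of the burst waits 2 ticks
```

Nothing is dropped in the burst case, but the last messages wait longest, which is the p99 cost the paragraph above describes.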

Delivery Guarantees: The Ack Hierarchy

WhatsApp implements a three-state acknowledgment model:

- Sent: Message received by sender's gateway

- Delivered: Message reached the receiver's device

- Read: Recipient opened the conversation containing the message

Each state requires explicit acknowledgments flowing back through the chain. This provides transparency but adds round-trip overhead—each message generates at least two additional network trips (deliver/read acks).
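Because acks can arrive late or out of order, a client can treat the status as a value that only moves forward. The following is a minimal sketch of that idea; the `Status` enum (including the extra `PENDING` state) and `apply_ack` are hypothetical names, not WhatsApp's protocol.

```python
from enum import IntEnum


class Status(IntEnum):
    PENDING = 0    # composed locally, no ack yet (added for the sketch)
    SENT = 1
    DELIVERED = 2
    READ = 3


def apply_ack(current: Status, ack: Status) -> Status:
    """Advance the status monotonically; stale or duplicate acks change nothing."""
    return max(current, ack)
```

For example, a Delivered ack that arrives after a Read ack leaves the status at Read, so the UI never steps backwards.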

Trade-off: On unreliable networks, lost acks trigger retransmissions. WhatsApp uses exponential backoff and idempotent receivers to handle the resulting duplicates, but this increases complexity. Simpler systems (e.g., basic UDP-based chat) forgo guarantees for lower overhead; WhatsApp prioritizes user trust in delivery status, accepting the cost.
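Exponential backoff and idempotent receipt are generic techniques and can be sketched as follows. The base delay, the cap, and the class name `IdempotentReceiver` are assumptions for the example; the real retry parameters are not given in this article.

```python
from typing import List, Set


def backoff_delays(base: float, cap: float, attempts: int) -> List[float]:
    """Retry delays in seconds: base, 2*base, 4*base, ... limited to cap."""
    return [min(cap, base * 2**n) for n in range(attempts)]


class IdempotentReceiver:
    """Accepts each message ID once; retransmitted duplicates are ignored."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()
        self.delivered: List[str] = []

    def receive(self, message_id: str, body: str) -> bool:
        """Return True if the message was new, False if it was a duplicate."""
        if message_id in self._seen:
            return False
        self._seen.add(message_id)
        self.delivered.append(body)
        return True
```

Production retry loops usually add random jitter to each delay so that many clients reconnecting at once do not retry in lockstep; it is left out here to keep the output deterministic.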

Encryption and Offline Handling: Privacy vs. Performance

End-to-end encryption (using the Signal Protocol) happens entirely on client devices; servers only handle encrypted blobs. This ensures the provider cannot read message content, but it shifts cryptographic computation to mobile CPUs.

Trade-off: Modern phones handle AES-256 and elliptic-curve operations efficiently, but encryption adds ~1-2ms per message on older devices. The servers benefit because they never decrypt or re-encrypt traffic, which at WhatsApp's volume is a significant saving; the cost moves to client CPU and battery life, a consideration less critical for desktop-first services.

For offline recipients, messages are held in a server-side queue for the receiver with a TTL (typically 30 days). Push notifications alert the user, and the queued messages are delivered once the device reconnects.

Trade-off: Storing encrypted messages requires gateway storage capacity proportional to offline user count and message volume. WhatsApp balances this by aggressively expiring old messages and using compression—accepting that very old offline messages may be lost if users remain disconnected for extended periods. Alternative designs (e.g., peer-to-peer storage) would increase complexity dramatically for minimal gain given typical offline durations.
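A TTL-bounded offline queue can be sketched as below. The 30-day figure comes from the text; the class name `OfflineQueue`, the explicit `now` parameter (used so the example is deterministic), and the expire-on-drain policy are assumptions.

```python
from collections import deque
from typing import Deque, List, Tuple

TTL_SECONDS = 30 * 24 * 60 * 60  # 30 days, the retention period cited above


class OfflineQueue:
    """Holds opaque encrypted blobs for one offline user until they expire."""

    def __init__(self, ttl_seconds: int = TTL_SECONDS) -> None:
        self._ttl = ttl_seconds
        self._items: Deque[Tuple[float, bytes]] = deque()  # (stored_at, blob)

    def store(self, blob: bytes, now: float) -> None:
        self._items.append((now, blob))

    def drain(self, now: float) -> List[bytes]:
        """On reconnect: drop expired blobs, return the rest in arrival order."""
        # Items are appended in time order, so expired ones sit at the front.
        while self._items and self._items[0][0] + self._ttl <= now:
            self._items.popleft()
        blobs = [blob for _, blob in self._items]
        self._items.clear()
        return blobs
```

The server never inspects the blobs, so expiry has to be driven by metadata such as the storage timestamp rather than by message content.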

Local Storage and Scalability: The Mobile Edge

Clients use SQLite for local message storage, chosen for its zero-configuration setup, ACID transactions, and efficient I/O on mobile flash storage. This enables instant app startup and offline message composition.

Trade-off: SQLite allows only one writer at a time, which can cause contention during heavy usage (e.g., group chats with rapid messaging). WhatsApp mitigates this with careful transaction batching and WAL mode, but even high-end Android/iOS devices still experience occasional UI jitter during backups or restores. For write-heavy scenarios, alternatives like Realm or custom LSM-trees offer better concurrency at the cost of increased client-side complexity.
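WAL mode and transaction batching are standard SQLite features and can be shown with Python's built-in `sqlite3` module. The table layout and the function names `open_store` and `insert_batch` are hypothetical; the client's actual schema is not described in this article.

```python
import os
import sqlite3
import tempfile
from typing import Iterable, Tuple


def open_store(path: str) -> sqlite3.Connection:
    """Open a message store with write-ahead logging enabled."""
    conn = sqlite3.connect(path)
    # WAL lets readers proceed while a single writer appends to the log.
    conn.execute("PRAGMA journal_mode=WAL")
    conn.execute(
        "CREATE TABLE IF NOT EXISTS messages ("
        "id TEXT PRIMARY KEY, chat_id TEXT NOT NULL, body BLOB NOT NULL)"
    )
    return conn


def insert_batch(conn: sqlite3.Connection, rows: Iterable[Tuple[str, str, bytes]]) -> None:
    """Write many messages in one transaction instead of one commit per row."""
    with conn:  # commits on success, rolls back if an exception is raised
        conn.executemany(
            "INSERT OR IGNORE INTO messages (id, chat_id, body) VALUES (?, ?, ?)",
            rows,
        )


# Usage: a file-backed database is required, since in-memory databases cannot use WAL.
db_path = os.path.join(tempfile.mkdtemp(), "chat.db")
store = open_store(db_path)
insert_batch(store, [("m1", "c1", b"x"), ("m2", "c1", b"y"), ("m1", "c1", b"dup")])
```

Batching amortizes the cost of each commit's disk sync across many rows, and `INSERT OR IGNORE` keeps a retransmitted message from creating a second row.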

Scalability emerges from stateless backend services: chat servers, session services, and gateways store no long-term user state locally. State lives in databases (Mnesia for session data, custom stores for queues) or on client devices. This allows adding servers behind load balancers without rebalancing user assignments.

Trade-off: Statelessness shifts state management complexity to the data layer. WhatsApp invests heavily in database sharding, replication lag monitoring, and conflict resolution strategies (e.g., for session updates during network partitions). A stateful design might simplify some logic but would severely limit horizontal scaling—proving untenable at WhatsApp's scale.

Broader Implications

WhatsApp's architecture exemplifies how distributed systems principles solve real-world scale challenges: using the right tool for the job (Erlang for concurrency), optimizing for the common case (persistent connections for active users), and embracing eventual consistency where strong consistency isn't required (session routing, offline storage).

Critically, it shows that "simple" user experiences often mask sophisticated systems where every optimization involves explicit trade-offs. Engineers designing similar systems must ask: What latency is acceptable? What failure modes can we tolerate? Where does complexity provide the most user value? The answers—shaped by user expectations, infrastructure constraints, and business priorities—determine whether a system merely works at scale or delivers a consistently excellent experience.

For those implementing messaging features, studying these patterns offers valuable lessons beyond copying specific technologies. The true insight lies in understanding why WhatsApp chose each approach—and what they deliberately chose not to do.
