UK's ICO launches investigation into Meta's Ray-Ban smart glasses after reports that human contractors in Kenya accessed intimate footage captured by unsuspecting users, raising serious GDPR compliance concerns.

The UK's Information Commissioner's Office (ICO) has launched an investigation into Meta's Ray-Ban smart glasses following explosive reports that human contractors reviewing footage from the devices were exposed to deeply private moments captured without users' knowledge.

The controversy erupted after Swedish news outlets Svenska Dagbladet and Göteborgs-Posten published findings from interviews with dozens of workers employed by a Meta subcontractor in Nairobi, Kenya. These contractors revealed they routinely review video, audio, and transcripts collected from the smart glasses as part of their job improving Meta's AI systems.

According to the investigation, the review queue extends far beyond harmless AI interactions. Workers described viewing footage of people getting dressed, using the toilet, and engaging in private conversations about relationships, politics, and alleged wrongdoing. Some clips reportedly captured bank cards, personal paperwork, and other identifying details inadvertently caught on camera.

"We see everything," one employee told the Swedish outlets, capturing the scope of what these contractors encounter in their daily work.

GDPR Compliance Questions Loom Large

The case raises serious questions about cross-border data transfers under the General Data Protection Regulation (GDPR), which applies in the EU and, in a retained form known as the UK GDPR, in the UK. Companies transferring personal data to contractors outside the European Economic Area must implement approved safeguards to protect that information. Because Meta's review process involves workers in Kenya, those transfer rules come into play alongside the questions about transparency and consent.

Meta's terms acknowledge that some interactions may be reviewed by humans to improve the system, but the Swedish investigation suggests the reality extends well beyond what users might reasonably expect. The company's privacy policy states that recordings are only used to improve AI systems in certain circumstances, such as when users choose to share interactions to help train the technology.

However, the ICO's intervention indicates these assurances may not meet UK data protection requirements. The watchdog emphasized that organizations deploying products capturing personal data must be transparent about what information is collected, how it's used, and who may have access to it.

Smart Glasses Market Faces Reality Check

This controversy emerges as the broader wearables market confronts significant challenges. Recent reports indicate that both Apple's and Meta's VR headset shipments fizzled in 2025, suggesting consumer enthusiasm for immersive wearable technology may be waning.

Meta has already retreated from its ambitious metaverse plans after what many observers called a "virtual reality check." The company's pivot to AI-powered smart glasses represented an attempt to find a more practical application for wearable computing technology.

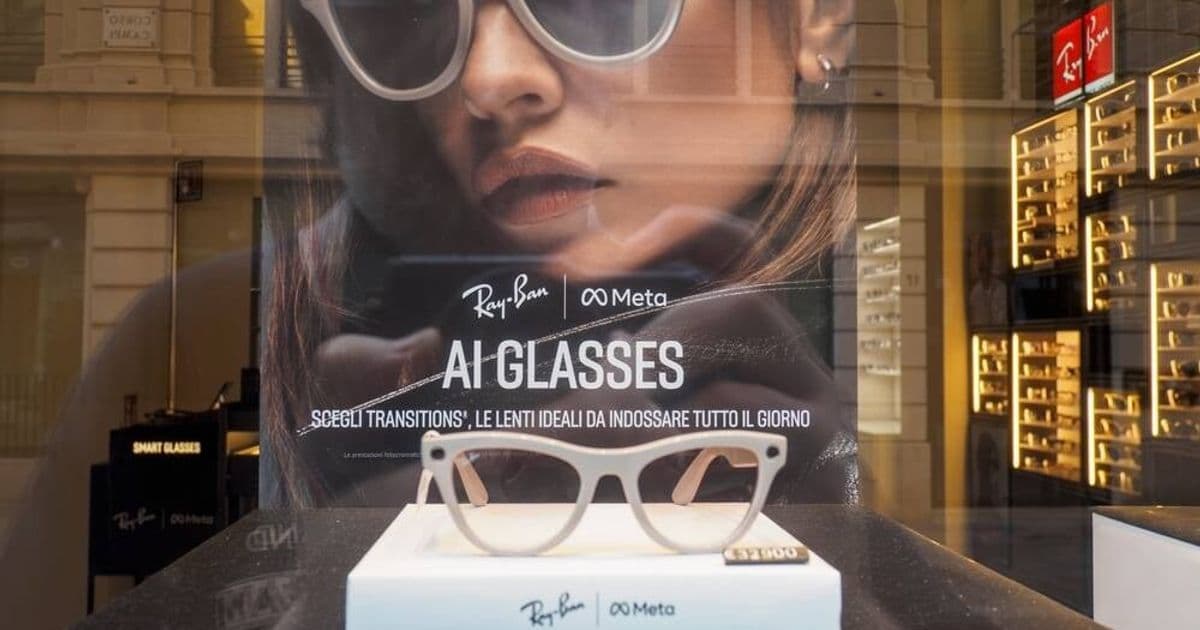

However, the current privacy scandal threatens to undermine whatever consumer trust Meta had built in this space. The Ray-Ban Meta smart glasses, which look like ordinary eyewear but contain cameras, microphones, and AI assistants capable of taking photos, shooting video, and responding to voice commands, now face scrutiny over their data collection practices.

The Human Element in AI Training

This case highlights a persistent reality in AI development: despite marketing claims about autonomous systems, human review remains integral to training and improving AI models. The "AI-powered" label often masks the fact that humans somewhere in the loop are watching, labeling, and categorizing the very data that trains these systems.

For Meta's smart glasses users, this means that intimate moments captured by their devices may be viewed by contractors thousands of miles away, potentially without their knowledge or meaningful consent. The disconnect between user expectations and the reality of AI training processes represents a fundamental challenge for companies marketing these devices.

Regulatory Response and Industry Implications

The ICO's decision to write to Meta requesting information on how it is meeting its obligations under UK data protection law could be a precursor to enforcement action. If the regulator finds violations, Meta could face substantial fines: the UK GDPR allows penalties of up to £17.5 million or 4% of global annual turnover, whichever is higher, for serious breaches.

This investigation also serves as a warning to other companies developing AI-powered consumer devices. The regulatory environment for data protection continues to tighten, with watchdogs increasingly willing to intervene when companies' practices fall short of legal requirements.

Meta's response to the BBC emphasized that users can manage their data through device settings and delete recordings at any time. However, this self-service approach may not satisfy regulators concerned about initial data collection practices and the extent of human review.

The smart glasses privacy probe represents another chapter in the ongoing tension between technological innovation and personal privacy. As wearable devices become more sophisticated and AI systems more pervasive, companies will need to navigate increasingly complex regulatory requirements while maintaining user trust.

For now, Meta faces the dual challenge of justifying its AI training practices while preparing for possible regulatory enforcement in multiple jurisdictions. The outcome of the ICO's investigation could have significant implications not just for Meta, but for the entire smart glasses industry and the broader market for AI-powered consumer devices.
